Meenakshi Sarkar

Enhancing Image Resolution: A Simulation Study and Sensitivity Analysis of System Parameters for Resourcesat-3S/3SA

Oct 30, 2024

Abstract: Resourcesat-3S/3SA, an upcoming Indian satellite, is designed with Aft and Fore payloads capturing stereo images at look angles of -5° and 26°, respectively. Operating at 632.6 km altitude, it features a panchromatic (PAN) band offering a Ground Sampling Distance (GSD) of 1.25 meters and a 60 km swath. To balance swath width and resolution, an Instantaneous Geometric Field of View (IGFOV) of 2.5 meters is maintained while ensuring a 1.25-meter GSD both along and across track. Along-track sampling is achieved through precise timing, while across-track accuracy is ensured by using two staggered pixel arrays. Signal-to-Noise Ratio (SNR) is enhanced through Time Delay and Integration (TDI), employing two five-stage subarrays spaced 80 µm apart along the track, with a 4 µm (0.5 pixel) stagger in the across-track direction to achieve 1.25-meter resolution. To further boost resolution, the satellite employs super-resolution (SR), combining multiple low-resolution captures using sub-pixel shifts to produce high-resolution images. This technique, effective when images contain aliased high-frequency details, reconstructs full-resolution imagery using phase information from multiple observations, and has been successfully applied in remote sensing missions like SPOT-5, SkySat, and DubaiSat-1. A Monte Carlo simulation explores the factors influencing the resolution in Resourcesat-3S/3SA, with sensitivity analysis highlighting key impacts. The simulation methodology is broadly applicable to other remote sensing missions, optimizing SR for enhanced image clarity and resolution in future satellite systems.
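The half-pixel stagger described in this abstract can be illustrated with a small sketch. It is not the mission's processing code: the function names are hypothetical, and it assumes each row samples the ground at a pitch equal to the 2.5 m IGFOV, with the second row shifted by 0.5 pixel (1.25 m) across track. Interleaving the two rows then yields a 1.25 m sampling grid.

```python
# Hypothetical illustration of staggered-array sampling (not mission code).
# Assumption: each detector row samples at a 2.5 m pitch (the IGFOV), and the
# second row is offset by half a pixel, i.e. 1.25 m on the ground.

PITCH_M = 2.5      # ground sampling pitch of a single row
STAGGER_M = 1.25   # half-pixel across-track offset of the second row


def sample_positions(n_pixels, offset_m=0.0):
    """Ground positions (in meters) of the pixel centers of one row."""
    return [offset_m + i * PITCH_M for i in range(n_pixels)]


def interleave(row_a, row_b):
    """Merge two equally long rows sample by sample: a0, b0, a1, b1, ..."""
    merged = []
    for a, b in zip(row_a, row_b):
        merged.extend([a, b])
    return merged


row_a = sample_positions(4)
row_b = sample_positions(4, STAGGER_M)
combined = interleave(row_a, row_b)

# Spacing between consecutive samples of the combined grid.
spacing = [b - a for a, b in zip(combined, combined[1:])]
print(combined)
print(spacing)
```

Each pixel still integrates over a 2.5 m footprint, so the finer grid reduces aliasing rather than removing the blur of the footprint itself.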

Geometric Correction and Mosaic Generation of Geo High Resolution Camera Images

Oct 25, 2024

Abstract: The Geo High Resolution Camera (GHRC) aboard ISRO's GSAT-29 satellite is a state-of-the-art 6-band Visible and Near Infrared (VNIR) imager in geostationary orbit at 55°E longitude. It provides a ground sampling distance of 55 meters at nadir, covering 110 x 110 km at a time, and can image the entire Earth disk using a scan mirror mechanism. To cover India, GHRC uses a two-dimensional raster scanning technique, resulting in over 1,000 scenes that must be stitched into a seamless mosaic. This paper presents the geolocation model and examines potential sources of targeting error, with an assessment of location accuracy. Challenges in inter-band registration and inter-frame mosaicing are addressed through algorithms for geometric correction, band-to-band registration, and seamless mosaic generation. In-flight geometric calibration, including adjustments to the instrument interior alignment angles using ground reference images, has improved pointing and location accuracy. A backtracking algorithm has been developed to correct frame-to-frame mosaicing errors for large-scale mosaics, leveraging geometric models, image processing, and space resection techniques. These advancements now enable the operational generation of full India mosaics with 100-meter resolution and high geometric fidelity, enhancing the GHRC capabilities for Earth observation and monitoring applications.

Advancements in Image Resolution: Super-Resolution Algorithm for Enhanced EOS-06 OCM-3 Data

Oct 24, 2024

Abstract: The Ocean Color Monitor-3 (OCM-3) sensor is instrumental in Earth observation, achieving a critical balance between high-resolution imaging and broad coverage. This paper explores innovative imaging methods employed in OCM-3 and the transformative potential of super-resolution techniques to enhance image quality. The super-resolution model for OCM-3 (SOCM-3) addresses the challenges of contemporary satellite imaging by effectively navigating the trade-off between image clarity and swath width. With resolutions below 240 meters in Local Area Coverage (LAC) mode and below 750 meters in Global Area Coverage (GAC) mode, coupled with a wide 1550-kilometer swath and a 2-day revisit time, SOCM-3 emerges as a leading asset in remote sensing. The paper details the intricate interplay of atmospheric, motion, optical, and detector effects that impact image quality, emphasizing the necessity for advanced computational techniques and sophisticated algorithms for effective image reconstruction. Evaluation methods are thoroughly discussed, incorporating visual assessments using the Blind/Referenceless Image Spatial Quality Evaluator (BRISQUE) metric and computational metrics such as Line Spread Function (LSF), Full Width at Half Maximum (FWHM), and Super-Resolution (SR) ratio. Additionally, statistical analyses, including power spectrum evaluations and target-wise spectral signatures, are employed to gauge the efficacy of super-resolution techniques. By enhancing both spatial resolution and revisit frequency, this study highlights significant advancements in remote sensing capabilities, providing valuable insights for applications across cryospheric, vegetation, oceanic, and coastal domains. Ultimately, the findings underscore the potential of SOCM-3 to contribute meaningfully to our understanding of fine-scale oceanic phenomena and environmental monitoring.
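As a rough illustration of one of the metrics named above, the following sketch estimates the FWHM of a sampled line spread function by linearly interpolating the two half-maximum crossings. It is an assumption-laden example, not the paper's evaluation code: the `fwhm` helper is hypothetical, and it presumes a single-peaked, baseline-subtracted profile.

```python
import math


def fwhm(xs, ys):
    """Full width at half maximum of a single-peaked sampled profile.

    Assumes the baseline has already been subtracted and that the profile
    rises above half of its peak only once.
    """
    half = max(ys) / 2.0
    above = [i for i, y in enumerate(ys) if y >= half]
    left, right = above[0], above[-1]

    def crossing(i_below, i_above):
        # Linear interpolation of the half-maximum position between a sample
        # below the half level and its neighbor at or above it.
        x0, y0 = xs[i_below], ys[i_below]
        x1, y1 = xs[i_above], ys[i_above]
        return x0 + (half - y0) * (x1 - x0) / (y1 - y0)

    x_left = crossing(left - 1, left) if left > 0 else xs[left]
    x_right = crossing(right + 1, right) if right < len(xs) - 1 else xs[right]
    return x_right - x_left


# Synthetic Gaussian LSF with sigma = 2 samples (illustrative values only).
sigma = 2.0
xs = [i * 0.1 - 10.0 for i in range(201)]
ys = [math.exp(-0.5 * (x / sigma) ** 2) for x in xs]

width = fwhm(xs, ys)
expected = 2.0 * math.sqrt(2.0 * math.log(2.0)) * sigma  # analytic Gaussian FWHM
print(f"estimated FWHM {width:.3f}, analytic {expected:.3f}")
```

A narrower FWHM after processing indicates a sharper line response; how the paper derives its SR ratio from such measurements is not stated in the abstract.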

Hyperspectral Spatial Super-Resolution using Keystone Error

Oct 24, 2024

Abstract: Hyperspectral images enable precise identification of ground objects by capturing their spectral signatures with fine spectral resolution. While high spatial resolution further enhances this capability, increasing spatial resolution through hardware like larger telescopes is costly and inefficient. A more optimal solution is using ground processing techniques, such as hypersharpening, to merge high spectral and spatial resolution data. However, this method works best when datasets are captured under similar conditions, which is difficult when using data from different times. In this work, we propose a super-resolution approach to enhance hyperspectral data's spatial resolution without auxiliary input. Our method estimates the high-resolution point spread function (PSF) using blind deconvolution and corrects for sampling-related blur using a model-based super-resolution framework. This differs from previous approaches by not assuming a known high-resolution blur. We also introduce an adaptive prior that improves performance compared to existing methods. Applied to the visible and near-infrared (VNIR) spectrometer of HySIS, ISRO's hyperspectral sensor, our algorithm removes aliasing and boosts resolution by approximately 1.3 times. It is versatile and can be applied to similar systems.

Data Processing Chain and Products of EOS-06 OCM-3 Payload From Signal Processing to Geometric Precision

Oct 22, 2024

Abstract: The Ocean Color Monitor-3 (OCM-3), launched aboard Oceansat-3, represents a significant advancement in ocean observation technology, building upon the capabilities of its predecessors. With thirteen spectral bands, OCM-3 enhances feature identification and atmospheric correction, enabling precise data collection from a sun-synchronous orbit. Operating at an altitude of 732.5 km, the satellite achieves high signal-to-noise ratios (SNR) through sophisticated onboard and ground processing techniques, including advanced geometric modeling for pixel registration. The OCM-3 processing pipeline, consisting of multiple levels, ensures rigorous calibration and correction of radiometric and geometric data. This paper presents key methodologies, such as dark data modeling, photo response non-uniformity correction, and smear correction, that are employed to enhance data quality. The effective implementation of ground time delay integration (TDI) allows for the refinement of SNR, with evaluations demonstrating that performance specifications were exceeded. Geometric calibration procedures, including band-to-band registration and geolocation accuracy assessments, which further optimize data reliability, are also presented. Advanced image registration techniques leveraging Ground Control Points (GCPs) and residual error analysis significantly reduce geolocation errors, achieving precision within specified thresholds. Overall, OCM-3's comprehensive calibration and processing strategies ensure high-quality, reliable data crucial for ocean monitoring and change detection applications, facilitating improved understanding of ocean dynamics and environmental changes.
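The SNR benefit of ground TDI mentioned above can be sketched with a small seeded simulation. This is only an illustration under stated assumptions, not the OCM-3 pipeline: it assumes the looks are already co-registered, that their noise is independent and Gaussian, and that they are combined by plain averaging. The stage count, signal level, and noise level are hypothetical. Under those assumptions the SNR gain approaches the square root of the number of looks.

```python
import math
import random
import statistics


def snr(samples):
    """Mean over standard deviation of repeated measurements of one target."""
    return statistics.fmean(samples) / statistics.pstdev(samples)


def simulate_tdi_gain(n_stages, signal=100.0, noise_sigma=5.0,
                      n_trials=20000, seed=0):
    """SNR gain from averaging n_stages co-registered looks of the same pixel.

    Assumption: each look is the true signal plus independent Gaussian noise.
    """
    rng = random.Random(seed)
    single, averaged = [], []
    for _ in range(n_trials):
        looks = [signal + rng.gauss(0.0, noise_sigma) for _ in range(n_stages)]
        single.append(looks[0])                # one look on its own
        averaged.append(sum(looks) / n_stages)  # all looks combined
    return snr(averaged) / snr(single)


gain = simulate_tdi_gain(n_stages=4)
print(f"measured gain {gain:.2f}, ideal {math.sqrt(4):.2f}")
```

Real detectors add correlated and signal-dependent noise terms, so the measured gain in practice can fall short of this ideal.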

Video Generation with Learned Action Prior

Jun 20, 2024

Abstract: Stochastic video generation is particularly challenging when the camera is mounted on a moving platform, as camera motion interacts with observed image pixels, creating complex spatio-temporal dynamics and making the problem partially observable. Existing methods typically address this by focusing on raw pixel-level image reconstruction without explicitly modelling camera motion dynamics. We propose a solution by considering camera motion or action as part of the observed image state, modelling both image and action within a multi-modal learning framework. We introduce three models: Video Generation with Learning Action Prior (VG-LeAP), which treats the image-action pair as an augmented state generated from a single latent stochastic process and uses variational inference to learn the image-action latent prior; Causal-LeAP, which establishes a causal relationship between action and the observed image frame at time $t$, learning an action prior conditioned on the observed image states; and RAFI, which integrates the augmented image-action state concept into flow matching with diffusion generative processes, demonstrating that this action-conditioned image generation concept can be extended to other diffusion-based models. We emphasize the importance of multi-modal training in partially observable video generation problems through detailed empirical studies on our new video action dataset, RoAM.

Action-conditioned video data improves predictability

Apr 08, 2024

Abstract: Long-term video generation and prediction remain challenging tasks in computer vision, particularly in partially observable scenarios where cameras are mounted on moving platforms. The interaction between observed image frames and the motion of the recording agent introduces additional complexities. To address these issues, we introduce the Action-Conditioned Video Generation (ACVG) framework, a novel approach that investigates the relationship between actions and generated image frames through a deep dual Generator-Actor architecture. ACVG generates video sequences conditioned on the actions of robots, enabling exploration and analysis of how vision and action mutually influence one another in dynamic environments. We evaluate the framework's effectiveness on an indoor robot motion dataset which consists of sequences of image frames along with the sequences of actions taken by the robotic agent, conducting a comprehensive empirical study comparing ACVG to other state-of-the-art frameworks along with a detailed ablation study.

Action-conditioned Deep Visual Prediction with RoAM, a new Indoor Human Motion Dataset for Autonomous Robots

Jun 28, 2023

Abstract: With the increasing adoption of robots across industries, it is crucial to focus on developing advanced algorithms that enable robots to anticipate, comprehend, and plan their actions effectively in collaboration with humans. We introduce the Robot Autonomous Motion (RoAM) video dataset, which is collected with a custom-made TurtleBot3 Burger robot in a variety of indoor environments, recording various human motions from the robot's ego-vision. The dataset also includes synchronized records of the LiDAR scan and all control actions taken by the robot as it navigates around static and moving human agents. The unique dataset provides an opportunity to develop and benchmark new visual prediction frameworks that can predict future image frames based on the action taken by the recording agent in partially observable scenarios or cases where the imaging sensor is mounted on a moving platform. We have benchmarked the dataset on our novel deep visual prediction framework called ACPNet, where the approximated future image frames are also conditioned on the action taken by the robot, and demonstrated its potential for incorporating robot dynamics into the video prediction paradigm for mobile robotics and autonomous navigation research.

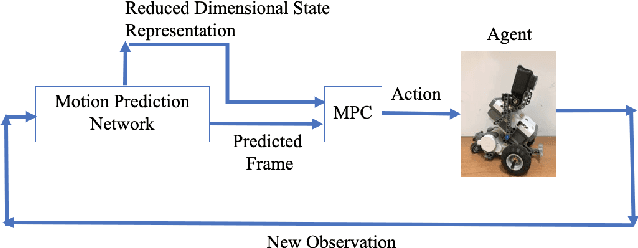

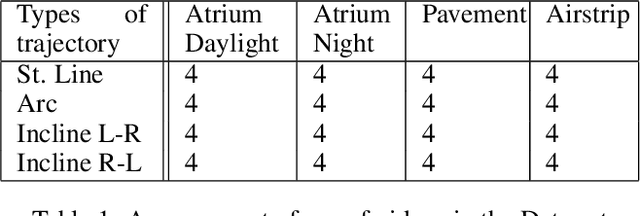

Planning Robot Motion using Deep Visual Prediction

Jun 24, 2019

Abstract: In this paper, we introduce a novel framework that can learn to make visual predictions about the motion of a robotic agent from raw video frames. Our proposed motion prediction network (PROM-Net) can learn in a completely unsupervised manner and efficiently predict up to 10 frames in the future. Moreover, unlike any other motion prediction models, it is lightweight and once trained it can be easily implemented on mobile platforms that have very limited computing capabilities. We have created a new robotic data set comprising LEGO Mindstorms moving along various trajectories in three different environments under different lighting conditions for testing and training the network. Finally, we introduce a framework that would use the predicted frames from the network as an input to a model predictive controller for motion planning in unknown dynamic environments with moving obstacles.

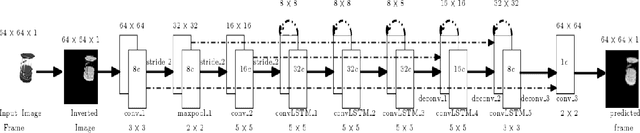

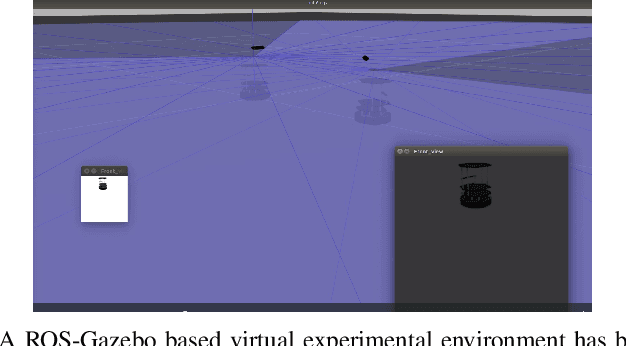

Sequential Learning of Movement Prediction in Dynamic Environments using LSTM Autoencoder

Oct 12, 2018

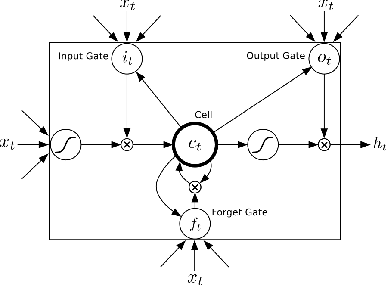

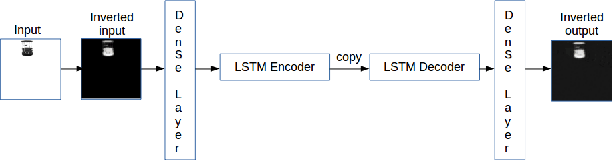

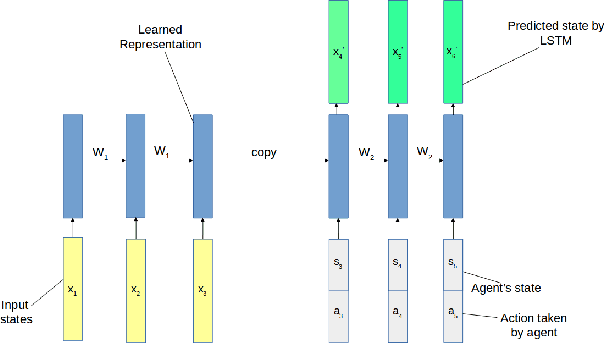

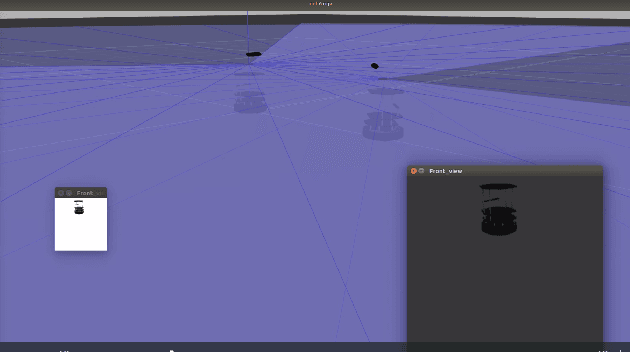

Abstract: Predicting the movement of objects while the actions of the learning agent interact with the dynamics of the scene remains a key challenge in robotics. We propose a multi-layer Long Short Term Memory (LSTM) autoencoder network that predicts future frames for a robot navigating in a dynamic environment with moving obstacles. The autoencoder network is composed of a state and action conditioned decoder network that reconstructs the future frames of video, conditioned on the action taken by the agent. The input image frames are first transformed into low dimensional feature vectors with a pre-trained encoder network and then reconstructed with the LSTM autoencoder network to generate the future frames. A virtual environment, based on the OpenAI Gym framework for robotics, is used to gather training data and test the proposed network. The initial experiments show promising results, indicating that these predicted frames can be used by an appropriate reinforcement learning framework in the future to navigate around dynamic obstacles.
