Matthieu Molinier

A lightweight deep learning based cloud detection method for Sentinel-2A imagery fusing multi-scale spectral and spatial features

Apr 29, 2021

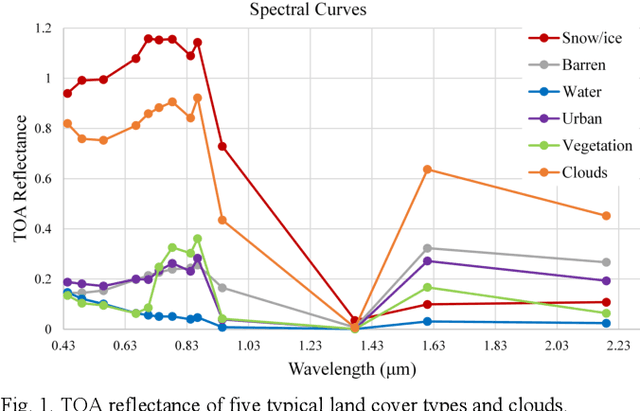

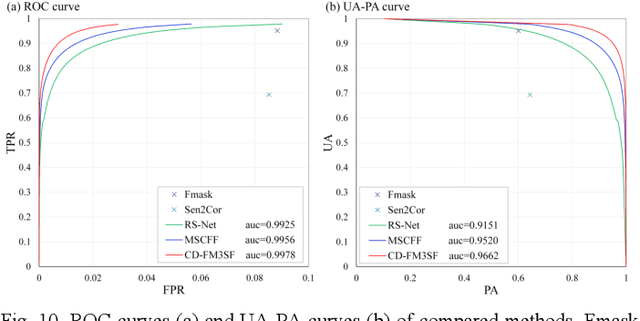

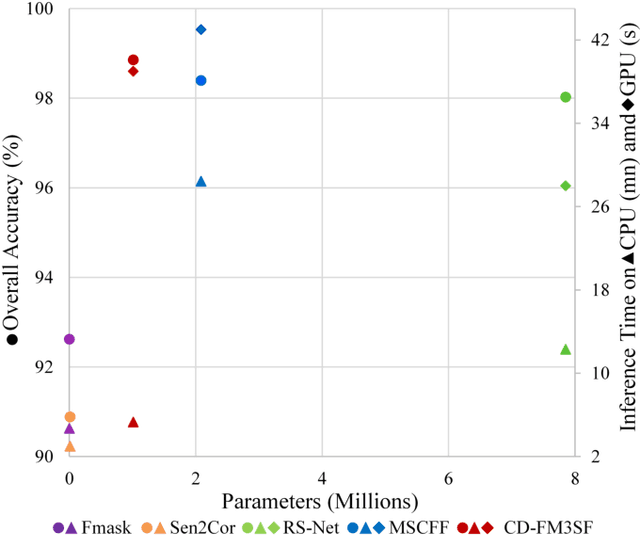

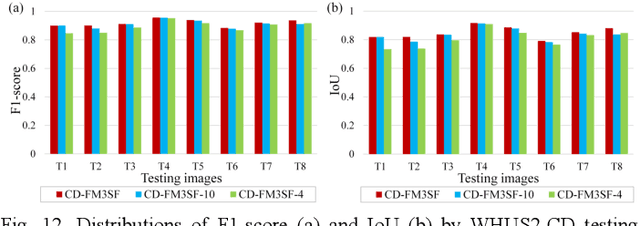

Abstract: Clouds are a very important factor in the availability of optical remote sensing images. Recently, deep learning-based cloud detection methods have surpassed classical methods based on rules and physical models of clouds. However, most of these deep models are very large, which limits their applicability and explainability, while other models do not make use of the full spectral information in multi-spectral images such as Sentinel-2. In this paper, we propose a lightweight network for cloud detection, fusing multi-scale spectral and spatial features (CD-FM3SF) and tailored for processing all spectral bands in Sentinel-2A images. The proposed method consists of an encoder and a decoder. In the encoder, three input branches are designed to handle spectral bands at their native resolution and extract multi-scale spectral features. Three novel components are designed: a mixed depth-wise separable convolution (MDSC) and a shared and dilated residual block (SDRB) to extract multi-scale spatial features, and a concatenation and sum (CS) operation to fuse multi-scale spectral and spatial features with little calculation and no additional parameters. The decoder of CD-FM3SF outputs three cloud masks at the same resolution as the input bands to enhance the supervision information of small, middle and large clouds. To validate the performance of the proposed method, we manually labeled 36 Sentinel-2A scenes evenly distributed over mainland China. The experimental results demonstrate that CD-FM3SF outperforms traditional cloud detection methods and state-of-the-art deep learning-based methods in both accuracy and speed.
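The abstract does not spell out the MDSC design, but the general reason depth-wise separable convolutions keep a network lightweight can be illustrated with a parameter count. The sketch below is not the paper's layer: it only compares a standard convolution with a plain depth-wise separable one, and the function names and channel sizes are hypothetical choices for illustration.

```python
# Illustrative only: generic depth-wise separable convolution, not the
# paper's MDSC block. Function names and sizes are assumptions.

def standard_conv_params(c_in, c_out, k):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in, c_out, k):
    """Weights in a k x k depth-wise convolution followed by a
    1 x 1 point-wise convolution (bias ignored)."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


if __name__ == "__main__":
    c_in, c_out, k = 32, 64, 3  # hypothetical layer sizes
    print(standard_conv_params(c_in, c_out, k))
    print(depthwise_separable_params(c_in, c_out, k))
```

For these assumed sizes, the separable variant needs roughly an eighth of the weights of the standard convolution, which is the kind of saving a lightweight design relies on.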

Binary Patterns Encoded Convolutional Neural Networks for Texture Recognition and Remote Sensing Scene Classification

Mar 26, 2018

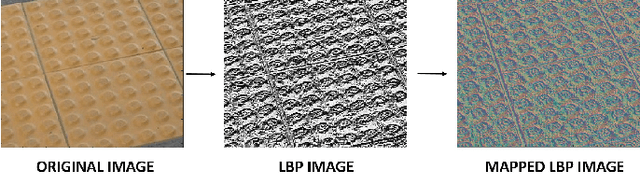

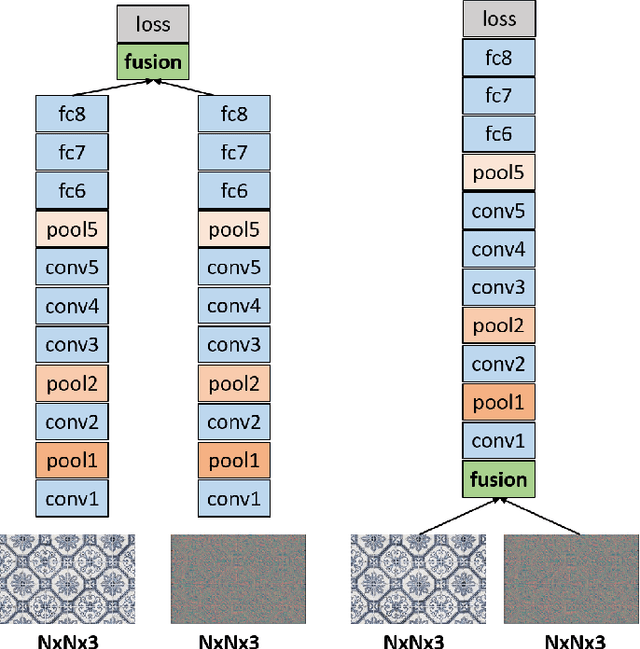

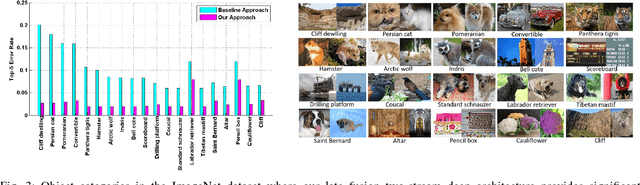

Abstract: Designing discriminative, powerful texture features robust to realistic imaging conditions is a challenging computer vision problem with many applications, including material recognition and analysis of satellite or aerial imagery. In the past, most texture description approaches were based on dense orderless statistical distribution of local features. However, most recent approaches to texture recognition and remote sensing scene classification are based on Convolutional Neural Networks (CNNs). The de facto practice when learning these CNN models is to use RGB patches as input with training performed on large amounts of labeled data (ImageNet). In this paper, we show that Binary Patterns encoded CNN models, codenamed TEX-Nets, trained using mapped coded images with explicit texture information provide complementary information to the standard RGB deep models. Additionally, two deep architectures, namely early and late fusion, are investigated to combine the texture and color information. To the best of our knowledge, we are the first to investigate Binary Patterns encoded CNNs and different deep network fusion architectures for texture recognition and remote sensing scene classification. We perform comprehensive experiments on four texture recognition datasets and four remote sensing scene classification benchmarks: UC-Merced with 21 scene categories, WHU-RS19 with 19 scene classes, RSSCN7 with 7 categories and the recently introduced large scale aerial image dataset (AID) with 30 aerial scene types. We demonstrate that TEX-Nets provide complementary information to the standard RGB deep model of the same network architecture. Our late fusion TEX-Net architecture always improves the overall performance compared to the standard RGB network on both recognition problems. Our final combination outperforms the state-of-the-art without employing fine-tuning or ensemble of RGB network architectures.
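To make the idea of a texture-coded input concrete, here is a minimal sketch that assumes "Binary Patterns" refers to the classic 8-neighbour local binary pattern (LBP) descriptor. It computes raw LBP codes only; the mapping of codes into the "mapped coded images" used to train TEX-Nets is not described in the abstract and is not reproduced here. The function name and the bit ordering are arbitrary illustrative choices.

```python
# Illustrative only: basic 8-neighbour LBP on a 2-D list of grey values.
# The name lbp_image and the clockwise bit order are assumptions.

def lbp_image(gray):
    """Return LBP codes (0..255) for the interior pixels of `gray`."""
    # Neighbour offsets, clockwise starting at the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    height, width = len(gray), len(gray[0])
    codes = [[0] * (width - 2) for _ in range(height - 2)]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            center = gray[r][c]
            code = 0
            for bit, (dr, dc) in enumerate(offsets):
                # Set the bit when the neighbour is at least as bright
                # as the centre pixel.
                if gray[r + dr][c + dc] >= center:
                    code |= 1 << bit
            codes[r - 1][c - 1] = code
    return codes


if __name__ == "__main__":
    patch = [[9, 0, 0],
             [0, 5, 0],
             [0, 0, 0]]
    print(lbp_image(patch))
```

Because each code depends only on the sign of local intensity differences, it is unchanged by monotonic brightness shifts, which is one reason such codes can complement raw RGB input.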
