Mart Kartašev

Design and Evaluation of an Assisted Programming Interface for Behavior Trees in Robotics

Feb 10, 2026Abstract:The possibility to create reactive robot programs faster without the need for extensively trained programmers is becoming increasingly important. So far, it has not been explored how various techniques for creating Behavior Tree (BT) program representations could be combined with complete graphical user interfaces (GUIs) to allow a human user to validate and edit trees suggested by automated methods. In this paper, we introduce BEhavior TRee GUI (BETR-GUI) for creating BTs with the help of an AI assistant that combines methods using large language models, planning, genetic programming, and Bayesian optimization with a drag-and-drop editor. A user study with 60 participants shows that by combining different assistive methods, BETR-GUI enables users to perform better at solving the robot programming tasks. The results also show that humans using the full variant of BETR-GUI perform better than the AI assistant running on its own.

Progress Constraints for Reinforcement Learning in Behavior Trees

Feb 06, 2026Abstract:Behavior Trees (BTs) provide a structured and reactive framework for decision-making, commonly used to switch between sub-controllers based on environmental conditions. Reinforcement Learning (RL), on the other hand, can learn near-optimal controllers but sometimes struggles with sparse rewards, safe exploration, and long-horizon credit assignment. Combining BTs with RL has the potential for mutual benefit: a BT design encodes structured domain knowledge that can simplify RL training, while RL enables automatic learning of the controllers within BTs. However, naive integration of BTs and RL can lead to some controllers counteracting other controllers, possibly undoing previously achieved subgoals, thereby degrading the overall performance. To address this, we propose progress constraints, a novel mechanism where feasibility estimators constrain the allowed action set based on theoretical BT convergence results. Empirical evaluations in a 2D proof-of-concept and a high-fidelity warehouse environment demonstrate improved performance, sample efficiency, and constraint satisfaction, compared to prior methods of BT-RL integration.

SMaRCSim: Maritime Robotics Simulation Modules

Jun 09, 2025Abstract:Developing new functionality for underwater robots and testing them in the real world is time-consuming and resource-intensive. Simulation environments allow for rapid testing before field deployment. However, existing tools lack certain functionality for use cases in our project: i) developing learning-based methods for underwater vehicles; ii) creating teams of autonomous underwater, surface, and aerial vehicles; iii) integrating the simulation with mission planning for field experiments. A holistic solution to these problems presents great potential for bringing novel functionality into the underwater domain. In this paper we present SMaRCSim, a set of simulation packages that we have developed to help us address these issues.

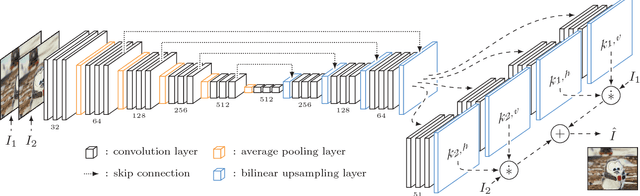

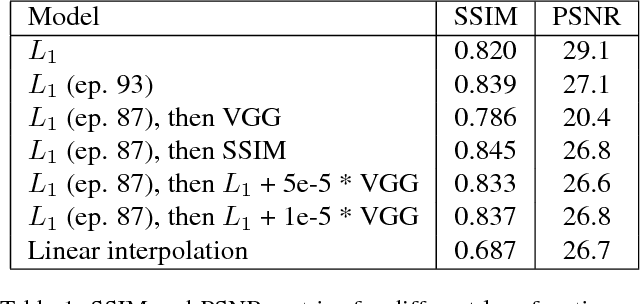

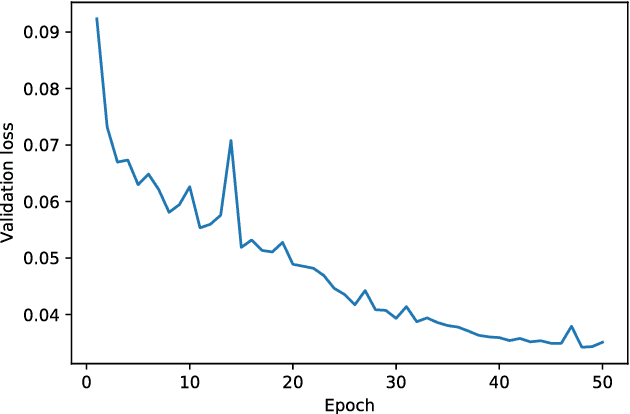

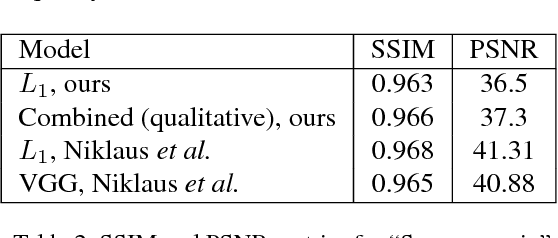

Implementing Adaptive Separable Convolution for Video Frame Interpolation

Sep 20, 2018

Abstract:As Deep Neural Networks are becoming more popular, much of the attention is being devoted to Computer Vision problems that used to be solved with more traditional approaches. Video frame interpolation is one of such challenges that has seen new research involving various techniques in deep learning. In this paper, we replicate the work of Niklaus et al. on Adaptive Separable Convolution, which claims high quality results on the video frame interpolation task. We apply the same network structure trained on a smaller dataset and experiment with various different loss functions, in order to determine the optimal approach in data-scarce scenarios. The best resulting model is still able to provide visually pleasing videos, although achieving lower evaluation scores.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge