Marco Ragni

Bayesian Inverse Physics for Neuro-Symbolic Robot Learning

Jun 10, 2025

Abstract: Real-world robotic applications, from autonomous exploration to assistive technologies, require adaptive, interpretable, and data-efficient learning paradigms. While deep learning architectures and foundation models have driven significant advances in diverse robotic applications, they remain limited in their ability to operate efficiently and reliably in unknown and dynamic environments. In this position paper, we critically assess these limitations and introduce a conceptual framework for combining data-driven learning with deliberate, structured reasoning. Specifically, we propose leveraging differentiable physics for efficient world modeling, Bayesian inference for uncertainty-aware decision-making, and meta-learning for rapid adaptation to new tasks. By embedding physical symbolic reasoning within neural models, robots could generalize beyond their training data, reason about novel situations, and continuously expand their knowledge. We argue that such hybrid neuro-symbolic architectures are essential for the next generation of autonomous systems, and to this end, we provide a research roadmap to guide and accelerate their development.

Modeling Associative Reasoning Processes

Jan 07, 2022

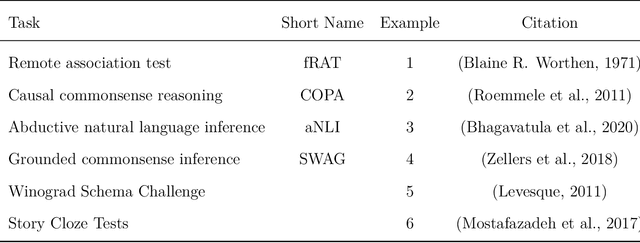

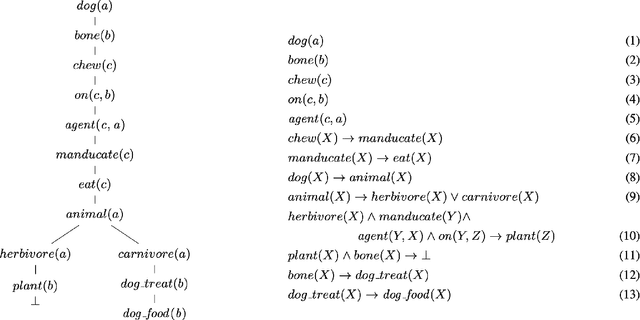

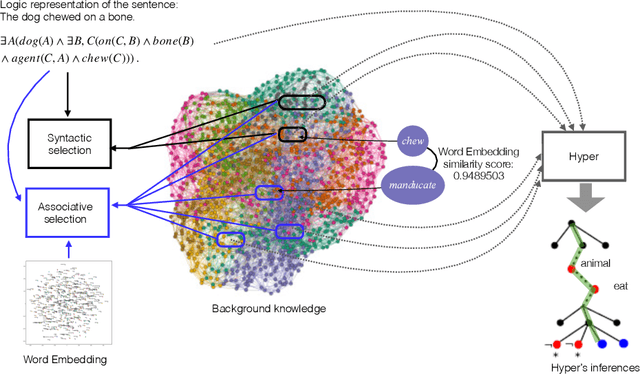

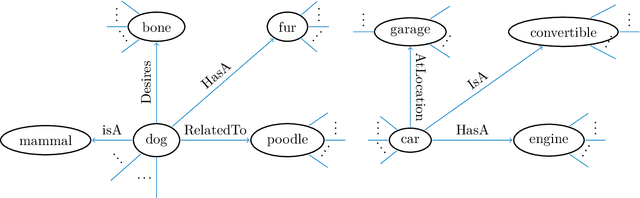

Abstract: The human capability to reason about one domain by using knowledge of other domains has been researched for more than 50 years, but models that are formally sound and predict cognitive processes are sparse. We propose a formally sound method that models associative reasoning by adapting logical reasoning mechanisms. In particular, it is shown that the combination with large commonsense knowledge within a single reasoning system demands an efficient and powerful association technique. This approach is also used for modelling mind-wandering and the Remote Associates Test (RAT) for testing creativity. In a general discussion we show implications of the model for a broad variety of cognitive phenomena including consciousness.

Uncovering the Data-Related Limits of Human Reasoning Research: An Analysis based on Recommender Systems

Mar 11, 2020

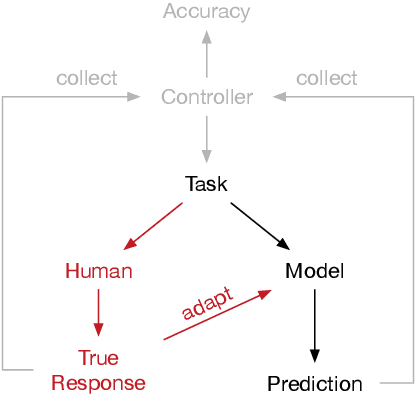

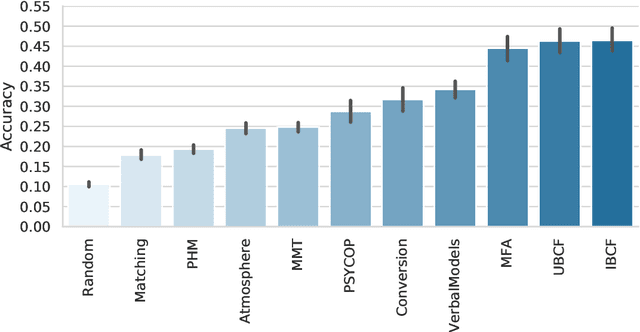

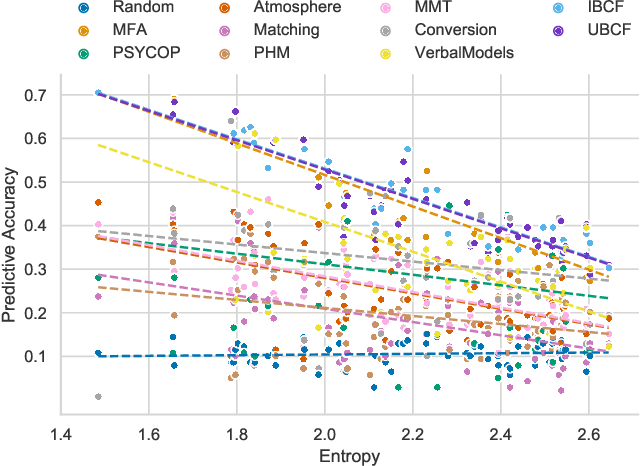

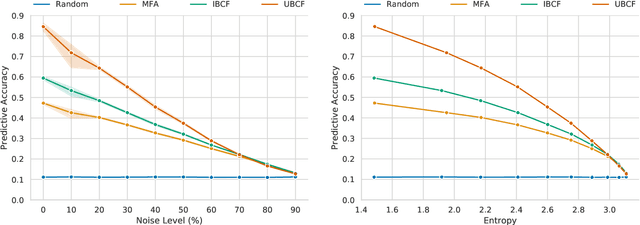

Abstract: Understanding the fundamentals of human reasoning is central to the development of any system built to closely interact with humans. Cognitive science pursues the goal of modeling human-like intelligence from a theory-driven perspective with a strong focus on explainability. Syllogistic reasoning, as one of the core domains of human reasoning research, has seen a surge of computational models being developed over the last years. However, recent analyses of models' predictive performances revealed a stagnation in improvement. We believe that most of the problems encountered in cognitive science are not due to the specific models that have been developed but can be traced back to the peculiarities of behavioral data instead. Therefore, we investigate potential data-related reasons for the problems in human reasoning research by comparing model performances on human and artificially generated datasets. In particular, we apply collaborative filtering recommenders to investigate the adversarial effects of inconsistencies and noise in data and illustrate the potential for data-driven methods in a field of research predominantly concerned with gaining high-level theoretical insight into a domain. Our work (i) provides insight into the levels of noise to be expected from human responses in reasoning data, (ii) uncovers evidence for an upper bound of performance that is close to being reached, which calls for an extension of the modeling task, and (iii) introduces the tools and presents initial results to pioneer a new paradigm for investigating and modeling reasoning that focuses on predicting responses for individual human reasoners.
