Marco Di Felice

Say the Mission, Execute the Swarm: Agent-Enhanced LLM Reasoning in the Web-of-Drones

May 05, 2026

Abstract: Large Language Models (LLMs) are increasingly explored as high-level reasoning engines for cyber-physical systems, yet their application to real-time UAV swarm management remains challenging due to heterogeneous interfaces, limited grounding, and the need for long-running closed-loop execution. This paper presents a mission-agnostic, agent-enhanced LLM framework for UAV swarm control, where users express mission objectives in natural language and the system autonomously executes them through grounded, real-time interactions. The proposed architecture combines an LLM-based Agent Core with a Model Context Protocol (MCP) gateway and a Web-of-Drones abstraction based on W3C Web of Things (WoT) standards. By exposing drones, sensors, and services as standardized WoT Things, the framework enables structured tool-based interaction, continuous state observation, and safe actuation without relying on code generation. We evaluate the framework using ArduPilot-based simulation across four swarm missions and six state-of-the-art LLMs. Results show that, despite strong reasoning abilities, current general-purpose LLMs still struggle to achieve reliable execution - even for simple swarm tasks - when operating without explicit grounding and execution support. Task-specific planning tools and runtime guardrails substantially improve robustness, while token consumption alone is not indicative of execution quality or reliability.

Relativistic Digital Twin: Bringing the IoT to the Future

Jan 18, 2023

Abstract: Complex IoT ecosystems often require the use of Digital Twins (DTs) of their physical assets in order to perform predictive analytics and simulate what-if scenarios. DTs are able to replicate IoT devices and adapt over time to their behavioral changes. However, DTs in IoT are typically tailored to a specific use case, without the possibility to seamlessly adapt to different scenarios. Further, the fragmentation of IoT poses additional challenges on how to deploy DTs in heterogeneous scenarios characterized by the use of multiple data formats and IoT network protocols. In this paper, we propose the Relativistic Digital Twin (RDT) framework, through which we automatically generate general-purpose DTs of IoT entities and tune their behavioral models over time by constantly observing their real counterparts. The framework relies on object representation via the Web of Things (WoT) to offer a standardized interface to each of the IoT devices as well as to their DTs. For this purpose, we extended the W3C WoT standard to encompass the concept of a behavioral model and define it in the Thing Description (TD) through a new vocabulary. Finally, we evaluated the RDT framework on two disjoint use cases to assess its correctness and learning performance, namely the DT of a simulated smart home scenario with the capability of forecasting the indoor temperature, and the DT of a real-world drone with the capability of forecasting its trajectory in an outdoor scenario.

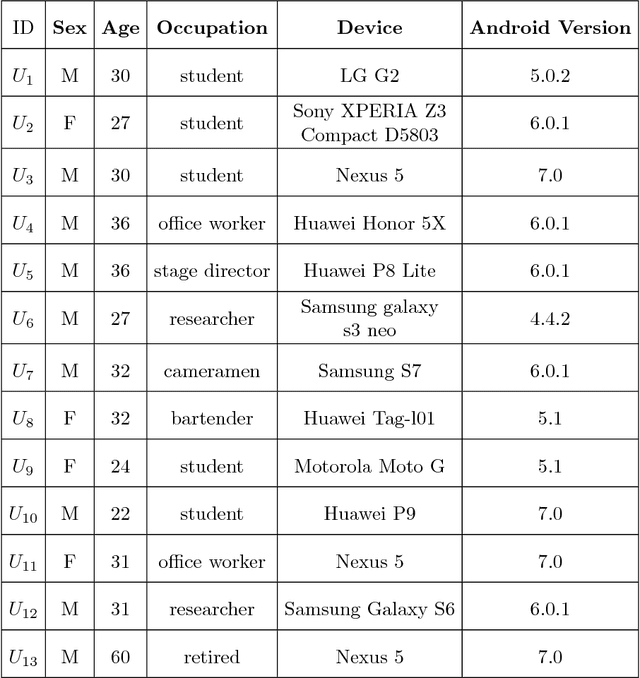

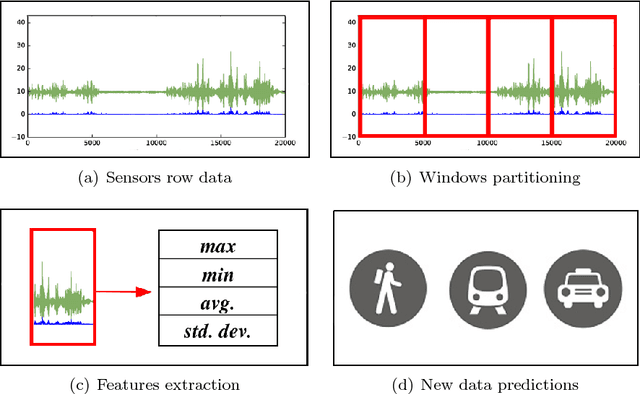

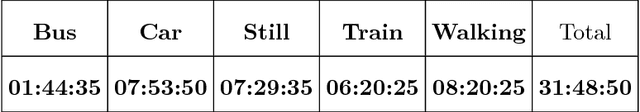

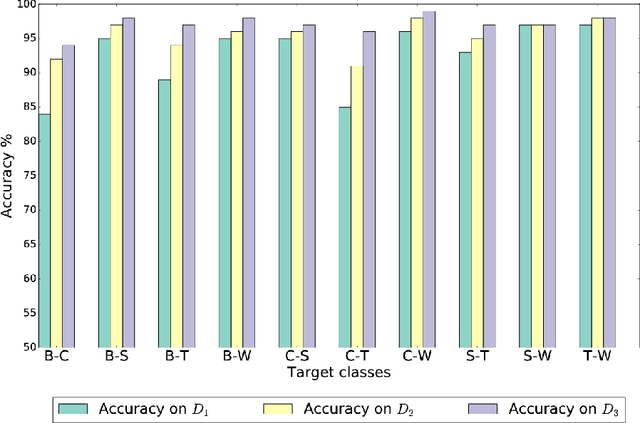

Custom Dual Transportation Mode Detection by Smartphone Devices Exploiting Sensor Diversity

Oct 12, 2018

Abstract: Making applications aware of the mobility experienced by the user can open the door to a wide range of novel services in different use cases, from smart parking to vehicular traffic monitoring. In the literature, there are many different studies demonstrating the theoretical possibility of performing Transportation Mode Detection (TMD) by mining smartphones' embedded sensor data. However, very few of them provide details on the benchmarking process and on how to implement the detection process in practice. In this study, we provide guidelines and fundamental results that can be useful for both researchers and practitioners aiming at implementing a working TMD system. These guidelines consist of three main contributions. First, we detail the construction of a training dataset, gathered by heterogeneous users and including five different transportation modes; the dataset is made available to the research community as a reference benchmark. Second, we provide an in-depth analysis of the sensor relevance for the case of Dual TMD, which is required by most mobility-aware applications. Third, we investigate the possibility of performing TMD for unknown users/instances not present in the training set, and we compare the results with state-of-the-art Android APIs for activity recognition.

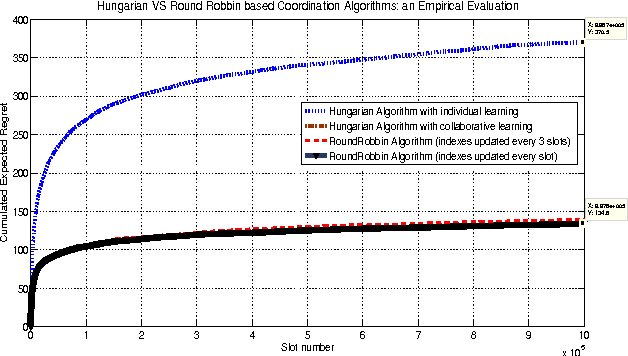

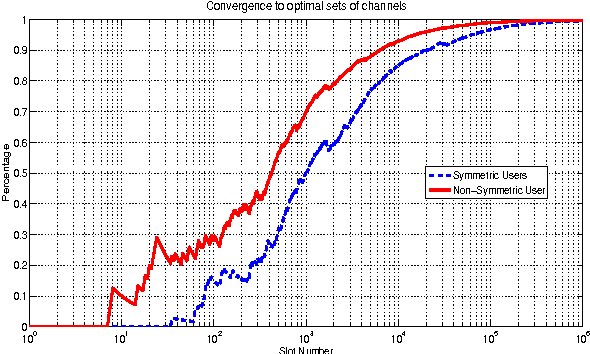

Collaboration and Coordination in Secondary Networks for Opportunistic Spectrum Access

Apr 13, 2012

Abstract: In this paper, we address the general case of a coordinated secondary network willing to exploit communication opportunities left vacant by a licensed primary network. Since secondary users (SUs) usually have no prior knowledge of the environment, they need to learn the availability of each channel through sensing techniques, which however can be prone to detection errors. We argue that cooperation among secondary users can enable efficient learning and coordination mechanisms in order to maximize the spectrum exploitation by SUs, while minimizing the impact on the primary network. To this end, we provide three novel contributions in this paper. First, we formulate the spectrum selection in secondary networks as an instance of the Multi-Armed Bandit (MAB) problem, and we extend the analysis to the collaborative learning case, in which each SU learns the spectrum occupation and shares this information with other SUs. We show that collaboration among SUs can mitigate the impact of sensing errors on system performance, and improve the convergence of the learning process to the optimal solution. Second, we integrate the learning algorithms with two collaboration techniques, based on modified versions of the Hungarian algorithm and of the Round Robin algorithm, that reduce the interference among SUs. Third, we derive fundamental limits to the performance of cooperative learning algorithms based on Upper Confidence Bound (UCB) policies in a symmetric scenario where all SUs have the same perception of the quality of the resources. Extensive simulation results confirm the effectiveness of our joint learning-collaboration algorithm in protecting the operations of Primary Users (PUs), while maximizing the performance of SUs.
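
For readers unfamiliar with UCB policies, the following is a minimal sketch of the textbook UCB1 rule applied to channel selection by a single secondary user. It is not the algorithm proposed in the paper: it assumes error-free sensing, no collaboration among SUs, and a fixed per-channel probability of being free. The names `ucb1_select`, `run_ucb1`, and `free_probs` are hypothetical and introduced only for illustration.

```python
import math
import random


def ucb1_select(counts, means, t):
    """Pick the channel with the highest UCB1 index at round t (t >= 1)."""
    # Sense every channel once before relying on the confidence bound.
    for ch, n in enumerate(counts):
        if n == 0:
            return ch
    # Index = empirical mean + exploration bonus that shrinks with more samples.
    return max(
        range(len(counts)),
        key=lambda ch: means[ch] + math.sqrt(2.0 * math.log(t) / counts[ch]),
    )


def run_ucb1(free_probs, horizon, seed=0):
    """Simulate one SU choosing among channels (assumed Bernoulli availability)."""
    rng = random.Random(seed)
    k = len(free_probs)
    counts = [0] * k    # how many times each channel was sensed
    means = [0.0] * k   # empirical fraction of times each channel was free
    for t in range(1, horizon + 1):
        ch = ucb1_select(counts, means, t)
        # Assumption: reward is 1 if the channel is free, 0 if occupied.
        reward = 1.0 if rng.random() < free_probs[ch] else 0.0
        counts[ch] += 1
        means[ch] += (reward - means[ch]) / counts[ch]  # incremental average
    return counts, means


if __name__ == "__main__":
    counts, means = run_ucb1([0.2, 0.5, 0.9], horizon=2000)
    print("times sensed per channel:", counts)
    print("estimated availability:", [round(m, 2) for m in means])
```

In this sketch the SU concentrates its accesses on the channel that is most often free, while the logarithmic bonus keeps it occasionally re-sensing the others. The collaborative setting described in the abstract would additionally share observations among SUs and coordinate channel assignments, which this example does not attempt.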
