Manuel Lopez-Ibanez

An adaptive approach to Bayesian Optimization with switching costs

May 14, 2024

Abstract: We investigate modifications to Bayesian Optimization for a resource-constrained setting of sequential experimental design where changes to certain design variables of the search space incur a switching cost. This models the scenario where there is a trade-off between evaluating more points while maintaining the same setup, and switching setups at the expense of fewer possible evaluations due to the incurred cost. We adapt two process-constrained batch algorithms to this sequential problem formulation, and propose two new methods: one cost-aware and one cost-ignorant. We validate and compare the algorithms using a set of 7 scalable test functions in different dimensionalities and switching-cost settings, for a total of 30 configurations. Our proposed cost-aware, hyperparameter-free algorithm yields results comparable to tuned process-constrained algorithms in all settings we considered, suggesting some degree of robustness to varying landscape features and cost trade-offs. This method starts to outperform the other algorithms as the switching cost increases. Our work extends other recent Bayesian Optimization studies in resource-constrained settings, which consider a batch setting only. While the contributions of this work are relevant to the general class of resource-constrained problems, they are particularly relevant to problems where adaptability to varying resource availability is of high importance.

Benchmarking in Optimization: Best Practice and Open Issues

Jul 07, 2020

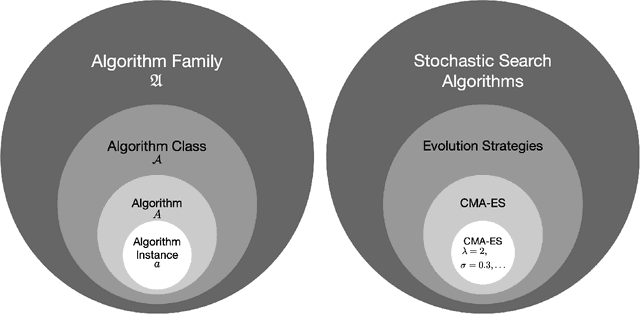

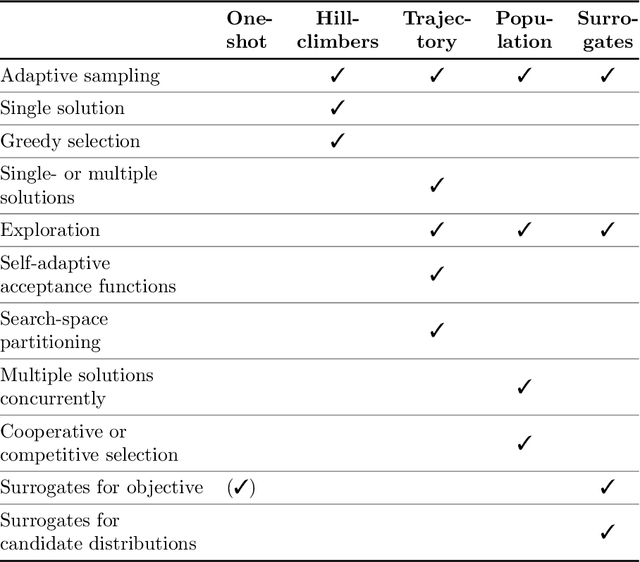

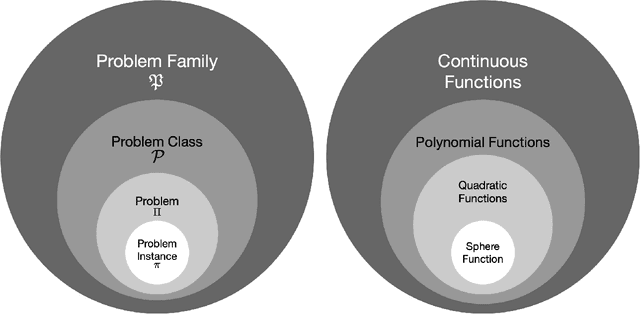

Abstract: This survey compiles ideas and recommendations from more than a dozen researchers with different backgrounds and from different institutes around the world. Promoting best practice in benchmarking is its main goal. The article discusses eight essential topics in benchmarking: clearly stated goals, well-specified problems, suitable algorithms, adequate performance measures, thoughtful analysis, effective and efficient designs, comprehensible presentations, and guaranteed reproducibility. The final goal is to provide well-accepted guidelines (rules) that might be useful for authors and reviewers. As benchmarking in optimization is an active and evolving field of research, this manuscript is meant to co-evolve over time by means of periodic updates.

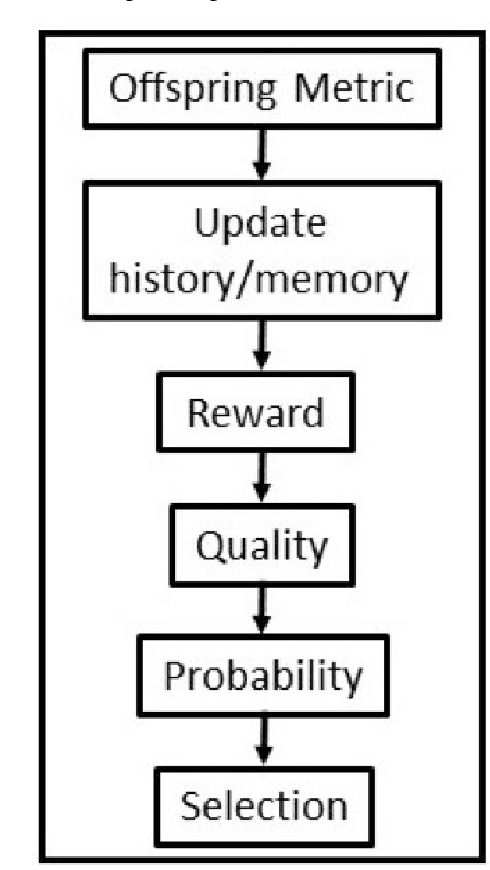

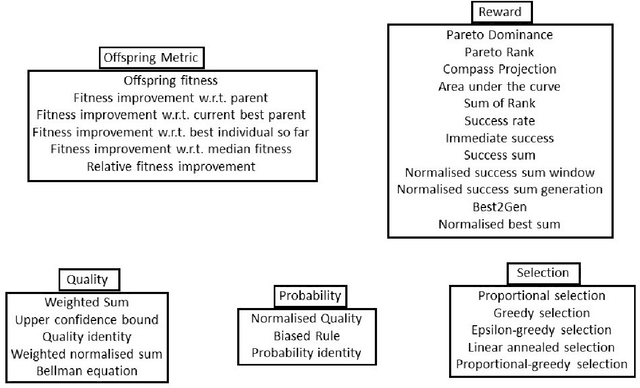

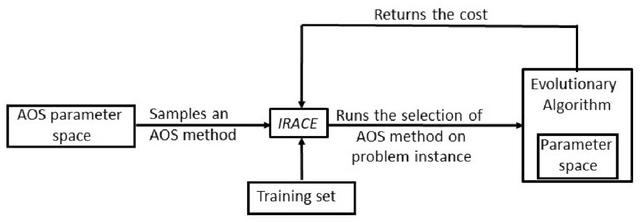

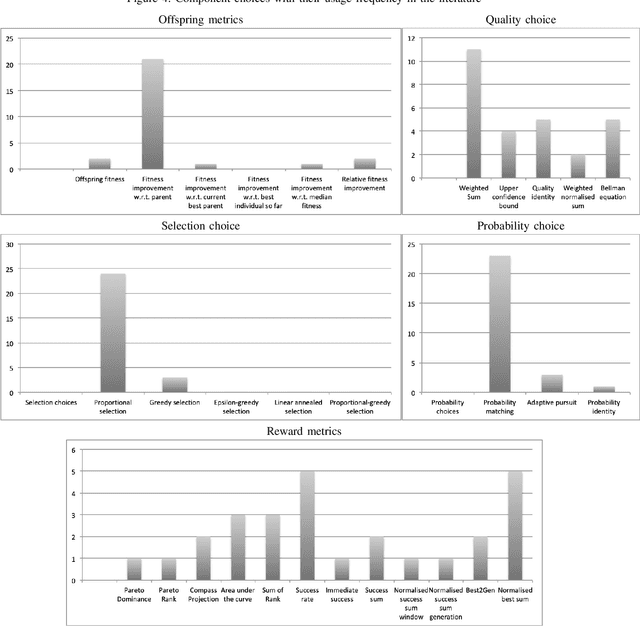

Unified Framework for the Adaptive Operator Selection of Discrete Parameters

May 12, 2020

Abstract: We conduct an exhaustive survey of adaptive operator selection (AOS) in Evolutionary Algorithms (EAs). We simplify the AOS structure by adding more components to the framework, building upon the existing categorisation of AOS methods. In addition to simplifying, we look at the commonality among AOS methods from the literature in order to generalise them. Each component is presented with a number of alternative choices, each represented with a formula. We make three sets of comparisons. First, the methods from the literature are tested on the BBOB test bed with their default hyperparameters. Second, the hyperparameters of these methods are tuned using an offline configurator known as IRACE. Third, for a given set of problems, we use IRACE to select the best combination of components and tune their hyperparameters.
