Mandeep Singh

Tony

OpenAI GPT-5 System Card

Dec 19, 2025

Abstract: This is the system card published alongside the OpenAI GPT-5 launch in August 2025. GPT-5 is a unified system with three parts: a smart and fast model that answers most questions, a deeper reasoning model for harder problems, and a real-time router that quickly decides which model to use. The router bases its choice on conversation type, complexity, tool needs, and explicit intent (for example, if you say 'think hard about this' in the prompt). The router is continuously trained on real signals, including when users switch models, preference rates for responses, and measured correctness, so it improves over time. Once usage limits are reached, a mini version of each model handles the remaining queries. This system card focuses primarily on gpt-5-thinking and gpt-5-main; evaluations for other models are available in the appendix. The GPT-5 system not only outperforms previous models on benchmarks and answers questions more quickly but, more importantly, is more useful for real-world queries. We've made significant advances in reducing hallucinations, improving instruction following, and minimizing sycophancy. We have also improved GPT-5's performance in three of ChatGPT's most common uses: writing, coding, and health. All of the GPT-5 models also feature safe-completions, our latest approach to safety training to prevent disallowed content. As with ChatGPT agent, we have decided to treat gpt-5-thinking as High capability in the Biological and Chemical domain under our Preparedness Framework, which activates the associated safeguards. We do not have definitive evidence that this model could meaningfully help a novice create severe biological harm, which is our defined threshold for High capability. Even so, we have chosen to take a precautionary approach.

Emotion Recognition in Audio and Video Using Deep Neural Networks

Jun 15, 2020

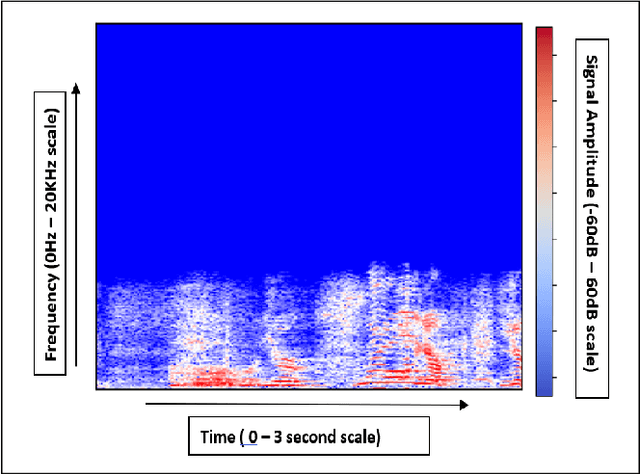

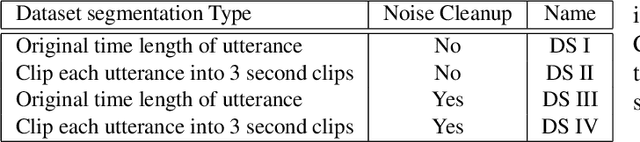

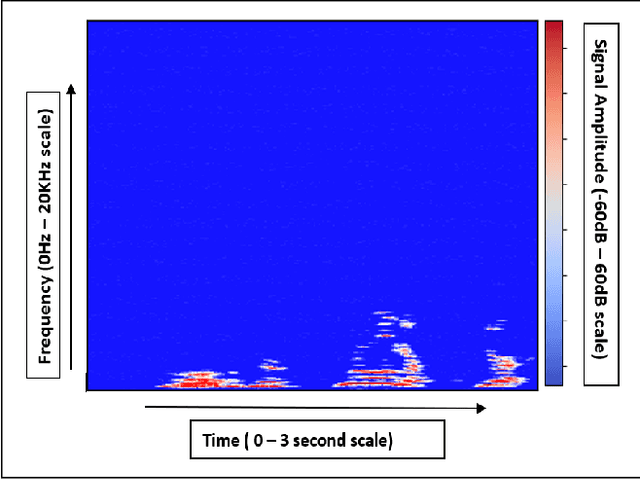

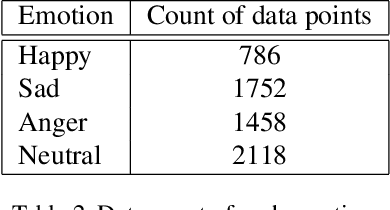

Abstract: Humans are able to comprehend information from multiple domains, e.g., speech, text, and visual. With the advancement of deep learning, there has been significant improvement in speech recognition. Recognizing emotion from speech is an important problem, and deep learning has improved both the accuracy and the latency of emotion recognition. However, many challenges to improving accuracy remain. In this work, we explore different neural networks to improve the accuracy of emotion recognition. Among the architectures explored, the best is a (CNN+RNN) + 3DCNN multi-model architecture that processes audio spectrograms and the corresponding video frames. It achieves an emotion prediction accuracy of 54.0% among 4 emotions and 71.75% among 3 emotions on the IEMOCAP [2] dataset.
