Maciej Paszyński

Collocation-based Robust Physics Informed Neural Networks for time-dependent simulations of pollution propagation under thermal inversion conditions on Spitsbergen

Apr 24, 2026

Abstract: In this paper, we propose a Physics-Informed Neural Network framework for time-dependent simulations of pollution propagation originating from moving emission sources. We formulate a robust variational framework for the time-dependent advection-diffusion problem and establish the boundedness and inf-sup stability of the corresponding discrete weak formulation. Based on this mathematical foundation, we construct a robust loss function that is directly related to the true approximation error, defined as the difference between the neural network approximation and the (unknown) exact solution. Additionally, a collocation-based strategy is introduced to speed up neural network training. As a case study, we investigate pollution propagation caused by snowmobile traffic in Longyearbyen, Spitsbergen, supported by detailed in-field measurements collected using dedicated sensors. The proposed framework is applied to analyze the effects of thermal inversion on pollutant accumulation. Our results demonstrate that thermal inversion traps dense and humid air masses near the ground, significantly enhancing particulate matter (PM) concentration and worsening local air quality.

CO$_2$ sequestration hybrid solver using isogeometric alternating-directions and collocation-based robust variational physics informed neural networks (IGA-ADS-CRVPINN)

Apr 22, 2026

Abstract: This paper presents a hybrid solver for a CO$_2$ sequestration problem. The solver uses IGA-ADS (the Isogeometric Analysis Alternating-Directions Solver) to compute the saturation scalar field update using an explicit method, and CRVPINN (the Collocation-based Robust Variational Physics Informed Neural Networks solver) to compute the pressure scalar field. The study focuses on simulating the physical behavior of CO$_2$ in porous structures, excluding chemical reactions. The mathematical model is based on Darcy's Law. The CRVPINN is pretrained on the initial pressure configuration, and the pressure update requires only 100 iterations of the Adam method per time step. We compare our hybrid IGA-ADS solver, coupled with the CRVPINN method, with a baseline of the IGA-ADS solver coupled with the MUMPS direct solver. Our hybrid solver is over 3 times faster on a single computational node of the ARES cluster at ACK CYFRONET. Future work includes extensive testing, inverse problem solving, and potential application to H$_2$ storage problems.

Robust Physics Informed Neural Networks

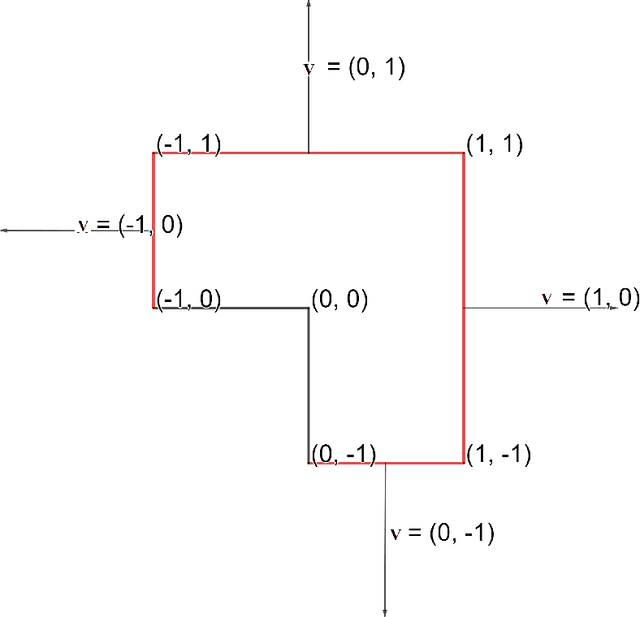

Jan 12, 2024

Abstract: We introduce a Robust version of the Physics-Informed Neural Networks (RPINNs) to approximate the solutions of Partial Differential Equations (PDEs). Standard Physics-Informed Neural Networks (PINNs) take into account the governing physical laws described by the PDE during the learning process. The network is trained on a data set that consists of randomly selected points in the physical domain and on its boundary. PINNs have been successfully applied to solve various problems described by PDEs with boundary conditions. The loss function in traditional PINNs is based on the strong residuals of the PDEs. This loss function is generally not robust with respect to the true error: it can be far from the true error, which makes the training process more difficult. In particular, we do not know whether the training process has already converged to the solution with the required accuracy. This is especially true if we do not know the exact solution, so we cannot estimate the true error during training. This paper introduces a different way of defining the loss function. It incorporates the residual and the inverse of the Gram matrix, computed using the energy norm. We test our RPINN algorithm on two Laplace problems and one advection-diffusion problem in two spatial dimensions. We conclude that RPINN is a robust method. The proposed loss coincides well with the true error of the solution, as measured in the energy norm. Thus, we know whether the training process is going well, and we know when to stop the training to obtain a neural network approximation of the PDE solution whose true error meets the required accuracy.
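The idea of a loss built from the residual and the inverse of the Gram matrix can be illustrated with a minimal sketch. This is not the paper's implementation: it assumes a 1D Poisson problem $-u'' = f$ on $(0,1)$ with zero boundary values, a test space of piecewise-linear hat functions, a lumped load vector, and a perturbed vector of nodal values standing in for the neural network output. The names `gram_matrix`, `robust_loss`, and `u_candidate` are hypothetical.

```python
import numpy as np

def gram_matrix(n, h):
    """Gram matrix of n interior hat functions in the H^1_0 (energy) inner product."""
    return (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h

def robust_loss(u, G, b):
    """Loss r^T G^{-1} r, where r = G u - b is the discrete weak residual."""
    r = G @ u - b
    return float(r @ np.linalg.solve(G, r))

n = 31                                   # number of interior nodes (assumption)
h = 1.0 / (n + 1)
x = np.linspace(h, 1.0 - h, n)

G = gram_matrix(n, h)
b = h * np.pi**2 * np.sin(np.pi * x)     # lumped load vector for f = pi^2 sin(pi x)

# Discrete reference solution in the same space.
u_h = np.linalg.solve(G, b)

# Stand-in for a neural network approximation: reference plus a perturbation.
u_candidate = u_h + 0.05 * np.sin(3 * np.pi * x)

loss = robust_loss(u_candidate, G, b)

# Squared energy-norm distance between the candidate and the reference.
e = u_candidate - u_h
energy_error_sq = float(e @ G @ e)

print("robust loss        :", loss)
print("energy error (sq.) :", energy_error_sq)
```

In this simplified setting the two printed values agree up to rounding, because $r = G(u - u_h)$ implies $r^T G^{-1} r = (u - u_h)^T G (u - u_h)$. That is the sense in which such a loss tracks the error in the energy norm, here with respect to the discrete reference solution.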

Deep neural networks for smooth approximation of physics with higher order and continuity B-spline base functions

Jan 03, 2022

Abstract: This paper deals with the following important research question. Traditionally, a neural network employs non-linear activation functions concatenated with linear operators to approximate a given physical phenomenon. Such networks "fill the space" with the concatenations of the activation functions and linear operators and adjust their coefficients to approximate the physical phenomenon. We claim that it is better to "fill the space" with linear combinations of smooth higher-order B-spline basis functions, as employed by isogeometric analysis, and to use the neural networks to adjust the coefficients of these linear combinations. In other words, we evaluate two possibilities: using neural networks to approximate the coefficients of the B-spline basis functions, and using them to approximate the solution directly. Solving differential equations with neural networks was proposed by Maziar Raissi et al. in 2017 with the introduction of Physics-informed Neural Networks (PINN), which naturally encode underlying physical laws as prior information. Approximating the coefficients with a network that takes a function as input leverages the well-known capability of neural networks to act as universal function approximators. In essence, in the PINN approach the network approximates the value of the given field at a given point. We present an alternative approach, where the physical quantity is approximated as a linear combination of smooth B-spline basis functions, and the neural network approximates the coefficients of the B-splines. This research compares results from the DNN approximating the coefficients of the linear combination of B-spline basis functions with the DNN approximating the solution directly. We show that our approach is cheaper and more accurate when approximating smooth physical fields.
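The representation of a field as a linear combination of B-spline basis functions can be sketched as follows. This is only an illustration under stated assumptions: a 1D quadratic basis on an open knot vector, a target field $\sin(\pi x)$, and a least-squares fit standing in for the coefficients that a neural network would produce. The helper names `bspline_basis` and `fit_coefficients` are hypothetical.

```python
import numpy as np

def bspline_basis(x, knots, degree):
    """Evaluate all B-spline basis functions at points x (Cox-de Boor recursion)."""
    x = np.asarray(x, dtype=float)
    knots = np.asarray(knots, dtype=float)
    # Degree 0: indicator functions of the half-open knot spans.
    B = np.zeros((len(x), len(knots) - 1))
    for i in range(len(knots) - 1):
        B[:, i] = (knots[i] <= x) & (x < knots[i + 1])
    # Assign the right endpoint to the last non-empty span.
    last = np.max(np.nonzero(knots[:-1] < knots[1:])[0])
    B[x == knots[-1], last] = 1.0
    # Raise the degree step by step, skipping zero-width spans.
    for p in range(1, degree + 1):
        B_new = np.zeros((len(x), len(knots) - 1 - p))
        for i in range(len(knots) - 1 - p):
            left_den = knots[i + p] - knots[i]
            right_den = knots[i + p + 1] - knots[i + 1]
            if left_den > 0:
                B_new[:, i] += (x - knots[i]) / left_den * B[:, i]
            if right_den > 0:
                B_new[:, i] += (knots[i + p + 1] - x) / right_den * B[:, i + 1]
        B = B_new
    return B

def fit_coefficients(B, target):
    """Least-squares coefficients; a stand-in for the network-predicted ones."""
    return np.linalg.lstsq(B, target, rcond=None)[0]

degree = 2
knots = np.array([0, 0, 0, 0.25, 0.5, 0.75, 1, 1, 1], dtype=float)

x = np.linspace(0.0, 1.0, 200)
B = bspline_basis(x, knots, degree)      # shape: (200, 6)

target = np.sin(np.pi * x)               # smooth field to approximate (assumption)
coeffs = fit_coefficients(B, target)
u_approx = B @ coeffs                    # u(x) = sum_i c_i B_i(x)

print("number of coefficients:", coeffs.size)
print("max abs error         :", float(np.max(np.abs(u_approx - target))))
```

The point of the sketch is the size of the unknown: the field is described by six coefficients rather than by a value at every evaluation point, and the smoothness comes from the basis itself.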
