Maciej K. Hryniewicki

XGBOD: Improving Supervised Outlier Detection with Unsupervised Representation Learning

Dec 01, 2019

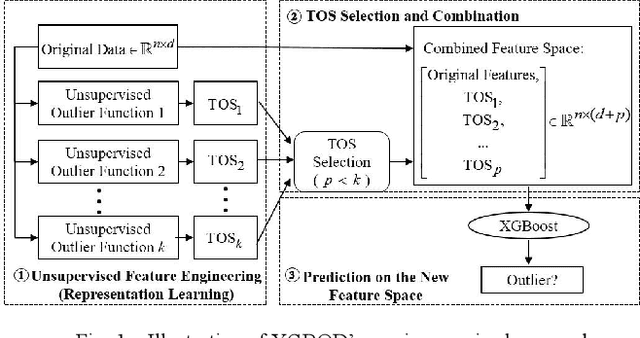

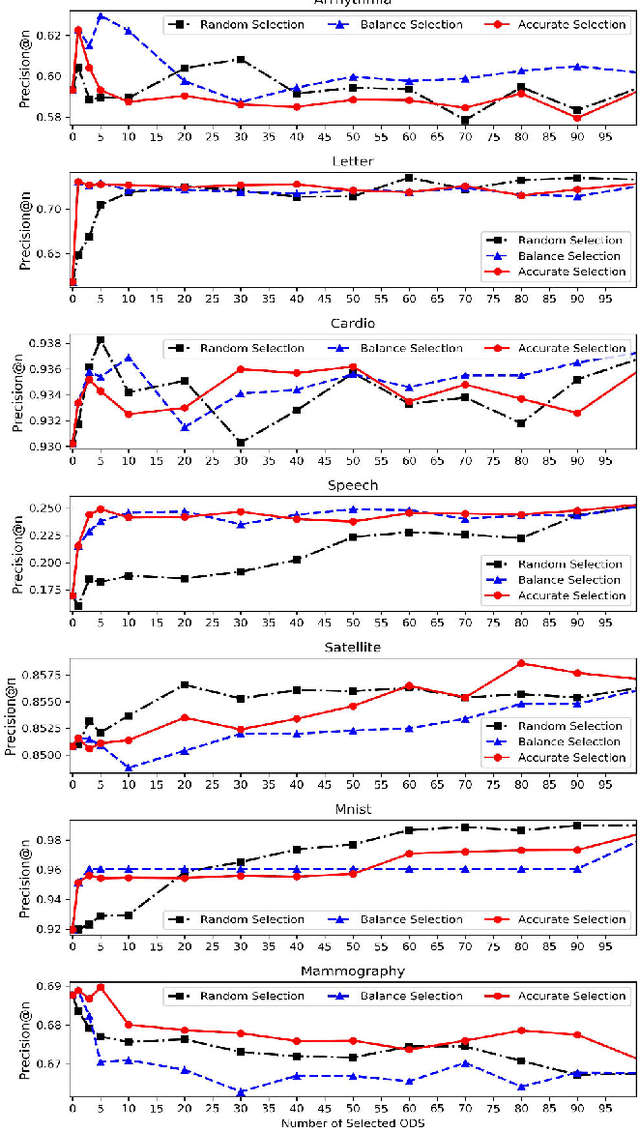

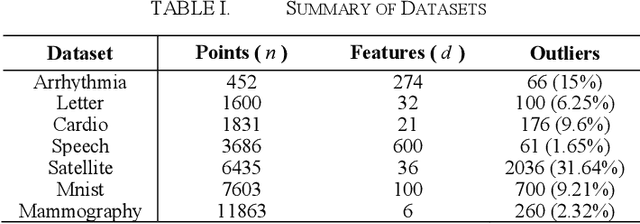

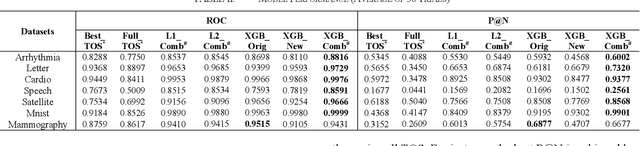

Abstract: A new semi-supervised ensemble algorithm called XGBOD (Extreme Gradient Boosting Outlier Detection) is proposed, described and demonstrated for the enhanced detection of outliers among normal observations in various practical datasets. The proposed framework combines the strengths of supervised and unsupervised machine learning methods in a hybrid approach that exploits the individual performance capabilities of each in outlier detection. XGBOD uses multiple unsupervised outlier mining algorithms to extract useful representations from the underlying data; these representations augment the original feature space and improve the predictive capabilities of an embedded supervised classifier. The novel approach is shown to provide superior performance in comparison to competing individual detectors, the full ensemble and two existing representation-learning-based algorithms across seven outlier datasets.
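The feature-augmentation idea in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the k-th-nearest-neighbor distance stands in for the unsupervised detectors, the helper names `knn_distance_score` and `augment_features` are hypothetical, and the supervised classifier that would be trained on the augmented features is omitted.

```python
import math

def knn_distance_score(X, i, k):
    """Distance from X[i] to its k-th nearest other point.

    Assumption: this simple score stands in for an unsupervised detector.
    """
    dists = sorted(math.dist(X[i], X[j]) for j in range(len(X)) if j != i)
    return dists[k - 1]

def augment_features(X, ks=(1, 2, 3)):
    """Append one unsupervised outlier score per k to each feature vector."""
    return [
        list(x) + [knn_distance_score(X, i, k) for k in ks]
        for i, x in enumerate(X)
    ]

# Four clustered points and one obvious outlier.
X = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (5.0, 5.0)]
X_aug = augment_features(X)
# Each row now holds 2 original features plus 3 score features; a supervised
# classifier would then be fit on X_aug together with the available labels.
```

In this toy data the appended score columns are largest for the last point, which is the kind of signal a downstream classifier could exploit.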

DCSO: Dynamic Combination of Detector Scores for Outlier Ensembles

Nov 23, 2019

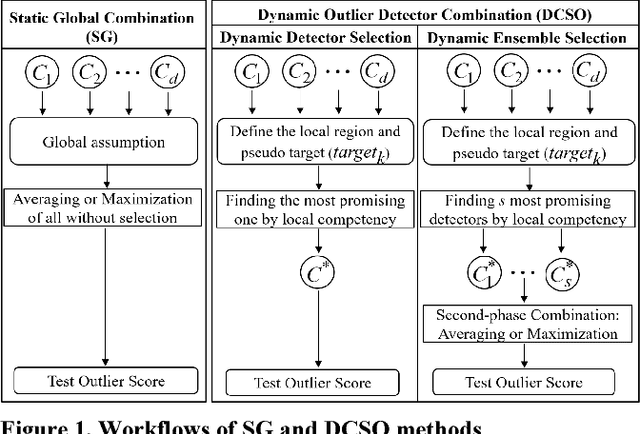

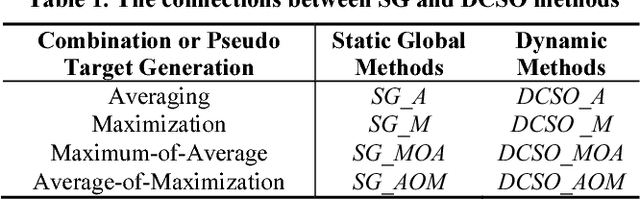

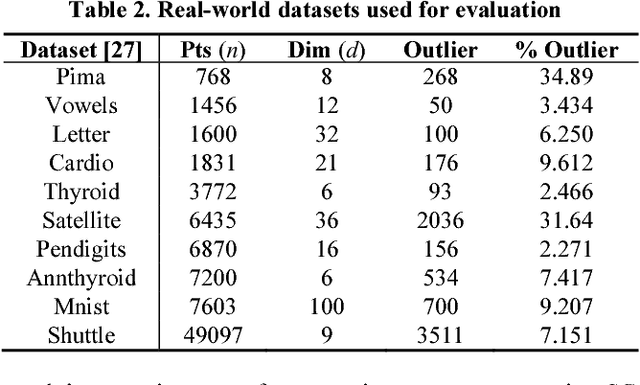

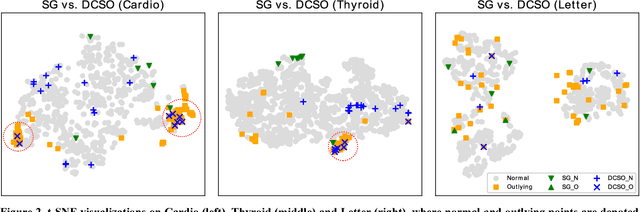

Abstract: Selecting and combining the outlier scores of different base detectors used within outlier ensembles can be quite challenging in the absence of ground truth. In this paper, an unsupervised outlier detector combination framework called DCSO is proposed, demonstrated and assessed for the dynamic selection of the most competent base detectors, with an emphasis on data locality. The proposed DCSO framework first defines the local region of a test instance by its k nearest neighbors and then identifies the top-performing base detectors within the local region. Experimental results on ten benchmark datasets demonstrate that DCSO provides consistent performance improvement over existing static combination approaches in mining outlying objects. To facilitate interpretability and reliability of the proposed method, DCSO is analyzed using both theoretical frameworks and visualization techniques, and presented alongside empirical parameter-setting instructions that can be used to improve the overall performance.
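The two steps named in this abstract (define a local region by k nearest neighbors, then pick the top-performing detector there) might be sketched as follows. Since the abstract does not say how competence is measured without ground truth, this sketch assumes a pseudo target equal to the average of all detector scores and ranks detectors by Pearson correlation with it inside the local region. The function names and the toy data are hypothetical.

```python
import math

def pearson(a, b):
    """Pearson correlation; returns 0.0 when either input is constant."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        return 0.0
    return cov / math.sqrt(va * vb)

def select_detector(X_train, train_scores, x_test, k):
    """Return the index of the detector judged most competent near x_test.

    train_scores[d][i] is the score of detector d on training point i.
    Assumption: the pseudo target is the mean score across detectors.
    """
    n = len(X_train)
    pseudo = [sum(s[i] for s in train_scores) / len(train_scores) for i in range(n)]
    # Local region: the k training points closest to the test instance.
    region = sorted(range(n), key=lambda i: math.dist(X_train[i], x_test))[:k]
    target = [pseudo[i] for i in region]
    corr = [pearson([s[i] for i in region], target) for s in train_scores]
    return max(range(len(train_scores)), key=corr.__getitem__)

X_train = [(0.0,), (1.0,), (2.0,), (10.0,), (11.0,), (12.0,)]
scores = [
    [0, 1, 2, 0, 0, 0],  # detector 0 only varies on the left cluster
    [0, 0, 0, 0, 1, 2],  # detector 1 only varies on the right cluster
]
left = select_detector(X_train, scores, (1.0,), k=3)
right = select_detector(X_train, scores, (11.0,), k=3)
```

Here the selected detector changes with the location of the test instance, which is the contrast with static combination that the abstract draws.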

LSCP: Locally Selective Combination in Parallel Outlier Ensembles

Dec 04, 2018

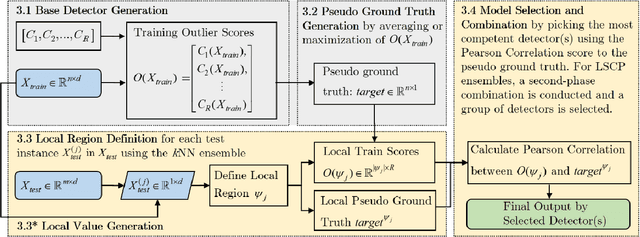

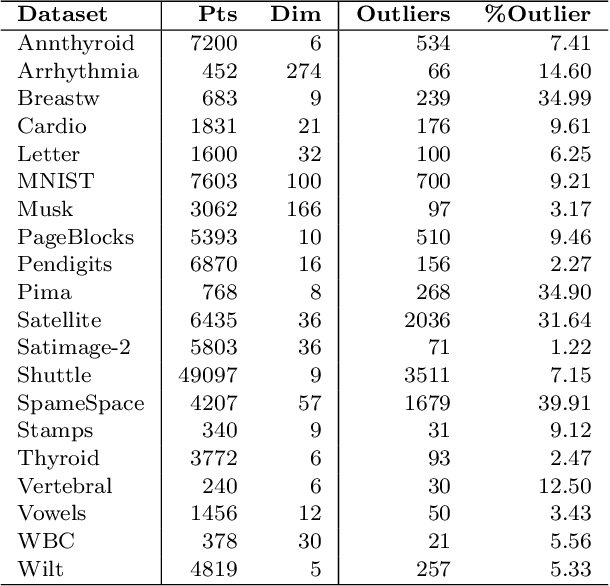

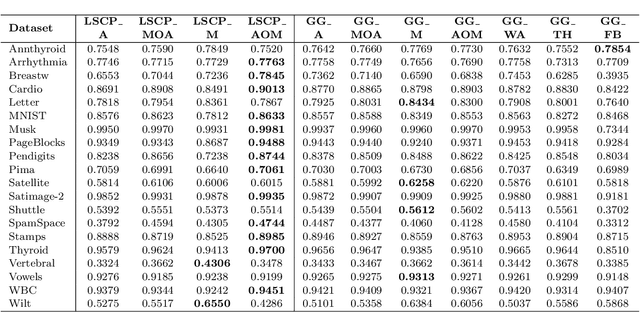

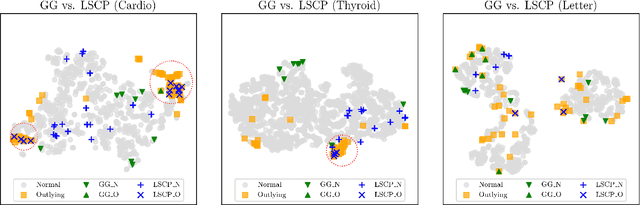

Abstract: In unsupervised outlier ensembles, the absence of ground truth makes the combination of base detectors a challenging task. Specifically, existing parallel outlier ensembles lack a reliable way of selecting competent base detectors during model combination, which affects accuracy and stability. In this paper, we propose a framework, called Locally Selective Combination in Parallel Outlier Ensembles (LSCP), which addresses this issue by defining a local region around a test instance using the consensus of its nearest neighbors in randomly generated feature spaces. The top-performing base detectors in this local region are selected and combined as the model's final output. Four variants of the LSCP framework are compared with six widely used combination algorithms for parallel ensembles. Experimental results demonstrate that one of these LSCP variants consistently outperforms baseline algorithms on the majority of eighteen real-world datasets.
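The local-region definition described in this abstract could look roughly like the sketch below. Several details are assumptions made for illustration and are not taken from the abstract: each random feature space is a random subset of between half and all of the features, and a training point joins the region when it is among the k nearest neighbors in more than half of the subspaces. The function name `local_region` and its parameters are hypothetical.

```python
import math
import random
from collections import Counter

def local_region(X_train, x_test, k, n_subspaces=10, seed=0):
    """Training indices that are neighbors of x_test in most random subspaces."""
    rng = random.Random(seed)
    d = len(x_test)
    counts = Counter()
    for _ in range(n_subspaces):
        # Assumption: a subspace is a random subset of d/2 to d features.
        size = rng.randint(max(1, d // 2), d)
        feats = rng.sample(range(d), size)

        def dist(i):
            return math.sqrt(sum((X_train[i][f] - x_test[f]) ** 2 for f in feats))

        # k nearest training points in this subspace.
        counts.update(sorted(range(len(X_train)), key=dist)[:k])
    # Assumption: consensus means appearing in more than half of the subspaces.
    return sorted(i for i, c in counts.items() if c > n_subspaces / 2)

X_train = [
    (0.0, 0.0, 0.0, 0.0), (0.1, 0.1, 0.1, 0.1), (0.2, 0.2, 0.2, 0.2),
    (5.0, 5.0, 5.0, 5.0), (6.0, 6.0, 6.0, 6.0), (7.0, 7.0, 7.0, 7.0),
]
region = local_region(X_train, (0.05, 0.05, 0.05, 0.05), k=3)
```

Detector selection would then be evaluated only on the points in `region`, rather than on a neighborhood computed once in the full feature space.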
