Mário de Castro

LOCUS: A Distribution-Free Loss-Quantile Score for Risk-Aware Predictions

Mar 02, 2026

Abstract: Modern machine learning models can be accurate on average yet still make mistakes that dominate deployment cost. We introduce Locus, a distribution-free wrapper that produces a per-input, loss-scale reliability score for a fixed prediction function. Rather than quantifying uncertainty about the label, Locus models the realized loss of the prediction function, using any engine that outputs a predictive distribution for the loss given an input. A simple split-calibration step turns this loss model into a distribution-free, interpretable score that is comparable across inputs and can be read as an upper loss level. The score is useful on its own for ranking, and it can optionally be thresholded to obtain a transparent flagging rule with distribution-free control of large-loss events. Experiments across 13 regression benchmarks show that Locus yields effective risk ranking and reduces large-loss frequency compared to standard heuristics.
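The abstract does not spell out the split-calibration step. One standard way to turn a predictive loss distribution into a distribution-free upper loss level is split-conformal calibration of probability-integral-transform (PIT) values. The sketch below assumes that construction and a Gaussian loss engine built on the standard library's `NormalDist`; the helpers `calibrate_level`, `loss_score`, and `draw` are hypothetical illustrations, not necessarily the procedure Locus uses.

```python
import math
import random
from statistics import NormalDist


def calibrate_level(cal_mu, cal_sigma, cal_loss, alpha):
    """Hypothetical split-calibration step.

    Each calibration point contributes its PIT value u_i = F_i(loss_i), where
    F_i is the engine's predictive CDF of the loss (assumed Gaussian here).
    Returns the CDF level tau used to read off upper loss levels.
    """
    u = sorted(
        NormalDist(m, s).cdf(y) for m, s, y in zip(cal_mu, cal_sigma, cal_loss)
    )
    n = len(u)
    k = math.ceil((n + 1) * (1 - alpha))  # standard split-conformal rank
    if k > n:
        return 1.0  # calibration set too small for this alpha
    return u[k - 1]


def loss_score(mu, sigma, tau):
    """Hypothetical per-input score: the tau-quantile of the predicted loss."""
    if tau >= 1.0:
        return math.inf
    # Clamping tau away from 0 can only raise the score (more conservative).
    return NormalDist(mu, sigma).inv_cdf(max(tau, 1e-12))


rng = random.Random(0)


def draw(n):
    # Synthetic data: the engine predicts (mu, sigma) for the loss, but the
    # realized loss is noisier than predicted, so the engine is miscalibrated.
    mu = [rng.uniform(0.5, 2.0) for _ in range(n)]
    sigma = [0.3 * m for m in mu]
    loss = [abs(m + rng.gauss(0.0, 1.5 * s)) for m, s in zip(mu, sigma)]
    return mu, sigma, loss


tau = calibrate_level(*draw(2000), alpha=0.1)
test_mu, test_sigma, test_loss = draw(5000)
scores = [loss_score(m, s, tau) for m, s in zip(test_mu, test_sigma)]
coverage = sum(y <= q for y, q in zip(test_loss, scores)) / len(test_loss)
print(f"tau = {tau:.3f}, empirical P(loss <= score) = {coverage:.3f}")
```

Because tau is read from held-out PIT values, the coverage statement P(loss <= score) >= 1 - alpha rests on exchangeability of the data, not on the Gaussian engine being correct. A flagging rule in the spirit of the abstract would then mark inputs whose score exceeds a chosen loss threshold.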

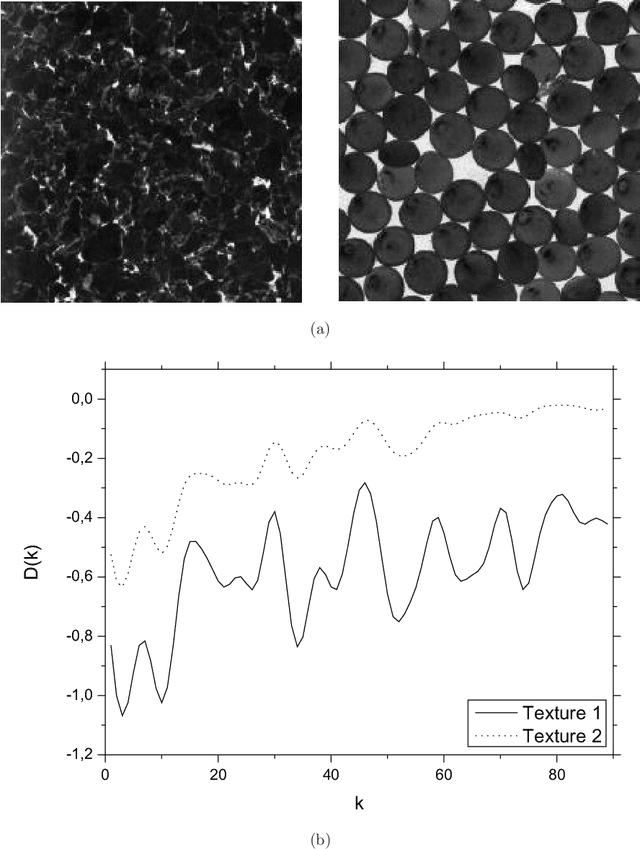

Enhancing Volumetric Bouligand-Minkowski Fractal Descriptors by using Functional Data Analysis

Jan 15, 2012

Abstract: This work proposes and studies the concept of a Functional Data Analysis (FDA) transform, applying it to improve the performance of volumetric Bouligand-Minkowski fractal descriptors. The proposed transform essentially maps the descriptors, originally defined in the space where the fractal dimension is computed, into the space of coefficients of their functional data representation. The transformed descriptors are then used in texture classification problems. The improvement provided by the FDA transform is measured by comparing the transformed and original descriptors in terms of correct classification rate on well-known datasets.
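The abstract does not name the functional basis or the exact descriptor pipeline. The sketch below assumes a common volumetric Bouligand-Minkowski setup (the gray-level image mapped to 3D points, descriptors taken as log V(r) of the dilated volume) and a Legendre polynomial basis over rescaled log r. The helpers `bouligand_minkowski_log_volumes` and `fda_transform` are hypothetical, and the brute-force distance computation is only meant for tiny inputs.

```python
import numpy as np
from numpy.polynomial import legendre


def bouligand_minkowski_log_volumes(image, radii):
    """Hypothetical helper: log V(r) of the dilated gray-level surface.

    The image is mapped to 3D points (row, col, intensity); V(r) counts the
    integer voxels within Euclidean distance r of that point set.
    """
    rows, cols = np.mgrid[0:image.shape[0], 0:image.shape[1]]
    points = np.column_stack([rows.ravel(), cols.ravel(), image.ravel()]).astype(float)
    margin = int(np.ceil(max(radii)))
    # Voxel grid enclosing the surface plus a margin of the largest radius.
    lo = points.min(axis=0) - margin
    hi = points.max(axis=0) + margin
    axes = [np.arange(a, b + 1) for a, b in zip(lo, hi)]
    grid = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1).reshape(-1, 3)
    # Brute-force nearest-point distance for every voxel (fine for tiny inputs).
    d2 = ((grid[:, None, :] - points[None, :, :]) ** 2).sum(axis=-1)
    dist = np.sqrt(d2.min(axis=1))
    volumes = np.array([np.count_nonzero(dist <= r) for r in radii], dtype=float)
    return np.log(volumes)


def fda_transform(log_volumes, radii, degree):
    """Hypothetical FDA transform: Legendre coefficients of the descriptor curve."""
    t = np.log(np.asarray(radii, dtype=float))
    t = 2.0 * (t - t.min()) / (t.max() - t.min()) - 1.0  # rescale to [-1, 1]
    # Least-squares fit; the coefficients replace the original descriptors.
    return legendre.legfit(t, log_volumes, degree)


rng = np.random.default_rng(0)
image = rng.integers(0, 8, size=(8, 8))  # toy 8x8 texture with 8 gray levels
radii = np.array([1.0, 1.5, 2.0, 2.5, 3.0])
log_v = bouligand_minkowski_log_volumes(image, radii)
coeffs = fda_transform(log_v, radii, degree=2)
print("original descriptors log V(r):", np.round(log_v, 3))
print("FDA-transformed descriptors:  ", np.round(coeffs, 3))
```

Here five log-volume descriptors are summarized by three basis coefficients; with `degree=len(radii) - 1` the fit interpolates the curve exactly, so the degree controls how much the transform smooths and compresses the descriptors before classification.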
