Krithika Iyer

Jack

The Llama 4 Herd: Architecture, Training, Evaluation, and Deployment Notes

Jan 15, 2026

Abstract:This document consolidates publicly reported technical details about Meta's Llama 4 model family. It summarizes the following:

(i) released variants (Scout and Maverick) and the broader herd context, including the previewed Behemoth teacher model;
(ii) architectural characteristics beyond a high-level MoE description, covering routed/shared-expert structure, early-fusion multimodality, and long-context design elements reported for Scout (iRoPE and length-generalization strategies);
(iii) training disclosures spanning pre-training, mid-training for long-context extension, and post-training methodology (lightweight SFT, online RL, and lightweight DPO) as described in release materials;
(iv) developer-reported benchmark results for both base and instruction-tuned checkpoints; and
(v) practical deployment constraints observed across major serving environments, including provider-specific context limits and quantization packaging.

The manuscript also summarizes licensing obligations relevant to redistribution and derivative naming, and reviews publicly described safeguards and evaluation practices. The goal is to provide a compact technical reference for researchers and practitioners who need precise, source-backed facts about Llama 4.
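
The routed/shared-expert structure mentioned above can be illustrated with a toy layer. This is a minimal sketch under stated assumptions, not Meta's implementation:

- The softmax router, top-1 selection, and the names `moe_layer` and `softmax` are illustrative choices.
- The experts are toy lambdas, whereas real experts are feed-forward networks.

Every token passes through the shared expert. Exactly one routed expert's output is then added, scaled by its gate value.

```python
import math
from typing import Callable, List

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(logits: Vector) -> Vector:
    """Numerically stable softmax (an assumed gating function for this sketch)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(x: Vector, router: List[Vector], shared: Expert, routed: List[Expert]) -> Vector:
    """Hypothetical shared + top-1 routed expert layer for a single token vector x."""
    # One router logit per routed expert: a dot product with that expert's router row.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    gates = softmax(logits)
    k = max(range(len(gates)), key=gates.__getitem__)  # index of the top-1 routed expert
    shared_out = shared(x)
    routed_out = routed[k](x)
    # The shared expert always contributes; the selected routed expert is scaled by its gate.
    return [s + gates[k] * r for s, r in zip(shared_out, routed_out)]


if __name__ == "__main__":
    identity = lambda v: list(v)
    double = lambda v: [2.0 * a for a in v]
    negate = lambda v: [-a for a in v]
    router = [[1.0, 0.0], [0.0, 1.0]]  # toy router: expert 0 prefers dim 0, expert 1 dim 1
    print(moe_layer([3.0, 1.0], router, identity, [double, negate]))
```

Because only one routed expert runs per token, compute per token stays close to that of a single expert plus the shared one, even as the number of routed experts grows.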

MRI-to-CT Synthesis With Cranial Suture Segmentations Using A Variational Autoencoder Framework

Dec 29, 2025

Abstract:Quantifying normative pediatric cranial development and suture ossification is crucial for diagnosing and treating growth-related cephalic disorders. Computed tomography (CT) is widely used to evaluate cranial and sutural deformities; however, its ionizing radiation is contraindicated in children without significant abnormalities. Magnetic resonance imaging (MRI) offers radiation-free scans with superior soft-tissue contrast, but unlike CT, MRI cannot elucidate cranial sutures, estimate skull bone density, or assess cranial vault growth. This study proposes a deep-learning-driven pipeline that transforms T1-weighted MRIs of children aged 0.2 to 2 years into synthetic CTs (sCTs), predicts detailed cranial bone segmentations, generates suture probability heatmaps, and derives direct suture segmentations from those heatmaps. On our in-house pediatric data, sCTs achieved 99% structural similarity and a Fréchet inception distance of 1.01 relative to real CTs. Skull segmentation attained an average Dice coefficient of 85% across seven cranial bones, and suture segmentation achieved 80% Dice. Equivalence of skull and suture segmentations between sCTs and real CTs was confirmed using two one-sided tests (TOST, p < 0.05). To our knowledge, this is the first pediatric cranial CT synthesis framework to enable suture segmentation on sCTs derived from MRI, despite MRI's limited depiction of bone and sutures. By combining robust, domain-specific variational autoencoders, our method generates perceptually indistinguishable cranial sCTs from routine pediatric MRIs, bridging critical gaps in non-invasive cranial evaluation.
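
The Dice coefficient reported above measures overlap between a predicted mask and a reference mask. The sketch below computes it on flattened label maps in plain Python. It also averages the score across labels, as one would for the seven cranial bones. Two details are our assumptions rather than details from the paper: the function names, and the convention of scoring two empty masks as 1.0.

```python
from typing import Dict, Iterable, Sequence


def dice(pred: Sequence[bool], truth: Sequence[bool]) -> float:
    """Dice = 2|P ∩ T| / (|P| + |T|) for two binary masks of equal length."""
    if len(pred) != len(truth):
        raise ValueError("masks must contain the same number of voxels")
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in truth if t)
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * intersection / total


def per_label_dice(pred_labels: Sequence[int], true_labels: Sequence[int],
                   labels: Iterable[int]) -> Dict[int, float]:
    """Dice for each label of a multi-label segmentation (e.g. one label per bone)."""
    return {
        lab: dice([p == lab for p in pred_labels], [t == lab for t in true_labels])
        for lab in labels
    }


if __name__ == "__main__":
    scores = per_label_dice([1, 2, 2, 0], [1, 2, 0, 0], labels=[1, 2])
    print(scores, sum(scores.values()) / len(scores))
```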

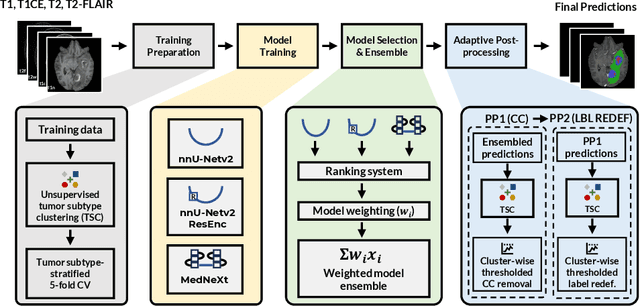

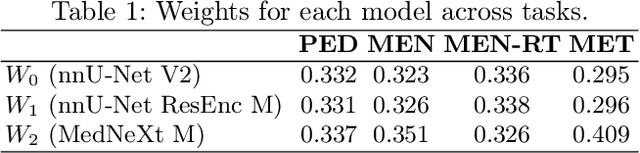

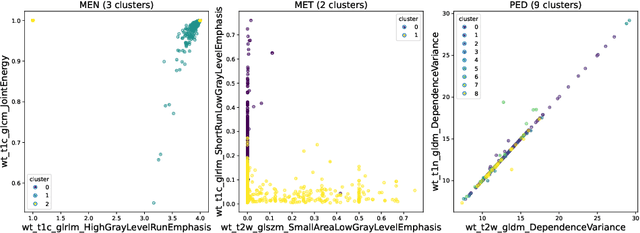

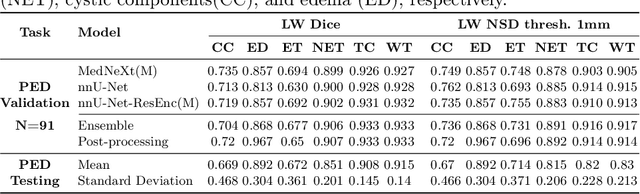

Adaptable Segmentation Pipeline for Diverse Brain Tumors with Radiomic-guided Subtyping and Lesion-Wise Model Ensemble

Dec 16, 2025

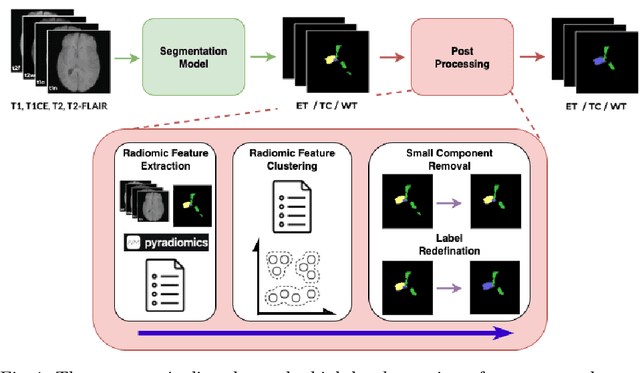

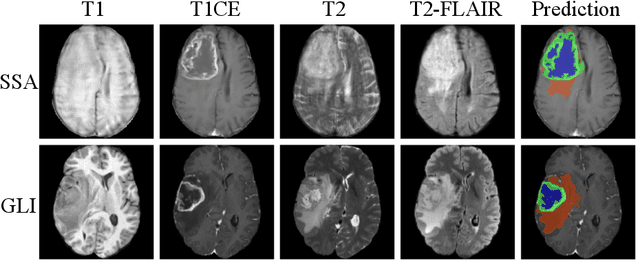

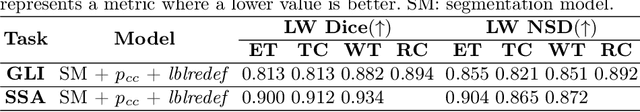

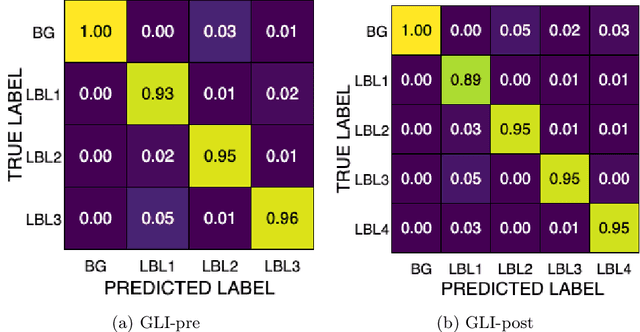

Abstract:Robust and generalizable segmentation of brain tumors on multi-parametric magnetic resonance imaging (MRI) remains difficult because tumor types differ widely. The BraTS 2025 Lighthouse Challenge benchmarks segmentation methods on diverse high-quality datasets of adult and pediatric tumors:

- multi-consortium international pediatric brain tumor segmentation (PED);
- preoperative meningioma tumor segmentation (MEN);
- meningioma radiotherapy segmentation (MEN-RT); and
- segmentation of pre- and post-treatment brain metastases (MET).

We present a flexible, modular, and adaptable pipeline that improves segmentation performance by selecting and combining state-of-the-art models and applying tumor- and lesion-specific processing before and after training. Radiomic features extracted from MRI help detect the tumor subtype, enabling more balanced training. Custom lesion-level performance metrics determine the influence of each model in the ensemble and optimize post-processing that further refines the predictions, allowing the workflow to tailor every step to each case. On the BraTS testing sets, our pipeline achieved performance comparable to top-ranked algorithms across multiple challenges. These findings confirm that custom lesion-aware processing and model selection yield robust segmentations without locking the method to a specific network architecture. Our method has potential for quantitative tumor measurement in clinical practice, supporting diagnosis and prognosis.
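
One way to let lesion-level scores set each model's influence is a weighted average of voxel probabilities. Each model's weight is proportional to its lesion-wise validation score. The sketch below is a hypothetical illustration of that idea. The abstract does not specify how the weights are derived, so the proportional weighting rule, the 0.5 threshold, and the model names are all our assumptions.

```python
from typing import Dict, List


def ensemble_weights(lesion_scores: Dict[str, float]) -> Dict[str, float]:
    """Normalize per-model lesion-wise scores into weights that sum to 1."""
    total = sum(lesion_scores.values())
    if total <= 0:
        raise ValueError("need at least one positive lesion-wise score")
    return {name: score / total for name, score in lesion_scores.items()}


def weighted_ensemble(probs: Dict[str, List[float]], weights: Dict[str, float],
                      threshold: float = 0.5) -> List[int]:
    """Weighted average of per-voxel foreground probabilities, then thresholding."""
    n_voxels = len(next(iter(probs.values())))
    fused = [0.0] * n_voxels
    for name, p in probs.items():
        for i, value in enumerate(p):
            fused[i] += weights[name] * value
    return [1 if v >= threshold else 0 for v in fused]


if __name__ == "__main__":
    # Hypothetical lesion-wise Dice scores on a validation split for two models.
    w = ensemble_weights({"model_a": 0.75, "model_b": 0.25})
    probs = {"model_a": [0.9, 0.2, 0.6], "model_b": [0.1, 0.9, 0.0]}
    print(w, weighted_ensemble(probs, w))
```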

Improving Pre-trained Segmentation Models using Post-Processing

Dec 16, 2025

Abstract:Gliomas are the most common malignant brain tumors in adults and are among the most lethal. Despite aggressive treatment, median survival is less than 15 months. Accurate multiparametric MRI (mpMRI) tumor segmentation is critical for surgical planning, radiotherapy, and disease monitoring. While deep learning models have improved the accuracy of automated segmentation, large-scale pre-trained models generalize poorly and often underperform, producing systematic errors such as false positives, label swaps, and slice-to-slice discontinuities. These limitations are further compounded by unequal access to GPU resources and the growing environmental cost of large-scale model training. In this work, we propose adaptive post-processing techniques to refine the quality of glioma segmentations produced by large-scale pre-trained models developed for various tumor types. We demonstrate the techniques on multiple BraTS 2025 segmentation challenge tasks, improving the ranking metric by 14.9% for the sub-Saharan Africa challenge and 0.9% for the adult glioma challenge. This approach promotes a shift in brain tumor segmentation research from increasingly complex model architectures toward efficient, clinically aligned post-processing strategies that are precise, computationally fair, and sustainable.
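
False positives of the kind described above are often handled by discarding small connected components. The following is a generic sketch of that step on a 2D binary mask, using 4-connectivity and plain Python. It is not the authors' adaptive procedure. The `min_size` threshold is a hypothetical parameter that would be tuned per tumor type.

```python
from collections import deque
from typing import List

Mask = List[List[int]]


def remove_small_components(mask: Mask, min_size: int) -> Mask:
    """Keep only 4-connected foreground components with at least min_size pixels."""
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first search collects one connected component.
            component = []
            queue = deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(component) >= min_size:
                for y, x in component:
                    out[y][x] = 1
    return out


if __name__ == "__main__":
    mask = [
        [1, 1, 0, 0],
        [1, 1, 0, 1],  # the isolated pixel on the right is a likely false positive
        [0, 0, 0, 0],
    ]
    for row in remove_small_components(mask, min_size=2):
        print(row)
```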

Mesh2SSM++: A Probabilistic Framework for Unsupervised Learning of Statistical Shape Model of Anatomies from Surface Meshes

Feb 11, 2025

Abstract:Anatomy evaluation is crucial for understanding the physiological state, diagnosing abnormalities, and guiding medical interventions. Statistical shape modeling (SSM) is vital in this process. By enabling the extraction of quantitative morphological shape descriptors from MRI and CT scans, SSM provides comprehensive descriptions of anatomical variations within a population. However, the effectiveness of SSM in anatomy evaluation hinges on the quality and robustness of the shape models. While deep learning techniques show promise in addressing these challenges by learning complex nonlinear representations of shapes, existing models still have limitations and often require pre-established shape models for training. To overcome these issues, we propose Mesh2SSM++, a novel approach that learns to estimate correspondences from meshes in an unsupervised manner. This method leverages unsupervised, permutation-invariant representation learning to estimate how to deform a template point cloud into subject-specific meshes, forming a correspondence-based shape model. Additionally, our probabilistic formulation allows learning a population-specific template, reducing potential biases associated with template selection. A key feature of Mesh2SSM++ is its ability to quantify aleatoric uncertainty, which captures inherent data variability and is essential for ensuring reliable model predictions and robust decision-making in clinical tasks, especially under challenging imaging conditions. Through extensive validation across diverse anatomies, evaluation metrics, and downstream tasks, we demonstrate that Mesh2SSM++ outperforms existing methods. Its ability to operate directly on meshes, combined with computational efficiency and interpretability through its probabilistic framework, makes it an attractive alternative to traditional and deep learning-based SSM approaches.
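
Comparing a deformed template point cloud with a subject's mesh vertices requires a distance that ignores point ordering. The symmetric Chamfer distance is a common choice for this. The sketch below assumes squared Euclidean costs and a brute-force nearest-neighbor search. The abstract does not state which loss Mesh2SSM++ actually uses, so this illustrates the general technique only.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def _sq_dist(p: Point, q: Point) -> float:
    return sum((a - b) ** 2 for a, b in zip(p, q))


def chamfer_distance(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Symmetric Chamfer distance with squared Euclidean costs (brute-force nearest neighbors)."""
    if not a or not b:
        raise ValueError("both point sets must be non-empty")
    a_to_b = sum(min(_sq_dist(p, q) for q in b) for p in a) / len(a)
    b_to_a = sum(min(_sq_dist(q, p) for p in a) for q in b) / len(b)
    return a_to_b + b_to_a


if __name__ == "__main__":
    template = [(0.0, 0.0, 0.0)]
    mesh_vertices = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
    print(chamfer_distance(template, mesh_vertices))
    # Reordering points does not change the result (permutation invariance).
    print(chamfer_distance(template, list(reversed(mesh_vertices))))
```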

MORPH-LER: Log-Euclidean Regularization for Population-Aware Image Registration

Feb 04, 2025

Abstract:Spatial transformations that capture population-level morphological statistics are critical for medical image analysis. Commonly used smoothness regularizers for image registration fail to integrate population statistics, leading to anatomically inconsistent transformations. Inverse consistency regularizers promote geometric consistency but do not integrate population morphometrics. Methods that constrain deformations to a low-dimensional manifold address this; however, they prioritize reconstruction over interpretability and neglect diffeomorphic properties such as group composition and inverse consistency. We introduce MORPH-LER, a Log-Euclidean regularization framework for population-aware unsupervised image registration. MORPH-LER learns population morphometrics from spatial transformations to guide and regularize registration networks, ensuring anatomically plausible deformations. It features a bottleneck autoencoder that computes the principal logarithm of deformation fields via iterative square-root predictions, creating a linearized latent space that respects diffeomorphic properties and enforces inverse consistency. By integrating a registration network with a diffeomorphic autoencoder, MORPH-LER produces smooth, meaningful deformation fields. The framework offers two main contributions:

(1) a data-driven regularization strategy that incorporates population-level anatomical statistics to enhance transformation validity; and
(2) a linearized latent space that enables compact and interpretable deformation fields for efficient population morphometrics analysis.

We validate MORPH-LER across two families of deep learning-based registration networks, demonstrating its ability to produce anatomically accurate, computationally efficient, and statistically meaningful transformations on the OASIS-1 brain imaging dataset.
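
The diffeomorphic properties that MORPH-LER's latent space is meant to respect can be seen in a toy setting. As a simplification of dense fields, assume the stationary velocity field is just a 2×2 matrix, i.e. a linear deformation. The deformation is then the matrix exponential of that velocity, computed here by scaling and squaring. Negating the velocity yields the inverse deformation, which is the inverse-consistency property the abstract refers to. The function names are illustrative and not taken from the paper.

```python
import math
from typing import List

Mat = List[List[float]]
IDENTITY: Mat = [[1.0, 0.0], [0.0, 1.0]]


def matmul(a: Mat, b: Mat) -> Mat:
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def expm(v: Mat, n_squarings: int = 8, n_terms: int = 8) -> Mat:
    """exp(V) via scaling and squaring: exp(V) = exp(V / 2^n) ^ (2^n)."""
    scale = 2.0 ** n_squarings
    x = [[v[i][j] / scale for j in range(2)] for i in range(2)]
    # Truncated Taylor series of exp(X) for the small scaled matrix X; term holds X^k / k!.
    result = [row[:] for row in IDENTITY]
    term = [row[:] for row in IDENTITY]
    for k in range(1, n_terms + 1):
        term = [[t / k for t in row] for row in matmul(term, x)]
        result = [[result[i][j] + term[i][j] for j in range(2)] for i in range(2)]
    # Undo the scaling by squaring n times.
    for _ in range(n_squarings):
        result = matmul(result, result)
    return result


if __name__ == "__main__":
    t = 0.5
    velocity = [[0.0, -t], [t, 0.0]]  # generator of a rotation by t radians
    phi = expm(velocity)
    phi_inv = expm([[-a for a in row] for row in velocity])
    print(phi)
    print([[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]])  # reference rotation
    print(matmul(phi, phi_inv))  # approximately the identity
```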

LEDA: Log-Euclidean Diffeomorphic Autoencoder for Efficient Statistical Analysis of Diffeomorphism

Dec 20, 2024

Abstract:Image registration is a core task in computational anatomy that establishes correspondences between images. Invertible deformable registration, which computes a deformation field and handles complex, non-linear transformations, is essential for tracking anatomical variations, especially in neuroimaging applications where inter-subject differences and longitudinal changes are key. The non-linearity of deformation fields makes their statistical analysis challenging. Moreover, traditional approaches for analyzing them are computationally expensive, sensitive to initialization, and prone to numerical errors, especially when the deformation is far from the identity. To address these limitations, we propose the Log-Euclidean Diffeomorphic Autoencoder (LEDA), an innovative framework designed to compute the principal logarithm of deformation fields by efficiently predicting consecutive square roots. LEDA operates within a linearized latent space that adheres to the diffeomorphism group action laws, enhancing our model's robustness and applicability. We also introduce a loss function to enforce inverse consistency, ensuring accurate latent representations of deformation fields. Extensive experiments with the OASIS-1 dataset demonstrate the effectiveness of LEDA in accurately modeling and analyzing complex non-linear deformations while maintaining inverse consistency. Additionally, we evaluate its ability to capture and incorporate clinical variables, enhancing its relevance for clinical applications.
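
LEDA's key computation, a principal logarithm obtained from consecutive square roots, rests on the inverse scaling-and-squaring identity log(A) = 2^n log(A^{1/2^n}). In LEDA, a network predicts the square roots of dense deformation fields. The toy sketch below works differently, under three assumptions of ours:

- it uses a 2×2 matrix instead of a deformation field;
- it computes the square roots exactly with the Denman-Beavers iteration;
- it then applies a short series for the logarithm near the identity.

The iteration counts and function names are also our choices.

```python
import math
from typing import List

Mat = List[List[float]]
IDENTITY: Mat = [[1.0, 0.0], [0.0, 1.0]]


def matmul(a: Mat, b: Mat) -> Mat:
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def inv2(m: Mat) -> Mat:
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    return [[m[1][1] / det, -m[0][1] / det], [-m[1][0] / det, m[0][0] / det]]


def sqrtm(a: Mat, iters: int = 30) -> Mat:
    """Principal square root via Denman-Beavers (Y -> A^{1/2}, Z -> A^{-1/2})."""
    y, z = [row[:] for row in a], [row[:] for row in IDENTITY]
    for _ in range(iters):
        y_inv, z_inv = inv2(y), inv2(z)
        y = [[0.5 * (y[i][j] + z_inv[i][j]) for j in range(2)] for i in range(2)]
        z = [[0.5 * (z[i][j] + y_inv[i][j]) for j in range(2)] for i in range(2)]
    return y


def logm(a: Mat, n_roots: int = 16) -> Mat:
    """log(A) = 2^n log(A^{1/2^n}); near I, log(I + X) ~ X - X^2/2 + X^3/3."""
    r = a
    for _ in range(n_roots):  # consecutive square roots pull A toward the identity
        r = sqrtm(r)
    x = [[r[i][j] - IDENTITY[i][j] for j in range(2)] for i in range(2)]
    x2 = matmul(x, x)
    x3 = matmul(x2, x)
    scale = 2.0 ** n_roots
    return [[scale * (x[i][j] - x2[i][j] / 2.0 + x3[i][j] / 3.0) for j in range(2)]
            for i in range(2)]


if __name__ == "__main__":
    t = 0.5
    rotation = [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]
    print(logm(rotation))  # approximately [[0, -0.5], [0.5, 0]]
```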

Automated Red Teaming with GOAT: the Generative Offensive Agent Tester

Oct 02, 2024

Abstract:Red teaming assesses how large language models (LLMs) can produce content that violates norms, policies, and rules set during their safety training. However, most existing automated methods in the literature are not representative of the way humans tend to interact with AI models. Common users of AI models may not have advanced knowledge of adversarial machine learning methods or access to model internals, and they do not spend a lot of time crafting a single highly effective adversarial prompt. Instead, they are likely to make use of techniques commonly shared online and exploit the multiturn conversational nature of LLMs. While manual testing addresses this gap, it is an inefficient and often expensive process. To address these limitations, we introduce the Generative Offensive Agent Tester (GOAT), an automated agentic red teaming system that simulates plain language adversarial conversations while leveraging multiple adversarial prompting techniques to identify vulnerabilities in LLMs. We instantiate GOAT with 7 red teaming attacks by prompting a general-purpose model in a way that encourages reasoning through the choices of methods available, the current target model's response, and the next steps. Our approach is designed to be extensible and efficient, allowing human testers to focus on exploring new areas of risk while automation covers the scaled adversarial stress-testing of known risk territory. We present the design and evaluation of GOAT, demonstrating its effectiveness in identifying vulnerabilities in state-of-the-art LLMs, with an ASR@10 of 97% against Llama 3.1 and 88% against GPT-4 on the JailbreakBench dataset.
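
ASR@10 summarizes how often an attacker succeeds within a budget of attempts. The abstract does not spell out the exact protocol, so the sketch below assumes one reading of it: the fraction of attack goals for which at least one of the first k attempts is judged a violation. The judge itself is left abstract here, and `asr_at_k` and the goal names are hypothetical.

```python
from typing import Dict, List


def asr_at_k(outcomes: Dict[str, List[bool]], k: int) -> float:
    """Fraction of goals with at least one judged success among the first k attempts."""
    if not outcomes or k < 1:
        raise ValueError("need at least one goal and k >= 1")
    hits = sum(1 for attempts in outcomes.values() if any(attempts[:k]))
    return hits / len(outcomes)


if __name__ == "__main__":
    # Hypothetical per-goal judge verdicts, one bool per attack attempt.
    results = {"goal_a": [False, True], "goal_b": [False, False], "goal_c": [True]}
    print(asr_at_k(results, k=1), asr_at_k(results, k=2))
```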

The Llama 3 Herd of Models

Jul 31, 2024

Abstract:Modern artificial intelligence (AI) systems are powered by foundation models. This paper presents a new set of foundation models, called Llama 3. It is a herd of language models that natively support multilinguality, coding, reasoning, and tool usage. Our largest model is a dense Transformer with 405B parameters and a context window of up to 128K tokens. This paper presents an extensive empirical evaluation of Llama 3. We find that Llama 3 delivers comparable quality to leading language models such as GPT-4 on a plethora of tasks. We publicly release Llama 3, including pre-trained and post-trained versions of the 405B parameter language model and our Llama Guard 3 model for input and output safety. The paper also presents the results of experiments in which we integrate image, video, and speech capabilities into Llama 3 via a compositional approach. We observe this approach performs competitively with the state-of-the-art on image, video, and speech recognition tasks. The resulting models are not yet being broadly released as they are still under development.
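
A 128K-token context window has a direct memory cost at inference time. A dense Transformer caches one key vector and one value vector per layer, per key/value head, and per token. The sketch below does that arithmetic. The example configuration is our assumption about the 405B model and should be checked against the released configuration:

- 126 layers;
- 8 key/value heads of dimension 128;
- 16-bit cache entries.

The formula also ignores framework overhead and cache quantization.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int, n_tokens: int,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values (hence the factor 2) for one sequence."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value


if __name__ == "__main__":
    # Assumed 405B-style configuration; verify against the released model config.
    total = kv_cache_bytes(n_layers=126, n_kv_heads=8, head_dim=128, n_tokens=128 * 1024)
    print(f"{total / 2**30:.1f} GiB per 128K-token sequence")
```

Under these assumed values, the cache for one full-length sequence comes to 63 GiB in 16-bit precision.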

Probabilistic 3D Correspondence Prediction from Sparse Unsegmented Images

Jul 02, 2024

Abstract:The study of physiology demonstrates that the form (shape) of anatomical structures dictates their functions, and analyzing the form of anatomies plays a crucial role in clinical research. Statistical shape modeling (SSM) is a widely used tool for quantitative analysis of anatomical forms, aiding in characterizing and identifying differences within a population of subjects. Despite its utility, the conventional SSM construction pipeline is often complex and time-consuming. Additionally, reliance on linearity assumptions further limits the model's ability to capture clinically relevant variations. Recent deep learning solutions enable the direct inference of SSM from unsegmented medical images, streamlining the process and improving accessibility. However, these image-based SSM methods do not adequately account for situations where imaging data quality is poor or only sparse information is available. Moreover, quantifying aleatoric uncertainty, which represents inherent data variability, is crucial when deploying deep learning for clinical tasks to ensure reliable model predictions and robust decision-making, especially in challenging imaging conditions. Therefore, we propose SPI-CorrNet, a unified model that predicts 3D correspondences from sparse imaging data. It leverages a teacher network to regularize feature learning and quantifies data-dependent aleatoric uncertainty by adapting the network to predict intrinsic input variances. Experiments on the LGE MRI left atrium dataset and the Abdomen CT-1K liver dataset demonstrate that our technique enhances the accuracy and robustness of sparse image-driven SSM.
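
Predicting intrinsic input variances is commonly trained with a heteroscedastic Gaussian negative log-likelihood. In this loss, the network outputs a mean and a log-variance for each coordinate. The sketch below shows that standard loss with constant terms dropped. It illustrates the general technique and is not claimed to be SPI-CorrNet's exact objective. Predicting log-variance rather than variance is an assumed design choice that keeps the variance positive.

```python
import math
from typing import Sequence


def gaussian_nll(target: Sequence[float], mean: Sequence[float],
                 log_var: Sequence[float]) -> float:
    """Mean over coordinates of 0.5 * (log s^2 + (y - mu)^2 / s^2); 0.5*log(2*pi) omitted."""
    if not (len(target) == len(mean) == len(log_var)) or not target:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for y, mu, s in zip(target, mean, log_var):
        total += 0.5 * (s + (y - mu) ** 2 * math.exp(-s))
    return total / len(target)


if __name__ == "__main__":
    # A large residual costs less when the model also predicts a larger variance,
    # while the log-variance term stops it from inflating variance everywhere.
    print(gaussian_nll([2.0], [0.0], [0.0]))            # residual 2, variance 1
    print(gaussian_nll([2.0], [0.0], [math.log(4.0)]))  # residual 2, variance 4
```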