Khaled Shuaib

The Nonverbal Syntax Framework: An Evidence-Based Tiered System for Inferring Learner States from Observable Behavioral Cues

Apr 28, 2026

Abstract: Understanding learners' cognitive and affective states underpins adaptive educational systems and effective teaching. Although research links nonverbal cues to internal states, no framework calibrates them to evidence. We present the Nonverbal Syntax Framework, drawn from a systematic review of 908 studies and 17,043 cue-state mappings (Turaev et al., 2026). The framework addresses three challenges: terminological fragmentation (behaviors described inconsistently), evidence heterogeneity (single observations to replicated findings), and state ambiguity (similar patterns indicating multiple states). Normalization consolidated 5,537 state labels into 2,010 canonical states (63.7%) and 11,521 cues into 6,434 normalized cues (44.2%) across nine behavioral channels. Dual-evidence assessment separately evaluates Component Evidence (coverage of cues and states) and Relationship Evidence (independent studies per cue-state link). 52% of "Very High" relationships rest on one paper, so separation enables calibrated rather than overconfident inference from preliminary findings. The framework's four levels comprise a Cue Vocabulary of 6,434 indicators classified as observable/instrumental; State Clusters linking 2,010 states to indicative cues; State Profiles with multimodal behavioral signatures and actionable specifications; and Discriminative Analysis distinguishing 1,215 confusable state pairs. We identify 480 actionable R1-R4 relationships (three or more independent papers), the replicated core of six decades of research, covering 35.5% of mappings across 47 key learning states and 111 distinct indicators. The remaining 91.5% (9,653 single-paper findings) form exploratory hypotheses for replication. The framework gives researchers an empirical foundation for identifying gaps, practitioners evidence-based tools for state inference, and technologists validated features for multimodal detection.
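The percentages quoted for the normalization step are consistent with reductions in label count, that is, one minus the ratio of canonical to raw labels. A quick check under that reading (the function name `reduction_pct` is ours for illustration, not part of the framework):

```python
def reduction_pct(raw: int, canonical: int) -> float:
    """Percentage by which normalization shrinks a label set (assumed reading)."""
    return round((1 - canonical / raw) * 100, 1)

# Counts taken from the abstract.
state_reduction = reduction_pct(5537, 2010)   # raw state labels -> canonical states
cue_reduction = reduction_pct(11521, 6434)    # raw cues -> normalized cues
print(state_reduction, cue_reduction)
```

Both values match the figures in the abstract, which supports reading them as reductions rather than retention rates.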

SDN Flow Entry Management Using Reinforcement Learning

Sep 24, 2018

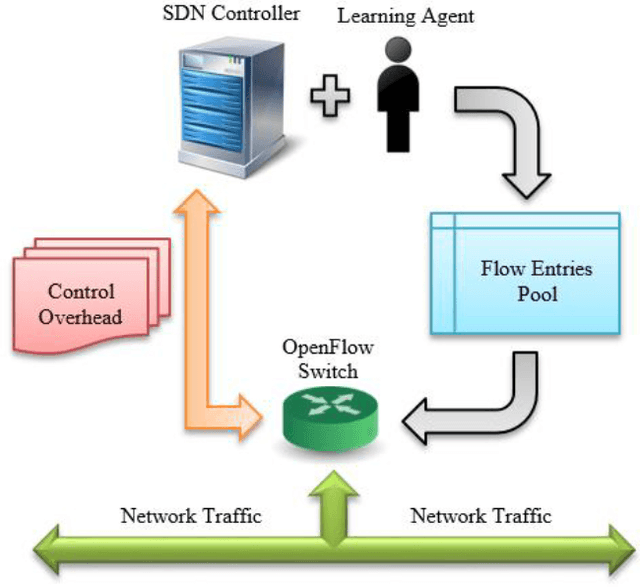

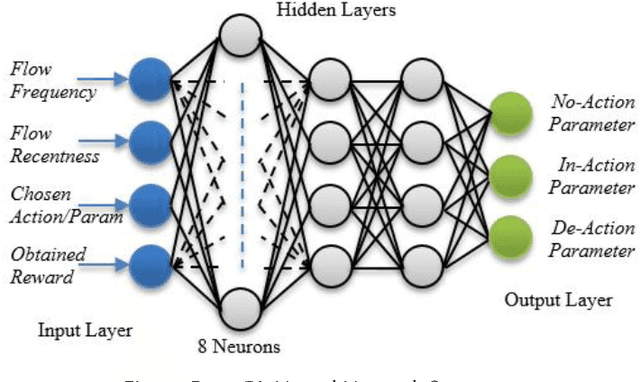

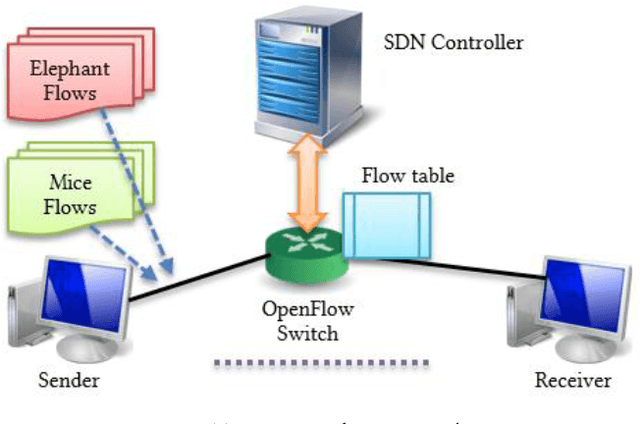

Abstract: Modern information technology services largely depend on cloud infrastructures. These infrastructures are built on top of datacenter networks (DCNs) constructed with high-speed links, fast switching gear, and redundancy to offer better flexibility and resiliency. In this environment, network traffic includes long-lived (elephant) and short-lived (mice) flows with partitioned and aggregated traffic patterns. Although SDN-based approaches can efficiently allocate networking resources for such flows, the overhead due to network reconfiguration can be significant. Given the limited capacity of the Ternary Content-Addressable Memory (TCAM) deployed in an OpenFlow-enabled switch, it is crucial to determine which forwarding rules should remain in the flow table and which should be processed by the SDN controller when a table-miss occurs on the switch. The aim is to identify the flow entries that reduce the long-term control plane overhead between the controller and the switches. To achieve this goal, we propose a machine learning technique that uses two variations of reinforcement learning (RL) algorithms: the first is based on a traditional reinforcement learning algorithm, while the second is based on deep reinforcement learning. Emulation results using the RL algorithm show around 60% improvement in reducing the long-term control plane overhead and around 14% improvement in the table-hit ratio compared to the Multiple Bloom Filters (MBF) method, given a fixed-size flow table of 4 KB.
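To make the idea concrete, here is a minimal sketch of tabular Q-learning applied to flow-table eviction. It is not the authors' algorithm: the state (capped hit count of the least recently used entry), the two actions (evict the least recently used or the least frequently used entry), the reward (+1 per table hit, -1 per table-miss), and the synthetic elephant/mice traffic mix are all assumptions chosen for illustration.

```python
import random
from collections import OrderedDict, defaultdict

EVICT_LRU, EVICT_LFU = 0, 1

def run(num_packets=20000, capacity=8, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Toy Q-learning agent choosing an eviction rule on each table-miss."""
    rng = random.Random(seed)
    table = OrderedDict()                 # flow id -> hit count; first key is least recently used
    q = defaultdict(lambda: [0.0, 0.0])   # state -> [Q(evict LRU), Q(evict LFU)]
    hits = misses = 0
    prev = None                           # (state, action) of the last eviction
    reward = 0.0                          # reward accumulated since that eviction

    for _ in range(num_packets):
        # Assumed traffic: 70% of packets belong to 4 elephant flows, the rest to 60 mice.
        flow = rng.randrange(4) if rng.random() < 0.7 else rng.randrange(4, 64)
        if flow in table:
            hits += 1
            reward += 1.0
            table[flow] += 1
            table.move_to_end(flow)       # mark as most recently used
            continue

        misses += 1
        reward -= 1.0                     # table-miss: one controller round trip
        if len(table) >= capacity:
            lru = next(iter(table))
            state = min(table[lru], 3)    # coarse state: capped hit count of the LRU entry
            if prev is not None:          # Q-learning update for the previous decision
                s, a = prev
                q[s][a] += alpha * (reward + gamma * max(q[state]) - q[s][a])
            if rng.random() < epsilon:    # epsilon-greedy exploration
                action = rng.randrange(2)
            else:
                action = EVICT_LRU if q[state][0] >= q[state][1] else EVICT_LFU
            victim = lru if action == EVICT_LRU else min(table, key=table.get)
            del table[victim]
            prev, reward = (state, action), 0.0
        table[flow] = 1                   # install the rule for the new flow

    return hits / (hits + misses), dict(q)

hit_ratio, q_table = run()
print(f"table-hit ratio: {hit_ratio:.3f}")
```

The reward is accumulated between consecutive evictions so that each decision is credited with the hits and misses that follow it, which loosely mirrors the goal of reducing long-term rather than immediate control plane overhead.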
