Kento Sato

ROIX-Comp: Optimizing X-ray Computed Tomography Imaging Strategy for Data Reduction and Reconstruction

Feb 17, 2026Abstract:In high-performance computing (HPC) environments, particularly in synchrotron radiation facilities, vast amounts of X-ray images are generated. Processing large-scale X-ray Computed Tomography (X-CT) datasets presents significant computational and storage challenges due to their high dimensionality and data volume. Traditional approaches often require extensive storage capacity and high transmission bandwidth, limiting real-time processing capabilities and workflow efficiency. To address these constraints, we introduce a region-of-interest (ROI)-driven extraction framework (ROIX-Comp) that intelligently compresses X-CT data by identifying and retaining only essential features. Our work reduces data volume while preserving critical information for downstream processing tasks. At pre-processing stage, we utilize error-bounded quantization to reduce the amount of data to be processed and therefore improve computational efficiencies. At the compression stage, our methodology combines object extraction with multiple state-of-the-art lossless and lossy compressors, resulting in significantly improved compression ratios. We evaluated this framework against seven X-CT datasets and observed a relative compression ratio improvement of 12.34x compared to the standard compression.

MLPerf HPC: A Holistic Benchmark Suite for Scientific Machine Learning on HPC Systems

Oct 26, 2021

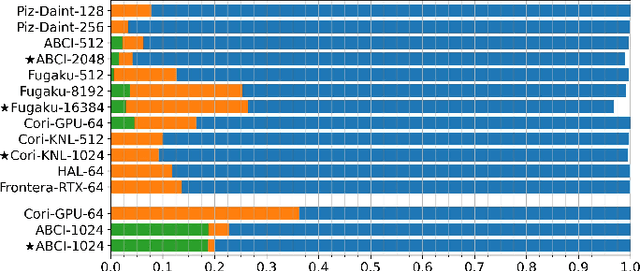

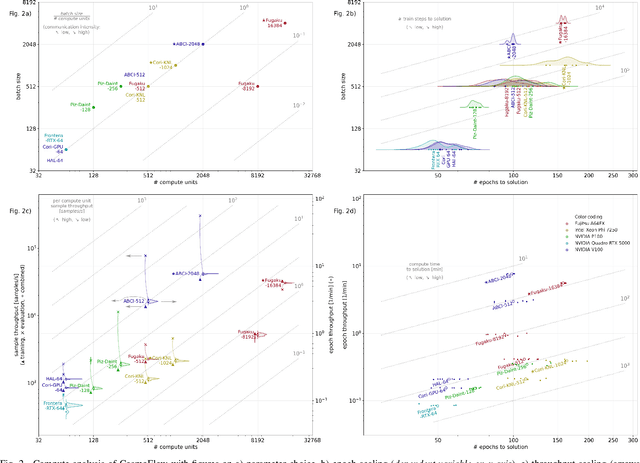

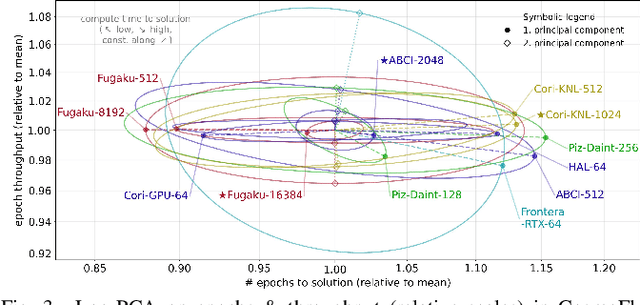

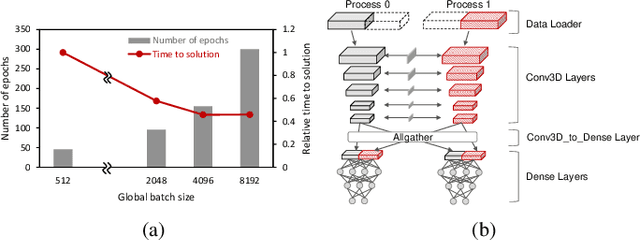

Abstract:Scientific communities are increasingly adopting machine learning and deep learning models in their applications to accelerate scientific insights. High performance computing systems are pushing the frontiers of performance with a rich diversity of hardware resources and massive scale-out capabilities. There is a critical need to understand fair and effective benchmarking of machine learning applications that are representative of real-world scientific use cases. MLPerf is a community-driven standard to benchmark machine learning workloads, focusing on end-to-end performance metrics. In this paper, we introduce MLPerf HPC, a benchmark suite of large-scale scientific machine learning training applications driven by the MLCommons Association. We present the results from the first submission round, including a diverse set of some of the world's largest HPC systems. We develop a systematic framework for their joint analysis and compare them in terms of data staging, algorithmic convergence, and compute performance. As a result, we gain a quantitative understanding of optimizations on different subsystems such as staging and on-node loading of data, compute-unit utilization, and communication scheduling, enabling overall $>10 \times$ (end-to-end) performance improvements through system scaling. Notably, our analysis shows a scale-dependent interplay between the dataset size, a system's memory hierarchy, and training convergence that underlines the importance of near-compute storage. To overcome the data-parallel scalability challenge at large batch sizes, we discuss specific learning techniques and hybrid data-and-model parallelism that are effective on large systems. We conclude by characterizing each benchmark with respect to low-level memory, I/O, and network behavior to parameterize extended roofline performance models in future rounds.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge