Kenji Kubo

C-voting: Confidence-Based Test-Time Voting without Explicit Energy Functions

Apr 15, 2026

Abstract:Neural network models with latent recurrent processing, where identical layers are recursively applied to the latent state, have gained attention as promising models for performing reasoning tasks. A strength of such models is that they enable test-time scaling, where the models can enhance their performance in the test phase without additional training. Models such as the Hierarchical Reasoning Model (HRM) and Artificial Kuramoto Oscillatory Neurons (AKOrN) can facilitate deeper reasoning by increasing the number of recurrent steps, thereby enabling the completion of challenging tasks, including Sudoku, Maze solving, and AGI benchmarks. In this work, we introduce confidence-based voting (C-voting), a test-time scaling strategy designed for recurrent models with multiple latent candidate trajectories. Initializing the latent state with multiple candidates using random variables, C-voting selects the one maximizing the average of top-1 probabilities of the predictions, reflecting the model's confidence. Additionally, it yields 4.9% higher accuracy on Sudoku-hard than the energy-based voting strategy, which is specific to models with explicit energy functions. An essential advantage of C-voting is its applicability: it can be applied to recurrent models without requiring an explicit energy function. Finally, we introduce a simple attention-based recurrent model with randomized initial values named ItrSA++, and demonstrate that when combined with C-voting, it outperforms HRM on Sudoku-extreme (95.2% vs. 55.0%) and Maze (78.6% vs. 74.5%) tasks.
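The selection rule described in the abstract (pick the candidate whose predictions have the highest average top-1 probability) can be illustrated with a minimal sketch. This is not the authors' implementation: the function name `c_vote`, the nested-list input layout (candidates × positions × classes), and the toy numbers are all assumptions made for illustration, and the recurrent model that would produce these probabilities is omitted.

```python
def c_vote(candidate_probs):
    """Pick the candidate with the highest mean top-1 probability.

    candidate_probs: hypothetical layout, a list of candidates, where each
    candidate is a list of per-position class-probability lists.
    Returns (best_index, predicted_classes_of_best, per_candidate_scores).
    """
    scores = []
    for candidate in candidate_probs:
        # Top-1 probability at each output position (e.g. each Sudoku cell).
        top1 = [max(position) for position in candidate]
        # Confidence score: average of the top-1 probabilities.
        scores.append(sum(top1) / len(top1))

    # Index of the most confident candidate.
    best = max(range(len(scores)), key=scores.__getitem__)

    # Predicted class per position for the selected candidate.
    predictions = [
        max(range(len(position)), key=position.__getitem__)
        for position in candidate_probs[best]
    ]
    return best, predictions, scores


# Toy example: two candidates, two positions, two classes (made-up numbers).
toy_probs = [
    [[0.6, 0.4], [0.5, 0.5]],  # less confident candidate
    [[0.9, 0.1], [0.2, 0.8]],  # more confident candidate
]
best, predictions, scores = c_vote(toy_probs)
```

In this toy case the second candidate is selected because its predictions are more peaked. In practice each candidate would come from running the recurrent model from a different random initial latent state.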

Quantum tangent kernel

Nov 04, 2021

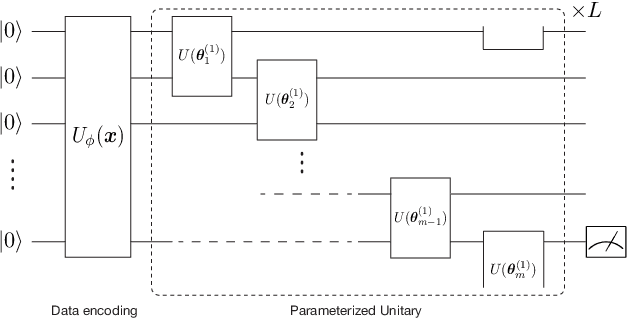

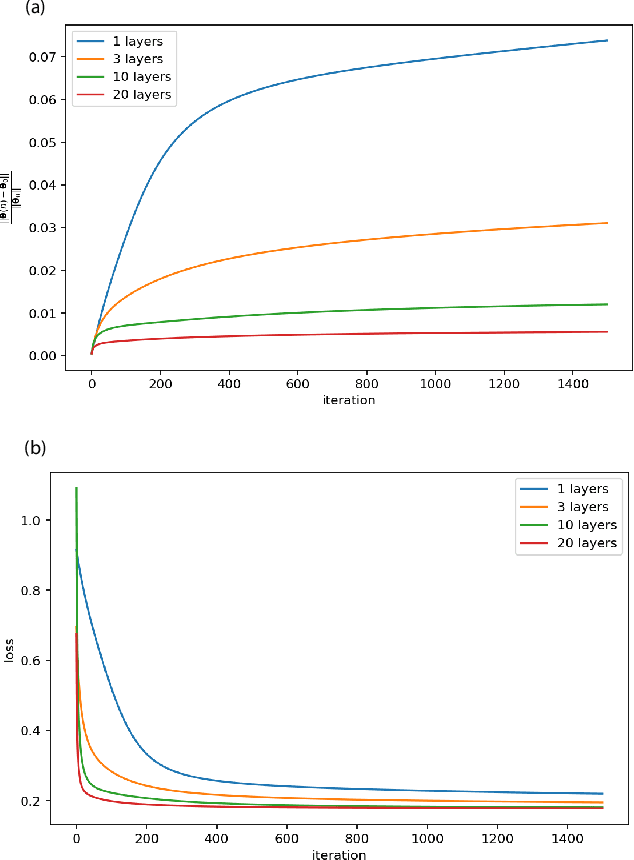

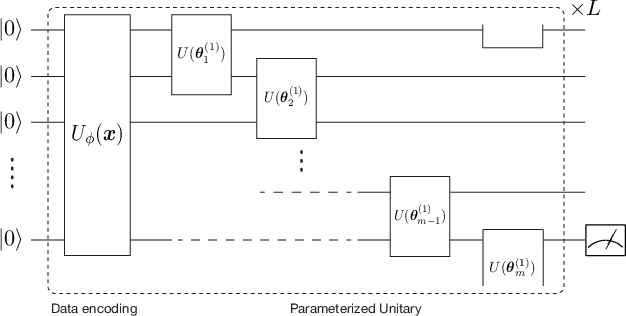

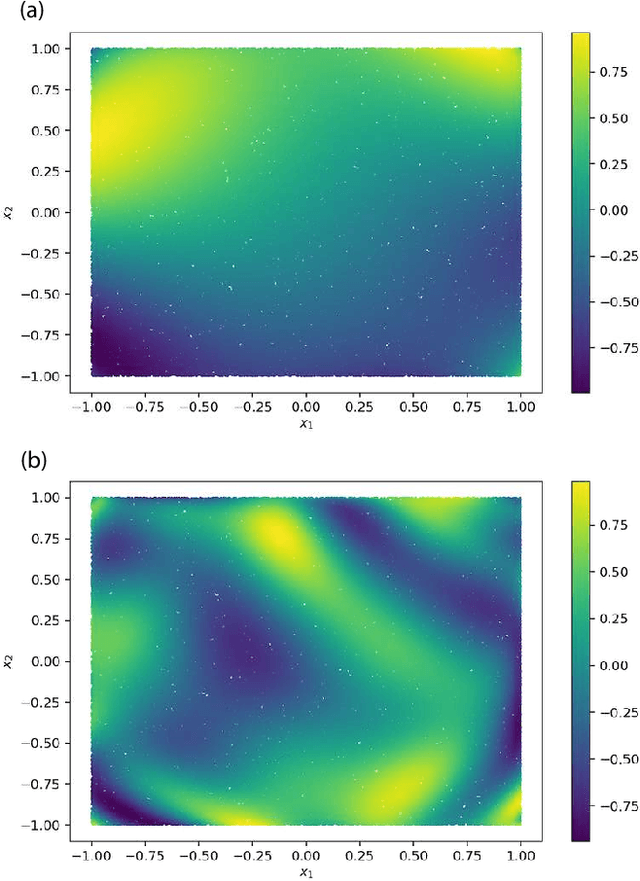

Abstract:The quantum kernel method is one of the key approaches to quantum machine learning, with the advantages that it does not require optimization and is theoretically simple. By virtue of these properties, several experimental demonstrations and discussions of its potential advantages have been developed so far. However, as is the case in classical machine learning, not all quantum machine learning models can be regarded as kernel methods. In this work, we explore a quantum machine learning model with a deep parameterized quantum circuit and aim to go beyond the conventional quantum kernel method. In this case, the representation power and performance are expected to be enhanced, while the training process might be a bottleneck because of the barren plateau issue. However, we find that the parameters of a deep enough quantum circuit do not move much from their initial values during training, allowing a first-order expansion with respect to the parameters. This behavior is similar to the neural tangent kernel in the classical literature, and such deep variational quantum machine learning can be described by another emergent kernel, the quantum tangent kernel. Numerical simulations show that the proposed quantum tangent kernel outperforms the conventional quantum kernel method for an ansatz-generated dataset. This work provides a new direction beyond the conventional quantum kernel method and explores the potential power of quantum machine learning with deep parameterized quantum circuits.
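The first-order expansion mentioned in the abstract can be written out in the generic tangent-kernel form. The notation here is an assumption for illustration, not taken from the paper: $f(x;\theta)$ denotes the model output for input $x$ and circuit parameters $\theta$, and $\theta_0$ the parameters at initialization. If $\theta$ stays close to $\theta_0$ during training, then

$$
f(x;\theta) \approx f(x;\theta_0) + \nabla_\theta f(x;\theta_0)^{\top} (\theta - \theta_0),
$$

so the model is approximately linear in $\theta$, and the associated tangent kernel is the inner product of the parameter gradients at initialization:

$$
K(x, x') = \nabla_\theta f(x;\theta_0)^{\top} \, \nabla_\theta f(x';\theta_0).
$$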
