Ken Sakurada

Scale Estimation of Monocular SfM for a Multi-modal Stereo Camera

Oct 28, 2018

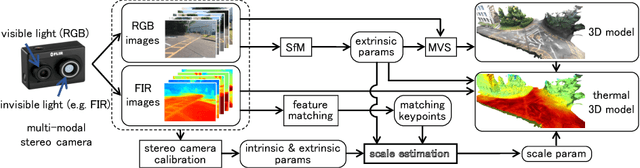

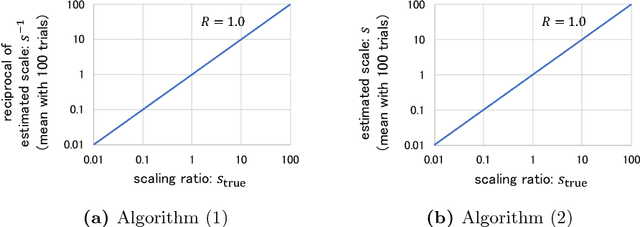

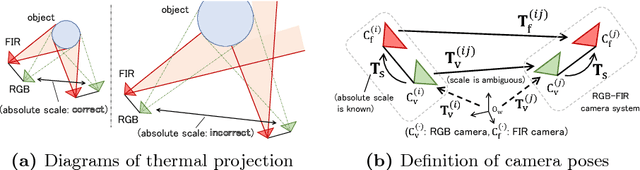

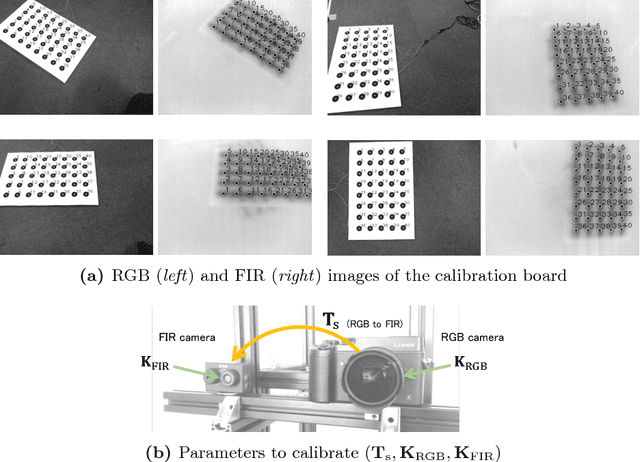

Abstract:This paper proposes a novel method of estimating the absolute scale of monocular SfM for a multi-modal stereo camera. In the fields of computer vision and robotics, scale estimation for monocular SfM has been widely investigated in order to simplify systems. This paper addresses the scale estimation problem for a stereo camera system in which two cameras capture different spectral images (e.g., RGB and FIR), whose feature points are difficult to directly match using descriptors. Furthermore, the number of matching points between FIR images can be comparatively small, owing to the low resolution and lack of thermal scene texture. To cope with these difficulties, the proposed method estimates the scale parameter using batch optimization, based on the epipolar constraint of a small number of feature correspondences between the invisible light images. The accuracy and numerical stability of the proposed method are verified by synthetic and real image experiments.

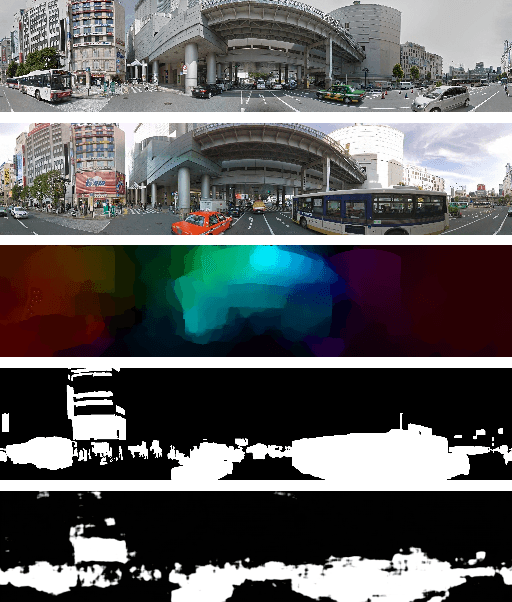

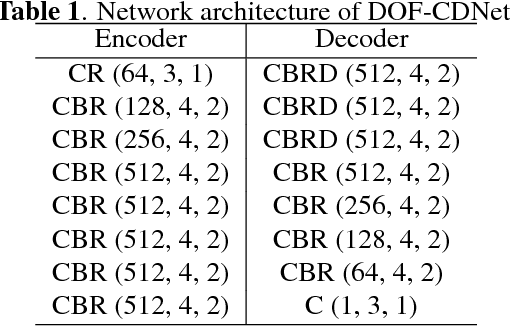

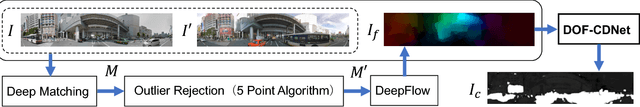

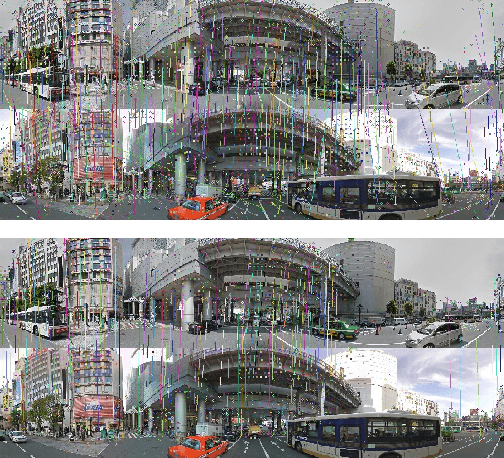

Dense Optical Flow based Change Detection Network Robust to Difference of Camera Viewpoints

Dec 08, 2017

Abstract:This paper presents a novel method for detecting scene changes from a pair of images with a difference of camera viewpoints using a dense optical flow based change detection network. In the case that camera poses of input images are fixed or known, such as with surveillance and satellite cameras, the pixel correspondence between the images captured at different times can be known. Hence, it is possible to comparatively accurately detect scene changes between the images by modeling the appearance of the scene. On the other hand, in case of cameras mounted on a moving object, such as ground and aerial vehicles, we must consider the spatial correspondence between the images captured at different times. However, it can be difficult to accurately estimate the camera pose or 3D model of a scene, owing to the scene changes or lack of imagery. To solve this problem, we propose a change detection convolutional neural network utilizing dense optical flow between input images to improve the robustness to the difference between camera viewpoints. Our evaluation based on the panoramic change detection dataset shows that the proposed method outperforms state-of-the-art change detection algorithms.

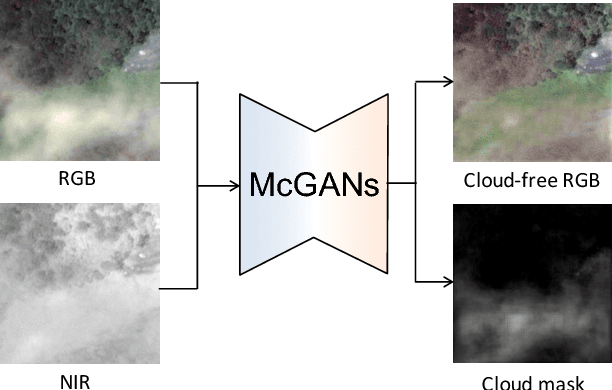

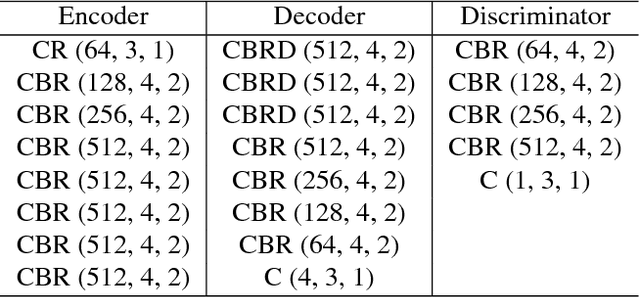

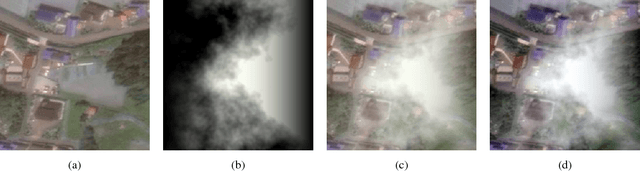

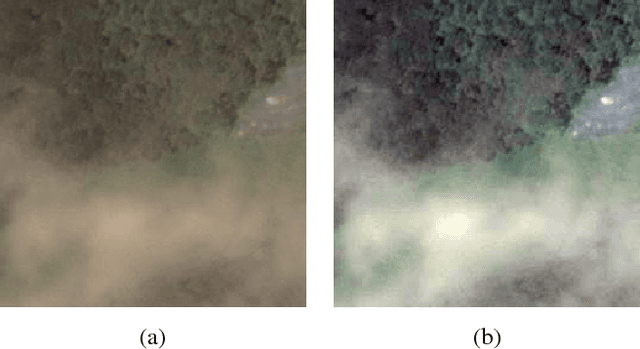

Filmy Cloud Removal on Satellite Imagery with Multispectral Conditional Generative Adversarial Nets

Oct 13, 2017

Abstract:In this paper, we propose a method for cloud removal from visible light RGB satellite images by extending the conditional Generative Adversarial Networks (cGANs) from RGB images to multispectral images. Satellite images have been widely utilized for various purposes, such as natural environment monitoring (pollution, forest or rivers), transportation improvement and prompt emergency response to disasters. However, the obscurity caused by clouds makes it unstable to monitor the situation on the ground with the visible light camera. Images captured by a longer wavelength are introduced to reduce the effects of clouds. Synthetic Aperture Radar (SAR) is such an example that improves visibility even the clouds exist. On the other hand, the spatial resolution decreases as the wavelength increases. Furthermore, the images captured by long wavelengths differs considerably from those captured by visible light in terms of their appearance. Therefore, we propose a network that can remove clouds and generate visible light images from the multispectral images taken as inputs. This is achieved by extending the input channels of cGANs to be compatible with multispectral images. The networks are trained to output images that are close to the ground truth using the images synthesized with clouds over the ground truth as inputs. In the available dataset, the proportion of images of the forest or the sea is very high, which will introduce bias in the training dataset if uniformly sampled from the original dataset. Thus, we utilize the t-Distributed Stochastic Neighbor Embedding (t-SNE) to improve the problem of bias in the training dataset. Finally, we confirm the feasibility of the proposed network on the dataset of four bands images, which include three visible light bands and one near-infrared (NIR) band.

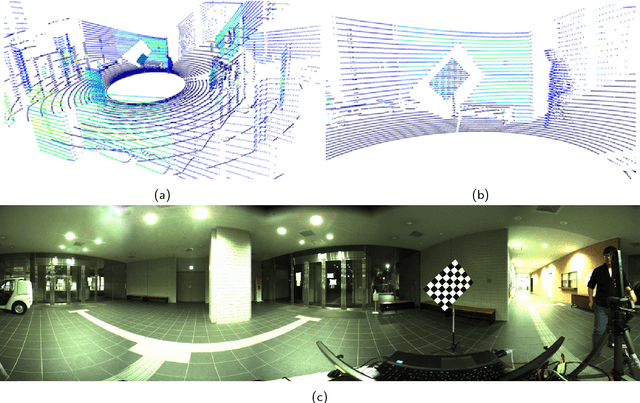

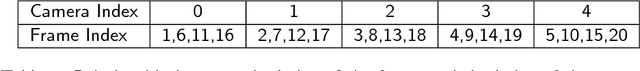

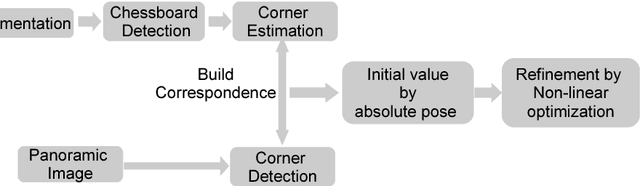

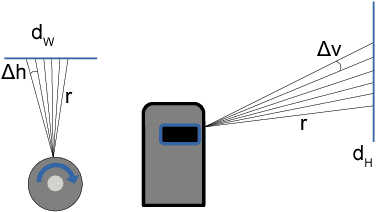

Reflectance Intensity Assisted Automatic and Accurate Extrinsic Calibration of 3D LiDAR and Panoramic Camera Using a Printed Chessboard

Aug 18, 2017

Abstract:This paper presents a novel method for fully automatic and convenient extrinsic calibration of a 3D LiDAR and a panoramic camera with a normally printed chessboard. The proposed method is based on the 3D corner estimation of the chessboard from the sparse point cloud generated by one frame scan of the LiDAR. To estimate the corners, we formulate a full-scale model of the chessboard and fit it to the segmented 3D points of the chessboard. The model is fitted by optimizing the cost function under constraints of correlation between the reflectance intensity of laser and the color of the chessboard's patterns. Powell's method is introduced for resolving the discontinuity problem in optimization. The corners of the fitted model are considered as the 3D corners of the chessboard. Once the corners of the chessboard in the 3D point cloud are estimated, the extrinsic calibration of the two sensors is converted to a 3D-2D matching problem. The corresponding 3D-2D points are used to calculate the absolute pose of the two sensors with Unified Perspective-n-Point (UPnP). Further, the calculated parameters are regarded as initial values and are refined using the Levenberg-Marquardt method. The performance of the proposed corner detection method from the 3D point cloud is evaluated using simulations. The results of experiments, conducted on a Velodyne HDL-32e LiDAR and a Ladybug3 camera under the proposed re-projection error metric, qualitatively and quantitatively demonstrate the accuracy and stability of the final extrinsic calibration parameters.

* 20 pages, submitted to the journal of Remote Sensing

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge