Junhyeong Kwon

OmniLight: One Model to Rule All Lighting Conditions

Apr 16, 2026

Abstract:Adverse lighting conditions, such as cast shadows and irregular illumination, pose significant challenges to computer vision systems by degrading visibility and color fidelity. Consequently, effective shadow removal and ambient light normalization (ALN) are critical for restoring underlying image content, improving perceptual quality, and facilitating robust performance in downstream tasks. However, while achieving state-of-the-art results on specific benchmarks is a primary goal in image restoration challenges, real-world applications often demand robust models capable of handling diverse domains. To address this, we present a comprehensive study on lighting-related image restoration by exploring two contrasting strategies. We leverage a robust framework for ALN, DINOLight, as a specialized baseline to exploit the characteristics of each individual dataset, and extend it to OmniLight, a generalized alternative incorporating our proposed Wavelet Domain Mixture-of-Experts (WD-MoE) that is trained across all provided datasets. Through a comparative analysis of these two methods, we discuss the impact of data distribution on the performance of specialized and unified architectures in lighting-related image restoration. Notably, both approaches secured top-tier rankings across all three lighting-related tracks in the NTIRE 2026 Challenge, demonstrating their outstanding perceptual quality and generalization capabilities. Our code is available at https://github.com/OBAKSA/Lighting-Restoration.

DINOLight: Robust Ambient Light Normalization with Self-supervised Visual Prior Integration

Mar 13, 2026

Abstract:This paper presents a new ambient light normalization framework, DINOLight, that integrates the self-supervised model DINOv2's image understanding capability into the restoration process as a visual prior. Ambient light normalization aims to restore images degraded by non-uniform shadows and lighting caused by multiple light sources and complex scene geometries. We observe that DINOv2 can reliably extract both semantic and geometric information from a degraded image. Based on this observation, we develop a novel framework to utilize DINOv2 features for lighting normalization. First, we propose an adaptive feature fusion module that combines features from different DINOv2 layers using a point-wise softmax mask. Next, the fused features are integrated into our proposed restoration network in both spatial and frequency domains through an auxiliary cross-attention mechanism. Experiments show that DINOLight achieves superior performance on the Ambient6K dataset, and that DINOv2 features are effective for enhancing ambient light normalization. We also apply our method to shadow-removal benchmark datasets, achieving competitive results compared to methods that use mask priors. Code will be released upon acceptance.
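
The abstract gives no implementation details for the fusion module, so the following is only a minimal sketch of what a point-wise softmax mask over layers could look like. It is not the authors' code. The function names `softmax` and `fuse_layers`, the nested-list data layout, and the idea that per-layer scores (`layer_logits`) are supplied externally are all assumptions made for illustration; in a real network a small learned head would presumably predict those scores.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_layers(layer_feats, layer_logits):
    """Fuse per-layer features with a point-wise softmax mask.

    layer_feats:  [num_layers][num_positions][channels] feature values.
    layer_logits: [num_layers][num_positions] unnormalized scores
                  (hypothetical input, see the note above).
    Returns [num_positions][channels] fused features.
    """
    num_layers = len(layer_feats)
    num_positions = len(layer_feats[0])
    channels = len(layer_feats[0][0])
    fused = []
    for p in range(num_positions):
        # Softmax across layers at this position, so the weights sum to 1.
        weights = softmax([layer_logits[l][p] for l in range(num_layers)])
        fused.append([
            sum(weights[l] * layer_feats[l][p][c] for l in range(num_layers))
            for c in range(channels)
        ])
    return fused
```

With equal scores at a position, the result is the plain average of the layers there; when one layer's score dominates, the fused feature at that position approaches that layer's feature.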

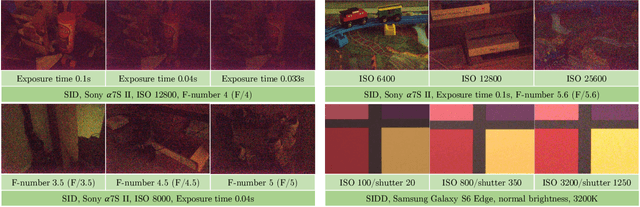

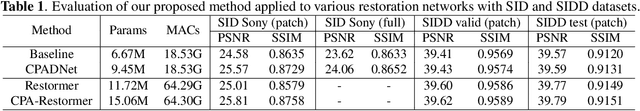

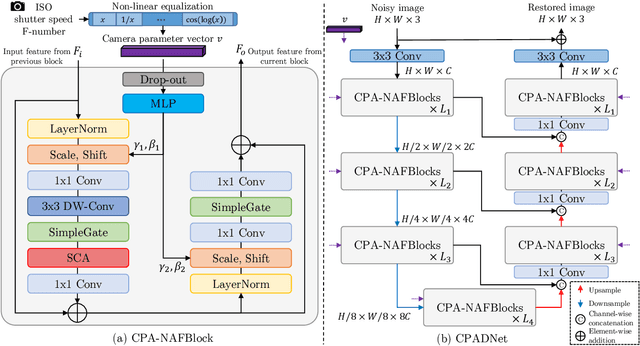

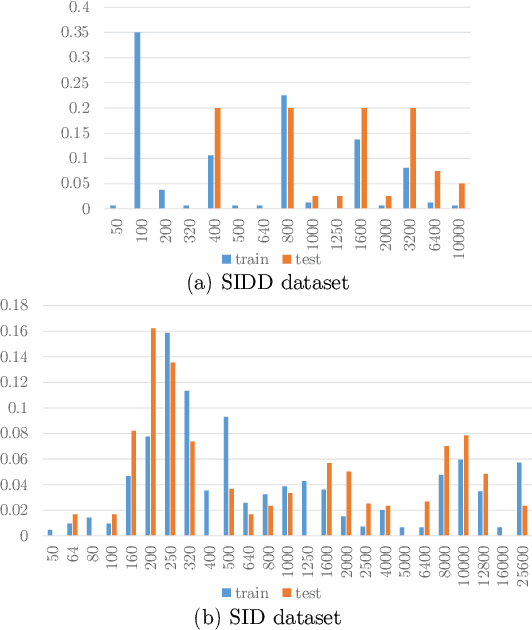

Towards Controllable Real Image Denoising with Camera Parameters

Jul 02, 2025

Abstract:Recent deep learning-based image denoising methods have shown impressive performance; however, many lack the flexibility to adjust the denoising strength based on the noise levels, camera settings, and user preferences. In this paper, we introduce a new controllable denoising framework that adaptively removes noise from images by utilizing information from camera parameters. Specifically, we focus on ISO, shutter speed, and F-number, which are closely related to noise levels. We convert these selected parameters into a vector to control and enhance the performance of the denoising network. Experimental results show that our method seamlessly adds controllability to standard denoising neural networks and improves their performance. Code is available at https://github.com/OBAKSA/CPADNet.
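
The abstract does not say how the camera parameters are encoded, so the following is only a minimal sketch of one plausible way to turn ISO, shutter speed, and F-number into a conditioning vector. It is not taken from the CPADNet repository. The names `RANGES`, `log_normalize`, and `camera_vector`, the log-scale normalization, and the numeric ranges are all assumptions chosen for illustration.

```python
import math

# Assumed (hypothetical) parameter ranges, used only for normalization.
RANGES = {
    "iso": (50.0, 25600.0),
    "shutter_s": (1.0 / 8000.0, 30.0),
    "f_number": (1.0, 32.0),
}


def log_normalize(value, low, high):
    """Map value from [low, high] to [0, 1] on a log scale, clamping outliers."""
    clamped = min(max(value, low), high)
    return (math.log(clamped) - math.log(low)) / (math.log(high) - math.log(low))


def camera_vector(iso, shutter_s, f_number):
    """Return a 3-element conditioning vector [iso, shutter, aperture] in [0, 1]."""
    return [
        log_normalize(iso, *RANGES["iso"]),
        log_normalize(shutter_s, *RANGES["shutter_s"]),
        log_normalize(f_number, *RANGES["f_number"]),
    ]
```

A log scale is used here because all three settings are conventionally adjusted in multiplicative stops. A denoising network could consume such a vector through any standard conditioning mechanism, and a user could adjust its entries to change the denoising strength.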
