Junha Kim

Is user feedback always informative? Retrieval Latent Defending for Semi-Supervised Domain Adaptation without Source Data

Jul 22, 2024

Abstract: This paper aims to adapt a source model to the target environment by leveraging a small amount of user feedback (i.e., labeled target data) that is readily available in real-world applications. We find that existing semi-supervised domain adaptation (SemiSDA) methods often yield only marginal gains in adaptation performance when they directly use such feedback data, as shown in Figure 1. We analyze this phenomenon through a novel concept called Negatively Biased Feedback (NBF), which stems from the observation that users are more likely to give feedback on data points where the model's predictions are incorrect. To leverage this feedback while avoiding the issue, we propose a scalable adaptation approach, Retrieval Latent Defending. This approach helps existing SemiSDA methods adapt the model with a balanced supervised signal by utilizing latent defending samples throughout the adaptation process. We demonstrate the problem caused by NBF and the efficacy of our approach across various benchmarks, including image classification, semantic segmentation, and a real-world medical imaging application. Our extensive experiments show that integrating our approach with multiple state-of-the-art SemiSDA methods leads to significant performance improvements.
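Why NBF skews the labeled target set can be sketched with Bayes' rule. This is a toy illustration, not the paper's analysis or the Retrieval Latent Defending method: it assumes a model with a given accuracy and a user who flags wrong predictions with probability `p_fb_wrong` and correct ones with probability `p_fb_right`. The function names and all numbers below are hypothetical.

```python
import random


def expected_error_share(accuracy: float, p_fb_wrong: float, p_fb_right: float) -> float:
    """Analytic fraction of feedback items that land on incorrect predictions (Bayes' rule)."""
    wrong = (1.0 - accuracy) * p_fb_wrong  # P(incorrect and feedback)
    right = accuracy * p_fb_right          # P(correct and feedback)
    return wrong / (wrong + right)


def simulate_feedback(n: int, accuracy: float, p_fb_wrong: float,
                      p_fb_right: float, seed: int = 0) -> float:
    """Monte Carlo estimate of the same share, one simulated user decision per prediction."""
    rng = random.Random(seed)
    on_errors = 0
    total = 0
    for _ in range(n):
        correct = rng.random() < accuracy
        p = p_fb_right if correct else p_fb_wrong
        if rng.random() < p:  # the (hypothetical) user chooses to give feedback
            total += 1
            on_errors += 0 if correct else 1
    return on_errors / total


if __name__ == "__main__":
    # Hypothetical setting: 80% accurate model, users flag errors 10x more often.
    print(expected_error_share(0.8, 0.5, 0.05))
    print(simulate_feedback(100_000, 0.8, 0.5, 0.05))
```

Under these assumed numbers only 20% of predictions are wrong, yet about 71% of the feedback (0.10 / 0.14) falls on errors. When users flag correct and incorrect predictions equally often, the share drops back to the plain error rate.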

Enhancing Breast Cancer Risk Prediction by Incorporating Prior Images

Mar 28, 2023

Abstract: Recently, deep learning models have shown the potential to predict breast cancer risk and enable targeted screening strategies, but current models do not consider changes in the breast over time. In this paper, we present PRIME+, a new method for breast cancer risk prediction that leverages prior mammograms using a transformer decoder and outperforms a state-of-the-art risk prediction method that uses mammograms from only a single time point. We validate our approach on a dataset of 16,113 exams and further demonstrate that it effectively captures patterns of change across prior mammograms, such as changes in breast density, resulting in improved short-term and long-term breast cancer risk prediction. Experimental results show that our model achieves a statistically significant improvement over the state-of-the-art baseline, increasing the C-index from 0.68 to 0.73 (p < 0.05) on held-out test sets.
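The abstract reports results as a C-index. The following sketch shows Harrell's concordance index, one common variant for right-censored data. The abstract does not say which variant or evaluation protocol PRIME+ uses, so treat this as an illustration of the metric only. It assumes per-exam follow-up times, a diagnosis/censoring indicator, and a model risk score where higher means earlier diagnosis. Pairs with tied times are skipped, which is one of several conventions.

```python
from typing import Sequence


def harrell_c_index(times: Sequence[float], events: Sequence[int],
                    risks: Sequence[float]) -> float:
    """Harrell's concordance index for right-censored survival data.

    times:  follow-up time per exam (e.g. years until diagnosis or censoring)
    events: 1 if cancer was diagnosed at times[i], 0 if the exam was censored
    risks:  model risk score; a higher score should mean an earlier diagnosis
    """
    if not (len(times) == len(events) == len(risks)):
        raise ValueError("inputs must have equal length")
    concordant = 0.0
    comparable = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # a censored exam cannot anchor a comparable pair
        for j in range(len(times)):
            if times[i] < times[j]:  # j was still event-free when i was diagnosed
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0  # model ranked the earlier case as riskier
                elif risks[i] == risks[j]:
                    concordant += 0.5  # tied scores count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


if __name__ == "__main__":
    # Hypothetical toy data: four exams, two diagnosed, two censored.
    print(harrell_c_index([2, 5, 3, 8], [1, 0, 1, 0], [0.9, 0.5, 0.4, 0.2]))
```

In this toy example, four of the five comparable pairs are ranked correctly, giving 0.8. A score of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which is the scale on which the reported 0.68 to 0.73 change should be read.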

Learning to Adapt to Unseen Abnormal Activities under Weak Supervision

Mar 25, 2022

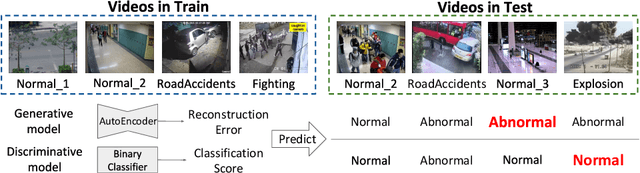

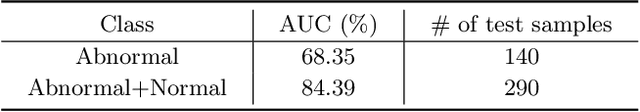

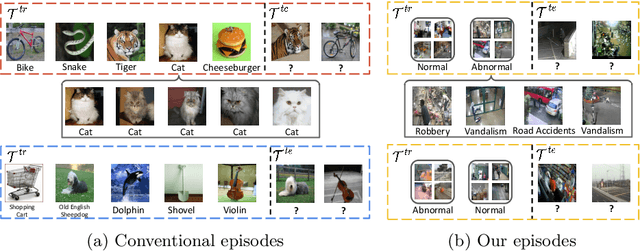

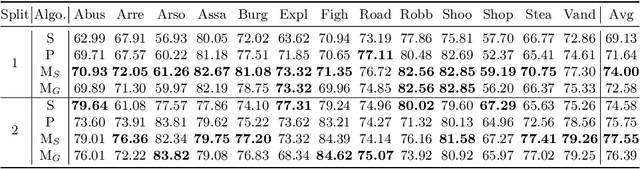

Abstract: We present a meta-learning framework for weakly supervised anomaly detection in videos, in which the detector learns to adapt effectively to unseen types of abnormal activities when only video-level binary labels are available. Our work is motivated by the fact that existing methods generalize poorly to diverse unseen examples. We claim that equipping an anomaly detector with a meta-learning scheme alleviates this limitation by leading the model to an initialization point that allows better optimization. We evaluate our framework on two challenging datasets, UCF-Crime and ShanghaiTech. The experimental results demonstrate that our algorithm improves the ability to localize unseen abnormal events in a weakly supervised setting. Beyond these technical contributions, we annotate the missing labels in the UCF-Crime dataset so that our task can be evaluated effectively.
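The idea of meta-learning "an initialization point for better optimization" can be illustrated with first-order MAML on a toy problem. The abstract does not name the exact meta-learning algorithm, so this is a stand-in under that assumption. It uses scalar linear-regression tasks rather than video anomaly detection, and the function names, task slopes, and learning rates are all hypothetical.

```python
from typing import Sequence


def task_loss_grad(w: float, slope: float, xs: Sequence[float]) -> float:
    """Gradient of mean squared error for the toy model y_hat = w * x on task y = slope * x."""
    return sum(2.0 * (w * x - slope * x) * x for x in xs) / len(xs)


def fomaml(slopes: Sequence[float], xs: Sequence[float], w0: float = 0.0,
           inner_lr: float = 0.1, outer_lr: float = 0.05, steps: int = 2000) -> float:
    """First-order MAML over scalar regression tasks; returns the meta-learned initialization."""
    w = w0
    for _ in range(steps):
        meta_grad = 0.0
        for slope in slopes:
            # Inner loop: one gradient step adapts the shared init to this task.
            adapted = w - inner_lr * task_loss_grad(w, slope, xs)
            # First-order approximation: use the task gradient at the adapted point.
            meta_grad += task_loss_grad(adapted, slope, xs)
        # Outer loop: move the shared initialization using the averaged meta-gradient.
        w -= outer_lr * meta_grad / len(slopes)
    return w


if __name__ == "__main__":
    xs = [-1.0, 0.5, 1.0, 2.0]
    init = fomaml([1.0, 2.0, 3.0], xs)
    print(init)
```

In this symmetric toy setting, the meta-learned initialization settles at the mean task slope (2.0). That is the point from which a single inner step reduces the loss for every training task. A real detector would meta-learn high-dimensional network weights over sampled anomaly tasks instead.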
