Jinbao Zhu

Fully Privacy-Preserving Federated Representation Learning via Secure Embedding Aggregation

Jun 18, 2022

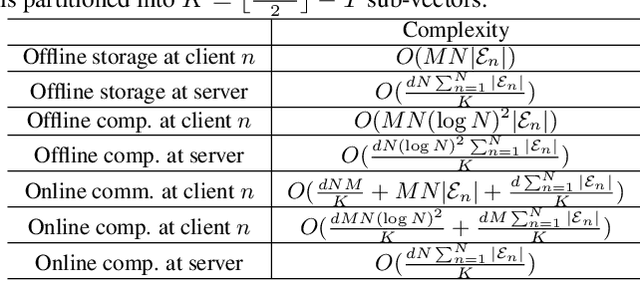

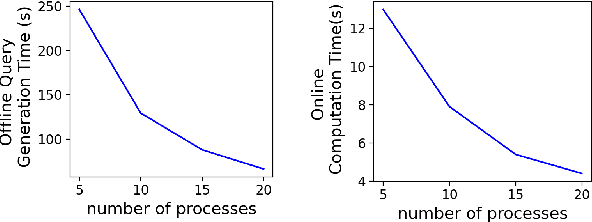

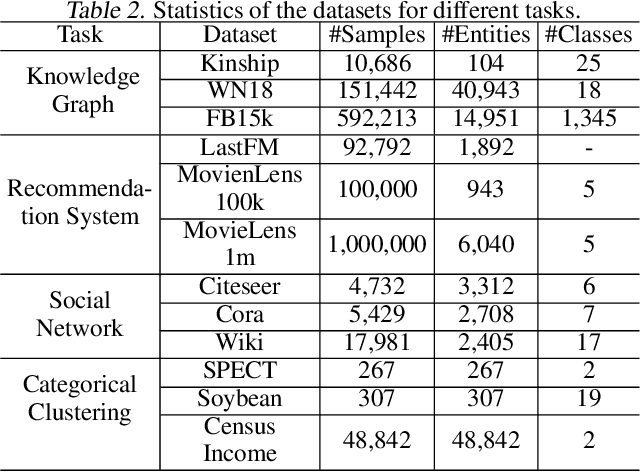

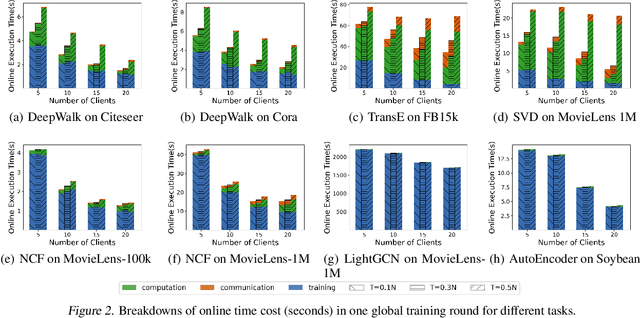

Abstract: We consider a federated representation learning framework in which, with the assistance of a central server, a group of $N$ distributed clients collaboratively train over their private data to learn the representations (or embeddings) of a set of entities (e.g., users in a social network). Under this framework, for the key step of privately aggregating the local embeddings trained at the clients, we develop a secure embedding aggregation protocol named SecEA. It provides information-theoretic privacy guarantees for the set of entities and the corresponding embeddings at each client *simultaneously*, against a curious server and up to $T < N/2$ colluding clients. As the first step of SecEA, the federated learning system performs a private entity union, through which each client learns all the entities in the system without learning which entities belong to which clients. In each aggregation round, the local embeddings are secret-shared among the clients using Lagrange interpolation, and each client then constructs coded queries to retrieve the aggregated embeddings for its intended entities. We perform comprehensive experiments on various representation learning tasks to evaluate the utility and efficiency of SecEA. Empirically, compared with embedding aggregation protocols without (or with weaker) privacy guarantees, SecEA incurs negligible performance loss (within 5%), and its additional computation latency diminishes when training deeper models on larger datasets.
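The secret-sharing step mentioned in the abstract can be illustrated with a minimal sketch. This is not the SecEA protocol itself: it omits the private entity union and the coded queries, and it treats each embedding as a single quantized integer. The field modulus `P`, the function names `share`, `reconstruct`, and `secure_sum`, and the evaluation points `1..N` are all assumptions made for illustration. Each client hides its value in a random degree-$T$ polynomial, every client sums the shares it receives, and any $T+1$ summed shares recover only the aggregate.

```python
import random

P = 2_147_483_647  # assumed prime field modulus (2^31 - 1)

def share(secret, n, t, rng):
    """Split `secret` into n shares of a random degree-t polynomial f with f(0) = secret."""
    coeffs = [secret % P] + [rng.randrange(P) for _ in range(t)]
    return [sum(c * pow(x, k, P) for k, c in enumerate(coeffs)) % P
            for x in range(1, n + 1)]

def reconstruct(points):
    """Lagrange-interpolate f(0) from a list of (x, y) pairs over the field."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (-xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

def secure_sum(values, t, seed=0):
    """Aggregate one value per client without revealing any individual value."""
    rng = random.Random(seed)  # hypothetical: real clients would use independent randomness
    n = len(values)
    all_shares = [share(v, n, t, rng) for v in values]
    # Client j adds up the j-th share from every client (shares are additive).
    summed = [sum(all_shares[i][j] for i in range(n)) % P for j in range(n)]
    # Any t + 1 summed shares determine the degree-t sum polynomial at 0.
    return reconstruct([(j + 1, summed[j]) for j in range(t + 1)])
```

With $N = 5$ clients and $T = 2$, calling `secure_sum([3, 10, 7, 1, 4], t=2)` recovers the aggregate of the five values, while any two colluding clients see only shares that are uniformly distributed in the field.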

Generalized Lagrange Coded Computing: A Flexible Computation-Communication Tradeoff

Apr 24, 2022

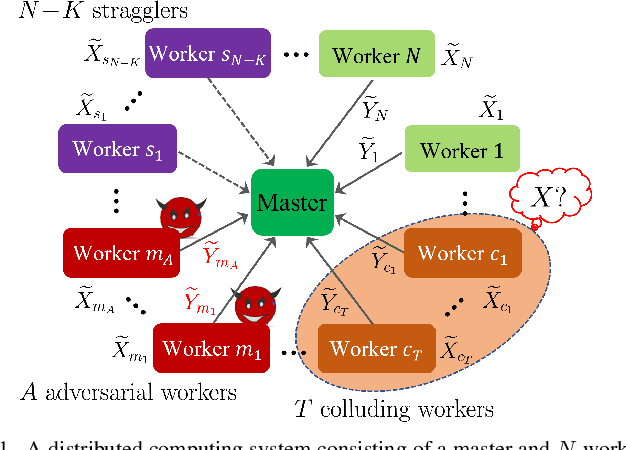

Abstract: We consider the problem of evaluating arbitrary multivariate polynomials over a massive dataset in a distributed computing system with a master node and multiple worker nodes. Generalized Lagrange Coded Computing (GLCC) codes are proposed to provide robustness against stragglers that do not return computation results in time and against adversarial workers that deliberately modify results for their benefit, as well as information-theoretic security of the dataset under possible collusion among workers. GLCC codes are constructed by first partitioning the dataset into multiple groups and then encoding the dataset using carefully designed interpolation polynomials, such that interfering computation results across groups can be eliminated at the master. In particular, GLCC codes include the state-of-the-art Lagrange Coded Computing (LCC) codes as a special case, and achieve a more flexible tradeoff between communication and computation overheads in optimizing system efficiency.
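As a rough illustration of the LCC special case named in the abstract (not the grouped GLCC construction, and without the adversarial-worker decoding), the sketch below encodes the data with a Lagrange polynomial $u(z)$ padded with $T$ random masks, lets each worker apply $f$ to one encoded point, and has the master interpolate $f(u(z))$ from the fastest responses. The modulus `P`, the names `lagrange_eval` and `lcc_demo`, and the integer evaluation points are assumptions chosen for illustration.

```python
import random

P = 2_147_483_647  # assumed prime field modulus (2^31 - 1)

def lagrange_eval(points, z):
    """Evaluate at z the unique polynomial through `points` [(x, y), ...] mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (z - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total

def lcc_demo(data, f, deg_f, n_workers, t=1, stragglers=(), seed=0):
    """Compute [f(x) for x in data] mod P from coded worker results."""
    rng = random.Random(seed)
    k = len(data)
    betas = list(range(1, k + t + 1))                        # interpolation points
    alphas = list(range(k + t + 1, k + t + 1 + n_workers))   # worker points, disjoint from betas
    # u(beta_j) is the j-th data item for j < k, and a random mask otherwise.
    masks = [rng.randrange(P) for _ in range(t)]
    anchors = list(zip(betas, [d % P for d in data] + masks))
    encoded = [lagrange_eval(anchors, a) for a in alphas]
    # Each non-straggling worker returns f applied to its encoded point.
    results = [(a, f(x) % P)
               for w, (a, x) in enumerate(zip(alphas, encoded))
               if w not in stragglers]
    need = deg_f * (k + t - 1) + 1  # degree of f(u(z)) plus one
    if len(results) < need:
        raise ValueError("too many stragglers to decode")
    # Interpolate f(u(z)) and read off its values at the data points.
    return [lagrange_eval(results[:need], b) for b in betas[:k]]
```

For example, `lcc_demo([3, 5], lambda x: x * x, deg_f=2, n_workers=7, t=1, stragglers={0, 4})` decodes the squares of both data items from five of the seven workers, since $f(u(z))$ has degree $2 \cdot (2 + 1 - 1) = 4$.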
