Jian Du

Variational Optimization for the Submodular Maximum Coverage Problem

Jun 10, 2020

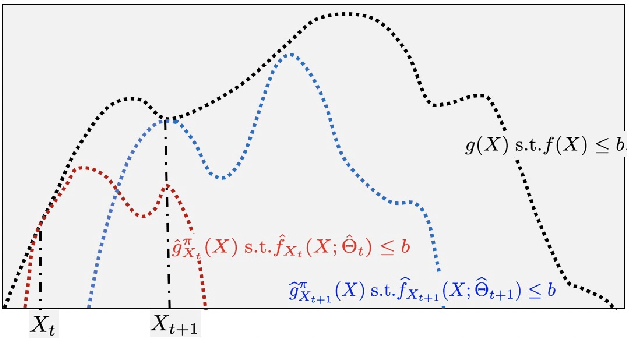

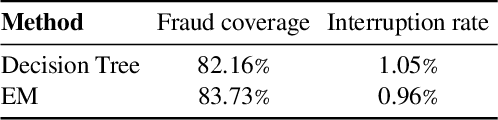

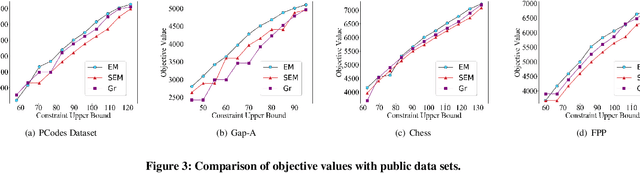

Abstract: We examine the submodular maximum coverage problem (SMCP), which is related to a wide range of applications. We provide the first variational approximation for this problem based on the Nemhauser divergence, and show that it can be solved efficiently using variational optimization. The algorithm alternates between two steps: (1) an E step that estimates a variational parameter to maximize a parameterized modular lower bound; and (2) an M step that updates the solution by solving the local approximate problem. We provide a theoretical analysis of the performance of the proposed approach and of its curvature-dependent approximation factor, and empirically evaluate it on a number of public data sets and several application tasks.
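To make the coverage objective concrete, the sketch below uses the plain coverage function f(S) = |union of the sets in S|, the simplest example of a monotone submodular function. It is not the variational E/M algorithm described above; it is the classic greedy baseline, which is known to achieve a (1 - 1/e) approximation for monotone submodular maximization under a cardinality constraint. The set names, data, and function names are hypothetical.

```python
from typing import Dict, List, Set


def coverage(selected: List[str], sets: Dict[str, Set[int]]) -> int:
    """Coverage function f(S) = |union of the chosen sets| (monotone submodular)."""
    covered: Set[int] = set()
    for name in selected:
        covered |= sets[name]
    return len(covered)


def greedy_max_coverage(sets: Dict[str, Set[int]], k: int) -> List[str]:
    """Greedy baseline: repeatedly add the set with the largest marginal gain."""
    chosen: List[str] = []
    covered: Set[int] = set()
    for _ in range(min(k, len(sets))):
        best, best_gain = None, -1
        for name, elems in sets.items():
            if name in chosen:
                continue
            gain = len(elems - covered)  # marginal gain f(S + {name}) - f(S)
            if gain > best_gain:  # ties keep the earlier set
                best, best_gain = name, gain
        chosen.append(best)
        covered |= sets[best]
    return chosen


if __name__ == "__main__":
    sets = {"A": {1, 2, 3}, "B": {3, 4}, "C": {4, 5, 6, 7}, "D": {1, 7}}
    picked = greedy_max_coverage(sets, k=2)
    print(picked, coverage(picked, sets))  # ['C', 'A'] 7
```

The same data shows the diminishing-returns property: adding B to the empty selection gains 2 elements, but adding it after C gains only 1.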

Topology Adaptive Graph Convolutional Networks

Feb 11, 2018

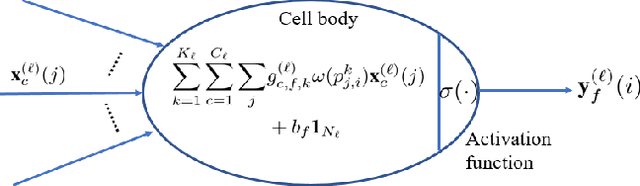

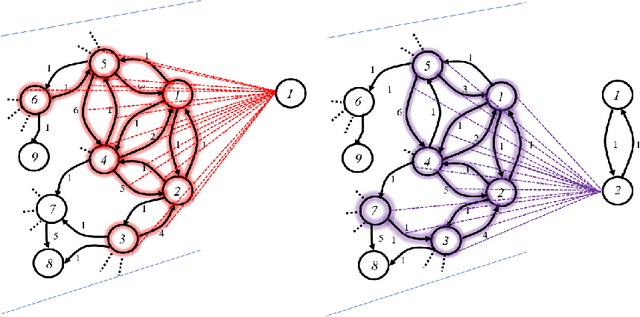

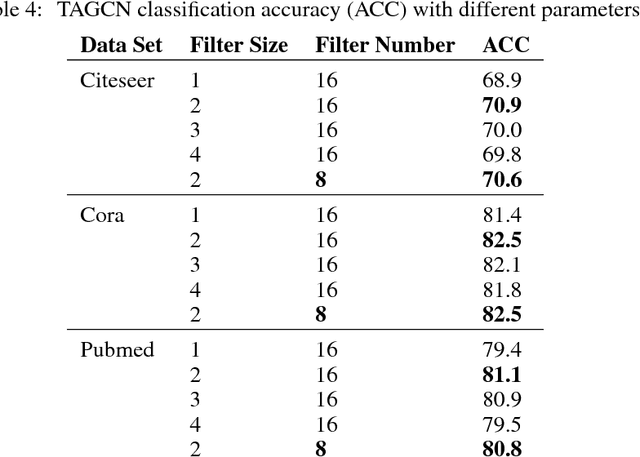

Abstract: Spectral graph convolutional neural networks (CNNs) require an approximation of the convolution to reduce computational complexity, which results in a loss of performance. This paper proposes the topology adaptive graph convolutional network (TAGCN), a novel graph convolutional network defined in the vertex domain. We provide a systematic way to design a set of fixed-size learnable filters to perform convolutions on graphs. The topologies of these filters adapt to the topology of the graph as they scan the graph to perform convolution. TAGCN not only inherits the properties of convolutions in CNNs for grid-structured data, but is also consistent with convolution as defined in graph signal processing. Since no approximation of the convolution is needed, TAGCN exhibits better performance than existing spectral CNNs on a number of data sets and is also computationally simpler than other recent methods.
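A vertex-domain graph filter of this kind can be sketched as a polynomial in the adjacency matrix, so that the k-th term mixes in information from k-hop neighbors. The sketch below assumes a single input and output channel, the symmetrically normalized adjacency D^{-1/2} A D^{-1/2}, and hand-picked weights; the function names are illustrative and this is not the authors' implementation.

```python
import math
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def normalized_adjacency(adj: Matrix) -> Matrix:
    """Symmetric normalization D^{-1/2} A D^{-1/2}; isolated vertices get zero rows."""
    deg = [sum(row) for row in adj]
    n = len(adj)
    return [
        [
            adj[i][j] / math.sqrt(deg[i] * deg[j]) if deg[i] > 0 and deg[j] > 0 else 0.0
            for j in range(n)
        ]
        for i in range(n)
    ]


def matvec(m: Matrix, x: Vector) -> Vector:
    return [sum(mij * xj for mij, xj in zip(row, x)) for row in m]


def tag_filter(adj: Matrix, x: Vector, weights: Vector, bias: float = 0.0) -> Vector:
    """y = sum_k weights[k] * A_norm^k x + bias (hypothetical single-channel filter)."""
    a_norm = normalized_adjacency(adj)
    y = [bias] * len(x)
    power_x = list(x)  # A_norm^0 x
    for k, g in enumerate(weights):
        if k > 0:
            power_x = matvec(a_norm, power_x)  # A_norm^k x = A_norm (A_norm^{k-1} x)
        y = [yi + g * pi for yi, pi in zip(y, power_x)]
    return y


if __name__ == "__main__":
    path = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]  # path graph 0 - 1 - 2
    print(tag_filter(path, [1.0, 0.0, 0.0], [0.0, 1.0]))
    print(tag_filter(path, [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]))
```

With a signal placed on vertex 0 of the path, vertex 2 receives a nonzero output only through the k = 2 term, so a filter with K + 1 weights sees exactly the K-hop neighborhood of each vertex, whatever the local graph topology is.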

Convergence Analysis of Distributed Inference with Vector-Valued Gaussian Belief Propagation

Dec 29, 2017

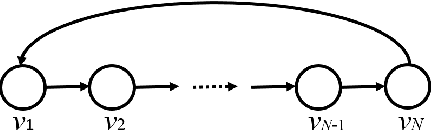

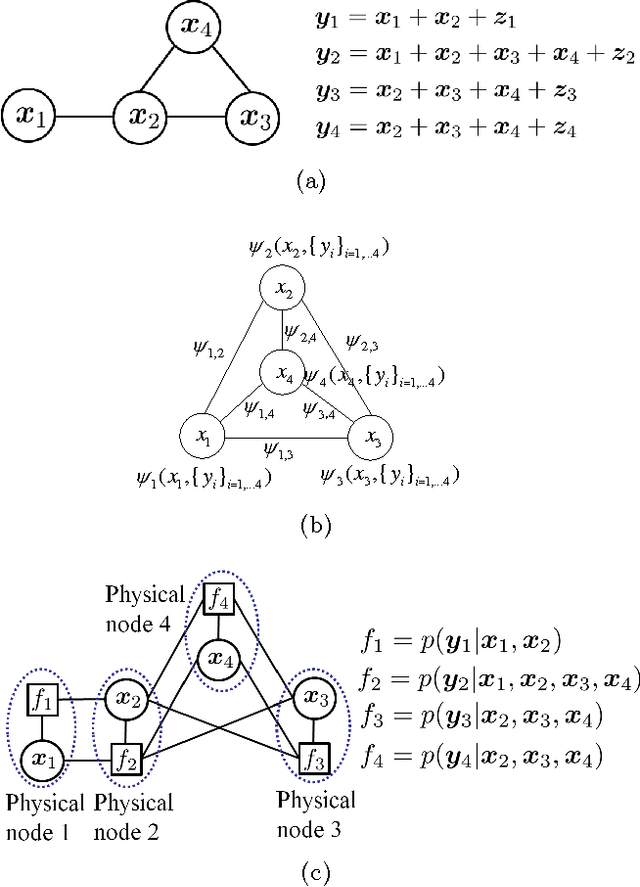

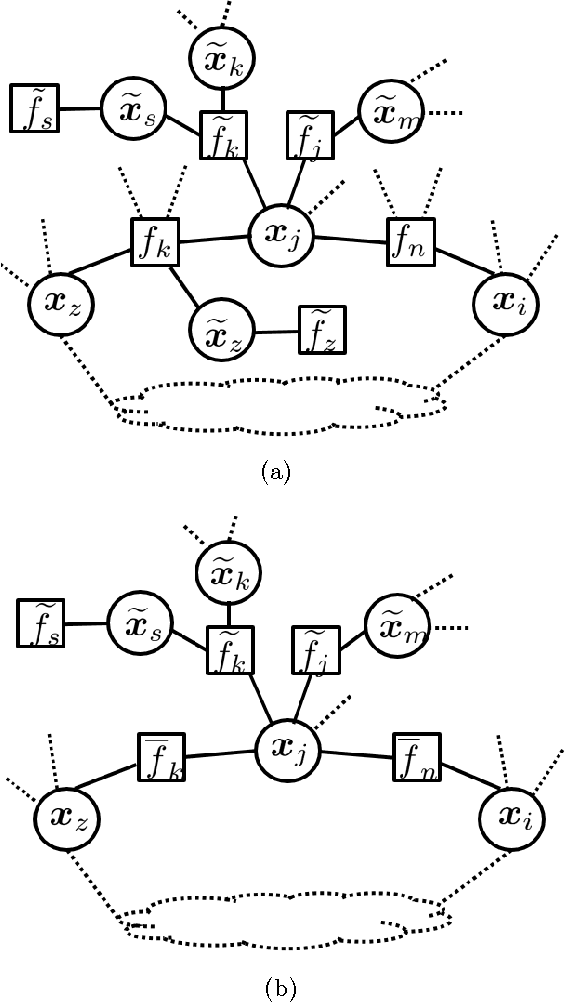

Abstract: This paper considers inference over distributed linear Gaussian models using factor graphs and Gaussian belief propagation (BP). The distributed inference algorithm involves only local computation of the information matrix and of the mean vector, and message passing between neighbors. Under broad conditions, it is shown that the message information matrix converges to a unique positive definite limit matrix for any positive semidefinite initialization, and that it approaches an arbitrarily small neighborhood of this limit matrix at a doubly exponential rate. A necessary and sufficient condition for the belief mean vector to converge to the optimal centralized estimator is provided under the assumption that the message information matrix is initialized as a positive semidefinite matrix. Further, it is shown that Gaussian BP always converges when the underlying factor graph is the union of a forest and a single loop. The proposed convergence condition for distributed linear Gaussian models is shown to be strictly weaker than other existing convergence conditions and requirements, including the walk-summability condition for Gaussian Markov random fields, and is applicable to a large class of scenarios.
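The message passing described above can be illustrated on a small linear Gaussian model. The sketch below is a scalar-variable simplification of the vector-valued setting, written in information form: each factor-to-variable message is a pair (W, v) representing exp(-W x^2 / 2 + v x). It assumes unary and pairwise factors only, a zero-mean Gaussian prior on every variable, a synchronous schedule, and zero initial messages (which are positive semidefinite). The factor format, data, and function names are hypothetical. The example graph is a single loop, and the BP means are compared with the centralized estimate obtained by solving J x = h.

```python
from typing import Dict, List, Tuple

# Hypothetical factor format: (variables, coefficients, observation y, noise variance s2)
# for y = sum_i a_i * x_i + w with w ~ N(0, s2). Only unary and pairwise factors are handled.
Factor = Tuple[Tuple[int, ...], Tuple[float, ...], float, float]


def gaussian_bp(n_vars: int, prior_info: List[float], factors: List[Factor], iters: int = 100) -> List[float]:
    """Scalar Gaussian BP in information form with a synchronous schedule."""
    # msgs[(f, i)] = (W, v) encodes the factor-to-variable message exp(-W x^2 / 2 + v x).
    msgs: Dict[Tuple[int, int], Tuple[float, float]] = {
        (f, i): (0.0, 0.0) for f, fac in enumerate(factors) for i in fac[0]
    }
    for _ in range(iters):
        new = {}
        for f, (vs, cs, y, s2) in enumerate(factors):
            if len(vs) == 1:  # unary factor: the message is just the local observation
                a = cs[0]
                new[(f, vs[0])] = (a * a / s2, a * y / s2)
                continue
            for src, dst in ((0, 1), (1, 0)):
                i, j, a, b = vs[src], vs[dst], cs[src], cs[dst]
                # Variable-to-factor message from x_i: prior plus all other incoming factor messages.
                others = [m for (g, k), m in msgs.items() if k == i and g != f]
                w_in = prior_info[i] + sum(m[0] for m in others)
                v_in = sum(m[1] for m in others)
                p = a * a / s2 + w_in  # precision of x_i inside the factor; positive when a != 0
                # Integrate x_i out of factor * incoming message to get the message to x_j.
                new[(f, j)] = (
                    b * b / s2 - (a * b / s2) ** 2 / p,
                    b * y / s2 - (a * b / s2) * (a * y / s2 + v_in) / p,
                )
        msgs = new
    # Belief of x_i: prior combined with every incoming factor message; mean = v / W.
    means = []
    for i in range(n_vars):
        incoming = [m for (g, k), m in msgs.items() if k == i]
        means.append(sum(m[1] for m in incoming) / (prior_info[i] + sum(m[0] for m in incoming)))
    return means


def centralized_mean(n_vars: int, prior_info: List[float], factors: List[Factor]) -> List[float]:
    """Build J = diag(prior) + sum c c^T / s2 and h = sum c y / s2, then solve J x = h."""
    J = [[prior_info[i] if i == j else 0.0 for j in range(n_vars)] for i in range(n_vars)]
    h = [0.0] * n_vars
    for vs, cs, y, s2 in factors:
        for i, a in zip(vs, cs):
            h[i] += a * y / s2
            for j, b in zip(vs, cs):
                J[i][j] += a * b / s2
    # Gaussian elimination without pivoting (J is symmetric positive definite).
    for c in range(n_vars):
        for r in range(c + 1, n_vars):
            m = J[r][c] / J[c][c]
            for k in range(c, n_vars):
                J[r][k] -= m * J[c][k]
            h[r] -= m * h[c]
    x = [0.0] * n_vars
    for r in range(n_vars - 1, -1, -1):
        x[r] = (h[r] - sum(J[r][k] * x[k] for k in range(r + 1, n_vars))) / J[r][r]
    return x


if __name__ == "__main__":
    loop = [
        ((0,), (1.0,), 1.0, 1.0),
        ((2,), (1.0,), -0.5, 1.0),
        ((0, 1), (1.0, -1.0), 0.3, 0.5),
        ((1, 2), (1.0, -1.0), 0.2, 0.5),
        ((2, 3), (1.0, -1.0), -0.4, 0.5),
        ((3, 0), (1.0, -1.0), 0.1, 0.5),
    ]
    prior = [0.1] * 4
    print(gaussian_bp(4, prior, loop, iters=200))
    print(centralized_mean(4, prior, loop))
```

When Gaussian BP converges, its means are known to coincide with the exact posterior means even on loopy graphs, so on this single-loop example the two printed vectors should agree closely.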

Convergence analysis of belief propagation for pairwise linear Gaussian models

Nov 18, 2017

Abstract: Gaussian belief propagation (BP) has been widely used for distributed inference in large-scale networks such as the smart grid, sensor networks, and social networks, where local measurements/observations are scattered over a wide geographical area. One particular case is when two neighboring agents share a common observation. For example, to estimate voltage in the direct current (DC) power flow model, the current measurement over a power line is proportional to the voltage difference between two neighboring buses. When the Gaussian BP algorithm is applied to this type of problem, its convergence condition remains an open issue. In this paper, we analyze the convergence properties of Gaussian BP for this pairwise linear Gaussian model. We show analytically that the message information matrix converges at a geometric rate to a unique positive definite matrix from an arbitrary positive semidefinite initial value, and we further provide the necessary and sufficient condition for the belief mean vector to converge to the optimal estimate.
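In the pairwise model each line measurement couples exactly two buses, so the information matrix of the centralized problem has the sparsity pattern of the grid. The sketch below builds (J, h) for hypothetical measurements z_ij = (x_i - x_j) / r_ij + w with noise variance s2; the optimal (MAP) voltage estimate is the solution of J x = h. It adds an assumed zero-mean prior on every bus so that J is positive definite, since voltage differences alone determine the voltages only up to a common offset. The tuple format and function name are illustrative.

```python
from typing import List, Tuple

# Hypothetical line record: (bus i, bus j, measured current z_ij, resistance r_ij, noise variance s2)
Line = Tuple[int, int, float, float, float]


def information_form(n_buses: int, lines: List[Line], prior_info: float) -> Tuple[List[List[float]], List[float]]:
    """Return (J, h) for z_ij = (x_i - x_j) / r_ij + w, w ~ N(0, s2), plus an assumed prior."""
    J = [[prior_info if i == j else 0.0 for j in range(n_buses)] for i in range(n_buses)]
    h = [0.0] * n_buses
    for i, j, z, r, s2 in lines:
        g = 1.0 / r          # coefficient vector c has +g at bus i and -g at bus j
        w = g * g / s2       # c c^T / s2 touches only the (i, j) block
        J[i][i] += w
        J[j][j] += w
        J[i][j] -= w
        J[j][i] -= w
        h[i] += g * z / s2   # c z / s2
        h[j] -= g * z / s2
    return J, h


if __name__ == "__main__":
    J, h = information_form(3, [(0, 1, 2.0, 0.5, 1.0), (1, 2, -1.0, 0.5, 1.0)], prior_info=0.1)
    for row in J:
        print(row, sum(row))
    print(h)
```

The line contributions form a weighted graph Laplacian, so every row of J sums to the prior information, and the off-diagonal entries are nonzero only between buses that share a measurement.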

Convergence analysis of the information matrix in Gaussian belief propagation

Apr 13, 2017

Abstract: Gaussian belief propagation (BP) has been widely used for distributed estimation in large-scale networks such as the smart grid, communication networks, and social networks, where local measurements/observations are scattered over a wide geographical area. However, the convergence of Gaussian BP is still an open issue. In this paper, we consider the convergence of Gaussian BP, focusing in particular on the convergence of the information matrix. We show analytically that the exchanged message information matrix converges for an arbitrary positive semidefinite initial value, and that its distance to the unique positive definite limit matrix decreases exponentially fast.
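Because the information-matrix recursion does not depend on the mean messages, its convergence can be observed on its own. The sketch below is a scalar simplification on a single loop of pairwise factors whose coefficients have unit magnitude (an assumption chosen to keep the update short): with noise variance s2, each factor-to-variable message maps the incoming information W to (1/s2) W / (1/s2 + W) = W / (1 + s2 W). Two runs from different nonnegative initializations are compared; the names and numbers are hypothetical.

```python
from typing import List, Tuple


def info_step(fwd: List[float], bwd: List[float], prior: List[float], s2: float) -> Tuple[List[float], List[float]]:
    """One synchronous update of the factor-to-variable information messages on a ring.

    fwd[k]: message from factor (k, k+1) to variable k+1
    bwd[k]: message from factor (k, k+1) to variable k
    """
    n = len(prior)
    new_fwd, new_bwd = [], []
    for k in range(n):
        w = prior[k] + fwd[k - 1]  # information x_k sends to factor (k, k+1); fwd[-1] wraps around
        new_fwd.append(w / (1.0 + s2 * w))
        nxt = (k + 1) % n
        w_b = prior[nxt] + bwd[nxt]  # information x_{k+1} sends back to factor (k, k+1)
        new_bwd.append(w_b / (1.0 + s2 * w_b))
    return new_fwd, new_bwd


def run_two(prior: List[float], s2: float, iters: int, init_a: float = 0.0, init_b: float = 10.0):
    """Iterate from two PSD initializations and record the max gap between them."""
    n = len(prior)
    a = ([init_a] * n, [init_a] * n)
    b = ([init_b] * n, [init_b] * n)
    gaps = []
    for _ in range(iters):
        a = info_step(*a, prior, s2)
        b = info_step(*b, prior, s2)
        gaps.append(max(abs(x - y) for x, y in zip(a[0] + a[1], b[0] + b[1])))
    return a, gaps


if __name__ == "__main__":
    msgs, gaps = run_two([0.5, 0.2, 0.5, 0.2], s2=1.0, iters=100)
    print(gaps[:5], gaps[-1])
    print(msgs)
```

Each updated gap is the previous gap divided by (1 + s2 W1)(1 + s2 W2) > 1, so the gap between the two runs shrinks geometrically, consistent with convergence to a unique positive limit.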