Javier Varona

Geometric-Based Nail Segmentation for Clinical Measurements

Jan 10, 2025

Abstract: A robust segmentation method that can be used to perform measurements on toenails is presented. The proposed method is used as the first step in a clinical trial to objectively quantify the incidence of a particular pathology. For such an assessment, it is necessary to distinguish the nail from the skin, which it locally resembles. Many algorithms have been used, each of which leverages different aspects of toenail appearance. We used the Hough transform to locate the tip of the toe and estimate the nail location and size. Subsequently, we classified the super-pixels of the image based on their geometric and photometric information. Thereafter, the watershed transform delineated the border of the nail. The method was validated using a 348-image medical dataset, achieving an accuracy of 0.993 and an F-measure of 0.925. The proposed method is considerably robust across samples with respect to factors such as nail shape, skin pigmentation, illumination conditions, and the appearance of large regions affected by a medical condition.
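The abstract reports both an accuracy and an F-measure, and the two can differ noticeably when the segmented object covers a small part of the image. The following is a minimal sketch of how these two scores are commonly computed from a predicted and a ground-truth binary mask. The function names and the toy masks are illustrative assumptions, not taken from the paper, and the paper's exact evaluation protocol may differ.

```python
def confusion_counts(pred, truth):
    """Count TP, FP, FN, TN over two equally sized binary masks (lists of 0/1 rows)."""
    tp = fp = fn = tn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p and not t:
                fp += 1
            elif not p and t:
                fn += 1
            else:
                tn += 1
    return tp, fp, fn, tn


def accuracy(tp, fp, fn, tn):
    """Fraction of all pixels labelled correctly."""
    return (tp + tn) / (tp + fp + fn + tn)


def f_measure(tp, fp, fn):
    """Harmonic mean of precision and recall for the foreground class."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy masks (hypothetical data): 1 marks a "nail" pixel, 0 marks everything else.
truth = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
]
pred = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 0, 0, 0],
]

tp, fp, fn, tn = confusion_counts(pred, truth)
acc = accuracy(tp, fp, fn, tn)
f1 = f_measure(tp, fp, fn)
print(acc, f1)
```

In this toy case the many correctly labelled background pixels raise the accuracy, while the F-measure ignores true negatives and therefore reflects only how well the foreground region itself was recovered.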

Dealing with sequences in the RGBDT space

May 10, 2018

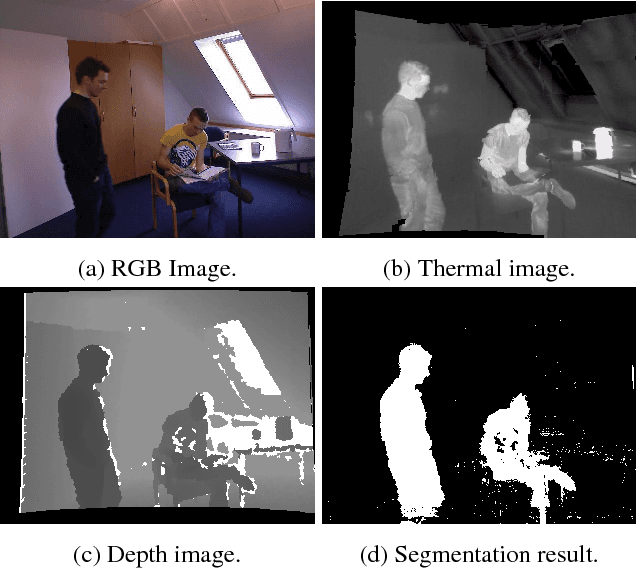

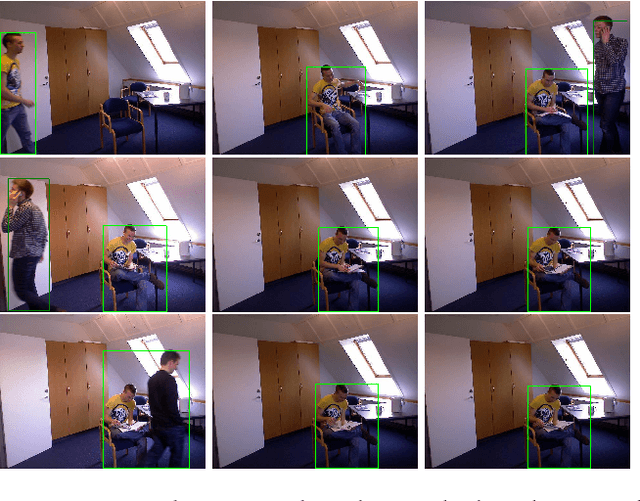

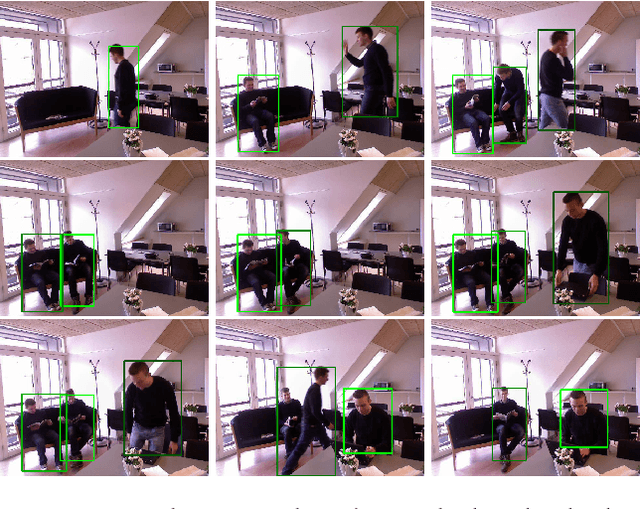

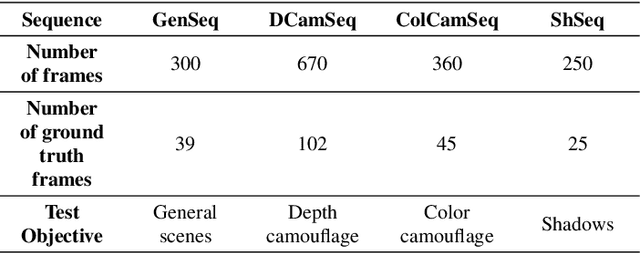

Abstract: Most current research in computer vision focuses on single images and does not take temporal information into account. We present a probabilistic non-parametric model that mixes multiple information cues from devices to segment regions that contain moving objects in image sequences. We prepared an experimental setup to show the importance of using previous information for obtaining an accurate segmentation result, using a novel dataset that provides sequences in the RGBDT space. We label the detected regions with a state-of-the-art human detector. Each detected region is marked as human at least once.

Modelling depth for nonparametric foreground segmentation using RGBD devices

Sep 29, 2016

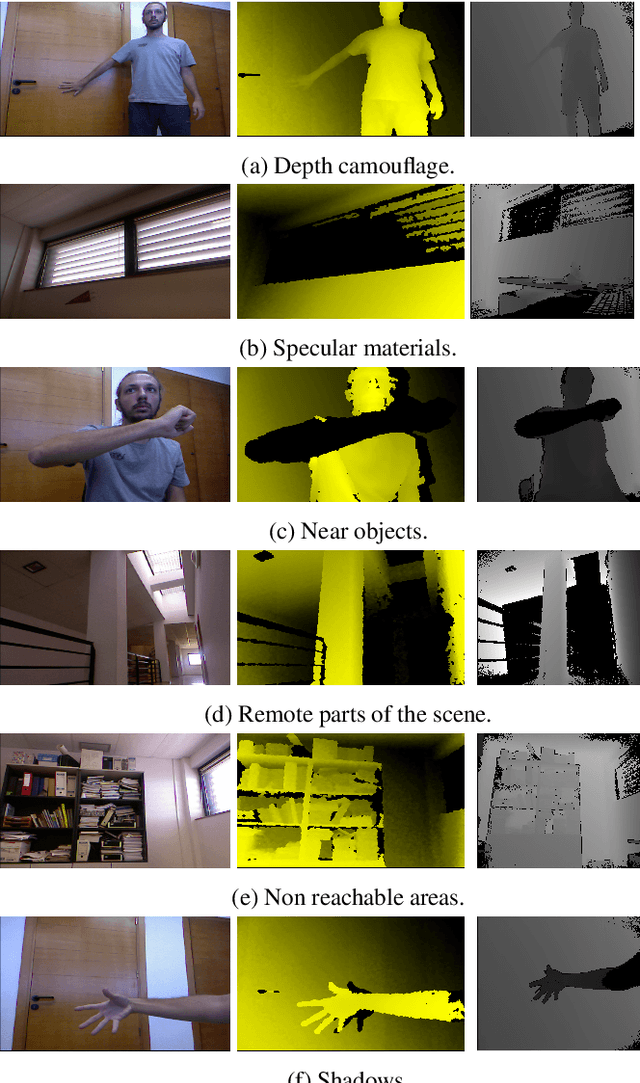

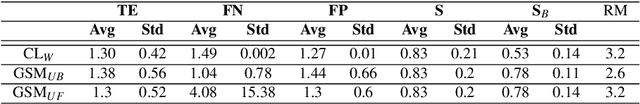

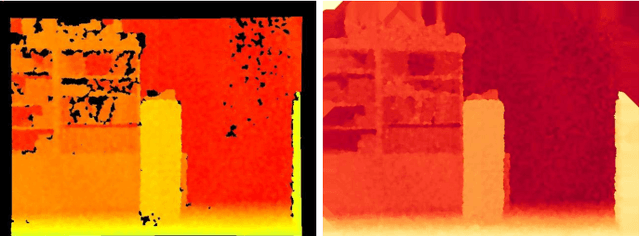

Abstract: The problem of detecting changes in a scene and segmenting the foreground from the background is still challenging, despite previous work. Moreover, new RGBD capturing devices include depth cues, which could be incorporated to improve foreground segmentation. In this work, we present a new nonparametric approach where a unified model mixes the device's multiple information cues. In order to unify all the device channel cues, a new probabilistic depth data model is also proposed, where we show how to handle the inaccurate data to improve foreground segmentation. A new RGBD video dataset is presented in order to introduce a new standard for comparing this kind of algorithm. Results show that the proposed approach can handle several practical situations and obtain good results in all cases.
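To illustrate the general idea of a nonparametric background model that combines colour and depth, here is a minimal per-pixel sketch based on kernel averaging over past samples. It is not the model proposed in the paper: the unnormalised Gaussian kernel, the bandwidths, the threshold, the use of a single grey value instead of RGB, and the convention that a depth of 0 means "no reading" are all assumptions made for this example.

```python
import math

INVALID_DEPTH = 0  # assumption: the sensor reports 0 where it has no depth reading


def kernel(diff, bandwidth):
    """Unnormalised Gaussian kernel: 1.0 for identical values, decaying toward 0."""
    return math.exp(-0.5 * (diff / bandwidth) ** 2)


def background_score(pixel, history, color_bw=10.0, depth_bw=50.0):
    """Average kernel similarity between a pixel and its stored background samples.

    pixel   -- (gray, depth) observation for one image location
    history -- list of past (gray, depth) samples at the same location
    """
    if not history:
        return 0.0
    gray, depth = pixel
    total = 0.0
    for h_gray, h_depth in history:
        k = kernel(gray - h_gray, color_bw)
        # Use the depth cue only when both readings are valid; otherwise the
        # depth term is left neutral and the sample is judged on colour alone.
        if depth != INVALID_DEPTH and h_depth != INVALID_DEPTH:
            k *= kernel(depth - h_depth, depth_bw)
        total += k
    return total / len(history)


def is_foreground(pixel, history, threshold=0.5):
    """Label a pixel as foreground when it is unlike the stored background samples."""
    return background_score(pixel, history) < threshold


# Hypothetical background samples for one pixel: grey level and depth in millimetres.
history = [(100, 1500), (102, 1510), (98, INVALID_DEPTH), (101, 1495)]

print(is_foreground((100, 1500), history))           # matches the background in both cues
print(is_foreground((100, 800), history))            # same colour, but much closer
print(is_foreground((100, INVALID_DEPTH), history))  # no depth reading, colour matches
print(is_foreground((200, INVALID_DEPTH), history))  # no depth reading, colour differs
```

The second query shows why a depth cue can help: an object with the same colour as the background is separated only by its distance. The third and fourth queries show one simple way to cope with missing depth, namely falling back to colour alone.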
