James R. McBride

Real Time Incremental Foveal Texture Mapping for Autonomous Vehicles

Jan 16, 2021

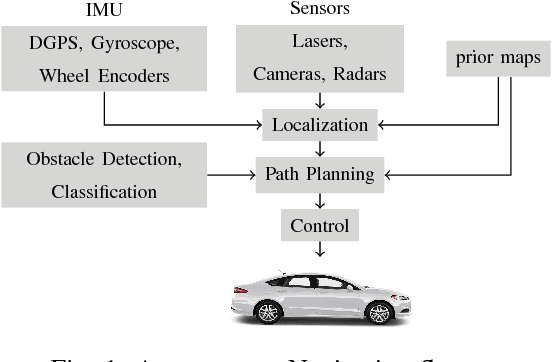

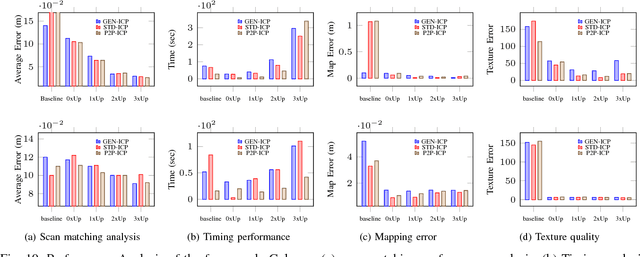

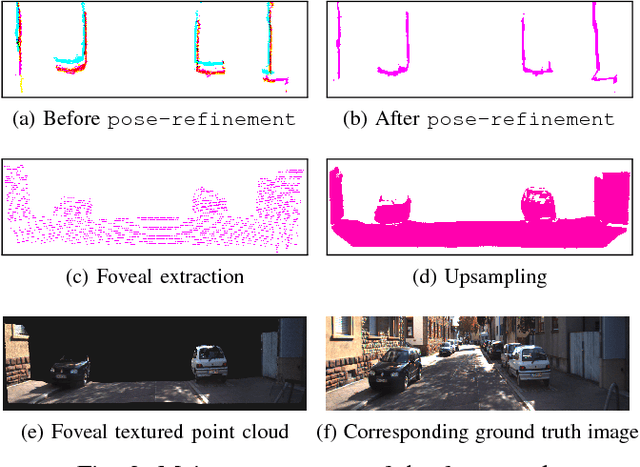

Abstract:We propose an end-to-end real time framework to generate high resolution graphics grade textured 3D map of urban environment. The generated detailed map finds its application in the precise localization and navigation of autonomous vehicles. It can also serve as a virtual test bed for various vision and planning algorithms as well as a background map in the computer games. In this paper, we focus on two important issues: (i) incrementally generating a map with coherent 3D surface, in real time and (ii) preserving the quality of color texture. To handle the above issues, firstly, we perform a pose-refinement procedure which leverages camera image information, Delaunay triangulation and existing scan matching techniques to produce high resolution 3D map from the sparse input LIDAR scan. This 3D map is then texturized and accumulated by using a novel technique of ray-filtering which handles occlusion and inconsistencies in pose-refinement. Further, inspired by human fovea, we introduce foveal-processing which significantly reduces the computation time and also assists ray-filtering to maintain consistency in color texture and coherency in 3D surface of the output map. Moreover, we also introduce texture error (TE) and mean texture mapping error (MTME), which provides quantitative measure of texturing and overall quality of the textured maps.

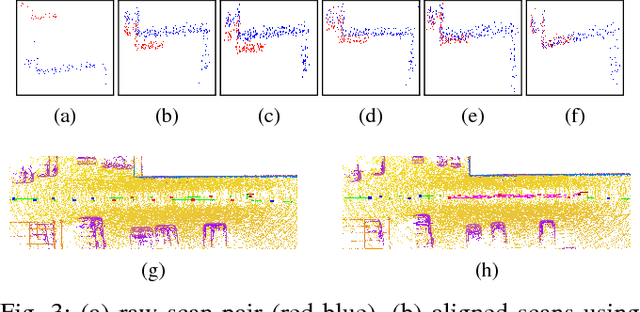

Robust and Fast 3D Scan Alignment using Mutual Information

Sep 20, 2017

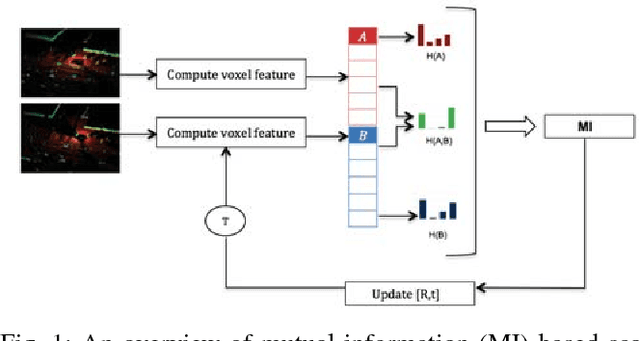

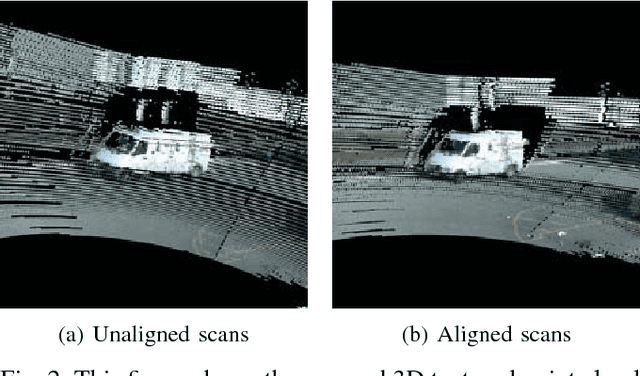

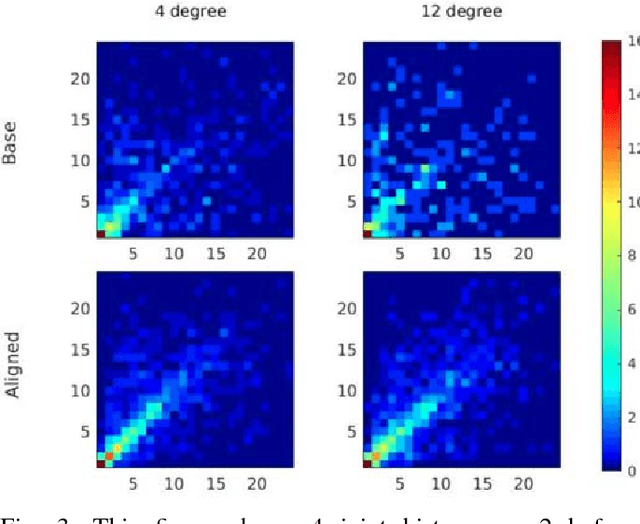

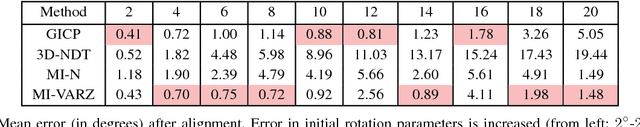

Abstract:This paper presents a mutual information (MI) based algorithm for the estimation of full 6-degree-of-freedom (DOF) rigid body transformation between two overlapping point clouds. We first divide the scene into a 3D voxel grid and define simple to compute features for each voxel in the scan. The two scans that need to be aligned are considered as a collection of these features and the MI between these voxelized features is maximized to obtain the correct alignment of scans. We have implemented our method with various simple point cloud features (such as number of points in voxel, variance of z-height in voxel) and compared the performance of the proposed method with existing point-to-point and point-to- distribution registration methods. We show that our approach has an efficient and fast parallel implementation on GPU, and evaluate the robustness and speed of the proposed algorithm on two real-world datasets which have variety of dynamic scenes from different environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge