James Butterworth

Fly Safe: Aerial Swarm Robotics using Force Field Particle Swarm Optimisation

Jul 17, 2019

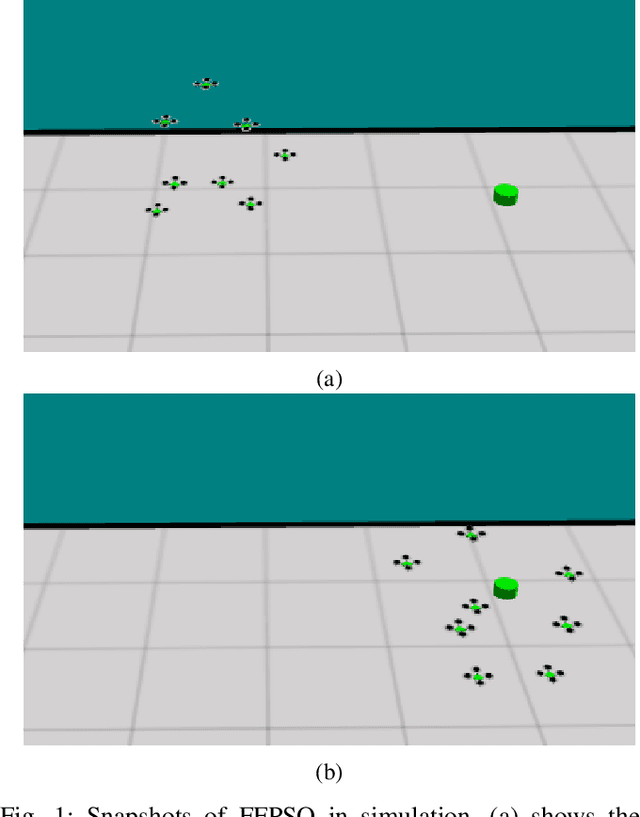

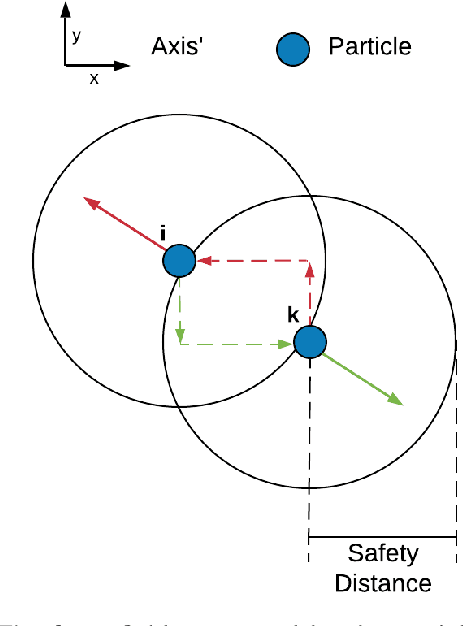

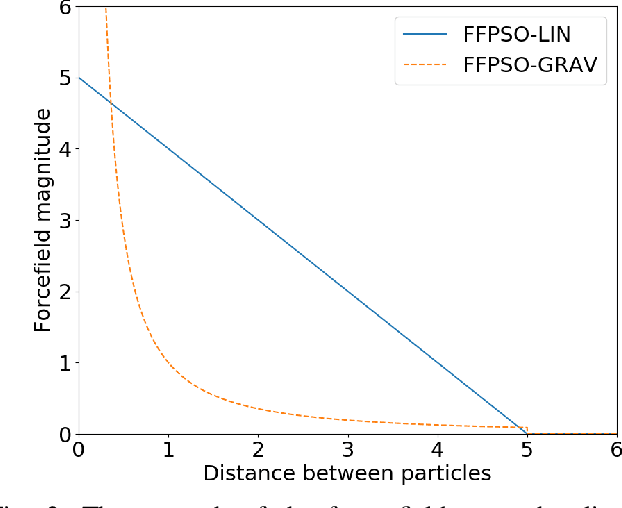

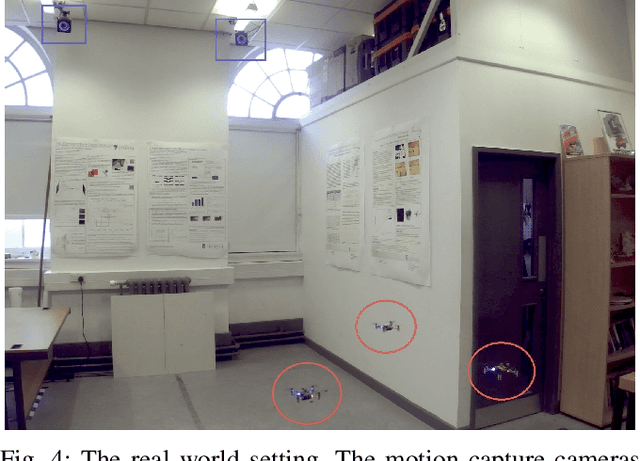

Abstract: Particle Swarm Optimisation (PSO) is a powerful optimisation algorithm that can be used to locate global maxima in a search space. Recent interest in swarms of Micro Aerial Vehicles (MAVs) raises the question of whether PSO can be used to enable real robotic swarms to locate a target goal point. However, the original PSO algorithm does not take into account collisions between particles during search. In this paper we propose a novel algorithm called Force Field Particle Swarm Optimisation (FFPSO) that assigns repellent force fields to particles, such that these fields contribute an additional velocity component to the original PSO equations. We compare the performance of FFPSO with PSO and show that it can reduce the number of particle collisions during search to zero whilst still locating a target of interest in a similar amount of time. The scalability of the algorithm is also demonstrated via a set of experiments that consider how the number of crashes and the time taken to find the goal vary with swarm size. Finally, we demonstrate the algorithm's applicability on a swarm of real MAVs.
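
The idea of adding a repellent velocity component to the PSO update can be illustrated with a minimal sketch. This is not the paper's formulation: the abstract does not give the force-field equation, so the linear fall-off, the `radius` and `gain` parameters, the 2D setting, and the function names `repulsion` and `ffpso_velocity` are all assumptions made for illustration.

```python
import random

def repulsion(pos, others, radius, gain):
    """Velocity contribution pushing `pos` away from neighbours inside `radius`.

    Assumed (hypothetical) field shape: strength rises linearly from 0 at the
    field edge to `gain` at zero separation.
    """
    rx, ry = 0.0, 0.0
    for ox, oy in others:
        dx, dy = pos[0] - ox, pos[1] - oy
        dist = (dx * dx + dy * dy) ** 0.5
        if 0.0 < dist < radius:
            strength = gain * (radius - dist) / radius
            rx += strength * dx / dist  # unit vector pointing away from the neighbour
            ry += strength * dy / dist
    return rx, ry

def ffpso_velocity(pos, vel, pbest, gbest, others, rng,
                   w=0.7, c1=1.5, c2=1.5, radius=1.0, gain=2.0):
    """Standard PSO velocity update plus the repulsive term (illustrative only)."""
    r1, r2 = rng.random(), rng.random()
    rep = repulsion(pos, others, radius, gain)
    return tuple(
        w * vel[i]                         # inertia
        + c1 * r1 * (pbest[i] - pos[i])    # pull towards personal best
        + c2 * r2 * (gbest[i] - pos[i])    # pull towards swarm best
        + rep[i]                           # assumed force-field component
        for i in range(2)
    )

if __name__ == "__main__":
    rng = random.Random(0)
    new_vel = ffpso_velocity(pos=(0.0, 0.0), vel=(0.1, 0.0),
                             pbest=(1.0, 1.0), gbest=(2.0, 2.0),
                             others=[(0.5, 0.0)], rng=rng)
    print(new_vel)
```

With no neighbours inside `radius`, the update reduces to ordinary PSO, which is one plausible reason such a scheme could keep search time similar while discouraging collisions.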

Evolving Indoor Navigational Strategies Using Gated Recurrent Units In NEAT

Apr 12, 2019

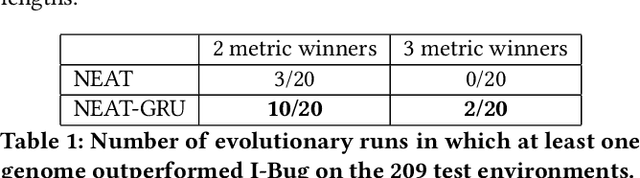

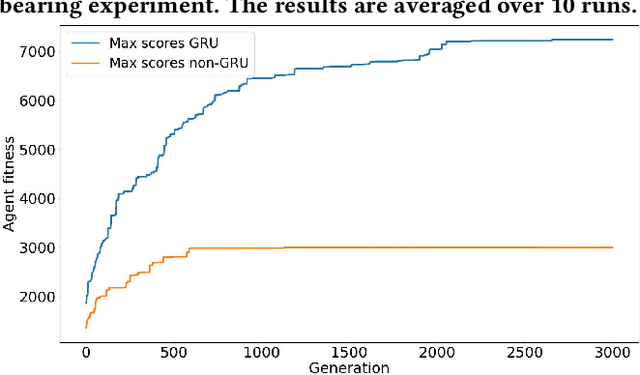

Abstract: Simultaneous Localisation and Mapping (SLAM) algorithms are expensive to run on smaller robotic platforms such as Micro-Aerial Vehicles. Bug algorithms are an alternative that use relatively little processing power and avoid high memory consumption by not building an explicit map of the environment. Bug algorithms achieve relatively good performance in simulated and robotic maze-solving domains. However, because they are hand-designed, a natural question is whether they are globally optimal control policies. In this work we explore the performance of neuroevolution, specifically NEAT, at evolving control policies for simulated differential-drive robots carrying out generalised maze navigation. We extend NEAT to include Gated Recurrent Units (GRUs) to help deal with long-term dependencies. We show that both NEAT and our NEAT-GRU can repeatably generate controllers that outperform I-Bug (an algorithm particularly well suited for use in real robots) on a test set of 209 indoor maze-like environments. We show that NEAT-GRU is superior to NEAT in this task, and also that, of the two systems, only NEAT-GRU can continuously evolve successful controllers for a much harder task in which no bearing information about the target is provided to the agent.
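
For readers unfamiliar with GRUs, the gating that helps with long-term dependencies can be shown with a single scalar unit. This is the standard GRU update in one common convention, not the paper's NEAT-GRU node: the abstract does not say how the unit is wired into NEAT genomes, and the `gru_step` function and its parameter dictionary are hypothetical names used only for this sketch.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def gru_step(x, h, p):
    """One step of a scalar GRU.

    x: current input, h: previous hidden state,
    p: dict of scalar weights (wz, uz, bz, wr, ur, br, wh, uh, bh).
    """
    z = sigmoid(p["wz"] * x + p["uz"] * h + p["bz"])               # update gate
    r = sigmoid(p["wr"] * x + p["ur"] * h + p["br"])               # reset gate
    h_cand = math.tanh(p["wh"] * x + p["uh"] * (r * h) + p["bh"])  # candidate state
    # Blend old state and candidate; z near 0 preserves memory across many steps.
    return (1.0 - z) * h + z * h_cand

if __name__ == "__main__":
    params = {k: 0.0 for k in ("wz", "uz", "bz", "wr", "ur", "br", "wh", "uh", "bh")}
    params["bz"] = -4.0  # update gate mostly closed, so the state decays slowly
    h = 1.0
    for _ in range(5):
        h = gru_step(0.0, h, params)
    print(h)
```

In a neuroevolution setting, weights like these would be set by evolution rather than by gradient descent, which is the general approach the abstract describes.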
