Hassan Rafique

Model-Agnostic Linear Competitors -- When Interpretable Models Compete and Collaborate with Black-Box Models

Sep 23, 2019

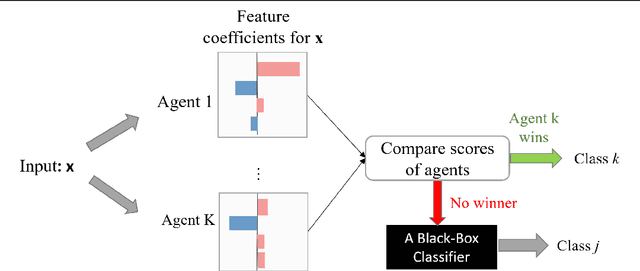

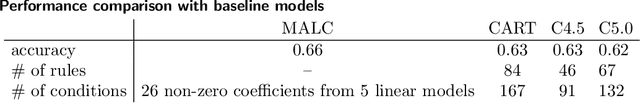

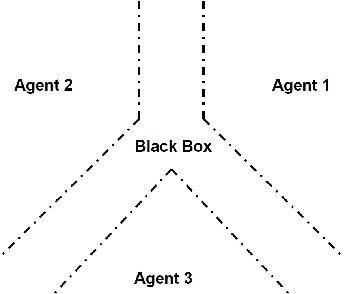

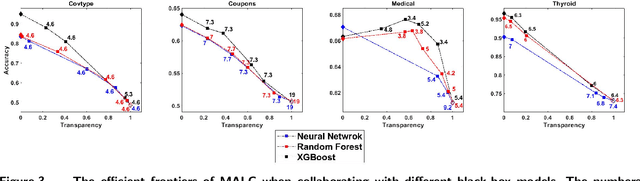

Abstract: Driven by an increasing need for model interpretability, interpretable models have become strong competitors for black-box models in many real applications. In this paper, we propose a novel type of model where interpretable models compete and collaborate with black-box models. We present the Model-Agnostic Linear Competitors (MALC) for partially interpretable classification. MALC is a hybrid model that uses linear models to locally substitute any black-box model, capturing subspaces that are most likely to be in a class while leaving the rest of the data to the black-box. MALC brings together the interpretability of linear models and the good predictive performance of a black-box model. We formulate the training of a MALC model as a convex optimization problem. Predictive accuracy and transparency (defined as the percentage of data captured by the linear models) are balanced through a carefully designed objective function, and the optimization problem is solved with the accelerated proximal gradient method. Experiments show that MALC can effectively trade prediction accuracy for transparency and provide an efficient frontier that spans the entire spectrum of transparency.
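The hybrid idea in the abstract can be illustrated with a small routing sketch. This is not the paper's formulation: the decision rule (two linear scorers and a `margin` threshold), the function names, and the toy data are all assumptions made for illustration. Only the definition of transparency, the fraction of data captured by the linear models, comes from the abstract.

```python
def linear_score(weights, bias, x):
    """Score of one linear model: w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def hybrid_predict(x, pos_model, neg_model, black_box, margin=0.0):
    """Return (label, source) for one point.

    Hypothetical rule: a linear model "captures" x when its score exceeds
    `margin`; anything not captured is left to the black-box.
    """
    pos = linear_score(*pos_model, x)
    neg = linear_score(*neg_model, x)
    if pos > margin and pos >= neg:
        return 1, "linear"
    if neg > margin:
        return -1, "linear"
    return black_box(x), "black_box"


def transparency(points, pos_model, neg_model, black_box):
    """Fraction of points decided by the linear models."""
    sources = [hybrid_predict(x, pos_model, neg_model, black_box)[1] for x in points]
    return sources.count("linear") / len(sources)


# Toy setup (all values are made up for illustration).
pos_model = ([1.0, 0.0], -1.0)    # captures points with x[0] > 1
neg_model = ([-1.0, 0.0], -1.0)   # captures points with x[0] < -1
black_box = lambda x: 1 if x[1] > 0 else -1  # stand-in for any classifier

points = [(2.0, 0.0), (-2.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
labels = [hybrid_predict(x, pos_model, neg_model, black_box)[0] for x in points]
share = transparency(points, pos_model, neg_model, black_box)
```

In this toy setup the two outer points are decided by the linear models and the two central points fall through to the black-box, so half of the data is handled transparently. Widening or narrowing the captured regions is one way to picture the accuracy-versus-transparency trade-off the abstract describes.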

Solving Weakly-Convex-Weakly-Concave Saddle-Point Problems as Weakly-Monotone Variational Inequality

Oct 24, 2018

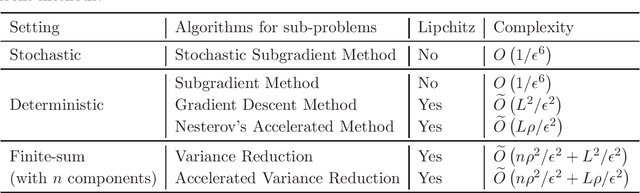

Abstract: In this paper, we consider first-order algorithms for solving a class of non-convex non-concave min-max saddle-point problems, whose objective function is weakly convex (resp. weakly concave) in terms of the variable of minimization (resp. maximization). Such problems have many important applications in machine learning, statistics, and operations research. One example that has recently attracted tremendous attention in machine learning is training Generative Adversarial Networks. We propose an algorithmic framework motivated by the proximal point method, which solves a sequence of strongly monotone variational inequalities constructed by adding a strongly monotone mapping to the original mapping with a periodically updated proximal center. By approximately solving each strongly monotone variational inequality, we prove that the solution obtained by the algorithm converges to a stationary solution of the original min-max problem. Iteration complexities are established for using different algorithms to solve the subproblems, including the subgradient method, the extragradient method, and the stochastic subgradient method. To the best of our knowledge, this is the first work that establishes non-asymptotic convergence to a stationary point of a non-convex non-concave min-max problem.
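The proximal-point framework described above can be sketched on a toy problem. The objective f(x, y) = -(ρ/2)x² + xy + (ρ/2)y², the constants, and the function names below are assumptions chosen for illustration, and the inner solver is a plain forward (gradient) step rather than one of the methods analyzed in the paper. The operator F(x, y) = (∂f/∂x, -∂f/∂y) is weakly monotone with modulus ρ, so adding (z - center)/γ with 1/γ > ρ gives a strongly monotone subproblem.

```python
import math

RHO = 0.1  # weak-convexity / weak-concavity modulus of the toy objective


def operator(x, y):
    """F(x, y) = (df/dx, -df/dy) for f = -(RHO/2) x^2 + x*y + (RHO/2) y^2."""
    return (-RHO * x + y, -(x + RHO * y))


def solve_subproblem(center, gamma, step, inner_iters):
    """Approximately solve F(z) + (z - center) / gamma = 0 by forward steps."""
    cx, cy = center
    x, y = center  # warm-start at the proximal center
    for _ in range(inner_iters):
        fx, fy = operator(x, y)
        gx = fx + (x - cx) / gamma  # regularized operator, x-component
        gy = fy + (y - cy) / gamma  # regularized operator, y-component
        x, y = x - step * gx, y - step * gy
    return (x, y)


def proximal_point(z0, gamma=1.0, step=0.3, outer_iters=60, inner_iters=50):
    """Outer loop: move the proximal center to each approximate solution."""
    z = z0
    for _ in range(outer_iters):
        z = solve_subproblem(z, gamma, step, inner_iters)
    return z


z = proximal_point((1.0, 1.0))
residual = math.hypot(*operator(*z))  # ||F(z)||, zero at a stationary point
```

On this toy problem the only stationary point is the origin, and the residual ‖F(z)‖ shrinks toward zero as the outer loop proceeds. The step size and iteration counts were picked by hand for this example and are not taken from the paper's complexity analysis.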
