Guoming Long

Human-Machine Co-Boosted Bug Report Identification with Mutualistic Neural Active Learning

Apr 20, 2026

Abstract: Bug reports, encompassing a wide range of bug types, are crucial for maintaining software quality. However, the increasing complexity and volume of bug reports make it difficult to identify them manually and assign them to the appropriate teams for resolution, since handling every report is time-consuming and resource-intensive. In this paper, we introduce a cross-project framework, dubbed Mutualistic Neural Active Learning (MNAL), designed for the automated and more effective identification of bug reports from GitHub repositories, boosted by human-machine collaboration. MNAL uses a neural language model that learns from, and generalizes across, reports from different projects, coupled with active learning to form neural active learning. A distinctive feature of MNAL is the purposely crafted mutualistic relation between the machine learners (the neural language model) and the human labelers (developers) when enriching the learned knowledge. That is, the most informative human-labeled reports and their corresponding pseudo-labeled ones are used to update the model, while the reports that developers need to label are more readable and identifiable, thereby enhancing human-machine teaming. We evaluate MNAL on a large-scale dataset against SOTA approaches, baselines, and different variants. The results indicate that MNAL achieves up to 95.8% and 196.0% effort reduction in terms of readability and identifiability during human labeling, respectively, while also delivering better bug report identification performance. Additionally, MNAL is model-agnostic: it improves model performance with various underlying neural language models. To further verify the efficacy of our approach, we conducted a qualitative case study involving 10 human participants, who rated MNAL as more effective and as saving more time and monetary resources.

Multifaceted Hierarchical Report Identification for Non-Functional Bugs in Deep Learning Frameworks

Oct 04, 2022

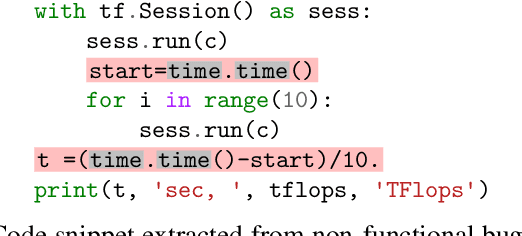

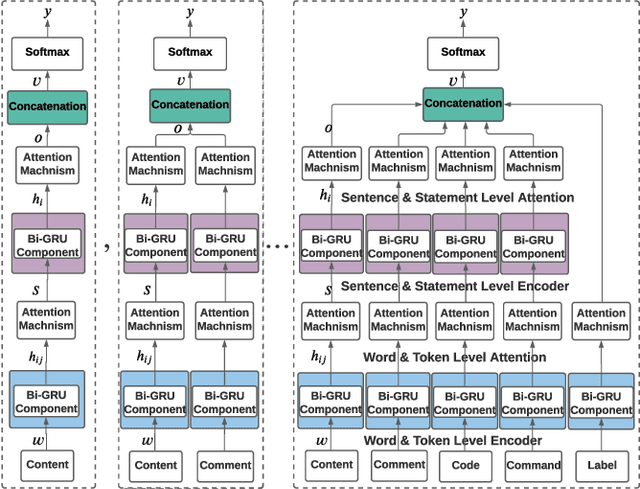

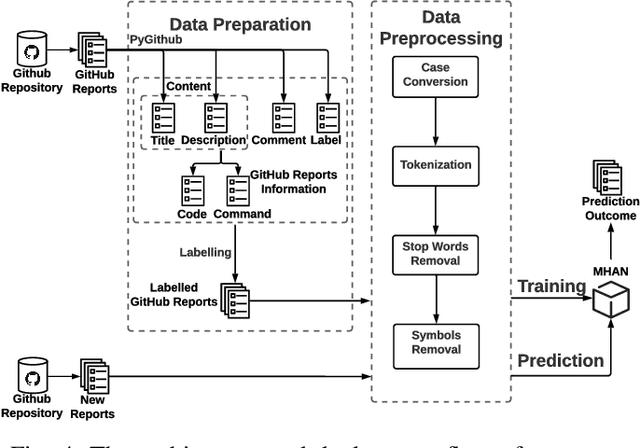

Abstract: Non-functional bugs (e.g., performance- or accuracy-related bugs) in Deep Learning (DL) frameworks can lead to some of the most devastating consequences. Reporting such bugs in a repository such as GitHub is a standard route to getting them fixed. Yet, given the growing number of new GitHub reports for DL frameworks, it is intrinsically difficult for developers to distinguish the reports that reveal non-functional bugs from the others and to assign them to the right contributor for timely investigation. In this paper, we propose MHNurf, an end-to-end tool for automatically identifying reports related to non-functional bugs in DL frameworks. The core of MHNurf is a Multifaceted Hierarchical Attention Network (MHAN) that tackles three unaddressed challenges: (1) learning the semantic knowledge, but doing so by (2) considering the hierarchy (e.g., words/tokens in sentences/statements) and focusing on the important parts (i.e., words, tokens, sentences, and statements) of a GitHub report, while (3) independently extracting information from different types of features, i.e., content, comment, code, command, and label. To evaluate MHNurf, we conduct experiments on 3,721 GitHub reports from five DL frameworks. The results show that MHNurf works best with a combination of content, comment, and code, and considerably outperforms the classic HAN, which uses only the content. MHNurf also produces more accurate results than nine other state-of-the-art classifiers with strong statistical significance (up to 71% AUC improvement), achieving the best Scott-Knott rank on four frameworks and the second-best rank on the remaining one. To facilitate reproduction and promote future research, we have made our dataset, code, and detailed supplementary results publicly available at: https://github.com/ideas-labo/APSEC2022-MHNurf.
