Guang Cheng

Purdue

Attention Enables Zero Approximation Error

Feb 24, 2022

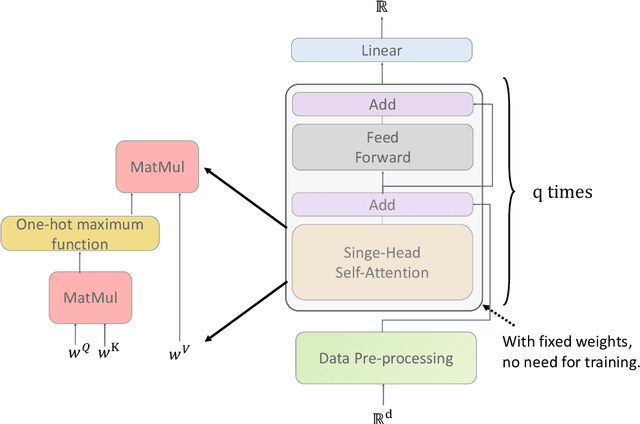

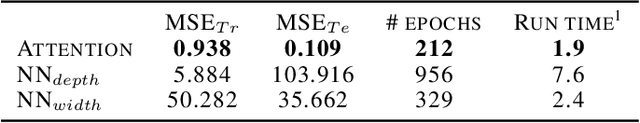

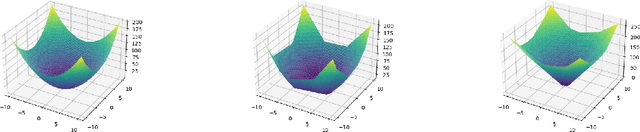

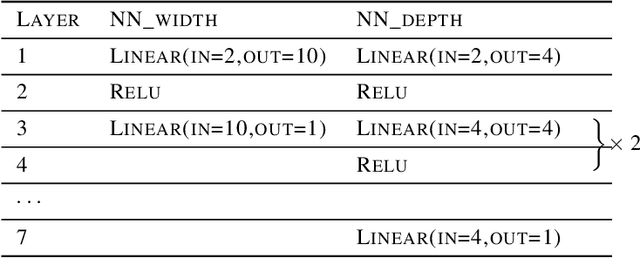

Abstract: Deep learning models have been widely applied in various aspects of daily life. Many variant models based on deep learning structures have achieved even better performance. Attention-based architectures have become almost ubiquitous in deep learning structures. In particular, the transformer model has now overtaken the convolutional neural network in image classification tasks to become the most widely used tool. However, the theoretical properties of attention-based models are seldom considered. In this work, we show that with suitable adaptations, the single-head self-attention transformer with a fixed number of transformer encoder blocks and free parameters is able to generate any desired polynomial of the input with no error. The number of transformer encoder blocks is the same as the degree of the target polynomial. Even more exciting, we find that the transformer encoder blocks in this model do not need to be trained. As a direct consequence, we show that the single-head self-attention transformer with an increasing number of free parameters is universal. These surprising theoretical results help explain the outstanding performance of the transformer model and may shed light on future modifications in real applications. We also provide some experiments to verify our theoretical result.

Optimal Learning Rates of Deep Convolutional Neural Networks: Additive Ridge Functions

Feb 24, 2022

Abstract: Convolutional neural networks have shown extraordinary abilities in many applications, especially those related to classification tasks. However, for the regression problem, the abilities of convolutional structures have not been fully understood, and further investigation is needed. In this paper, we consider the mean squared error analysis for deep convolutional neural networks. We show that, for additive ridge functions, convolutional neural networks followed by one fully connected layer with ReLU activation functions can reach optimal minimax rates (up to a log factor). The convergence rates are dimension independent. This work shows the statistical optimality of convolutional neural networks and may shed light on why convolutional neural networks are able to behave well for high-dimensional input.

Benefit of Interpolation in Nearest Neighbor Algorithms

Feb 23, 2022

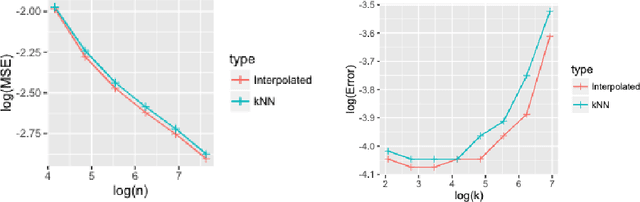

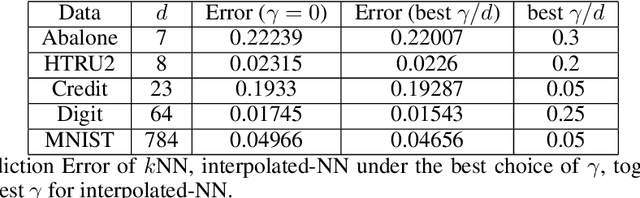

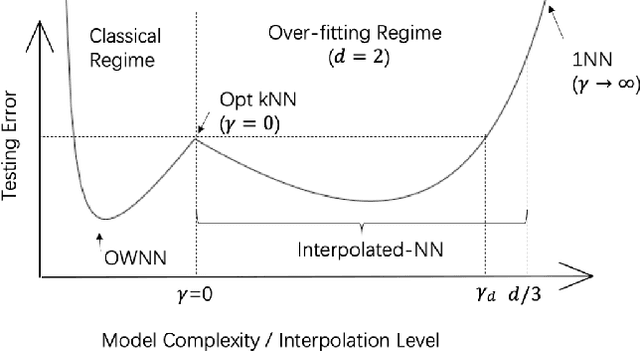

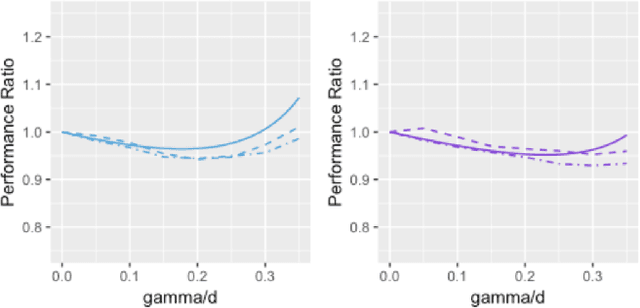

Abstract: In some studies \citep[e.g.,][]{zhang2016understanding} of deep learning, it is observed that over-parametrized deep neural networks achieve a small testing error even when the training error is almost zero. Despite numerous works towards understanding this so-called "double descent" phenomenon \citep[e.g.,][]{belkin2018reconciling,belkin2019two}, in this paper, we turn to another way to enforce zero training error (without over-parametrization) through a data interpolation mechanism. Specifically, we consider a class of interpolated weighting schemes in the nearest neighbors (NN) algorithms. By carefully characterizing the multiplicative constant in the statistical risk, we reveal a U-shaped performance curve for the level of data interpolation in both classification and regression setups. This sharpens the existing result \citep{belkin2018does} that zero training error does not necessarily jeopardize predictive performance and shows the counter-intuitive result that a mild degree of data interpolation actually {\em strictly} improves the prediction performance and statistical stability over those of the (un-interpolated) $k$-NN algorithm. Finally, the universality of our results, such as under a change of distance measure or corrupted testing data, is also discussed.
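To make the idea of an interpolated weighting scheme concrete, here is a minimal sketch of weighted $k$-NN regression. It assumes, for illustration only, that each neighbor is weighted by its distance raised to the power $-\gamma$; the function name `interpolated_knn_predict` and the parameter `gamma` are hypothetical and are not taken from the abstract. With `gamma = 0` the sketch reduces to the ordinary $k$-NN average, and with `gamma > 0` the prediction at a training point equals that point's label, so the training error is zero.

```python
import math

def interpolated_knn_predict(train_x, train_y, query, k=3, gamma=1.0):
    """Weighted k-NN regression with weights proportional to distance**(-gamma).

    gamma = 0 gives the ordinary (un-interpolated) k-NN average; gamma > 0
    makes the prediction reproduce the training label at a training point.
    The weight form is an assumption made for illustration.
    """
    # Keep the k training points closest to the query.
    neighbors = sorted(
        (math.dist(x, query), y) for x, y in zip(train_x, train_y)
    )[:k]
    if gamma > 0:
        # A zero distance would give an infinite weight, so return the
        # matching label(s) directly: this is the interpolation property.
        exact = [y for d, y in neighbors if d == 0.0]
        if exact:
            return sum(exact) / len(exact)
        weights = [d ** (-gamma) for d, _ in neighbors]
    else:
        weights = [1.0] * len(neighbors)
    total = sum(weights)
    return sum(w * y for w, (_, y) in zip(weights, neighbors)) / total

# Toy one-dimensional data set.
train_x = [(0.0,), (1.0,), (2.0,)]
train_y = [0.0, 1.0, 4.0]
at_training_point = interpolated_knn_predict(train_x, train_y, (1.0,), k=3, gamma=1.0)
plain_knn = interpolated_knn_predict(train_x, train_y, (1.0,), k=3, gamma=0.0)
```

In this toy example `at_training_point` reproduces the stored label, while `plain_knn` averages all three labels. The level of interpolation discussed in the abstract corresponds here to the size of `gamma`.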

Residual Bootstrap Exploration for Stochastic Linear Bandit

Feb 23, 2022

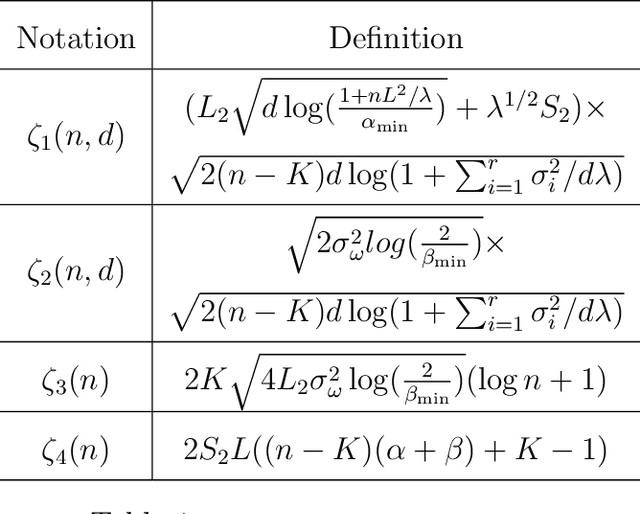

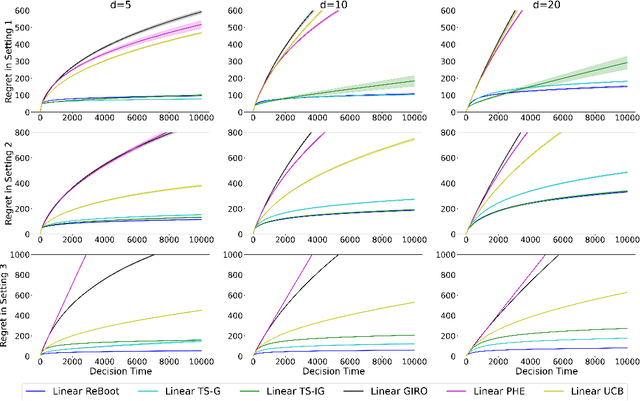

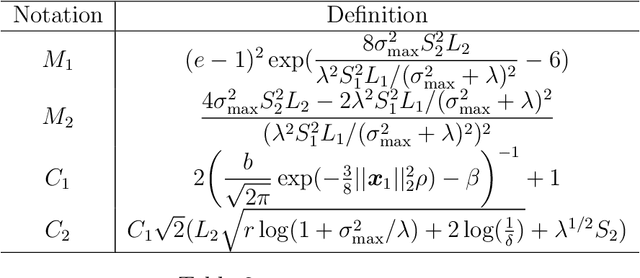

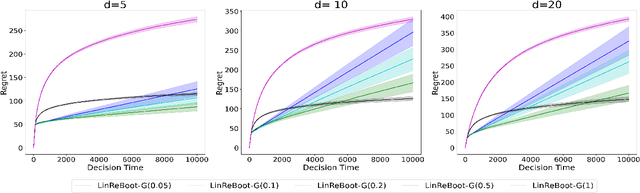

Abstract: We propose a new bootstrap-based online algorithm for stochastic linear bandit problems. The key idea is to adopt residual bootstrap exploration, in which the agent estimates the next-step reward by re-sampling the residuals of the mean reward estimate. Our algorithm, residual bootstrap exploration for stochastic linear bandit (\texttt{LinReBoot}), estimates the linear reward from its re-sampling distribution and pulls the arm with the highest reward estimate. In particular, we contribute a theoretical framework to demystify residual bootstrap-based exploration mechanisms in stochastic linear bandit problems. The key insight is that the strength of bootstrap exploration is based on collaborated optimism between the online-learned model and the re-sampling distribution of residuals. This observation enables us to show that the proposed \texttt{LinReBoot} secures a high-probability $\tilde{O}(d \sqrt{n})$ sub-linear regret under mild conditions. Our experiments support the easy generalizability of the \texttt{ReBoot} principle in the various formulations of linear bandit problems and show the significant computational efficiency of \texttt{LinReBoot}.
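The residual re-sampling idea can be illustrated in a much simpler setting than the one the abstract treats. The sketch below is not \texttt{LinReBoot}: it assumes a plain multi-armed bandit (no features), standard Gaussian bootstrap weights, and no extra variance inflation, and the names `reboot_index` and `choose_arm` are hypothetical. For each arm it perturbs the sample mean by a randomly weighted average of the arm's own residuals, then pulls the arm with the highest perturbed estimate.

```python
import random

def reboot_index(rewards, rng):
    """Perturbed mean-reward estimate for one arm (illustrative sketch).

    The sample mean is shifted by a randomly weighted average of the
    residuals, so arms with noisy or few observations get explored more.
    """
    n = len(rewards)
    mean = sum(rewards) / n
    residuals = [r - mean for r in rewards]
    # Assumption: i.i.d. standard normal bootstrap weights.
    weights = [rng.gauss(0.0, 1.0) for _ in residuals]
    return mean + sum(w * e for w, e in zip(weights, residuals)) / n

def choose_arm(history, rng):
    """Return the index of the arm with the largest perturbed estimate."""
    indices = [reboot_index(rewards, rng) for rewards in history]
    return max(range(len(indices)), key=indices.__getitem__)

rng = random.Random(0)
# history[k] holds the rewards observed so far for arm k.
history = [[0.9, 0.4, 0.7], [0.2, 0.8], [0.5, 0.5, 0.6, 0.4]]
next_arm = choose_arm(history, rng)
```

When all observed rewards of an arm are identical, its residuals are zero and the perturbed estimate equals the sample mean, so this simplified version explores only through observed variability.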

Unlabeled Data Help: Minimax Analysis and Adversarial Robustness

Feb 14, 2022

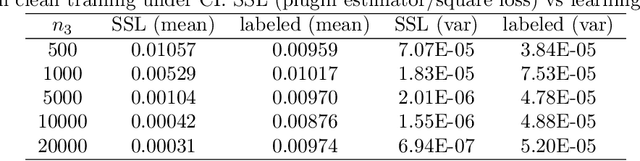

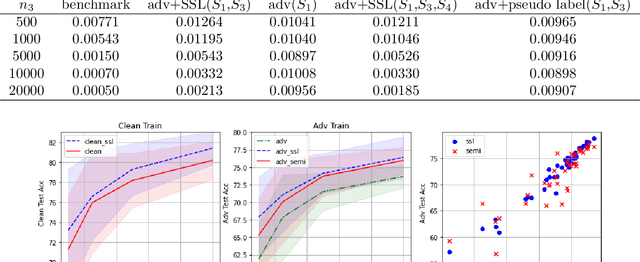

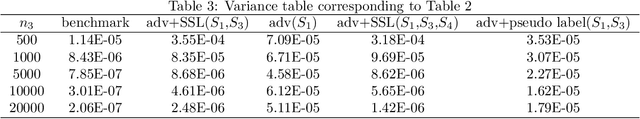

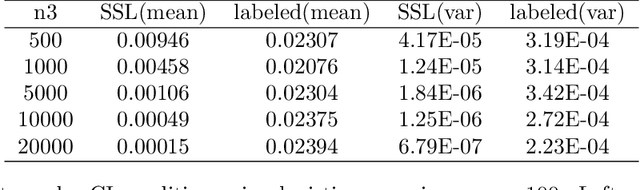

Abstract: Recently proposed self-supervised learning (SSL) approaches successfully demonstrate the great potential of supplementing learning algorithms with additional unlabeled data. However, it is still unclear whether the existing SSL algorithms can fully utilize the information of both labeled and unlabeled data. This paper gives an affirmative answer for the reconstruction-based SSL algorithm \citep{lee2020predicting} under several statistical models. While the existing literature focuses only on establishing an upper bound on the convergence rate, we provide a rigorous minimax analysis and justify the rate-optimality of the reconstruction-based SSL algorithm under different data generation models. Furthermore, we incorporate the reconstruction-based SSL into the existing adversarial training algorithms and show that learning from unlabeled data helps improve robustness.

High-Dimensional Inference over Networks: Linear Convergence and Statistical Guarantees

Jan 21, 2022

Abstract: We study sparse linear regression over a network of agents, modeled as an undirected graph with no server node. The estimation of the $s$-sparse parameter is formulated as a constrained LASSO problem wherein each agent owns a subset of the $N$ total observations. We analyze the convergence rate and statistical guarantees of a distributed projected gradient tracking-based algorithm under high-dimensional scaling, allowing the ambient dimension $d$ to grow with (and possibly exceed) the sample size $N$. Our theory shows that, under standard notions of restricted strong convexity and smoothness of the loss functions, and suitable conditions on the network connectivity and algorithm tuning, the distributed algorithm converges globally at a {\it linear} rate to an estimate that is within the centralized {\it statistical precision} of the model, $O(s\log d/N)$. When $s\log d/N=o(1)$, a condition necessary for statistical consistency, an $\varepsilon$-optimal solution is attained after $\mathcal{O}(\kappa \log (1/\varepsilon))$ gradient computations and $O (\kappa/(1-\rho) \log (1/\varepsilon))$ communication rounds, where $\kappa$ is the restricted condition number of the loss function and $\rho$ measures the network connectivity. The computation cost matches that of the centralized projected gradient algorithm despite the data being distributed, whereas the number of communication rounds decreases as the network connectivity improves. Overall, our study reveals interesting connections between statistical efficiency, network connectivity and topology, and convergence rate in high dimensions.

Online Bootstrap Inference For Policy Evaluation in Reinforcement Learning

Aug 08, 2021

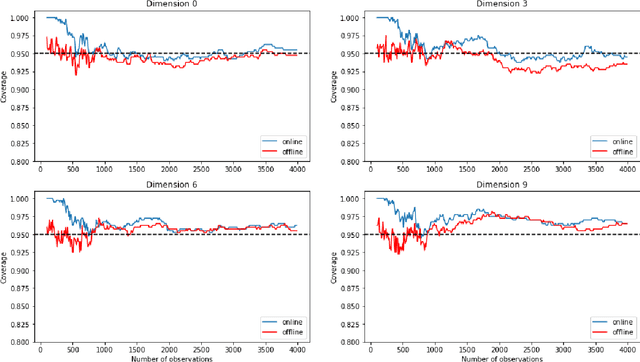

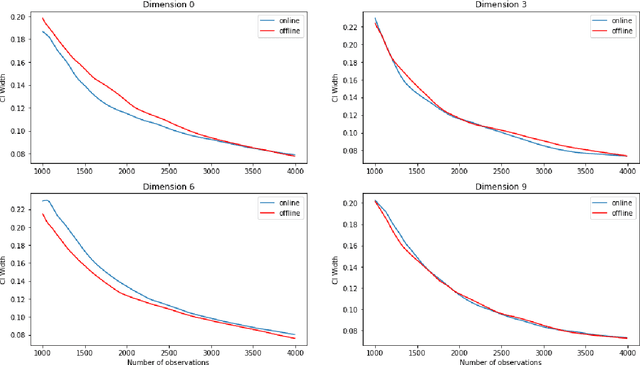

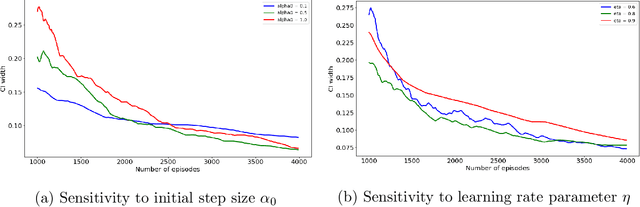

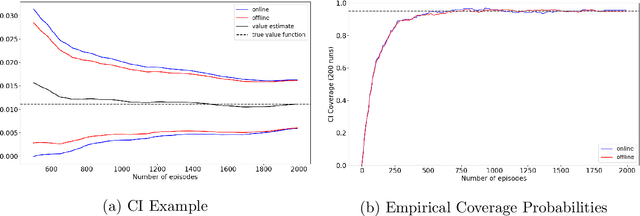

Abstract: The recent emergence of reinforcement learning has created a demand for robust statistical inference methods for the parameter estimates computed using these algorithms. Existing methods for statistical inference in online learning are restricted to settings involving independently sampled observations, while existing statistical inference methods in reinforcement learning (RL) are limited to the batch setting. The online bootstrap is a flexible and efficient approach for statistical inference in linear stochastic approximation algorithms, but its efficacy in settings involving Markov noise, such as RL, has yet to be explored. In this paper, we study the use of the online bootstrap method for statistical inference in RL. In particular, we focus on the temporal difference (TD) learning and Gradient TD (GTD) learning algorithms, which are themselves special instances of linear stochastic approximation under Markov noise. The method is shown to be distributionally consistent for statistical inference in policy evaluation, and numerical experiments are included to demonstrate the effectiveness of this algorithm at statistical inference tasks across a range of real RL environments.

Optimum-statistical collaboration towards efficient black-box optimization

Jun 17, 2021

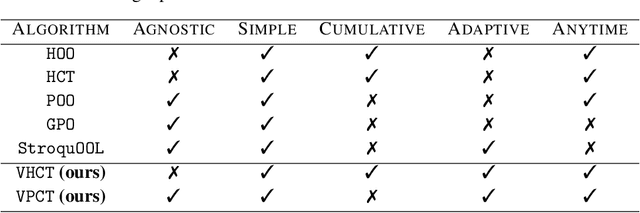

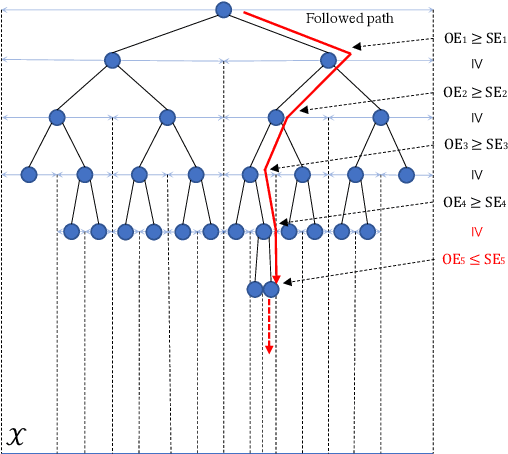

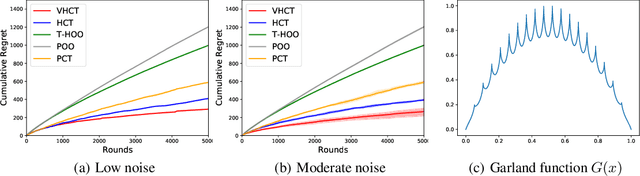

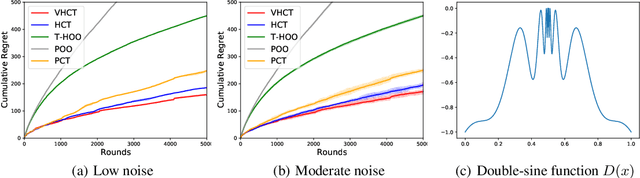

Abstract: With ever more hyperparameters involved in their training, machine learning systems demand a better understanding of hyperparameter tuning automation. This has raised interest in studies of provable black-box optimization, which is made more practical by better exploration mechanisms implemented in algorithm design, managing the flux of both optimization and statistical errors. Prior efforts focus on delineating optimization errors, but this is deficient: black-box optimization algorithms can be inefficient without considering heterogeneity among reward samples. In this paper, we make the key delineation on the role of statistical uncertainty in black-box optimization, guiding a more efficient algorithm design. We introduce \textit{optimum-statistical collaboration}, a framework for managing the interaction between optimization error flux and statistical error flux evolving in the optimization process. Inspired by this framework, we propose the \texttt{VHCT} algorithms for objective functions with only local-smoothness assumptions. In theory, we prove our algorithm enjoys rate-optimal regret bounds; in experiments, we show the algorithm outperforms prior efforts in extensive settings.

Distributed Bootstrap for Simultaneous Inference Under High Dimensionality

Feb 19, 2021

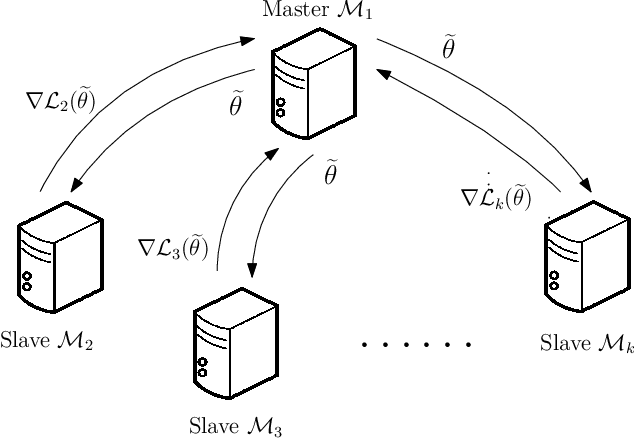

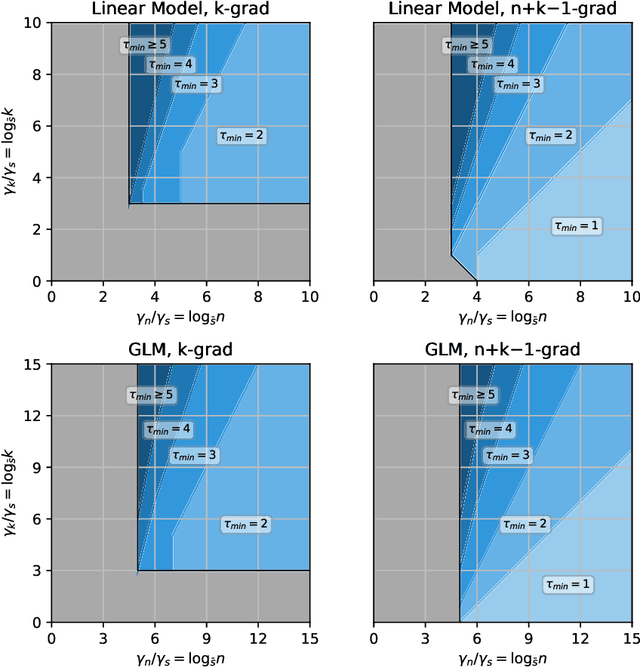

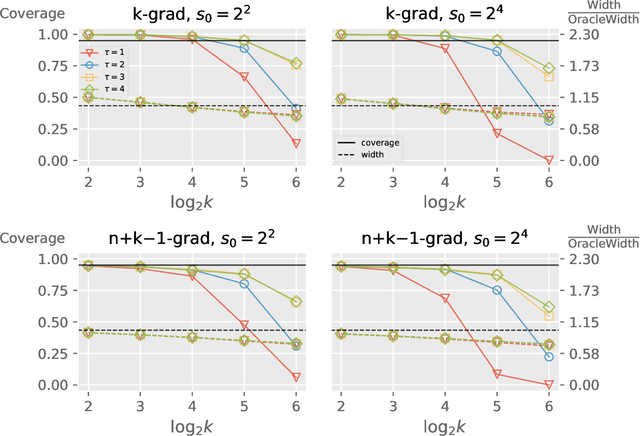

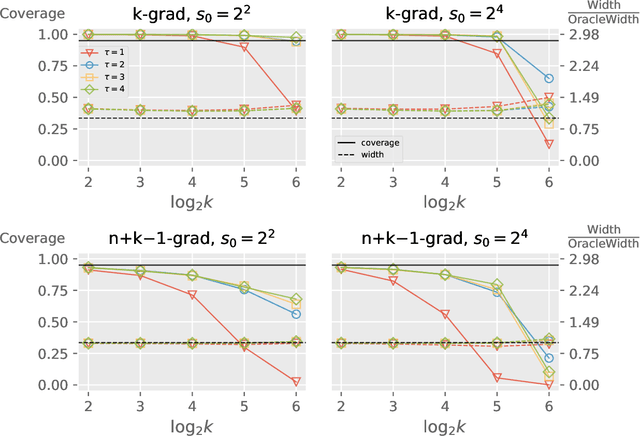

Abstract: We propose a distributed bootstrap method for simultaneous inference on high-dimensional massive data that are stored and processed with many machines. The method produces an $\ell_\infty$-norm confidence region based on a communication-efficient de-biased lasso, and we propose an efficient cross-validation approach to tune the method at every iteration. We theoretically prove a lower bound on the number of communication rounds $\tau_{\min}$ that warrants the statistical accuracy and efficiency. Furthermore, $\tau_{\min}$ only increases logarithmically with the number of workers and intrinsic dimensionality, while remaining nearly invariant to the nominal dimensionality. We test our theory by extensive simulation studies, and a variable screening task on a semi-synthetic dataset based on the US Airline On-time Performance dataset. The code to reproduce the numerical results is available at GitHub: https://github.com/skchao74/Distributed-bootstrap.

Variance Reduction on Adaptive Stochastic Mirror Descent

Dec 26, 2020

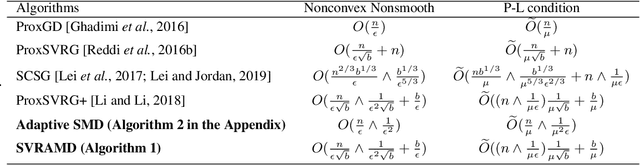

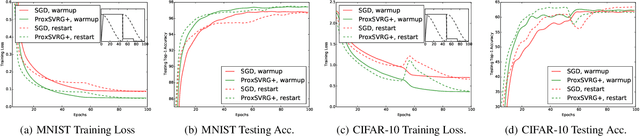

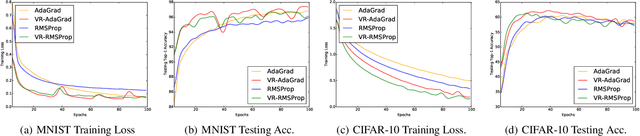

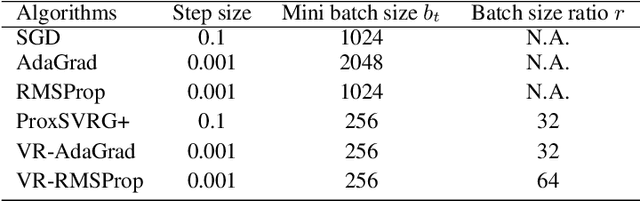

Abstract: We study the idea of variance reduction applied to adaptive stochastic mirror descent algorithms in nonsmooth nonconvex finite-sum optimization problems. We propose a simple yet generalized adaptive mirror descent algorithm with variance reduction named SVRAMD and provide its convergence analysis in different settings. We prove that variance reduction reduces the gradient complexity of most adaptive mirror descent algorithms and boosts their convergence. In particular, our general theory implies variance reduction can be applied to algorithms using time-varying step sizes and self-adaptive algorithms such as AdaGrad and RMSProp. Moreover, our convergence rates recover the best existing rates of non-adaptive algorithms. We check the validity of our claims using experiments in deep learning.
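As background for the variance-reduction idea, the sketch below shows the standard SVRG gradient estimator on a toy one-dimensional least-squares problem. It is plain SVRG with a fixed Euclidean step, not the SVRAMD algorithm from the abstract: the adaptive and mirror-descent components are omitted, and the objective, the data, and all function names are assumptions chosen for illustration. The estimator corrects a single-sample gradient using the same sample's gradient at a snapshot point plus the full gradient at that snapshot, which keeps it unbiased while shrinking its variance as the iterate approaches the snapshot.

```python
import random

def grad_i(x, sample):
    """Gradient of f_i(x) = 0.5 * (a * x - b)**2 for one sample (a, b)."""
    a, b = sample
    return a * (a * x - b)

def full_grad(x, data):
    """Gradient of the finite-sum objective (average of all f_i)."""
    return sum(grad_i(x, s) for s in data) / len(data)

def svrg_gradient(x, snapshot, snapshot_grad, sample):
    """SVRG estimator: unbiased for full_grad(x), exact when x == snapshot."""
    return grad_i(x, sample) - grad_i(snapshot, sample) + snapshot_grad

def svrg(data, x0, step=0.05, epochs=30, inner_steps=20, seed=0):
    """Plain SVRG: recompute the full gradient once per epoch at a snapshot."""
    rng = random.Random(seed)
    snapshot = x0
    for _ in range(epochs):
        snapshot_grad = full_grad(snapshot, data)
        x = snapshot
        for _ in range(inner_steps):
            sample = rng.choice(data)
            x -= step * svrg_gradient(x, snapshot, snapshot_grad, sample)
        snapshot = x  # the last inner iterate becomes the next snapshot
    return snapshot

# Toy data; the least-squares minimizer is sum(a*b) / sum(a*a) = 12 / 6 = 2.
data = [(1.0, 1.0), (2.0, 4.0), (1.0, 3.0)]
x_hat = svrg(data, x0=0.0)
```

Each epoch costs one full pass over the data plus `inner_steps` single-sample gradient pairs, which is the trade-off that gradient-complexity results for variance-reduced methods account for.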
