Giovanni De Magistris

Deep Reinforcement Learning for High Precision Assembly Tasks

Sep 22, 2017

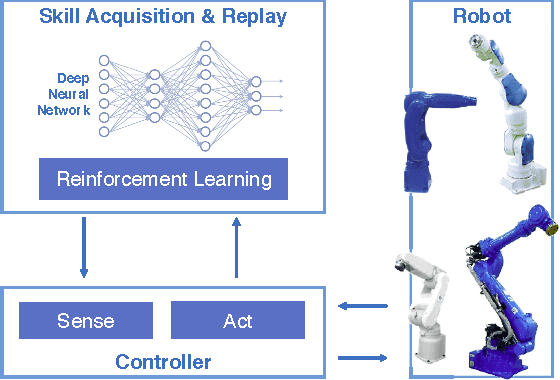

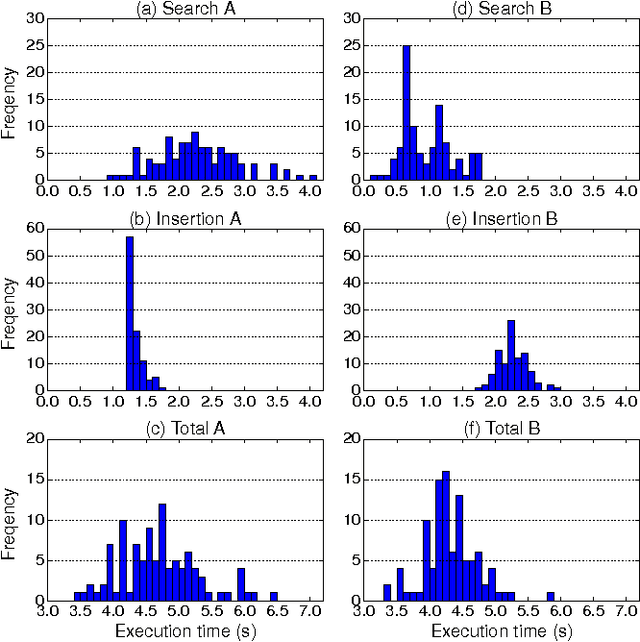

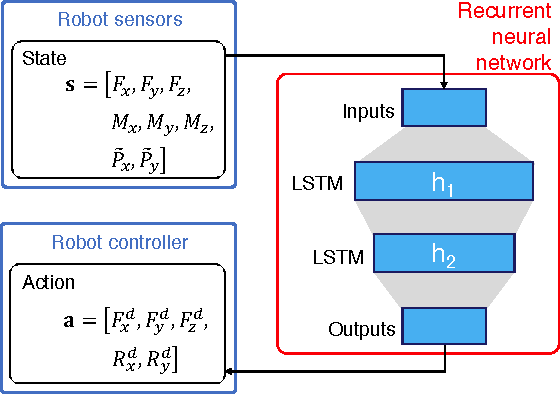

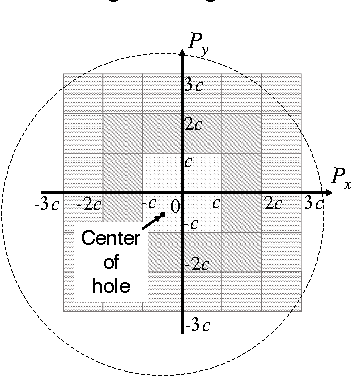

Abstract:High precision assembly of mechanical parts requires accuracy exceeding the robot precision. Conventional part mating methods used in the current manufacturing requires tedious tuning of numerous parameters before deployment. We show how the robot can successfully perform a tight clearance peg-in-hole task through training a recurrent neural network with reinforcement learning. In addition to saving the manual effort, the proposed technique also shows robustness against position and angle errors for the peg-in-hole task. The neural network learns to take the optimal action by observing the robot sensors to estimate the system state. The advantages of our proposed method is validated experimentally on a 7-axis articulated robot arm.

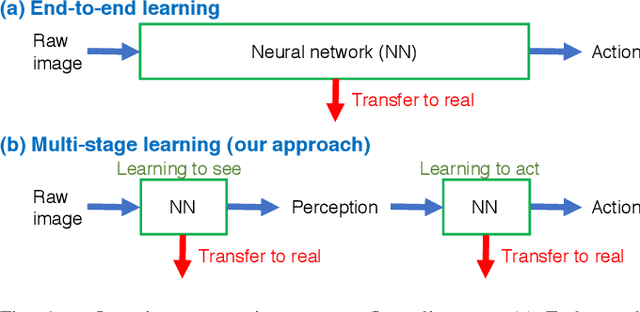

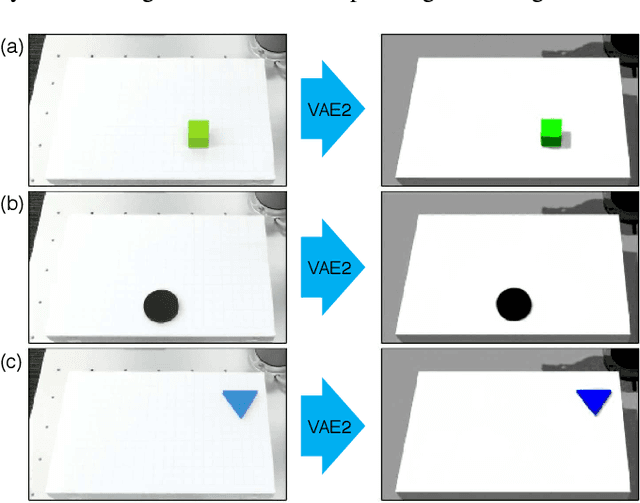

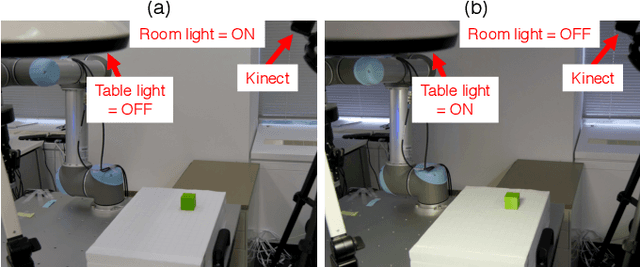

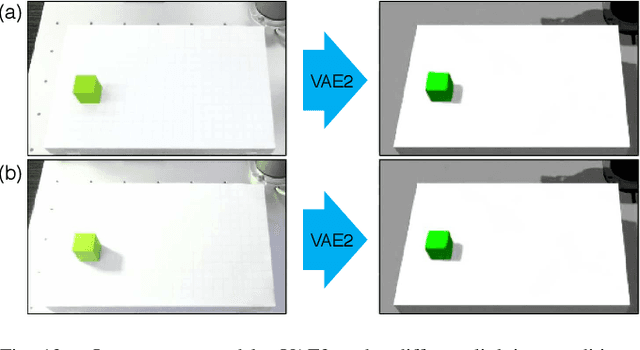

Transfer learning from synthetic to real images using variational autoencoders for robotic applications

Sep 20, 2017

Abstract:Robotic learning in simulation environments provides a faster, more scalable, and safer training methodology than learning directly with physical robots. Also, synthesizing images in a simulation environment for collecting large-scale image data is easy, whereas capturing camera images in the real world is time consuming and expensive. However, learning from only synthetic images may not achieve the desired performance in real environments due to the gap between synthetic and real images. We thus propose a method that transfers learned capability of detecting object position from a simulation environment to the real world. Our method enables us to use only a very limited dataset of real images while leveraging a large dataset of synthetic images using multiple variational autoencoders. It detects object positions 6 to 7 times more precisely than the baseline of directly learning from the dataset of the real images. Object position estimation under varying environmental conditions forms one of the underlying requirement for standard robotic manipulation tasks. We show that the proposed method performs robustly in different lighting conditions or with other distractor objects present for this requirement. Using this detected object position, we transfer pick-and-place or reaching tasks learned in a simulation environment to an actual physical robot without re-training.

Limiting the Reconstruction Capability of Generative Neural Network using Negative Learning

Aug 16, 2017

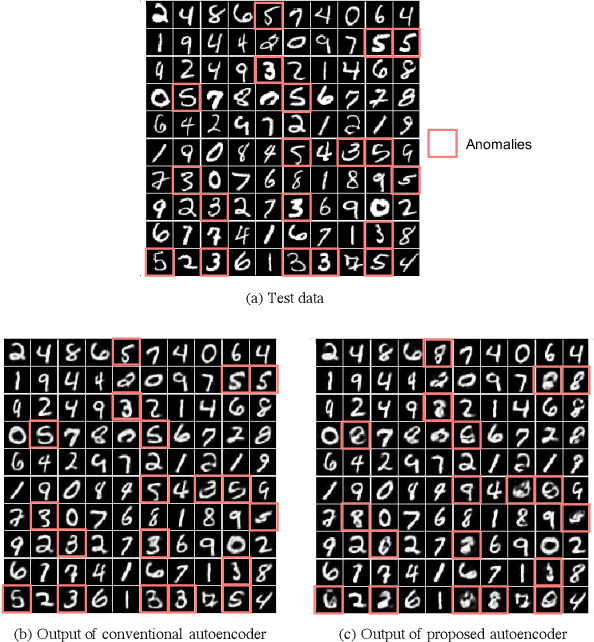

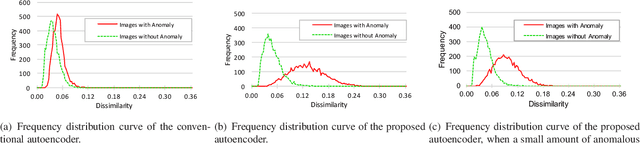

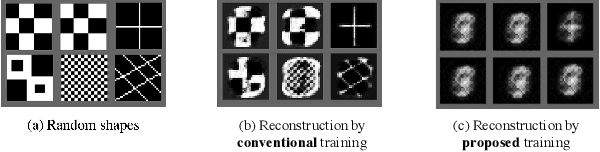

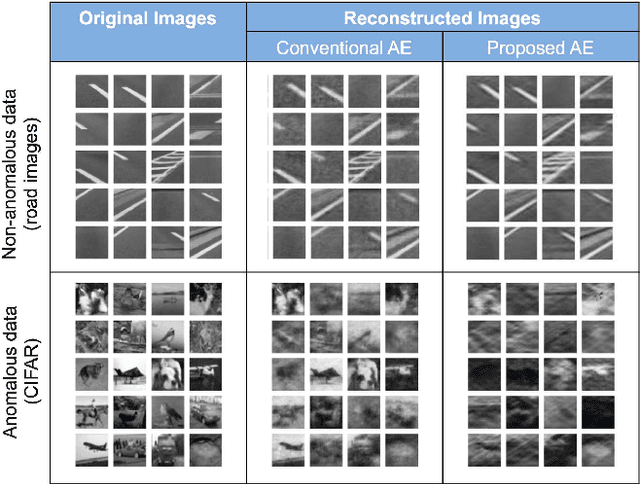

Abstract:Generative models are widely used for unsupervised learning with various applications, including data compression and signal restoration. Training methods for such systems focus on the generality of the network given limited amount of training data. A less researched type of techniques concerns generation of only a single type of input. This is useful for applications such as constraint handling, noise reduction and anomaly detection. In this paper we present a technique to limit the generative capability of the network using negative learning. The proposed method searches the solution in the gradient direction for the desired input and in the opposite direction for the undesired input. One of the application can be anomaly detection where the undesired inputs are the anomalous data. In the results section we demonstrate the features of the algorithm using MNIST handwritten digit dataset and latter apply the technique to a real-world obstacle detection problem. The results clearly show that the proposed learning technique can significantly improve the performance for anomaly detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge