François Pitié

LiteVPNet: A Lightweight Network for Video Encoding Control in Quality-Critical Applications

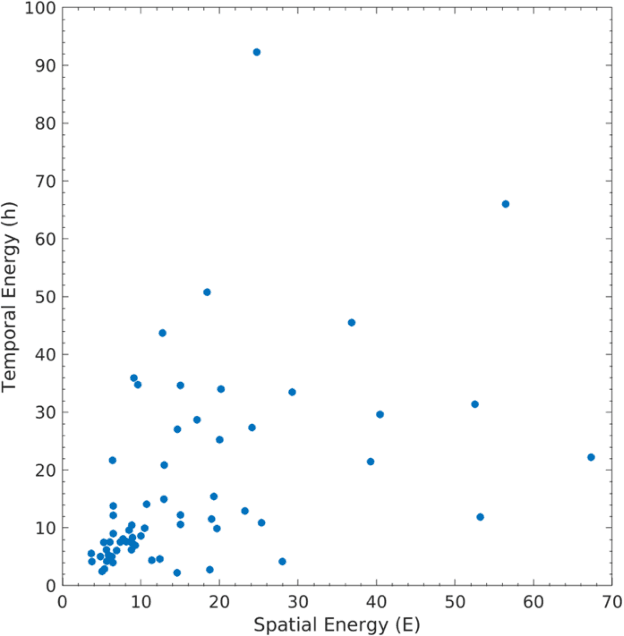

Oct 14, 2025

Abstract: In the last decade, video workflows in the cinema production ecosystem have presented new use cases for video streaming technology. These new workflows, e.g. in On-set Virtual Production, present the challenge of requiring precise quality control and energy efficiency. Existing approaches to transcoding often fall short of these requirements, either due to a lack of quality control or to computational overhead. To fill this gap, we present a lightweight neural network (LiteVPNet) for accurately predicting Quantisation Parameters for NVENC AV1 encoders that achieve a specified VMAF score. We use low-complexity features, including bitstream characteristics, video complexity measures, and CLIP-based semantic embeddings. Our results demonstrate that LiteVPNet achieves mean VMAF errors below 1.2 points across a wide range of quality targets. Notably, LiteVPNet achieves VMAF errors within 2 points for over 87% of our test corpus, compared with approximately 61% for state-of-the-art methods. LiteVPNet's performance across various quality regions highlights its applicability for enhancing high-value content transport and streaming for more energy-efficient, high-quality media experiences.
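The two accuracy figures quoted in this abstract (mean VMAF error, and the share of clips within 2 points of the target) can be illustrated with a minimal sketch. The function name `vmaf_error_stats`, the inclusive tolerance, and the sample scores are assumptions made for illustration; they are not taken from the paper.

```python
def vmaf_error_stats(target_vmaf, achieved_vmaf, tolerance=2.0):
    """Return (mean absolute VMAF error, fraction of clips within `tolerance`)."""
    errors = [abs(t - a) for t, a in zip(target_vmaf, achieved_vmaf)]
    mean_error = sum(errors) / len(errors)
    # Treating "within 2 points" as inclusive is an assumption of this sketch.
    within = sum(e <= tolerance for e in errors) / len(errors)
    return mean_error, within


# Hypothetical targets and measured scores, for illustration only.
targets = [95.0, 90.0, 85.0, 80.0]
achieved = [94.2, 91.1, 82.5, 80.4]
mean_error, within = vmaf_error_stats(targets, achieved)
print(f"mean error: {mean_error:.2f}, within tolerance: {within:.0%}")
```

With these made-up numbers, three of the four clips land within the tolerance.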

Demystifying the use of Compression in Virtual Production

Nov 01, 2024

Abstract: Virtual Production (VP) technologies have continued to improve the flexibility of on-set filming and enhance the live concert experience. The core technology of VP relies on high-resolution, high-brightness LED panels to play back/render video content. There are a number of technical challenges to effective deployment, e.g. image tile synchronisation across the panels, cross-panel colour balancing, and compensating for colour fluctuations due to changes in camera angles. Given the complexity and potential quality degradation, the industry prefers "pristine" or lossless compressed source material for displays, which requires significant storage and bandwidth. Modern lossy compression standards like AV1 or H.265 could maintain the same quality at significantly lower bitrates and resource demands. There is as yet no agreed methodology for assessing the impact of these standards on quality when the VP scene is recorded in-camera. We present a methodology to assess this impact by comparing lossless and lossy compressed footage displayed through VP screens and recorded in-camera. We assess the quality impact of HAP/NotchLC/Daniel2 and AV1/HEVC/H.264 compression at bitrates from 2 Mb/s to 2000 Mb/s with various GOP sizes. Several perceptual quality metrics are then used to automatically evaluate in-camera picture quality, referencing the original uncompressed source content through the LED wall. Our results show that we can achieve the same quality with hybrid codecs as with intermediate encoders at orders of magnitude lower bitrate and storage requirements.

Lightweight Video Denoising Using a Classic Bayesian Backbone

Aug 07, 2024

Abstract: In recent years, state-of-the-art image and video denoising networks have become increasingly large, requiring millions of trainable parameters to achieve best-in-class performance. Improved denoising quality has come at the cost of denoising speed, where modern transformer networks are far slower to run than smaller denoising networks such as FastDVDnet and classic Bayesian denoisers such as the Wiener filter. In this paper, we implement a hybrid Wiener filter which leverages small ancillary networks to increase the original denoiser performance, while retaining fast denoising speeds. These networks are used to refine the Wiener coring estimate, optimise windowing functions and estimate the unknown noise profile. Using these methods, we outperform several popular denoisers and remain within 0.2 dB, on average, of the popular VRT transformer. Our method was found to be more than 10 times faster than the transformer method, with a far lower parameter cost.

Unravelling the Power of Single-Pass Look-Ahead in Modern Codecs for Optimized Transcoding Deployment

Apr 08, 2024

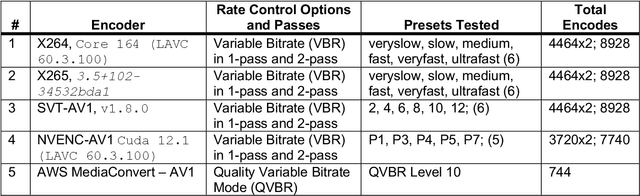

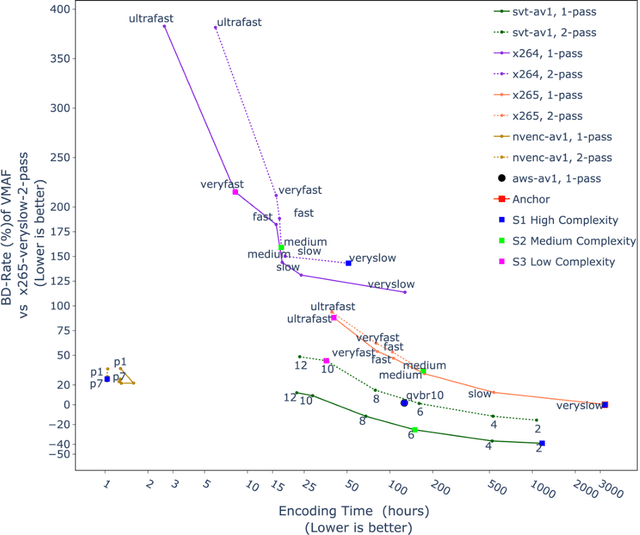

Abstract: Modern video encoders have evolved into sophisticated pieces of software in which various coding tools interact with each other. In the past, single-pass encoding was not considered for Video-On-Demand (VOD) use cases. In this work, we evaluate production-ready encoders for H.264 (x264), H.265 (HEVC), and AV1 (SVT-AV1), along with direct comparisons to the latest AV1 encoder inside NVIDIA GPUs (40 series) and AWS MediaConvert's AV1 implementation. Our experimental results demonstrate that single-pass encoding inside modern encoder implementations can give very good quality at a reasonable compute cost. The results are presented as three different scenarios targeting High, Medium, and Low complexity, accounting for quality, bitrate, and compute load. Finally, a set of recommendations is presented for end-users to help decide which encoder/preset combination might be more suited to their use case.

Subjective assessment of the impact of a content adaptive optimiser for compressing 4K HDR content with AV1

Jun 26, 2023

Abstract: Since 2015, video dimensionality has expanded to higher spatial and temporal resolutions and a wider colour gamut. This High Dynamic Range (HDR) content has gained traction in the consumer space as it delivers an enhanced quality of experience. At the same time, the complexity of codecs is growing. This has driven the development of tools for content-adaptive optimisation that achieve optimal rate-distortion performance for HDR video at 4K resolution. While improvements of just a few percentage points in BD-Rate (1-5%) are significant for the streaming media industry, the impact on subjective quality has been less studied, especially for HDR/AV1. In this paper, we conduct a subjective quality assessment (42 subjects) of 4K HDR content with a per-clip optimisation strategy. We correlate these subjective scores with existing popular objective metrics used in standard development and show that some perceptual metrics correlate surprisingly well even though they are not tuned for HDR. We find that the DSCQS protocol is too insensitive to categorically compare the methods, but the data allows us to make recommendations about the use of experts vs non-experts in HDR studies, and to explain the subjective impact of film grain in HDR content under compression.

Recommendations for Verifying HDR Subjective Testing Workflows

May 19, 2023

Abstract: Over the past few years, there has been an increase in the demand and availability of High Dynamic Range (HDR) displays and content. To ensure the production of high-quality materials, human evaluation is required. However, ascertaining whether the full playback pipeline is indeed HDR-compliant can be challenging. In this paper, we present a set of recommendations for conformance testing to validate various aspects of the testing workflow, including playback, displays, brightness, colours, and viewing environment. We assess the effectiveness of HDR conversion techniques used in current standards development (3GPP) for making source materials. Additionally, we evaluate HDR display technologies, including OLED and LCD, using both consumer television and a reference monitor.

Filling the gaps in video transcoder deployment in the cloud

Apr 17, 2023

Abstract: Cloud-based deployment of content production and broadcast workflows has continued to disrupt the industry after the pandemic. The key tools required for unlocking cloud workflows, e.g., transcoding, metadata parsing, and streaming playback, are increasingly commoditized. However, as video traffic continues to increase, there is a need to consider tools which offer opportunities for further bitrate/quality gains as well as those which facilitate cloud deployment. In this paper, we consider preprocessing, rate/distortion optimisation and cloud cost prediction tools which are only just emerging from the research community. These tools are posed as part of the per-clip optimisation approach to transcoding which has been adopted by large streaming media processing entities but has yet to be made more widely available for the industry.

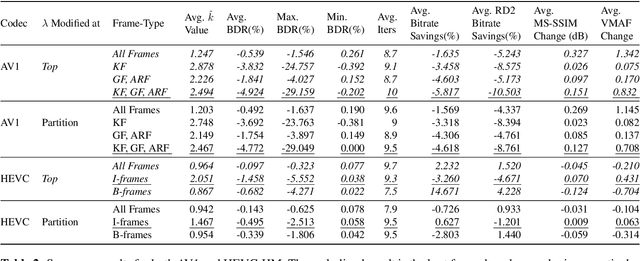

Comparison of HDR quality metrics in Per-Clip Lagrangian multiplier optimisation with AV1

Mar 28, 2023

Abstract: The complexity of modern codecs, along with the increased need to deliver high-quality videos at low bitrates, has reinforced the idea of a per-clip tailoring of parameters for optimised rate-distortion performance. While the objective quality metrics used for Standard Dynamic Range (SDR) videos have been well studied, the transition of consumer displays to support High Dynamic Range (HDR) videos poses a new challenge to rate-distortion optimisation. In this paper, we review the popular HDR metrics DeltaE100 (DE100), PSNRL100, wPSNR, and HDR-VQM. We measure the impact of employing these metrics in per-clip direct search optimisation of the rate-distortion Lagrange multiplier in AV1. We report, on 35 HDR videos, average Bjontegaard Delta Rate (BD-Rate) gains of 4.675%, 2.226%, and 7.253% in terms of DE100, PSNRL100, and HDR-VQM, respectively. We also show that the inclusion of chroma in the quality metrics has a significant impact on optimisation, which can only be partially addressed by the use of chroma offsets.

Pushing The Limits of the Wiener Filter in Image Denoising

Mar 27, 2023

Abstract: As modern image denoiser networks have grown in size, their reported performance in popular real-noise benchmarks such as DND and SIDD has now long outperformed classic non-deep-learning denoisers such as Wiener and Wavelet-based methods. In this paper, we propose to revisit the Wiener filter and re-assess its potential performance. We show that carefully considering the implementation of the Wiener filter can yield similar performance to popular networks such as DnCNN.
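For readers unfamiliar with the classic filter this abstract revisits, the following is a minimal textbook sketch of an empirical frequency-domain Wiener filter, not the paper's implementation. It assumes a 1D signal, additive white Gaussian noise with a known standard deviation `sigma`, and a naive DFT so that the example needs only the standard library; all function names are illustrative.

```python
import cmath
import math
import random


def dft(x, inverse=False):
    """Naive O(n^2) DFT: unnormalised forward transform, 1/n-scaled inverse."""
    n = len(x)
    sign = 1.0 if inverse else -1.0
    out = []
    for k in range(n):
        acc = 0j
        for t in range(n):
            acc += x[t] * cmath.exp(sign * 2j * math.pi * k * t / n)
        out.append(acc / n if inverse else acc)
    return out


def wiener_denoise(noisy, sigma):
    """Empirical Wiener filter for white noise of standard deviation `sigma`."""
    n = len(noisy)
    spectrum = dft(noisy)
    noise_power = n * sigma ** 2  # expected |N_k|^2 for an unnormalised DFT
    filtered = []
    for coeff in spectrum:
        power = abs(coeff) ** 2
        # Gain S / (S + N), with the signal power S estimated as max(|Y|^2 - N, 0).
        gain = max(1.0 - noise_power / power, 0.0) if power > 0 else 0.0
        filtered.append(gain * coeff)
    return [v.real for v in dft(filtered, inverse=True)]


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(0)
n, sigma = 128, 0.2
clean = [math.sin(2 * math.pi * 3 * t / n) + 0.5 * math.cos(2 * math.pi * 7 * t / n)
         for t in range(n)]
noisy = [c + random.gauss(0.0, sigma) for c in clean]
denoised = wiener_denoise(noisy, sigma)
print(f"MSE noisy: {mse(clean, noisy):.4f}, MSE denoised: {mse(clean, denoised):.4f}")
```

The per-coefficient gain is where most of the design freedom lies: how the signal power is estimated and how the data is windowed are the kinds of implementation details the abstract refers to.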

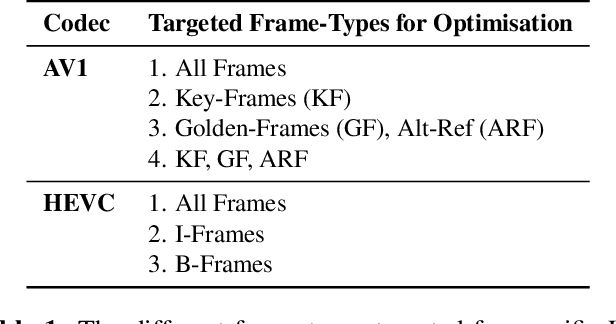

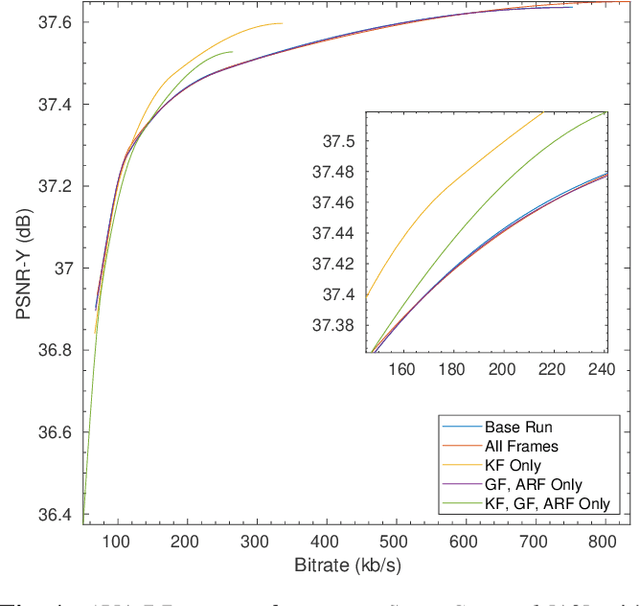

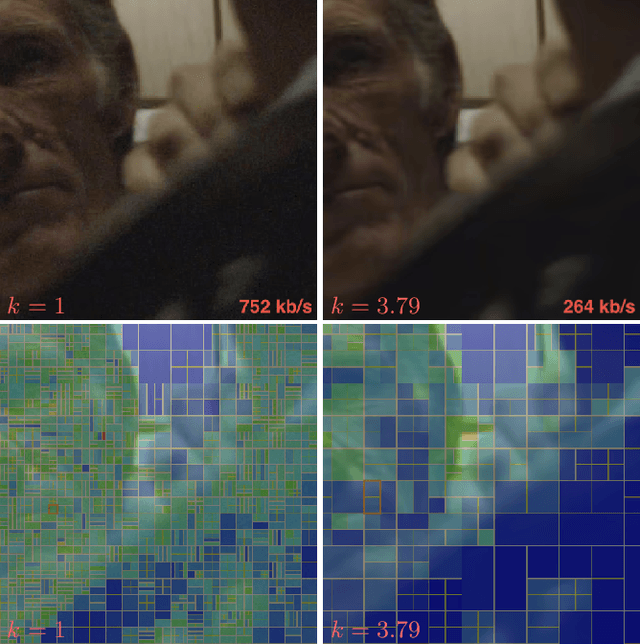

Frame-type Sensitive RDO Control for Content-Adaptive-encoding

Jun 23, 2022

Abstract: Video transcoding is an increasingly important application in the streaming media industry. It has become important to investigate the optimisation of transcoder parameters for a single clip simply because of the immense number of playbacks for popular clips. In this paper, we explore the use of a canned optimiser to estimate the optimal RD tradeoff achievable for a particular clip. We show that by adjusting the Lagrange multiplier in RD optimisation on keyframes alone we can achieve more than 10× the previous BD-Rate gains possible without affecting quality for any operating point.
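As a reminder of the quantity being tuned, the standard rate-distortion cost and a keyframe-only adjustment can be written as below. This is a sketch under stated assumptions: the symbols $J$, $D$, $R$, $\lambda_0$, the scaling factor $k$, and the search objective are notation chosen here for illustration and are not taken from the paper.

```latex
% Rate-distortion cost minimised by the encoder for each coding decision:
J = D + \lambda R

% Keyframe-only adjustment: scale the encoder's default multiplier \lambda_0
% by a per-clip factor k on keyframes and leave all other frames unchanged.
\lambda_f =
\begin{cases}
  k \, \lambda_0 & \text{if frame } f \text{ is a keyframe} \\
  \lambda_0      & \text{otherwise}
\end{cases}

% Per-clip search: choose the k that gives the best (most negative) BD-Rate
% relative to the default encode, for which k = 1.
k^{*} = \arg\min_{k} \ \text{BD-Rate}(k)
```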
