Farida Farsian

Multivariate time-series forecasting of ASTRI-Horn monitoring data: A Normal Behavior Model

Feb 23, 2026Abstract:This study presents a Normal Behavior Model (NBM) developed to forecast monitoring time-series data from the ASTRI-Horn Cherenkov telescope under normal operating conditions. The analysis focused on 15 physical variables acquired by the Telescope Control Unit between September 2022 and July 2024, representing sensor measurements from the Azimuth and Elevation motors. After data cleaning, resampling, feature selection, and correlation analysis, the dataset was segmented into fixed-length intervals, in which the first I samples represented the input sequence provided to the model, while the forecast length, T, indicated the number of future time steps to be predicted. A sliding-window technique was then applied to increase the number of intervals. A Multi-Layer Perceptron (MLP) was trained to perform multivariate forecasting across all features simultaneously. Model performance was evaluated using the Mean Squared Error (MSE) and the Normalized Median Absolute Deviation (NMAD), and it was also benchmarked against a Long Short-Term Memory (LSTM) network. The MLP model demonstrated consistent results across different features and I-T configurations, and matched the performance of the LSTM while converging faster. It achieved an MSE of 0.019+/-0.003 and an NMAD of 0.032+/-0.009 on the test set under its best configuration (4 hidden layers, 720 units per layer, and I-T lengths of 300 samples each, corresponding to 5 hours at 1-minute resolution). Extending the forecast horizon up to 6.5 hours-the maximum allowed by this configuration-did not degrade performance, confirming the model's effectiveness in providing reliable hour-scale predictions. The proposed NBM provides a powerful tool for enabling early anomaly detection in online ASTRI-Horn monitoring time series, offering a basis for the future development of a prognostics and health management system that supports predictive maintenance.

* 15 pages, 12 figures

Benchmarking Quantum Convolutional Neural Networks for Signal Classification in Simulated Gamma-Ray Burst Detection

Jan 28, 2025

Abstract:This study evaluates the use of Quantum Convolutional Neural Networks (QCNNs) for identifying signals resembling Gamma-Ray Bursts (GRBs) within simulated astrophysical datasets in the form of light curves. The task addressed here focuses on distinguishing GRB-like signals from background noise in simulated Cherenkov Telescope Array Observatory (CTAO) data, the next-generation astrophysical observatory for very high-energy gamma-ray science. QCNNs, a quantum counterpart of classical Convolutional Neural Networks (CNNs), leverage quantum principles to process and analyze high-dimensional data efficiently. We implemented a hybrid quantum-classical machine learning technique using the Qiskit framework, with the QCNNs trained on a quantum simulator. Several QCNN architectures were tested, employing different encoding methods such as Data Reuploading and Amplitude encoding. Key findings include that QCNNs achieved accuracy comparable to classical CNNs, often surpassing 90\%, while using fewer parameters, potentially leading to more efficient models in terms of computational resources. A benchmark study further examined how hyperparameters like the number of qubits and encoding methods affected performance, with more qubits and advanced encoding methods generally enhancing accuracy but increasing complexity. QCNNs showed robust performance on time-series datasets, successfully detecting GRB signals with high precision. The research is a pioneering effort in applying QCNNs to astrophysics, offering insights into their potential and limitations. This work sets the stage for future investigations to fully realize the advantages of QCNNs in astrophysical data analysis.

Inpainting via Generative Adversarial Networks for CMB data analysis

Apr 21, 2020

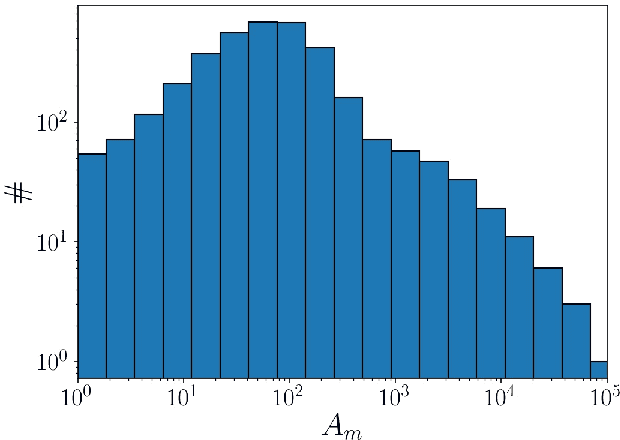

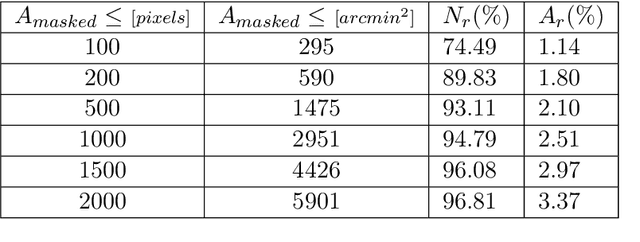

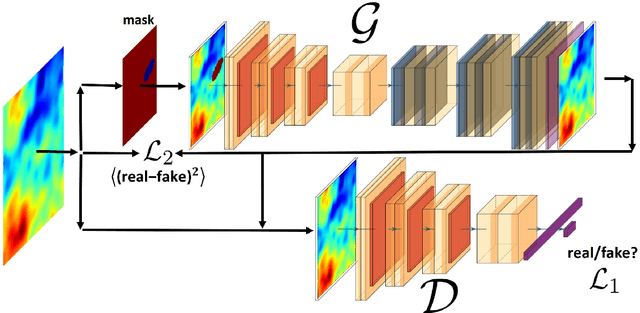

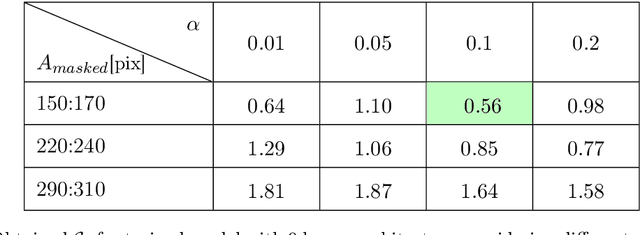

Abstract:In this work, we propose a new method to inpaint the CMB signal in regions masked out following a point source extraction process. We adopt a modified Generative Adversarial Network (GAN) and compare different combinations of internal (hyper-)parameters and training strategies. We study the performance using a suitable $\mathcal{C}_r$ variable in order to estimate the performance regarding the CMB power spectrum recovery. We consider a test set where one point source is masked out in each sky patch with a 1.83 $\times$ 1.83 squared degree extension, which, in our gridding, corresponds to 64 $\times$ 64 pixels. The GAN is optimized for estimating performance on Planck 2018 total intensity simulations. The training makes the GAN effective in reconstructing a masking corresponding to about 1500 pixels with $1\%$ error down to angular scales corresponding to about 5 arcminutes.

Foreground model recognition through Neural Networks for CMB B-mode observations

Mar 04, 2020

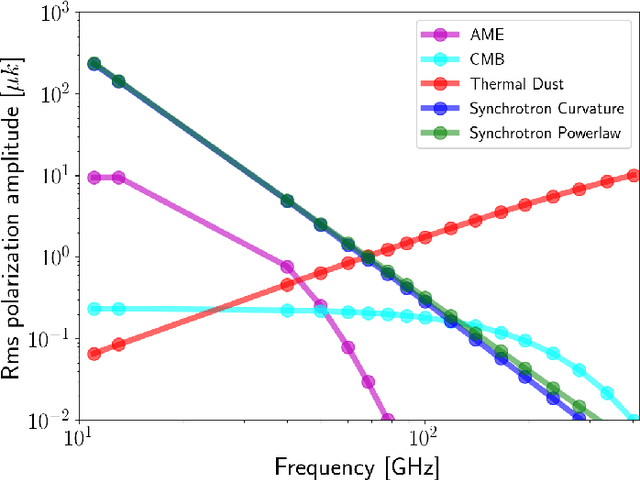

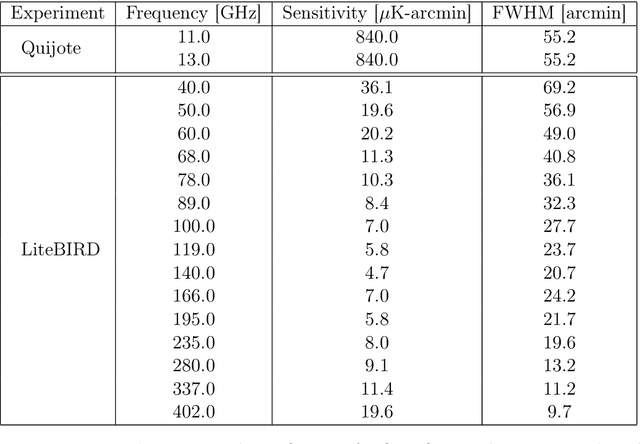

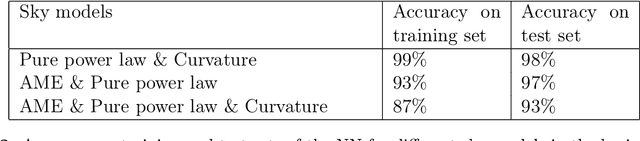

Abstract:In this work we present a Neural Network (NN) algorithm for the identification of the appropriate parametrization of diffuse polarized Galactic emissions in the context of Cosmic Microwave Background (CMB) $B$-mode multi-frequency observations. In particular, we have focused our analysis on low frequency foregrounds relevant for polarization observation: namely Galactic Synchrotron and Anomalous Microwave Emission (AME). We have implemented and tested our approach on a set of simulated maps corresponding to the frequency coverage and sensitivity represented by future satellite and low frequency ground based probes. The NN efficiency in recognizing the right parametrization of foreground emission in different sky regions reaches an accuracy of about $90\%$. We have compared this performance with the $\chi^{2}$ information following parametric foreground estimation using multi-frequency fitting, and quantify the gain provided by a NN approach. Our results show the relevance of model recognition in CMB $B$-mode observations, and highlight the exploitation of dedicated procedures to this purpose.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge