Electron Kebebew

Convolutional Invasion and Expansion Networks for Tumor Growth Prediction

Jan 25, 2018

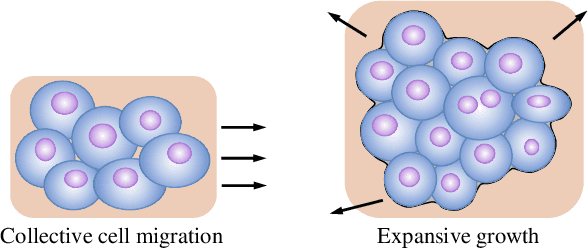

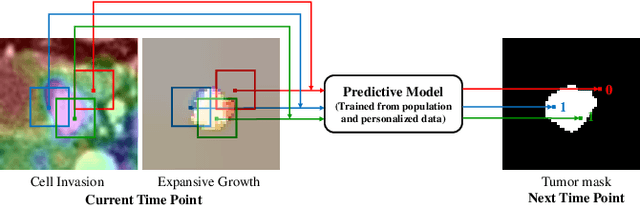

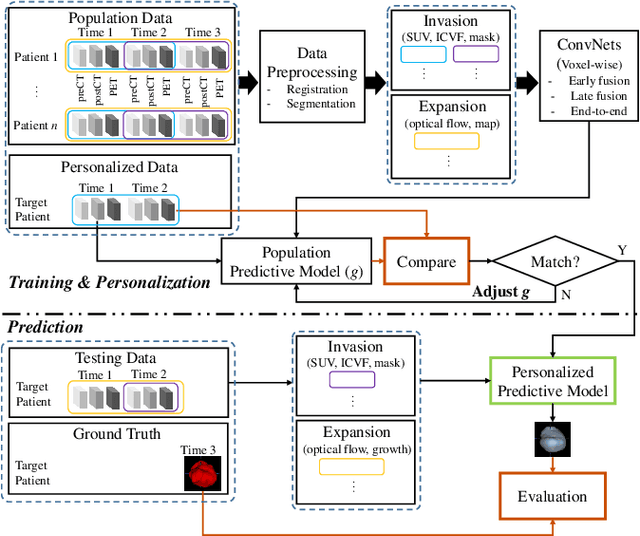

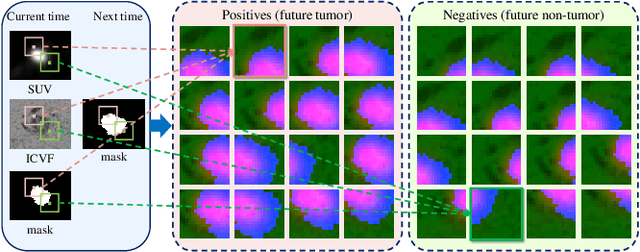

Abstract:Tumor growth is associated with cell invasion and mass-effect, which are traditionally formulated by mathematical models, namely reaction-diffusion equations and biomechanics. Such models can be personalized based on clinical measurements to build the predictive models for tumor growth. In this paper, we investigate the possibility of using deep convolutional neural networks (ConvNets) to directly represent and learn the cell invasion and mass-effect, and to predict the subsequent involvement regions of a tumor. The invasion network learns the cell invasion from information related to metabolic rate, cell density and tumor boundary derived from multimodal imaging data. The expansion network models the mass-effect from the growing motion of tumor mass. We also study different architectures that fuse the invasion and expansion networks, in order to exploit the inherent correlations among them. Our network can easily be trained on population data and personalized to a target patient, unlike most previous mathematical modeling methods that fail to incorporate population data. Quantitative experiments on a pancreatic tumor data set show that the proposed method substantially outperforms a state-of-the-art mathematical model-based approach in both accuracy and efficiency, and that the information captured by each of the two subnetworks are complementary.

Personalized Pancreatic Tumor Growth Prediction via Group Learning

Jun 01, 2017

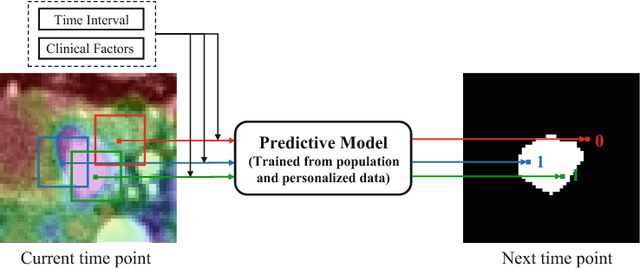

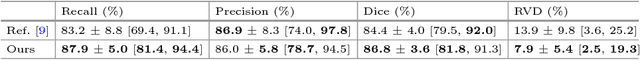

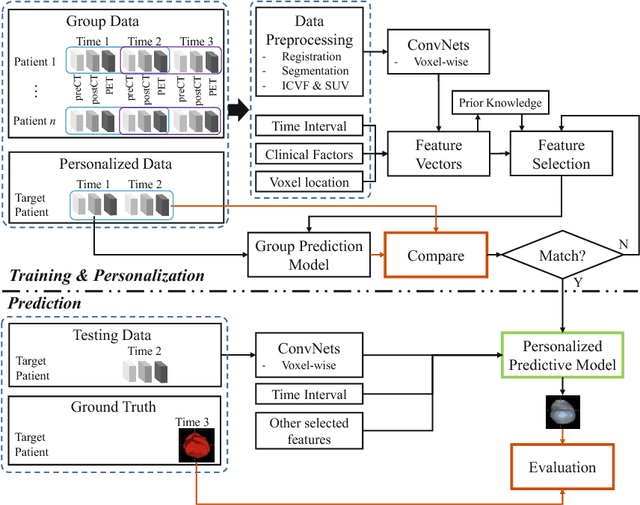

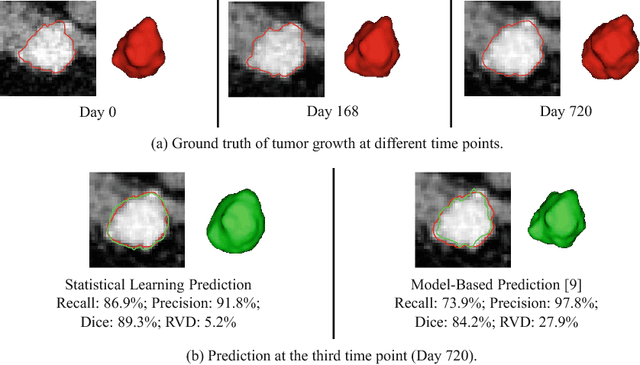

Abstract:Tumor growth prediction, a highly challenging task, has long been viewed as a mathematical modeling problem, where the tumor growth pattern is personalized based on imaging and clinical data of a target patient. Though mathematical models yield promising results, their prediction accuracy may be limited by the absence of population trend data and personalized clinical characteristics. In this paper, we propose a statistical group learning approach to predict the tumor growth pattern that incorporates both the population trend and personalized data, in order to discover high-level features from multimodal imaging data. A deep convolutional neural network approach is developed to model the voxel-wise spatio-temporal tumor progression. The deep features are combined with the time intervals and the clinical factors to feed a process of feature selection. Our predictive model is pretrained on a group data set and personalized on the target patient data to estimate the future spatio-temporal progression of the patient's tumor. Multimodal imaging data at multiple time points are used in the learning, personalization and inference stages. Our method achieves a Dice coefficient of 86.8% +- 3.6% and RVD of 7.9% +- 5.4% on a pancreatic tumor data set, outperforming the DSC of 84.4% +- 4.0% and RVD 13.9% +- 9.8% obtained by a previous state-of-the-art model-based method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge