Elaine Wong

Uncertainty Quantification for Quantum Computing

Mar 26, 2026

Abstract: This review introduces mathematicians and computational scientists to quantum computing (QC) through the lens of uncertainty quantification (UQ), presenting a mathematically rigorous and accessible narrative for understanding how noise and intrinsic randomness shape quantum computational outcomes. By grounding quantum computation in statistical inference, we highlight how mathematical tools such as probabilistic modeling, stochastic analysis, Bayesian inference, and sensitivity analysis can directly address error propagation and reliability challenges in today's quantum devices. We also connect these methods to key scientific priorities in the field, including scalable uncertainty-aware algorithms and the characterization of correlated errors. The purpose is to narrow the conceptual divide between applied mathematics, scientific computing, and quantum information science by demonstrating how mathematically rooted UQ methodologies can guide validation, error mitigation, and principled algorithm design for emerging quantum technologies, and thereby address the challenges and opportunities present in modern quantum high-performance computing and fault-tolerant quantum computing paradigms.

Scalable Coordinated Learning for H2M/R Applications over Optical Access Networks (Invited)

Feb 27, 2025

Abstract: One of the primary research interests in next-generation fiber-wireless access networks is human-to-machine/robot (H2M/R) collaborative communications for Industry 5.0. This paper discusses scalable H2M/R communications across large geographical distances that also allow rapid onboarding of new machines/robots, as $\sim72\%$ of training time is saved through global-local coordinated learning.

Centralized and Decentralized Non-Cooperative Load-Balancing Games among Competing Cloudlets

May 31, 2020

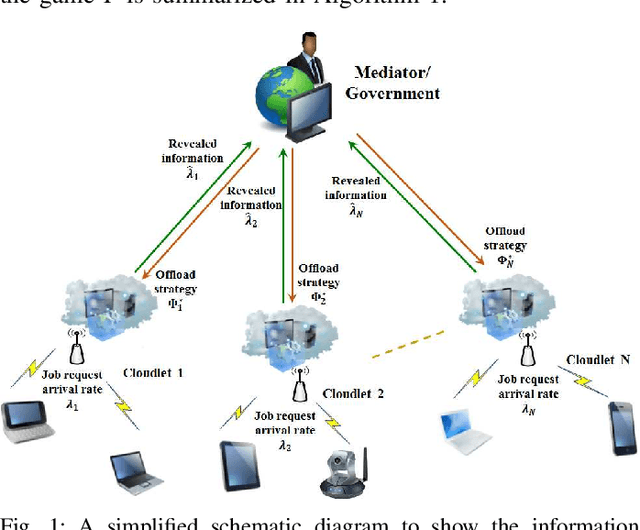

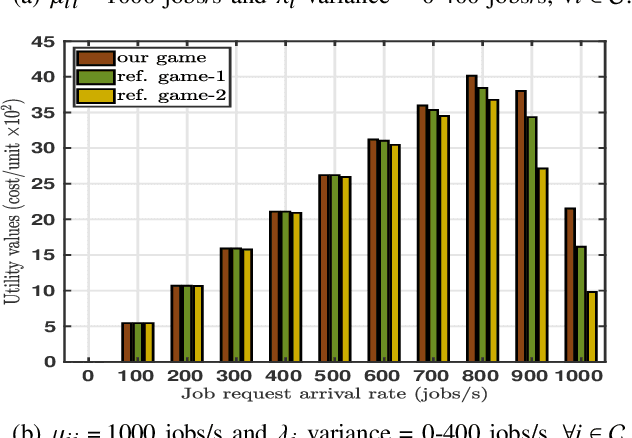

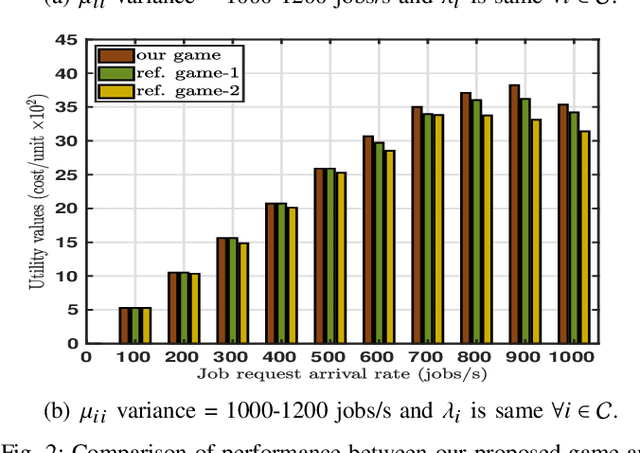

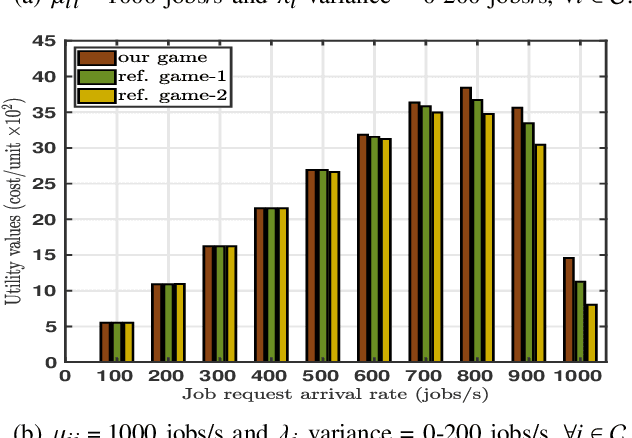

Abstract: Edge computing servers such as cloudlets, operated by different service providers, compensate for the scarce computational, memory, and energy resources of mobile devices and are distributed across access networks. However, depending on the mobility patterns and dynamically varying computational requirements of the associated mobile devices, cloudlets in different parts of the network become either overloaded or under-loaded. Hence, load balancing among neighboring cloudlets is an essential research problem. Nonetheless, the existing load balancing frameworks are unsuitable for low-latency applications. Thus, in this paper, we propose an economic and non-cooperative load balancing game for low-latency applications among neighboring cloudlets from the same as well as different service providers. Firstly, we propose a centralized incentive mechanism to compute the unique Nash equilibrium load balancing strategies of the cloudlets under the supervision of a neutral mediator. With this mechanism, we ensure that truthful revelation of private information to the mediator is a weakly-dominant strategy for both the under-loaded and overloaded cloudlets. Secondly, we propose a continuous-action reinforcement learning automata-based algorithm, which allows each cloudlet to independently compute the Nash equilibrium in a completely distributed network setting. We critically study the convergence properties of the designed learning algorithm, building on our understanding of the underlying load balancing game to achieve faster convergence. Furthermore, through extensive simulations, we study the impacts of exploration and exploitation on learning accuracy. This is the first study to show the effectiveness of reinforcement learning algorithms for load balancing games among neighboring cloudlets.
