Dongbin Xiu

Learning Fine Scale Dynamics from Coarse Observations via Inner Recurrence

Jun 03, 2022

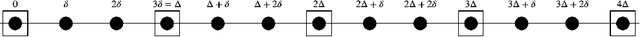

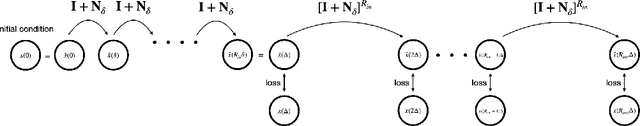

Abstract: Recent work has focused on data-driven learning of the evolution of unknown systems via deep neural networks (DNNs), with the goal of conducting long-term prediction of the dynamics of the unknown system. In many real-world applications, data from time-dependent systems are often collected on a time scale that is coarser than desired, due to various restrictions during the data acquisition process. Consequently, the observed dynamics can be severely under-sampled and do not reflect the true dynamics of the underlying system. This paper presents a computational technique to learn the fine-scale dynamics from such coarsely observed data. The method employs inner recurrence of a DNN to recover the fine-scale evolution operator of the underlying system. In addition to mathematical justification, several challenging numerical examples, including unknown systems of both ordinary and partial differential equations, are presented to demonstrate the effectiveness of the proposed method.
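The idea of inner recurrence can be illustrated with a toy sketch. This is not the paper's implementation: the DNN is replaced by a one-parameter linear map, and the system `dx/dt = -x`, the names `compose`, `K`, `fine_dt`, and the learning rate are all assumptions chosen for illustration. The fine-scale step is applied `K` times inside the loss, so that only coarse observations are needed for training.

```python
import math

def compose(step, k):
    """Return the map obtained by applying `step` k times (inner recurrence)."""
    def composed(x):
        for _ in range(k):
            x = step(x)
        return x
    return composed

# Hypothetical toy system dx/dt = -x, observed only every coarse_dt = K * fine_dt.
K, fine_dt = 4, 0.1
coarse_dt = K * fine_dt
x0 = [0.5 + 0.1 * i for i in range(11)]       # states at coarse time t
x1 = [x * math.exp(-coarse_dt) for x in x0]   # states at coarse time t + coarse_dt

# One-parameter stand-in for the DNN: fine-scale step x -> w * x.
w, lr = 1.0, 0.05
for _ in range(200):
    # Gradient of mean((w**K * a - b)**2) with respect to w.
    grad = sum(
        2 * (w**K * a - b) * K * w ** (K - 1) * a for a, b in zip(x0, x1)
    ) / len(x0)
    w -= lr * grad

# The learned fine step, recursed K times, reproduces the coarse observation.
coarse_pred = compose(lambda x: w * x, K)(1.0)
```

In this toy setting the fitted `w` approaches `exp(-fine_dt)`, the exact fine-scale evolution factor, even though no data at the fine time step was used.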

Deep Learning of Chaotic Systems from Partially-Observed Data

May 12, 2022

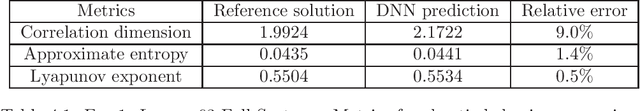

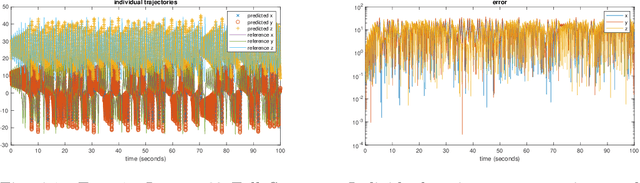

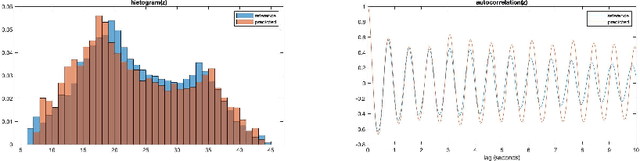

Abstract: Recently, a general data-driven numerical framework has been developed for learning and modeling of unknown dynamical systems using fully- or partially-observed data. The method utilizes deep neural networks (DNNs) to construct a model for the flow map of the unknown system. Once an accurate DNN approximation of the flow map is constructed, it can be recursively executed to serve as an effective predictive model of the unknown system. In this paper, we apply this framework to chaotic systems, in particular the well-known Lorenz 63 and 96 systems, and critically examine the predictive performance of the approach. A distinct feature of chaotic systems is that even the smallest perturbations will lead to large (albeit bounded) deviations in the solution trajectories. This makes long-term predictions of the method, or of any data-driven method, questionable, as the local model accuracy will eventually degrade and lead to large pointwise errors. Here we employ several other qualitative and quantitative measures to determine whether the chaotic dynamics have been learned. These include phase plots, histograms, autocorrelation, correlation dimension, approximate entropy, and Lyapunov exponent. Using these measures, we demonstrate that the flow map based DNN learning method is capable of accurately modeling chaotic systems, even when only a subset of the state variables is available to the DNNs. For example, for the Lorenz 96 system with 40 state variables, when data of only 3 variables are available, the method is able to learn an effective DNN model for the 3 variables and accurately reproduce the chaotic behavior of the system.

Robust Modeling of Unknown Dynamical Systems via Ensemble Averaged Learning

Mar 07, 2022

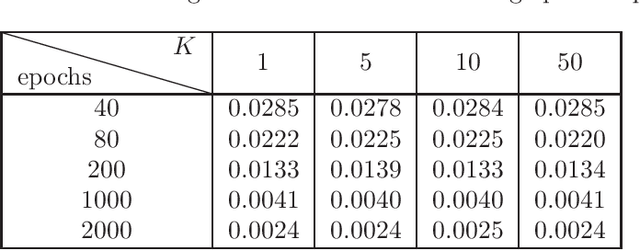

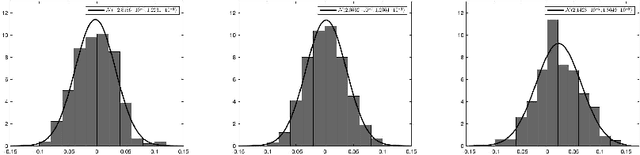

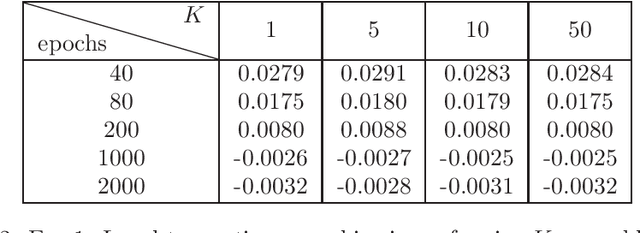

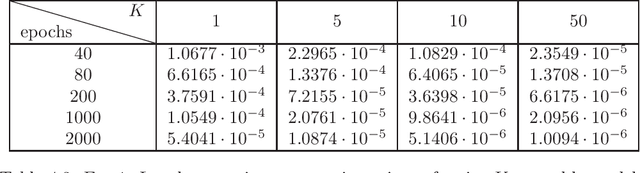

Abstract: Recent work has focused on data-driven learning of the evolution of unknown systems via deep neural networks (DNNs), with the goal of conducting long-time prediction of the evolution of the unknown system. Training a DNN with low generalization error is a particularly important task in this case as error is accumulated over time. Because of the inherent randomness in DNN training, chiefly in stochastic optimization, there is uncertainty in the resulting prediction, and therefore in the generalization error. Hence, the generalization error can be viewed as a random variable with some probability distribution. Well-trained DNNs, particularly those with many hyperparameters, typically result in probability distributions for generalization error with low bias but high variance. High variance causes variability and unpredictability in the results of a trained DNN. This paper presents a computational technique which decreases the variance of the generalization error, thereby improving the reliability of the DNN model to generalize consistently. In the proposed ensemble averaging method, multiple models are independently trained and model predictions are averaged at each time step. A mathematical foundation for the method is presented, including results regarding the distribution of the local truncation error. In addition, three time-dependent differential equation problems are considered as numerical examples, demonstrating the effectiveness of the method to decrease variance of DNN predictions generally.
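A minimal sketch of averaging at each time step, under stated assumptions: the independently trained DNNs are replaced by hypothetical one-step maps `x -> (0.9 + e) * x` with small hand-picked errors `e`, and the function name `ensemble_rollout` is illustrative rather than taken from the paper. The key point is that the averaged state, not each model's own state, is fed into the next step.

```python
def ensemble_rollout(models, x0, n_steps):
    """Advance the state by averaging the one-step predictions of all models.

    The averaged state is the input to every model at the next step.
    """
    x = x0
    trajectory = [x]
    for _ in range(n_steps):
        x = sum(m(x) for m in models) / len(models)
        trajectory.append(x)
    return trajectory

# Hypothetical stand-ins for independently trained DNNs: the exact one-step
# map x -> 0.9 * x, with a different small error in each model.
errors = [-0.02, -0.01, 0.0, 0.01, 0.02]
models = [lambda x, e=e: (0.9 + e) * x for e in errors]

averaged = ensemble_rollout(models, 1.0, 10)    # ensemble prediction
single = ensemble_rollout(models[:1], 1.0, 10)  # one model on its own
```

The errors here are chosen to cancel exactly, which is an idealization. With real trained models the average would only reduce the spread of the prediction error, not remove it.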

Modeling unknown dynamical systems with hidden parameters

Feb 03, 2022

Abstract: We present a data-driven numerical approach for modeling unknown dynamical systems with missing/hidden parameters. The method is based on training a deep neural network (DNN) model for the unknown system using its trajectory data. A key feature is that the unknown dynamical system contains system parameters that are completely hidden, in the sense that no information about the parameters is available through either the measurement trajectory data or our prior knowledge of the system. We demonstrate that by training a DNN using the trajectory data with sufficient time history, the resulting DNN model can accurately model the unknown dynamical system. For new initial conditions associated with new, and unknown, system parameters, the DNN model can produce accurate system predictions over longer times.

Deep Neural Network Modeling of Unknown Partial Differential Equations in Nodal Space

Jun 07, 2021

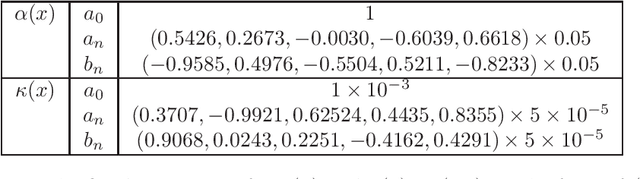

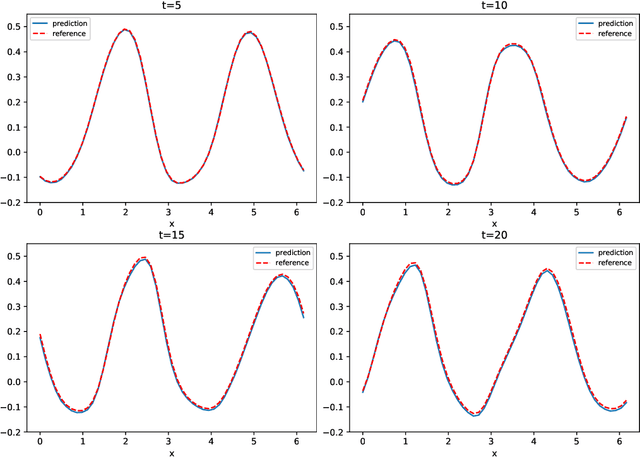

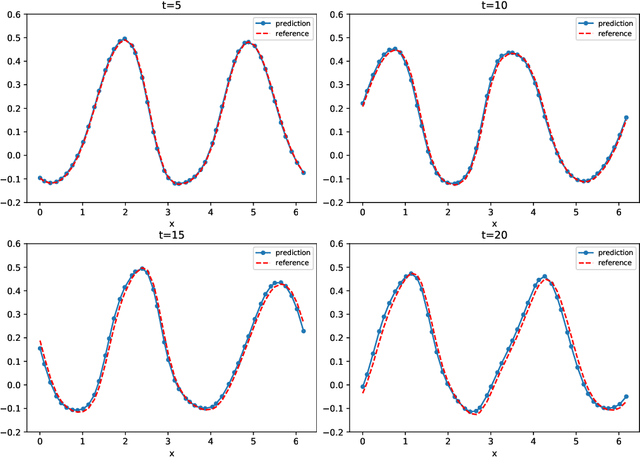

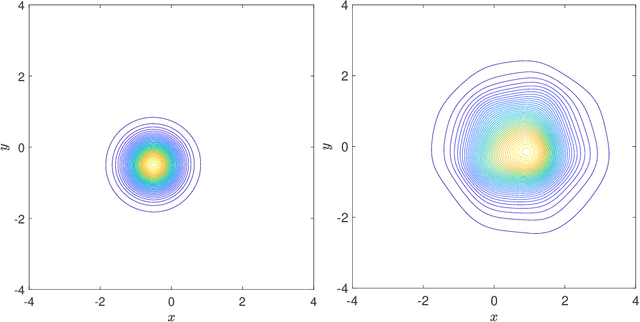

Abstract: We present a numerical framework for deep neural network (DNN) modeling of unknown time-dependent partial differential equations (PDEs) using their trajectory data. Unlike the recent work of [Wu and Xiu, J. Comput. Phys. 2020], where the learning takes place in modal/Fourier space, the current method conducts the learning and modeling in physical space and uses measurement data as nodal values. We present a DNN structure that has a direct correspondence to the evolution operator of the underlying PDE, thus establishing the existence of the DNN model. The DNN model also does not require any geometric information of the data nodes. Consequently, a trained DNN defines a predictive model for the underlying unknown PDE over structureless grids. A set of examples, including linear and nonlinear scalar PDEs and systems of PDEs, in both one dimension and two dimensions, over structured and unstructured grids, is presented to demonstrate the effectiveness of the proposed DNN modeling. Extension to other equations such as differential-integral equations is also discussed.

Data-driven learning of non-autonomous systems

Jun 02, 2020

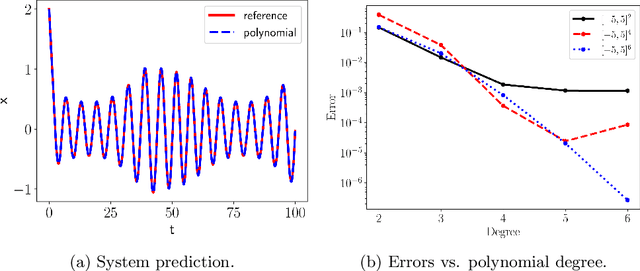

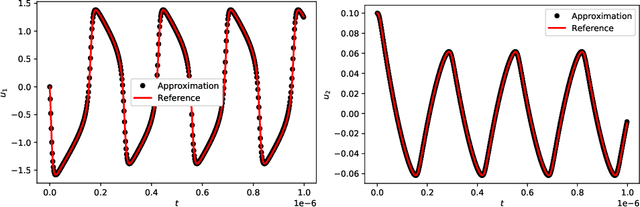

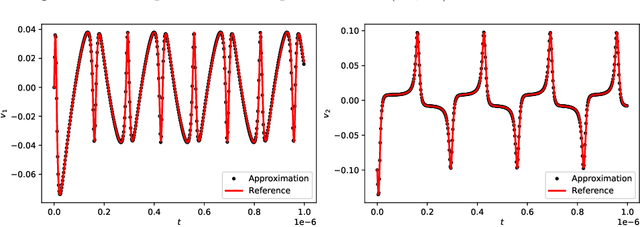

Abstract: We present a numerical framework for recovering unknown non-autonomous dynamical systems with time-dependent inputs. To circumvent the difficulty presented by the non-autonomous nature of the system, our method transforms the solution state into piecewise integration of the system over a discrete set of time instances. The time-dependent inputs are then locally parameterized by using a proper model, for example, polynomial regression, in the pieces determined by the time instances. This transforms the original system into a piecewise parametric system that is locally time invariant. We then design a deep neural network structure to learn the local models. Once the network model is constructed, it can be iteratively used over time to conduct global system prediction. We provide theoretical analysis of our algorithm and present a number of numerical examples to demonstrate the effectiveness of the method.

Learning reduced systems via deep neural networks with memory

Apr 19, 2020

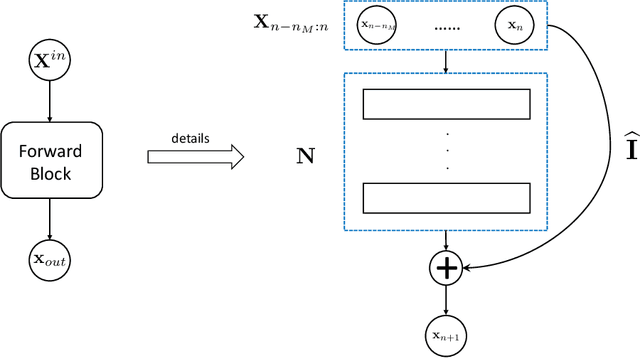

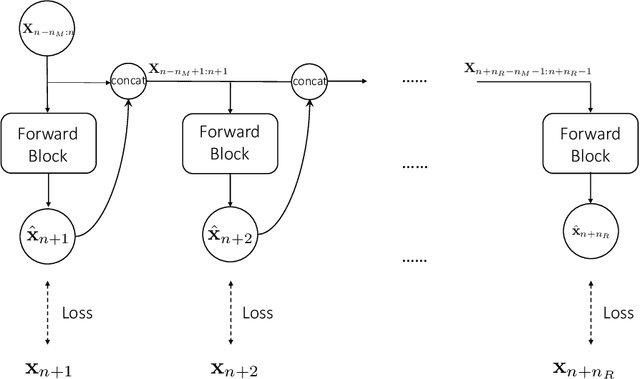

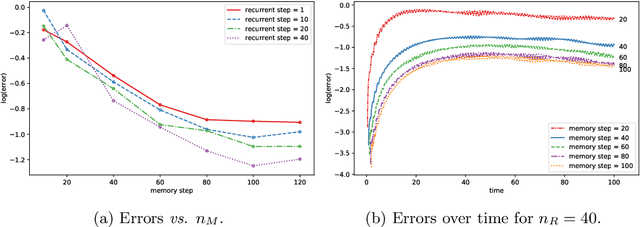

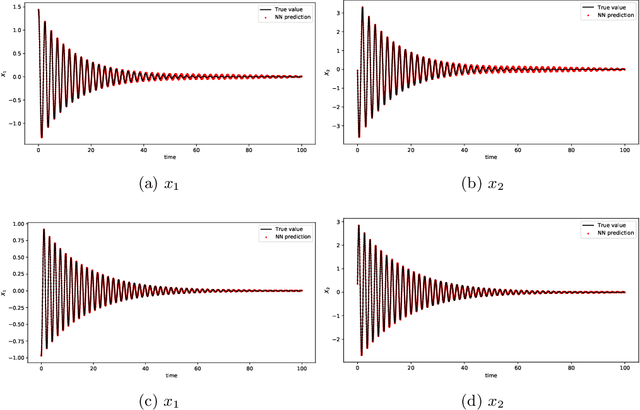

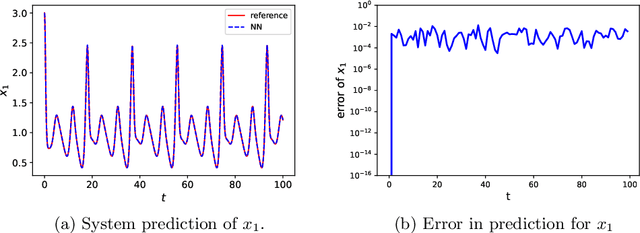

Abstract: We present a general numerical approach for constructing governing equations for unknown dynamical systems when only data on a subset of the state variables are available. The unknown equations for these observed variables are thus a reduced system of the complete set of state variables. Reduced systems possess memory integrals, based on the well-known Mori-Zwanzig (MZ) formalism. Our numerical strategy to recover the reduced system starts by formulating a discrete approximation of the memory integral in the MZ formulation. The resulting unknown approximate MZ equations are finite dimensional, in the sense that only a finite number of past history data are involved. We then present a deep neural network structure that directly incorporates the history terms to produce memory in the network. The approach is suitable for any practical system with finite memory length. We then use a set of numerical examples to demonstrate the effectiveness of our method.
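The role of the history terms can be seen in a small linear sketch. It is an illustration under assumptions, not the paper's network: the full system is taken to be the hypothetical oscillator `x' = v`, `v' = -x` with only `x` observed, the DNN is replaced by a two-coefficient least-squares fit, and `make_windows` is an illustrative helper name. The observed variable alone does not determine its next value, but the current value together with one past value does.

```python
import math

def make_windows(series, memory):
    """Pair each length-(memory + 1) history window with the value that follows it."""
    return [
        (tuple(series[i - memory : i + 1]), series[i + 1])
        for i in range(memory, len(series) - 1)
    ]

# Hypothetical full system: x' = v, v' = -x. Only x is observed.
dt = 0.1
x_obs = [math.cos(n * dt) for n in range(60)]

# With one memory term, fit x[n+1] ~ a * x[n] + b * x[n-1] by least squares
# (2x2 normal equations solved with Cramer's rule).
windows = make_windows(x_obs, memory=1)       # h = (previous, current)
s_pp = sum(h[0] * h[0] for h, _ in windows)
s_pc = sum(h[0] * h[1] for h, _ in windows)
s_cc = sum(h[1] * h[1] for h, _ in windows)
r_p = sum(h[0] * y for h, y in windows)
r_c = sum(h[1] * y for h, y in windows)
det = s_cc * s_pp - s_pc * s_pc
a = (r_c * s_pp - r_p * s_pc) / det           # coefficient of the current value
b = (r_p * s_cc - r_c * s_pc) / det           # coefficient of the past value
```

For this toy system the fit recovers the exact recurrence `x[n+1] = 2 cos(dt) x[n] - x[n-1]`, so a single history term carries all the information lost by not observing `v`.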

Methods to Recover Unknown Processes in Partial Differential Equations Using Data

Mar 05, 2020

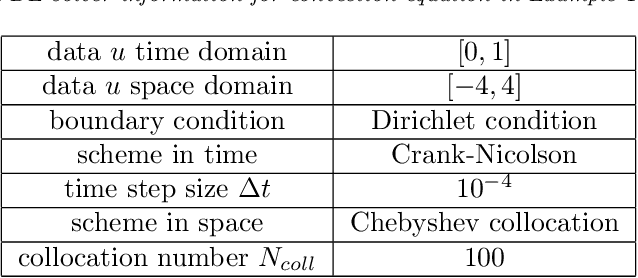

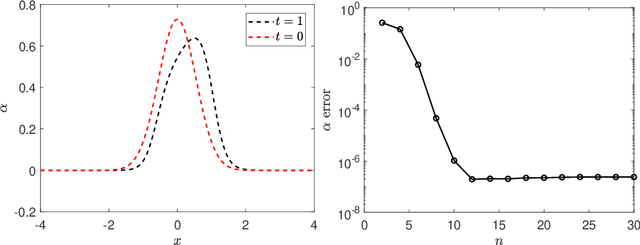

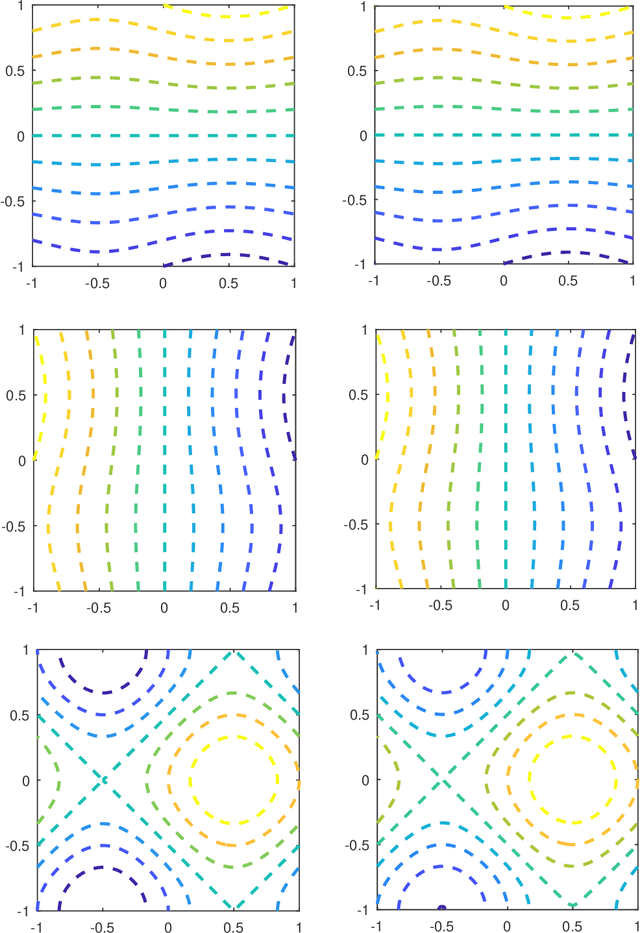

Abstract: We study the problem of identifying unknown processes embedded in time-dependent partial differential equations (PDEs) using observational data, with an application to advection-diffusion type PDEs. We first conduct theoretical analysis and derive conditions to ensure the solvability of the problem. We then present a set of numerical approaches, including a Galerkin-type algorithm and a collocation-type algorithm. Analysis of the algorithms is presented, along with their implementation details. The Galerkin algorithm is more suitable for practical situations, particularly those with noisy data, as it avoids using derivative/gradient data. Various numerical examples are then presented to demonstrate the performance and properties of the numerical methods.

A Non-Intrusive Correction Algorithm for Classification Problems with Corrupted Data

Feb 11, 2020

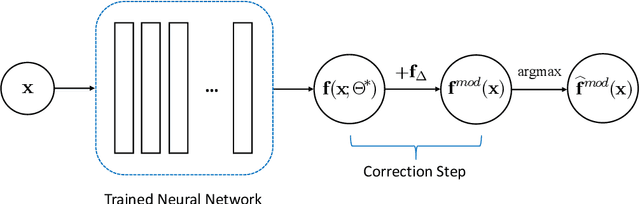

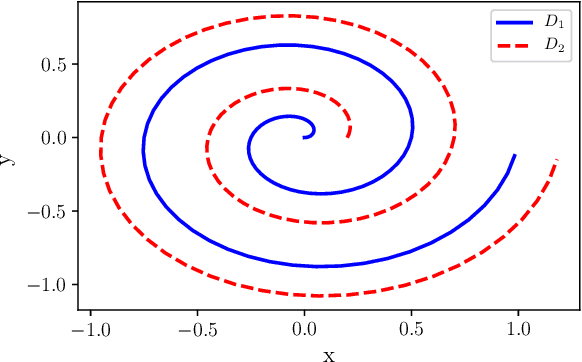

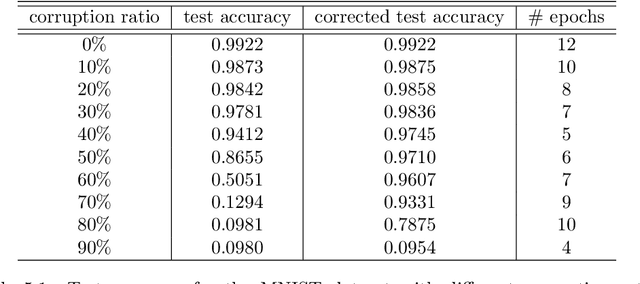

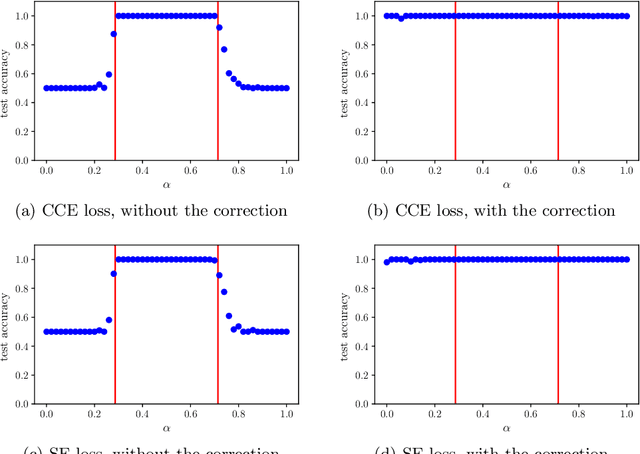

Abstract: A novel correction algorithm is proposed for multi-class classification problems with corrupted training data. The algorithm is non-intrusive, in the sense that it post-processes a trained classification model by adding a correction procedure to the model prediction. The correction procedure can be coupled with any approximator, such as logistic regression, neural networks of various architectures, etc. When the training dataset is sufficiently large, we prove that the corrected models deliver correct classification results as if there were no corruption in the training data. For datasets of finite size, the corrected models produce significantly better recovery results, compared to the models without the correction algorithm. All of the theoretical findings in the paper are verified by our numerical examples.

On generalized residue network for deep learning of unknown dynamical systems

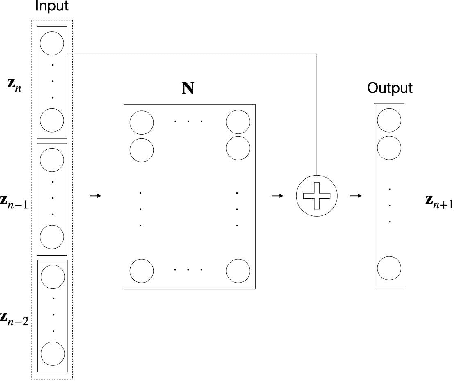

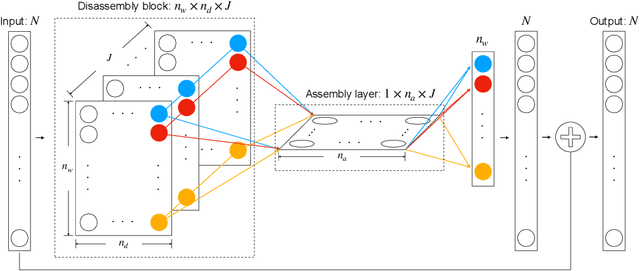

Jan 23, 2020

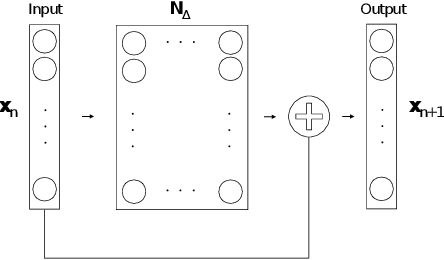

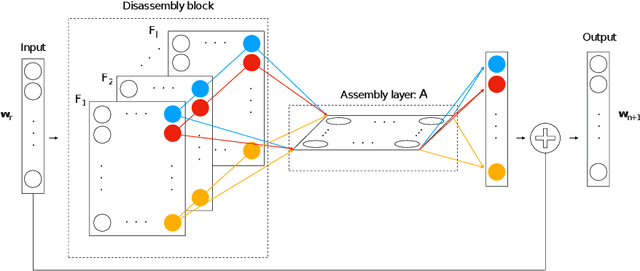

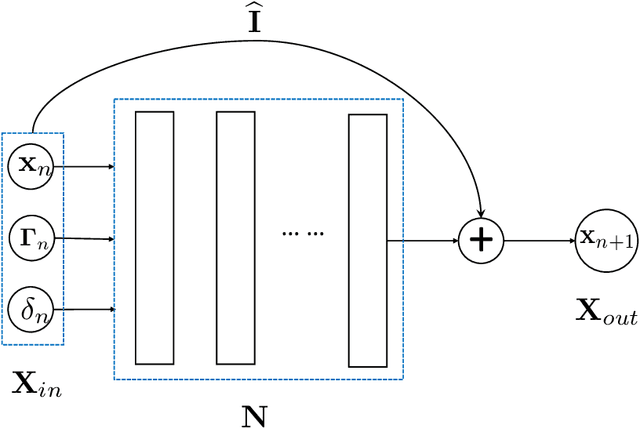

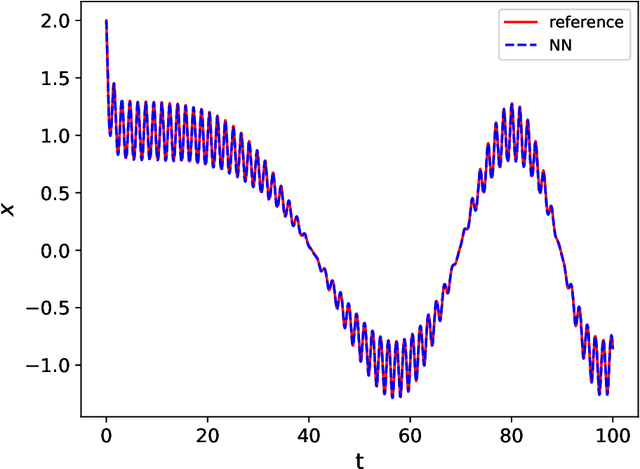

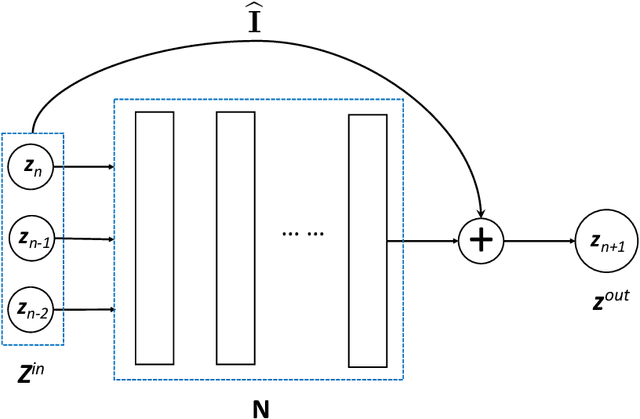

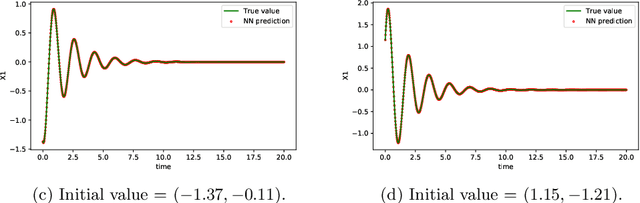

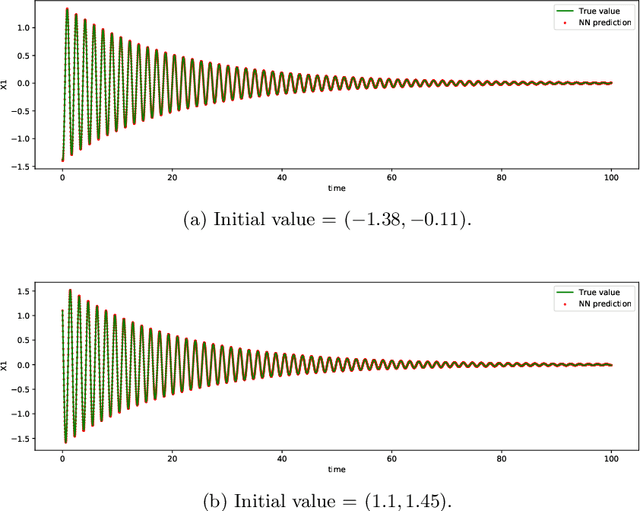

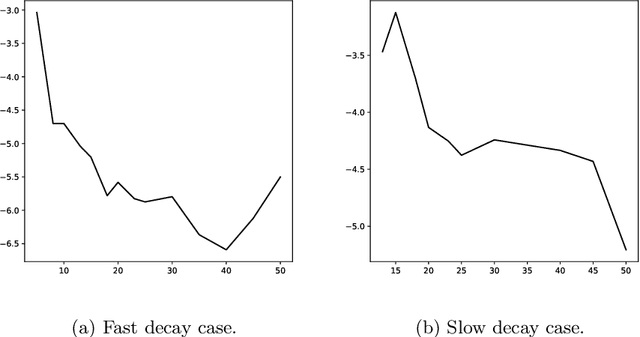

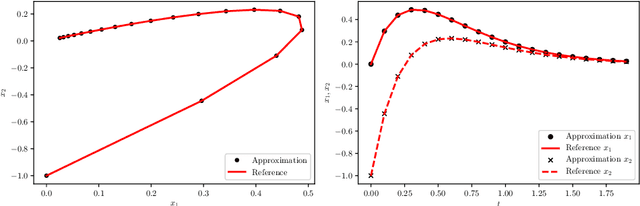

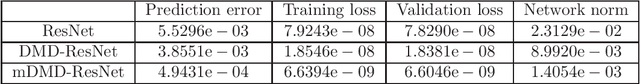

Abstract: We present a general numerical approach for learning unknown dynamical systems using deep neural networks (DNNs). Our method is built upon recent studies that identified the residue network (ResNet) as an effective neural network structure. In this paper, we present a generalized ResNet framework and broadly define residue as the discrepancy between observation data and prediction made by another model, which can be an existing coarse model or reduced-order model. In this case, the generalized ResNet serves as a model correction to the existing model and recovers the unresolved dynamics. When an existing coarse model is not available, we present numerical strategies for fast creation of coarse models, to be used in conjunction with the generalized ResNet. These coarse models are constructed using the same data set and thus do not require additional resources. The generalized ResNet is capable of learning the underlying unknown equations and producing predictions with accuracy higher than the standard ResNet structure. This is demonstrated via several numerical examples, including long-term prediction of a chaotic system.
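The residue-as-correction idea can be sketched in a few lines. The setup is assumed for illustration only: the unknown one-step map is `x -> 0.9 * x`, the existing coarse model is `x -> 0.8 * x`, and the DNN is replaced by a least-squares slope (`fit_slope` is a hypothetical helper). The learner is trained on the residue, and the final prediction is the coarse model plus the learned correction.

```python
def fit_slope(xs, ys):
    """Least-squares slope through the origin; a stand-in for DNN training."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

# Hypothetical data generated by an unknown one-step map x -> 0.9 * x.
xs = [0.5 + 0.1 * i for i in range(11)]
ys = [0.9 * x for x in xs]

# Hypothetical existing coarse model that is only roughly right.
coarse = lambda x: 0.8 * x

# Residue: discrepancy between the observations and the coarse prediction.
residues = [y - coarse(x) for x, y in zip(xs, ys)]
c = fit_slope(xs, residues)

# Generalized ResNet style prediction: coarse model plus learned correction.
corrected = lambda x: coarse(x) + c * x
```

Choosing the identity map as the coarse model gives the usual ResNet form `x + N(x)`, so the standard structure appears as a special case of this sketch.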
