Deruo Cheng

SAMSEM -- A Generic and Scalable Approach for IC Metal Line Segmentation

Mar 17, 2026

Abstract: In light of globalized hardware supply chains, the assurance of hardware components has gained significant interest, particularly in cryptographic applications and high-stakes scenarios. Identifying metal lines on scanning electron microscope (SEM) images of integrated circuits (ICs) is one essential step in verifying the absence of malicious circuitry in chips manufactured in untrusted environments. Due to varying manufacturing processes and technologies, such verification usually requires tuning parameters and algorithms for each target IC. Often, a machine learning model trained on images of one IC fails to accurately detect metal lines on other ICs. To address this challenge, we create SAMSEM by adapting Meta's Segment Anything Model 2 (SAM2) to the domain of IC metal line segmentation. Specifically, we develop a multi-scale segmentation approach that can handle SEM images of varying sizes, resolutions, and magnifications. Furthermore, we deploy a topology-based loss alongside pixel-based losses to focus our segmentation on electrical connectivity rather than pixel-level accuracy. Based on a hyperparameter optimization, we then fine-tune the SAM2 model to obtain a model that generalizes across different technology nodes, manufacturing materials, sample preparation methods, and SEM imaging technologies. To this end, we leverage an unprecedented dataset of SEM images obtained from 48 metal layers across 14 different ICs. When fine-tuned on seven ICs, SAMSEM achieves an error rate as low as 0.72% when evaluated on other images from the same ICs. For the remaining seven unseen ICs, it still achieves error rates as low as 5.53%. Finally, when fine-tuned on all 14 ICs, we observe an error rate of 0.62%. Hence, SAMSEM proves to be a reliable tool that significantly advances the frontier in metal line segmentation, a key challenge in post-manufacturing IC verification.
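The distinction the abstract draws between electrical connectivity and pixel-level accuracy can be illustrated with a toy example. The sketch below is not the paper's topology-based loss or its error metric; the helper names `count_components` and `pixel_accuracy` and the 3x6 mask are hypothetical, chosen only to show how a single misclassified pixel can leave pixel accuracy high while splitting one metal line into two disconnected pieces.

```python
from collections import deque


def count_components(mask):
    """Count 4-connected groups of 1-pixels in a binary mask (list of rows)."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    components = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or seen[r][c]:
                continue
            # New unvisited foreground pixel: flood-fill its whole component.
            components += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return components


def pixel_accuracy(pred, truth):
    """Fraction of pixels on which the prediction matches the ground truth."""
    total = sum(len(row) for row in truth)
    correct = sum(p == t for pr, tr in zip(pred, truth) for p, t in zip(pr, tr))
    return correct / total


# Hypothetical ground truth: one horizontal metal line.
truth = [
    [0, 0, 0, 0, 0, 0],
    [1, 1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0],
]

# Hypothetical prediction: identical except for one missing pixel in the line.
pred = [row[:] for row in truth]
pred[1][3] = 0

accuracy = pixel_accuracy(pred, truth)      # 17 of 18 pixels agree
truth_lines = count_components(truth)       # one connected line
pred_lines = count_components(pred)         # the line is now broken in two
```

In this toy case 17 of 18 pixels are correct, yet the predicted mask describes an open circuit, which is the kind of error a connectivity-aware objective is meant to penalize.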

Unsupervised Domain Adaptation with Histogram-gated Image Translation for Delayered IC Image Analysis

Sep 27, 2022

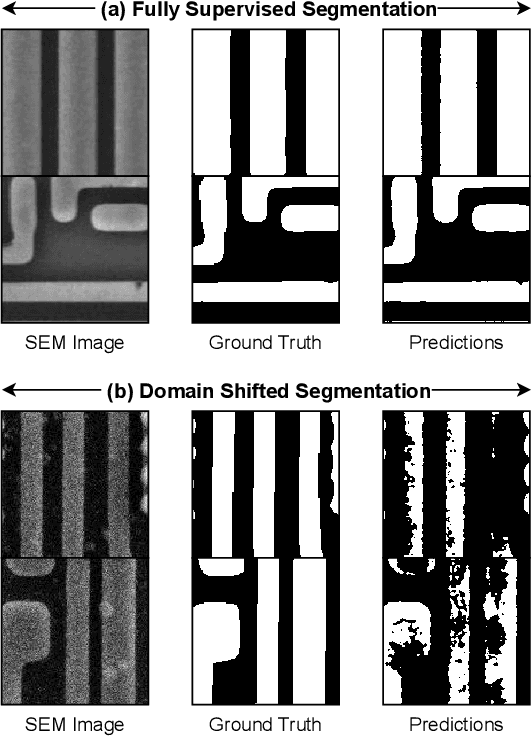

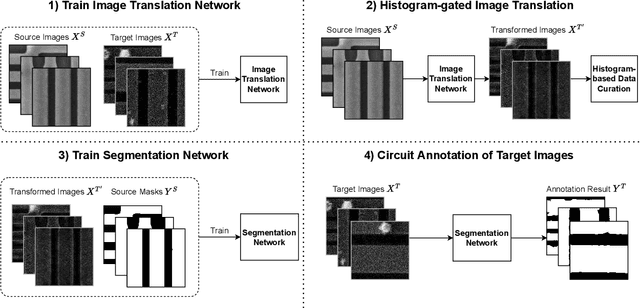

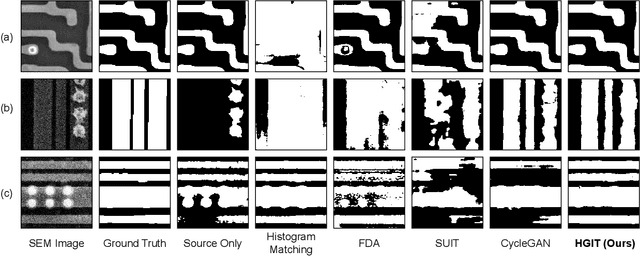

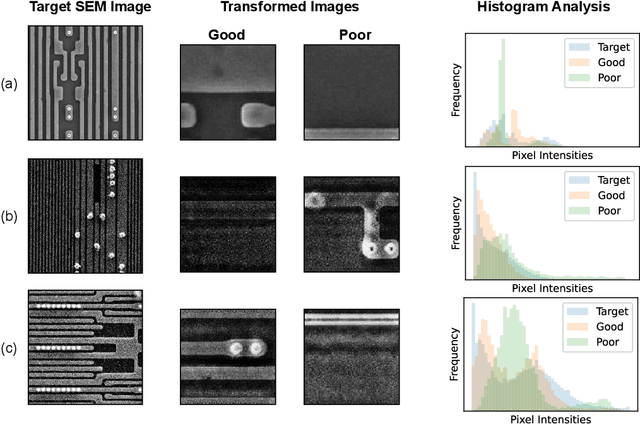

Abstract: Deep learning has achieved great success in the challenging circuit annotation task by employing Convolutional Neural Networks (CNNs) for the segmentation of circuit structures. These approaches require a large amount of manually annotated training data to perform well, and performance can degrade when a model trained on one dataset is applied to a different dataset. This is commonly known as the domain shift problem for circuit annotation, which stems from the possibly large variations in distribution across different image datasets. The different image datasets could be obtained from different devices or from different layers within a single device. To address the domain shift problem, we propose Histogram-gated Image Translation (HGIT), an unsupervised domain adaptation framework that transforms images from a given source dataset to the domain of a target dataset and uses the transformed images to train a segmentation network. Specifically, HGIT performs generative adversarial network (GAN)-based image translation and utilizes histogram statistics for data curation. Experiments were conducted on a single labeled source dataset adapted to three different target datasets (without labels for training), and the segmentation performance was evaluated for each target dataset. We demonstrate that our method achieves the best performance compared to the reported domain adaptation techniques and is also reasonably close to the fully supervised benchmark.
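The abstract states only that histogram statistics are used for data curation; it does not give the statistic, the similarity measure, or any threshold. As a rough sketch of what such a gate could look like, the code below keeps a translated image only if its grey-level histogram is close to the mean histogram of the unlabeled target images. The histogram-intersection measure, the bin count, the threshold of 0.7, and all function names are assumptions made for illustration, not details of HGIT.

```python
def intensity_histogram(image, bins=4, max_value=256):
    """Normalized grey-level histogram of a 2D image given as a list of rows."""
    counts = [0] * bins
    n = 0
    for row in image:
        for value in row:
            # Map the grey level to a bin index, clamping the top edge.
            counts[min(value * bins // max_value, bins - 1)] += 1
            n += 1
    return [c / n for c in counts]


def histogram_intersection(h1, h2):
    """Similarity in [0, 1]; 1.0 means the normalized histograms are identical."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def gate_translated_images(translated, target_images, threshold=0.7, bins=4):
    """Keep translated images whose histogram resembles the target domain.

    ASSUMPTION: the gate compares each image against the mean target
    histogram with a fixed threshold; the paper may do something different.
    """
    target_hists = [intensity_histogram(img, bins) for img in target_images]
    mean_hist = [sum(col) / len(target_hists) for col in zip(*target_hists)]
    return [
        img
        for img in translated
        if histogram_intersection(intensity_histogram(img, bins), mean_hist)
        >= threshold
    ]


# Hypothetical 2x2 images: the target domain is bright.
target_images = [[[200, 210], [220, 230]]]
good = [[200, 205], [215, 225]]   # bright, resembles the target domain
bad = [[10, 20], [30, 40]]        # dark, a failed translation
kept = gate_translated_images([good, bad], target_images)
```

Under these assumptions only `good` survives the gate, so a segmentation network would be trained on translated images that plausibly belong to the target distribution.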
