Daniel E. Koditschek

Coordinated Robot Navigation via Hierarchical Clustering

Jul 06, 2015

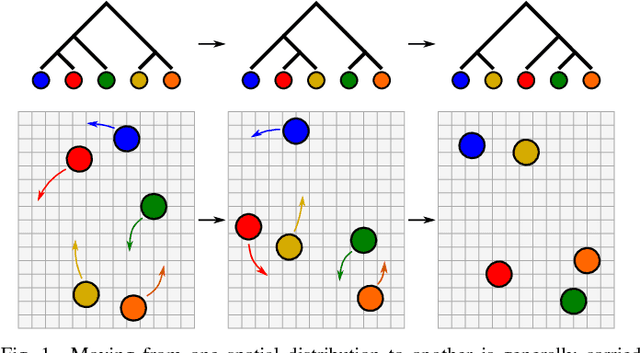

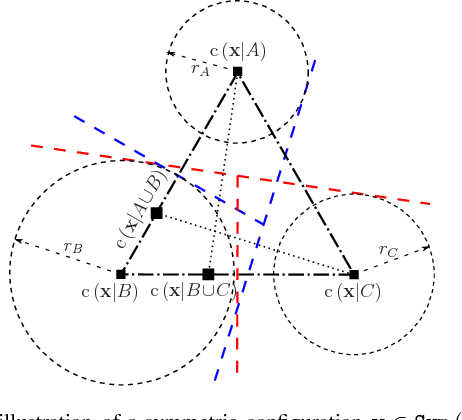

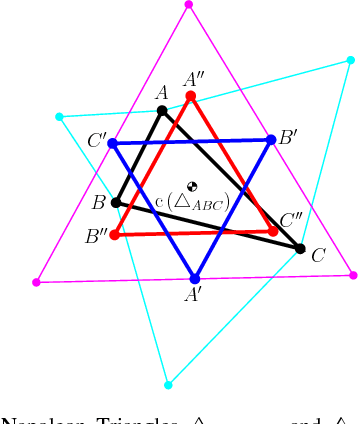

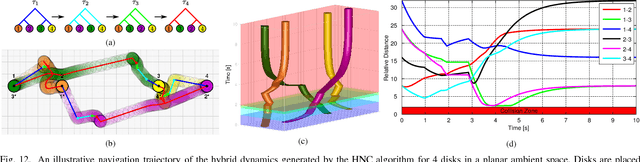

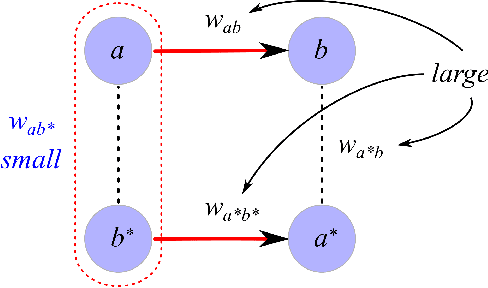

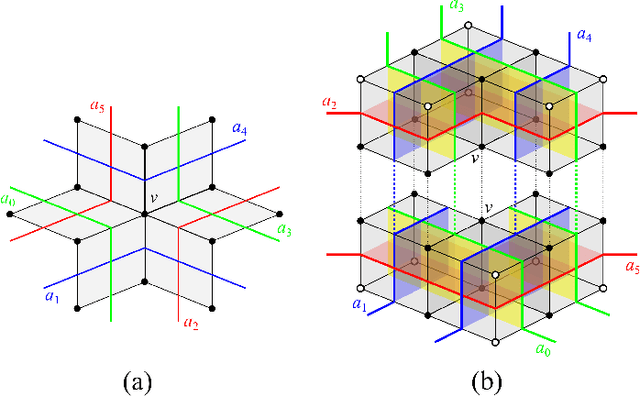

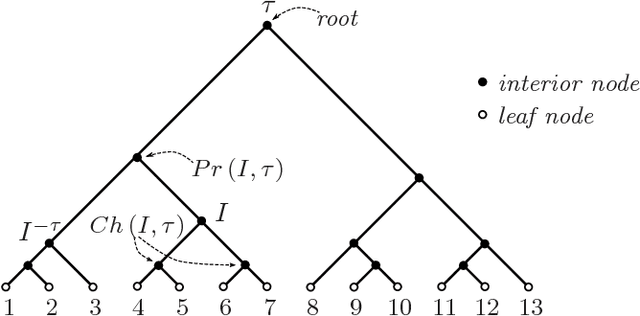

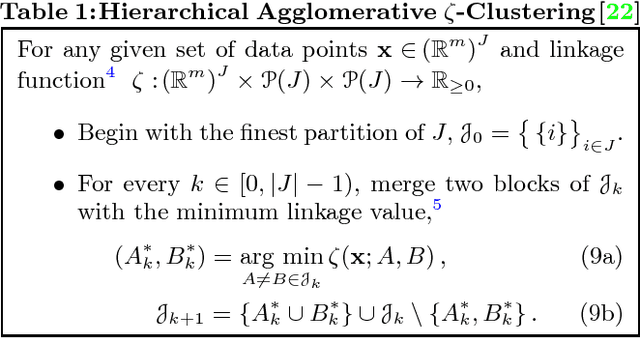

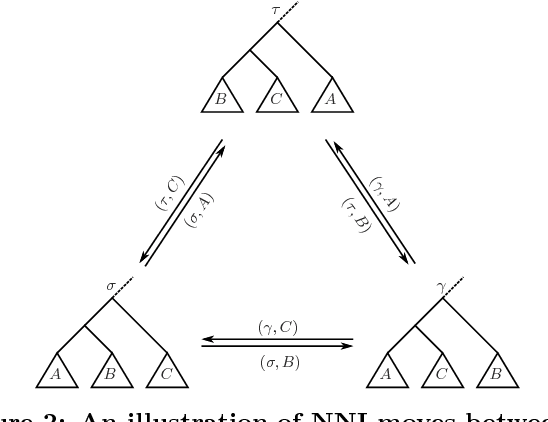

Abstract: We introduce the use of hierarchical clustering for relaxed, deterministic coordination and control of multiple robots. Traditionally an unsupervised learning method, hierarchical clustering offers a formalism for identifying and representing spatially cohesive and segregated robot groups at different resolutions by relating the continuous space of configurations to the combinatorial space of trees. We formalize and exploit this relation, developing computationally effective reactive algorithms for navigating through the combinatorial space in concert with geometric realizations for a particular choice of hierarchical clustering method. These constructions yield computationally effective vector field planners for both hierarchically invariant and transitional navigation in the configuration space. We apply these methods to the centralized coordination and control of $n$ perfectly sensed and actuated Euclidean spheres in a $d$-dimensional ambient space (for arbitrary $n$ and $d$). Given a desired configuration supporting a desired hierarchy, we construct a hybrid controller which is quadratic in $n$ and algebraic in $d$ and prove that its execution brings all but a measure-zero set of initial configurations to the desired goal with the guarantee of no collisions along the way.

Universal Memory Architectures for Autonomous Machines

Feb 21, 2015

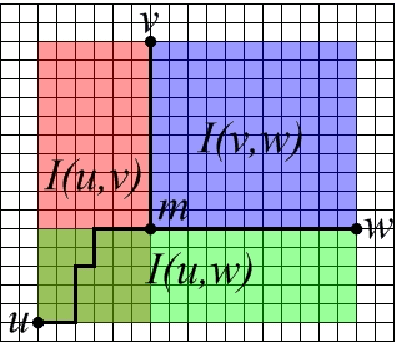

Abstract: We propose a self-organizing memory architecture for perceptual experience, capable of supporting autonomous learning and goal-directed problem solving in the absence of any prior information about the agent's environment. The architecture is simple enough to ensure (1) a quadratic bound (in the number of available sensors) on space requirements, and (2) a quadratic bound on the time-complexity of the update-execute cycle. At the same time, it is sufficiently complex to provide the agent with an internal representation which is (3) minimal among all representations of its class which account for every sensory equivalence class subject to the agent's belief state; (4) capable, in principle, of recovering the homotopy type of the system's state space; (5) learnable with arbitrary precision through a random application of the available actions. The provable properties of an effectively trained memory structure exploit a duality between weak poc sets -- a symbolic (discrete) representation of subset nesting relations -- and non-positively curved cubical complexes, whose rich convexity theory underlies the planning cycle of the proposed architecture.

Anytime Hierarchical Clustering

Apr 13, 2014

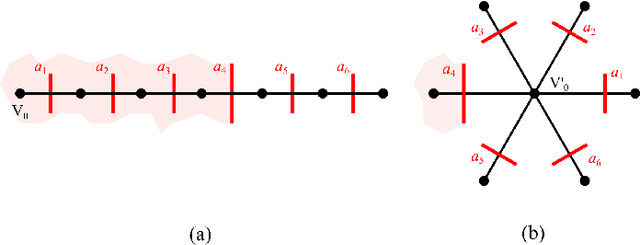

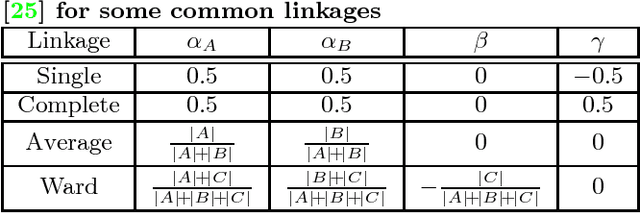

Abstract: We propose a new anytime hierarchical clustering method that iteratively transforms an arbitrary initial hierarchy on the configuration of measurements along a sequence of trees that, as we prove, must terminate for a fixed data set in a chain of nested partitions satisfying a natural homogeneity requirement. Each recursive step re-edits the tree so as to improve a local measure of cluster homogeneity that is compatible with a number of commonly used (e.g., single, average, complete) linkage functions. As an alternative to the standard batch algorithms, we present numerical evidence to suggest that appropriate adaptations of this method can yield decentralized, scalable algorithms suitable for distributed/parallel computation of clustering hierarchies and online tracking of clustering trees applicable to large, dynamically changing databases and anomaly detection.
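For readers unfamiliar with the linkage functions named in this abstract, the following is a minimal Python sketch of single, complete, and average linkage, together with the standard batch agglomerative procedure that the abstract contrasts its method against. It is not the anytime method from the paper; the function names `linkage` and `agglomerate` and the merge-list output format are assumptions made purely for illustration.

```python
from itertools import combinations
from math import dist


def linkage(a, b, kind="single"):
    """Dissimilarity between two clusters, each a list of points (tuples)."""
    pairwise = [dist(p, q) for p in a for q in b]
    if kind == "single":      # closest pair across the two clusters
        return min(pairwise)
    if kind == "complete":    # farthest pair across the two clusters
        return max(pairwise)
    if kind == "average":     # mean over all cross-cluster pairs
        return sum(pairwise) / len(pairwise)
    raise ValueError(f"unknown linkage: {kind}")


def agglomerate(points, kind="single"):
    """Illustrative batch baseline: repeatedly merge the two closest clusters.

    Returns the list of merges in order; each merge is a pair of clusters.
    """
    clusters = [[p] for p in points]
    merges = []
    while len(clusters) > 1:
        # Pick the pair of clusters with the smallest linkage value.
        i, j = min(
            combinations(range(len(clusters)), 2),
            key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]], kind),
        )
        merges.append((sorted(clusters[i]), sorted(clusters[j])))
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)]
        clusters.append(merged)
    return merges
```

Calling `agglomerate(points, kind="average")` on a small list of coordinate tuples yields the sequence of merges that defines a binary clustering tree; this naive version recomputes all pairwise linkages at every step and is meant only to make the definitions concrete.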
