Daniel Chen

Array Layout Optimization in a 24-Element 38-GHz Active Incoherent Millimeter-Wave Imaging System

Mar 25, 2026

Abstract:Active incoherent millimeter-wave (AIM) imaging is a recently developed technique that has been shown to produce millimeter-wave images quickly using sparse apertures and Fourier-domain sampling. In these systems, spatial frequency sampling is determined by cross-correlation between antenna pairs, so array geometry dictates the field of view (FOV) and image quality. This work investigates the impact of array redundancy and spatial sampling diversity on AIM image reconstruction performance. We present a comparative study of three receive array configurations: one simple circular design and two arrays obtained through optimization strategies designed to maximize unique spatial samples while preserving system resolution and FOV. Performance is evaluated using the image-domain metrics of structural similarity index (SSIM) and peak sidelobe level (PSL), enabling a quantitative assessment of reconstruction fidelity and artifact suppression. We perform experimental validation using a 38-GHz AIM imaging system, implementing a 24-element receive array within a 48-position reconfigurable aperture. Results demonstrate that optimized array configurations improve spatial sampling efficiency and yield measurable gains in reconstruction quality compared to a conventional circular array, highlighting the importance of array design for AIM imaging systems.
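
As a rough illustration of why array geometry affects sampling diversity, the sketch below counts the distinct baseline vectors (pairwise position differences) of an array, since each antenna pair contributes one spatial-frequency sample. The function names, the unit radius, and the rounding tolerance are assumptions made for this example; this is not the optimization procedure used in the paper.

```python
import math

def unique_baselines(positions, decimals=6):
    """Return the set of distinct nonzero baseline vectors between element pairs.

    Coordinates are rounded so that baselines equal up to floating-point
    error are counted once (the tolerance is an illustrative choice).
    """
    baselines = set()
    for i, (xi, yi) in enumerate(positions):
        for j, (xj, yj) in enumerate(positions):
            if i != j:
                baselines.add((round(xj - xi, decimals), round(yj - yi, decimals)))
    return baselines

def circular_array(n, radius=1.0):
    """Hypothetical uniform circular layout with n elements."""
    return [
        (radius * math.cos(2 * math.pi * k / n), radius * math.sin(2 * math.pi * k / n))
        for k in range(n)
    ]

n = 24
ordered_pairs = n * (n - 1)  # every ordered pair yields one baseline
distinct = len(unique_baselines(circular_array(n)))
print(f"{distinct} distinct baselines out of {ordered_pairs} ordered pairs")
```

A layout in which many pairs share the same baseline is redundant: it spends elements re-measuring a spatial frequency that is already sampled.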

Dynamic Decision-Making under Model Misspecification: A Stochastic Stability Approach

Feb 19, 2026

Abstract:Dynamic decision-making under model uncertainty is central to many economic environments, yet existing bandit and reinforcement learning algorithms rely on the assumption of correct model specification. This paper studies the behavior and performance of one of the most commonly used Bayesian reinforcement learning algorithms, Thompson Sampling (TS), when the model class is misspecified. We first provide a complete dynamic classification of posterior evolution in a misspecified two-armed Gaussian bandit, identifying distinct regimes: correct model concentration, incorrect model concentration, and persistent belief mixing, characterized by the direction of statistical evidence and the model-action mapping. These regimes yield sharp predictions for limiting beliefs, action frequencies, and asymptotic regret. We then extend the analysis to a general finite model class and develop a unified stochastic stability framework that represents posterior evolution as a Markov process on the belief simplex. This approach characterizes two sufficient conditions to classify the ergodic and transient behaviors and provides inductive dimensional reductions of the posterior dynamics. Our results offer the first qualitative and geometric classification of TS under misspecification, bridging Bayesian learning with evolutionary dynamics, and also build the foundations of robust decision-making in structured bandits.
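
To make the setting concrete, here is a minimal sketch of Thompson Sampling over a finite model class in a two-armed Gaussian bandit. It assumes unit-variance rewards and a uniform prior; the function name and the example models are hypothetical and are not taken from the paper. The class is misspecified whenever `true_means` is not one of the candidate `models`.

```python
import math
import random

def thompson_finite_models(true_means, models, steps, seed=0):
    """Run Thompson Sampling with a posterior over a finite set of candidate models.

    true_means: actual mean reward of each arm (may lie outside `models`).
    models:     candidate mean vectors, one per model.
    Returns the final posterior over models and the pull count of each arm.
    """
    rng = random.Random(seed)
    log_post = [0.0] * len(models)  # uniform prior, unnormalized log scale
    pulls = [0] * len(true_means)

    def normalized(log_weights):
        top = max(log_weights)  # subtract the max for numerical stability
        weights = [math.exp(lw - top) for lw in log_weights]
        total = sum(weights)
        return [w / total for w in weights]

    for _ in range(steps):
        # Sample a model from the current posterior and play its best arm.
        k = rng.choices(range(len(models)), weights=normalized(log_post))[0]
        arm = max(range(len(true_means)), key=lambda a: models[k][a])
        reward = rng.gauss(true_means[arm], 1.0)
        pulls[arm] += 1
        # Bayesian update with a unit-variance Gaussian likelihood.
        for i, mu in enumerate(models):
            log_post[i] -= 0.5 * (reward - mu[arm]) ** 2

    return normalized(log_post), pulls

# Neither candidate model matches the true means, so the class is misspecified.
posterior, pulls = thompson_finite_models(
    true_means=(0.5, 0.0), models=[(1.0, 0.0), (0.0, 1.0)], steps=500
)
print(posterior, pulls)
```

Only the played arm's reward enters each update, so which model gains posterior mass depends on which arm the sampled model recommends.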

Parameter-Efficient Fine-Tuning of DINOv2 for Large-Scale Font Classification

Feb 14, 2026

Abstract:We present a font classification system capable of identifying 394 font families from rendered text images. Our approach fine-tunes a DINOv2 Vision Transformer using Low-Rank Adaptation (LoRA), achieving approximately 86% top-1 accuracy while training fewer than 1% of the model's 87.2M parameters. We introduce a synthetic dataset generation pipeline that renders Google Fonts at scale with diverse augmentations including randomized colors, alignment, line wrapping, and Gaussian noise, producing training images that generalize to real-world typographic samples. The model incorporates built-in preprocessing to ensure consistency between training and inference, and is deployed as a HuggingFace Inference Endpoint. We release the model, dataset, and full training pipeline as open-source resources.

Imageless Contraband Detection Using a Millimeter-Wave Dynamic Antenna Array via Spatial Fourier Domain Sampling

Jun 09, 2024

Abstract:We demonstrate an imageless method of concealed contraband detection using a real-time 75 GHz rotationally dynamic antenna array. The array measures information in the two-dimensional Fourier domain and captures a set of samples that is sufficient for detecting concealed objects yet insufficient for generating a full image, thereby preserving the privacy of screened subjects. The small set of Fourier samples contains sharp spatial frequency features in the Fourier domain which correspond to sharp edges of man-made objects such as handguns. We evaluate a set of classification methods: threshold-based, K-nearest neighbor, and support vector machine using a radial basis function, all operating on arithmetic features directly extracted from the sampled Fourier-domain responses measured by a dynamically rotating millimeter-wave active interferometer. Noise transmitters are used to produce thermal-like radiation from scenes, enabling direct Fourier-domain sampling, while the rotational dynamics circularly sample the two-dimensional Fourier domain, capturing the sharp-edge-induced responses. We experimentally demonstrate the detection of a concealed metallic gun-shaped object beneath clothing on a real person in a laboratory environment and achieve an accuracy and F1-score of 0.986. The presented technique not only prevents image formation due to efficient Fourier-domain sub-sampling but also requires only 211 ms from measurement to decision.

Semi-automated extraction of research topics and trends from NCI funding in radiological sciences from 2000-2020

Jun 22, 2023

Abstract:Investigators, funders, and the public desire knowledge on topics and trends in publicly funded research, but current efforts in manual categorization are limited in scale and understanding. We developed a semi-automated approach to extract and name research topics, and applied this to \$1.9B of NCI funding over 21 years in the radiological sciences to determine micro- and macro-scale research topics and funding trends. Our method relies on sequential clustering of existing biomedical-based word embeddings, naming using subject matter experts, and visualization to discover trends at a macroscopic scale above individual topics. We present results using 15 and 60 cluster topics, where we found that 2D projection of grant embeddings reveals two dominant axes: physics-biology and therapeutic-diagnostic. For our dataset, we found that funding for therapeutics- and physics-based research has outpaced funding for diagnostics- and biology-based research, respectively. We hope these results may (1) give insight to funders on the appropriateness of their funding allocation, (2) assist investigators in contextualizing their work and exploring neighboring research domains, and (3) allow the public to review where their tax dollars are being allocated.

Explicitly Solvable Continuous-time Inference for Partially Observed Markov Processes

Jan 02, 2023

Abstract:Many natural and engineered systems can be modeled as discrete state Markov processes. Often, only a subset of states are directly observable. Inferring the conditional probability that a system occupies a particular hidden state, given the partial observation, is a problem with broad application. In this paper, we introduce a continuous-time formulation of the sum-product algorithm, which is a well-known discrete-time method for finding the hidden states' conditional probabilities, given a set of finite, discrete-time observations. From our new formulation, we can explicitly solve for the conditional probability of occupying any state, given the transition rates and observations within a finite time window. We apply our algorithm to a realistic model of the cystic fibrosis transmembrane conductance regulator (CFTR) protein for exact inference of the conditional occupancy probability, given a finite time series of partial observations.
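
For reference, the classical discrete-time computation that the abstract mentions can be sketched as a forward (filtering) pass of the sum-product algorithm on a hidden Markov chain. This is the textbook discrete-time version, not the continuous-time formulation introduced in the paper; the function name, the argument layout, and the predict-then-update convention are assumptions made for this example.

```python
def forward_filter(prior, transition, emission, observations):
    """Return P(hidden state | observations so far) after the last observation.

    prior:       initial state probabilities.
    transition:  transition[i][j] = P(next state j | current state i).
    emission:    emission[j][o] = P(observation o | state j).
    Each step first propagates the belief one transition, then conditions
    on the new observation.
    """
    belief = list(prior)
    n = len(belief)
    for obs in observations:
        # Predict: push the belief through one step of the Markov chain.
        predicted = [sum(belief[i] * transition[i][j] for i in range(n)) for j in range(n)]
        # Update: weight by the observation likelihood and renormalize.
        weighted = [predicted[j] * emission[j][obs] for j in range(n)]
        total = sum(weighted)
        belief = [w / total for w in weighted]
    return belief

# Two hidden states with noisy binary observations (illustrative numbers).
transition = [[0.9, 0.1], [0.3, 0.7]]
emission = [[0.8, 0.2], [0.1, 0.9]]
print(forward_filter([0.5, 0.5], transition, emission, [0, 0, 1]))
```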

Quantum-Inspired Classical Algorithm for Principal Component Regression

Oct 16, 2020

Abstract:This paper presents a sublinear classical algorithm for principal component regression. The algorithm uses quantum-inspired linear algebra, an idea developed by Tang. Using this technique, her algorithm for recommendation systems achieved a runtime only polynomially slower than its quantum counterpart. Her work was quickly adapted to solve many other problems in sublinear time. In this work, we develop an algorithm for principal component regression that runs in time polylogarithmic in the number of data points, an exponential speedup over the state-of-the-art algorithm, under the mild assumption that the input is given in a data structure that supports a norm-based sampling procedure. This exponential speedup allows for potential applications to much larger data sets.

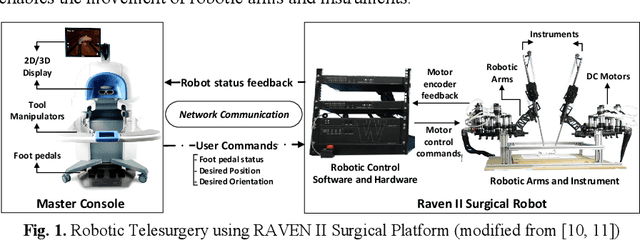

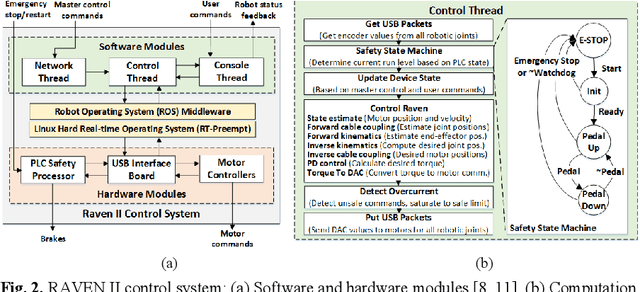

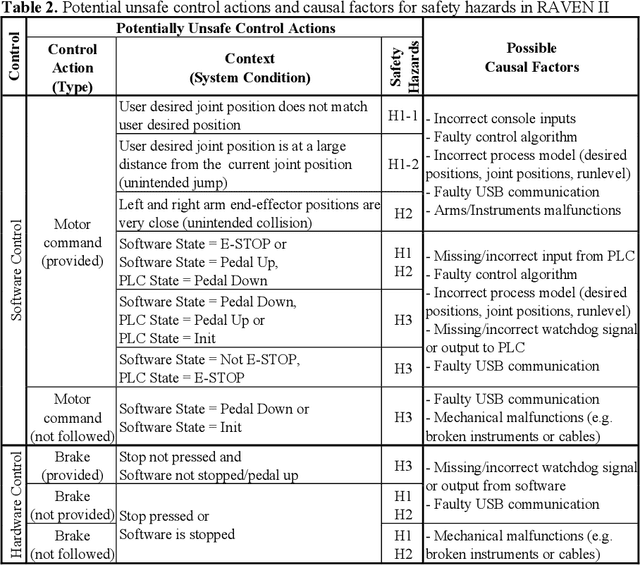

Systems-theoretic Safety Assessment of Robotic Telesurgical Systems

Jul 08, 2015

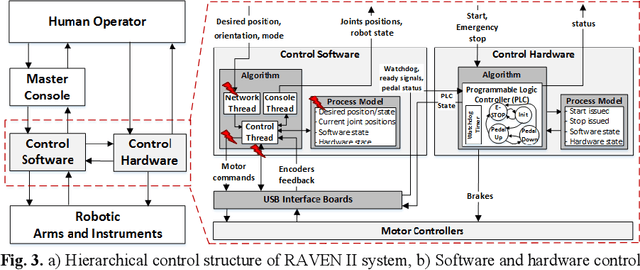

Abstract:Robotic telesurgical systems are among the most complex medical cyber-physical systems on the market, and have been used in over 1.75 million procedures during the last decade. Despite significant improvements in the design of robotic surgical systems through the years, there have been ongoing occurrences of safety incidents during procedures that negatively impact patients. This paper presents an approach for systems-theoretic safety assessment of robotic telesurgical systems using software-implemented fault injection. We used a systems-theoretic hazard analysis technique (STPA) to identify the potential safety hazard scenarios and their contributing causes in the RAVEN II robot, an open-source robotic surgical platform. We integrated the robot control software with a software-implemented fault-injection engine which measures the resilience of the system to the identified safety hazard scenarios by automatically inserting faults into different parts of the robot control software. Representative hazard scenarios from real robotic surgery incidents reported to the U.S. Food and Drug Administration (FDA) MAUDE database were used to demonstrate the feasibility of the proposed approach for safety-based design of robotic telesurgical systems.