Christopher L. Magee

Deep Learning for Technical Document Classification

Jun 27, 2021

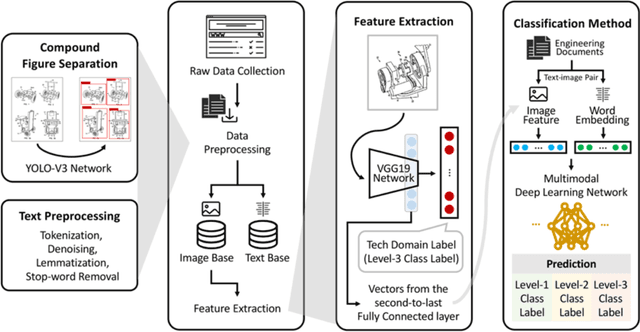

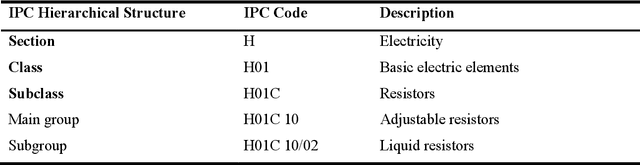

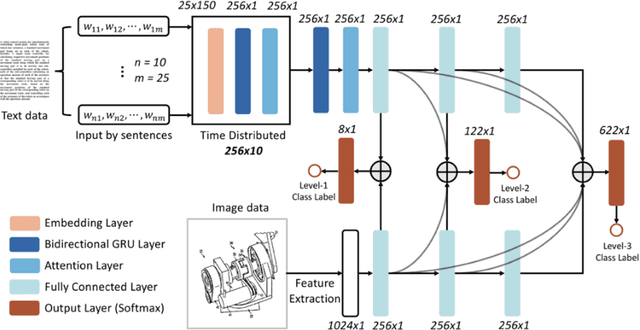

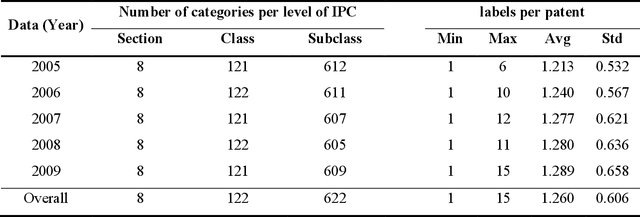

Abstract: In large technology companies, the requirements for managing and organizing technical documents created by engineers and managers to support relevant decision making have increased dramatically in recent years, leading to a higher demand for more scalable, accurate, and automated document classification. Prior studies have primarily focused on processing text for classification and on small-scale databases. This paper describes a novel multimodal deep learning architecture, called TechDoc, for technical document classification, which utilizes both natural language and descriptive images to train hierarchical classifiers. The architecture synthesizes convolutional neural networks and recurrent neural networks through an integrated training process. We applied the architecture to a large multimodal technical document database and trained the model to classify documents based on the hierarchical International Patent Classification system. Our results show that the trained neural network achieves greater classification accuracy than models using a single modality and several earlier text classification methods. The trained model can potentially be scaled to millions of real-world technical documents with both text and figures, which is useful for data and knowledge management in large technology companies and organizations.
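The abstract does not specify how the text and image branches are combined or how the classification hierarchy is handled. A minimal sketch, assuming concatenation (late) fusion of a text feature vector (e.g. from the RNN branch) with an image feature vector (e.g. from the CNN branch), followed by one softmax head per IPC level, might look like this. The helper names (`ipc_levels`, `fuse_and_classify`) and the toy weights are hypothetical; only the split of an IPC symbol such as `B25J 9/16` into section, class, subclass, and group follows the standard IPC layout.

```python
import math
from typing import Dict, List, Sequence, Tuple

# A head is (weight matrix, bias vector): each matrix row maps the fused
# feature vector to one logit for one label at that hierarchy level.
Head = Tuple[List[List[float]], List[float]]


def ipc_levels(code: str) -> Dict[str, str]:
    """Split an IPC symbol such as 'B25J 9/16' into its hierarchical levels."""
    subclass, _, group = code.strip().partition(" ")
    return {
        "section": subclass[:1],   # e.g. 'B'
        "class": subclass[:3],     # e.g. 'B25'
        "subclass": subclass[:4],  # e.g. 'B25J'
        "group": group,            # e.g. '9/16' (empty if absent)
    }


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(x: Sequence[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Compute W @ x + b using plain Python lists."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def fuse_and_classify(
    text_feat: Sequence[float],
    image_feat: Sequence[float],
    heads: Dict[str, Head],
) -> Dict[str, List[float]]:
    """Concatenate the two modality vectors, then apply one softmax head per level."""
    fused = list(text_feat) + list(image_feat)
    return {level: softmax(linear(fused, w, b)) for level, (w, b) in heads.items()}


if __name__ == "__main__":
    print(ipc_levels("B25J 9/16"))
    # Hypothetical 2-D text and image features and a 2-label "section" head.
    heads = {"section": ([[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0]], [0.0, 0.0])}
    print(fuse_and_classify([1.0, 0.0], [0.0, 1.0], heads))
```

One head per level keeps each classifier small; a fuller hierarchical model might also constrain lower-level predictions to agree with the predicted section, which this sketch does not attempt.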

A CNN-based Patent Image Retrieval Method for Design Ideation

Mar 10, 2020

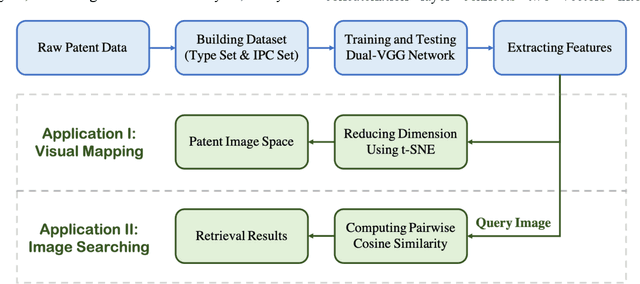

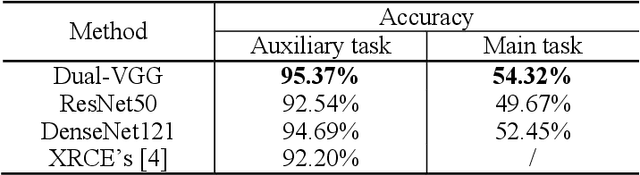

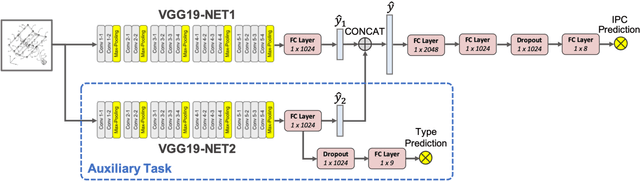

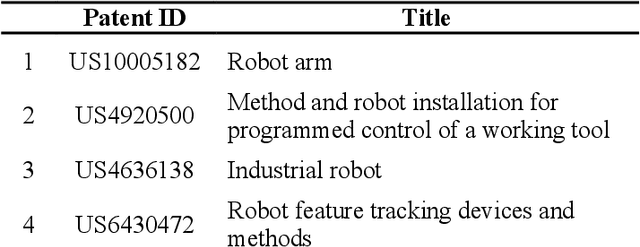

Abstract: The patent database is often searched for inspirational stimuli for innovative design opportunities because of its large size, extensive variety, and rich design information. However, most patent mining research focuses only on textual information and ignores visual information. Herein, we propose a convolutional neural network (CNN)-based patent image retrieval method. The core of this approach is a novel neural network architecture named Dual-VGG that is designed to accomplish two tasks: visual material type prediction and International Patent Classification (IPC) class label prediction. In turn, the trained neural network provides deep features as image embedding vectors that can be used for patent image retrieval. Both the accuracy on the two training tasks and the patent image embedding space are evaluated to show the performance of our model. The approach is also illustrated in a case study of robot arm design retrieval. Compared to traditional keyword-based searching and Google image searching, the proposed method discovers more useful visual information for engineering design.
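The abstract says the trained Dual-VGG network supplies image embedding vectors for retrieval but does not say how those vectors are compared. A minimal sketch, assuming each patent image has already been mapped to a fixed-length embedding and that images are ranked by cosine similarity to a query embedding, is shown below; `retrieve`, the image IDs, and the toy vectors are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(
    query: Sequence[float],
    index: Dict[str, List[float]],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Rank indexed image embeddings by similarity to the query; keep the top k."""
    scored = [(image_id, cosine_similarity(query, vec)) for image_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Hypothetical 2-D embeddings; real ones would come from the trained network.
    index = {"img_a": [1.0, 0.0], "img_b": [0.0, 1.0], "img_c": [0.7, 0.7]}
    for image_id, score in retrieve([1.0, 0.1], index, k=2):
        print(image_id, round(score, 3))
```

A brute-force scan like this is linear in the number of indexed images; at patent-database scale, an approximate nearest-neighbor index would be a common substitute.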
