Christopher J. Ford

SoftHand Model-W: A 3D-Printed, Anthropomorphic, Underactuated Robot Hand with Integrated Wrist and Carpal Tunnel

Apr 01, 2026

Abstract: This paper presents the SoftHand Model-W: a 3D-printed, underactuated, anthropomorphic robot hand based on the Pisa/IIT SoftHand, with an integrated antagonistic tendon mechanism and a 2-degree-of-freedom tendon-driven wrist. These four degrees of actuation provide active flexion and extension of the five fingers, and active flexion/extension and radial/ulnar deviation of the palm through the wrist, while preserving the synergistic and self-adaptive features of such SoftHands. A carpal tunnel-inspired tendon routing allows the motors to be placed remotely in the forearm, reducing distal inertia and maintaining a compact form factor. The SoftHand-W is mounted on a 6-axis robot arm and tested on two reorientation tasks that require coordinating the poses of the hand and the arm: cube stacking and in-plane disc rotation. Results comparing task time, arm joint travel, and configuration changes with and without wrist actuation show that adding the wrist reduces compensatory and reconfiguration movements of the arm, shortening task-completion time. Moreover, the wrist enables pick-and-place operations that would otherwise be impossible. Overall, the SoftHand Model-W demonstrates how proximal degrees of freedom are key to achieving versatile, human-like manipulation in real-world robotic applications, while its compact design enables deployment in research and assistive settings.

How to Train your Tactile Model: Tactile Perception with Multi-fingered Robot Hands

Apr 01, 2026

Abstract: Rapid deployment of new tactile sensors is essential for scalable robotic manipulation, especially in multi-fingered hands equipped with vision-based tactile sensors. However, current methods for inferring contact properties rely heavily on convolutional neural networks (CNNs), which, while effective on known sensors, require large, sensor-specific datasets. Furthermore, they must be retrained for each new sensor because of differences in lens properties, illumination, and sensor wear. Here we introduce TacViT, a novel tactile perception model based on Vision Transformers, designed to generalize to new sensor data. TacViT leverages global self-attention mechanisms to extract robust features from tactile images, enabling accurate contact property inference even on previously unseen sensors. This capability significantly reduces the need for data collection and retraining, accelerating the deployment of new sensors. We evaluate TacViT on sensors for a five-fingered robot hand and demonstrate its superior generalization performance compared to CNNs. Our results highlight TacViT's potential to make tactile sensing more scalable and practical for real-world robotic applications.

Tactile SoftHand-A: 3D-Printed, Tactile, Highly-underactuated, Anthropomorphic Robot Hand with an Antagonistic Tendon Mechanism

Jun 18, 2024

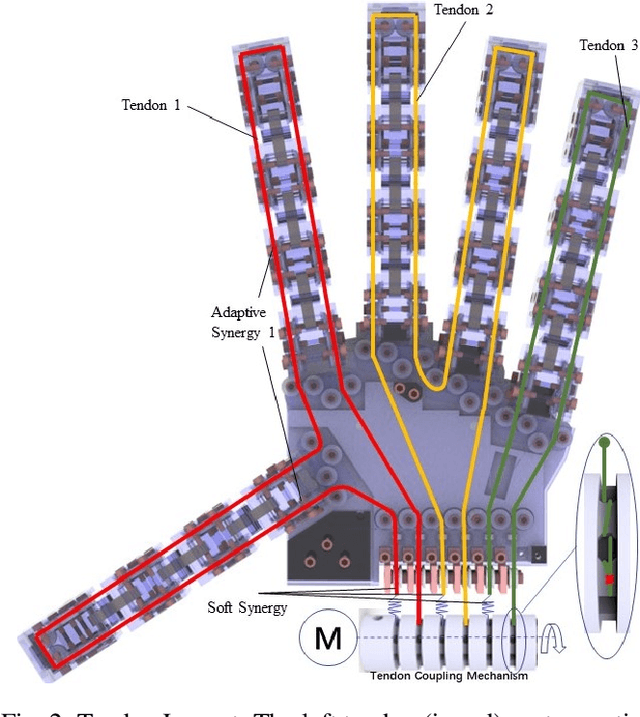

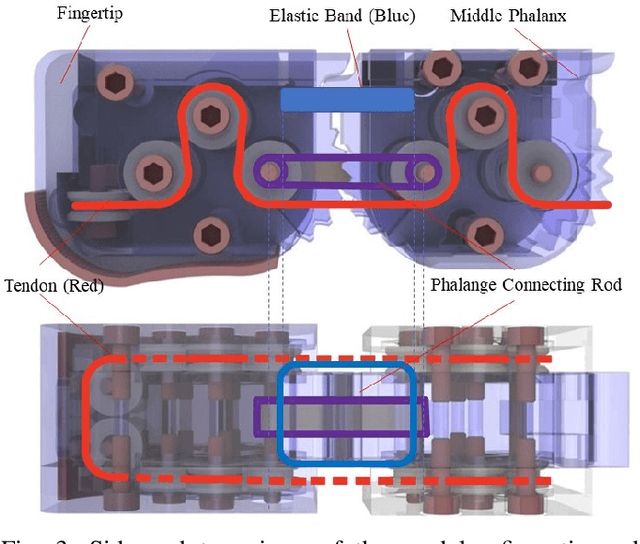

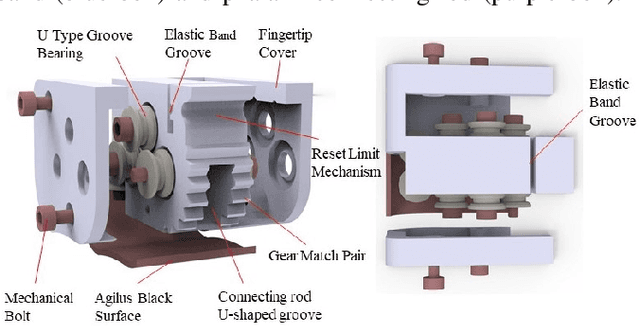

Abstract: For tendon-driven multi-fingered robotic hands, ensuring grasp adaptability while minimizing the number of actuators needed to provide human-like functionality is a challenging problem. Inspired by the Pisa/IIT SoftHand, this paper introduces a 3D-printed, highly-underactuated, five-finger robotic hand named the Tactile SoftHand-A, which features only two actuators. The dual-tendon design allows active control of specific (distal or proximal interphalangeal) joints to adjust the hand's grasp gesture. We have also developed a new design of fully 3D-printed tactile sensor that requires no manual assembly and is printed directly as part of the robotic finger. This sensor is integrated into the fingertips and combined with the antagonistic tendon mechanism to build a human-hand-guided tactile feedback grasping system. The system can actively mirror human hand gestures, adaptively stabilize grasp gestures upon contact, and adjust grasp gestures to prevent object movement after detecting slippage. Finally, we designed four experiments to evaluate the novel fingers coupled with the antagonistic mechanism, assessing control of the robotic hand's gestures, adaptive grasping ability, and human-hand-guided tactile feedback grasping capability. The experimental results demonstrate that the Tactile SoftHand-A can adaptively grasp objects of a wide range of shapes and automatically adjust its gripping gestures upon detecting contact and slippage. Overall, this study points the way towards a class of low-cost, accessible, 3D-printable, underactuated human-like robotic hands, and we openly release the designs so that others can build upon this work. This work is open-sourced at github.com/SoutheastWind/Tactile_SoftHand_A

Tactile-Driven Gentle Grasping for Human-Robot Collaborative Tasks

Mar 16, 2023

Abstract: This paper presents a control scheme for force-sensitive, gentle grasping with a Pisa/IIT anthropomorphic SoftHand equipped with a miniaturised version of the TacTip optical tactile sensor on all five fingertips. The tactile sensors provide high-resolution information about a grasp and how the fingers interact with held objects. We first describe a series of hardware developments for asynchronous sensor data acquisition and processing, resulting in a control loop fast enough for real-time grasp control. We then develop a novel grasp controller that uses tactile feedback from all five fingertip sensors simultaneously to gently and stably grasp 43 objects of varying geometry and stiffness, and apply it to a human-to-robot handover task. These developments open the door to more advanced manipulation with underactuated hands via fast reflexive control using high-resolution tactile sensing.

BRL/Pisa/IIT SoftHand: A Low-cost, 3D-Printed, Underactuated, Tendon-Driven Hand with Soft and Adaptive Synergies

Jun 25, 2022

Abstract: This paper introduces the BRL/Pisa/IIT (BPI) SoftHand: a single-actuator, low-cost, 3D-printed, tendon-driven, underactuated robot hand that can perform a range of grasping tasks. Building on the adaptive synergies of the Pisa/IIT SoftHand, we design a new joint system and tendon routing that incorporate both soft and adaptive synergies, which helps balance the durability, affordability and grasping performance of the hand. The focus of this work is on the design, simulation, synergies and grasping tests of this SoftHand. The novel phalanges are designed and printed based on linkages, gear pairs and geometric restraint mechanisms, and can be applied to most tendon-driven robotic hands. We show that the robot hand can successfully grasp and lift various target objects and adapt to hold complex geometric shapes, reflecting the successful adoption of the soft and adaptive synergies. We intend to open-source the design of the hand so that it can be built cheaply on a home 3D printer. For more details: https://sites.google.com/view/bpi-softhandtactile-group-bri/brlpisaiit-softhand-design
