Christian Veronesi

Hardware-Aware Neural Feature Extraction for Resource-Constrained Devices

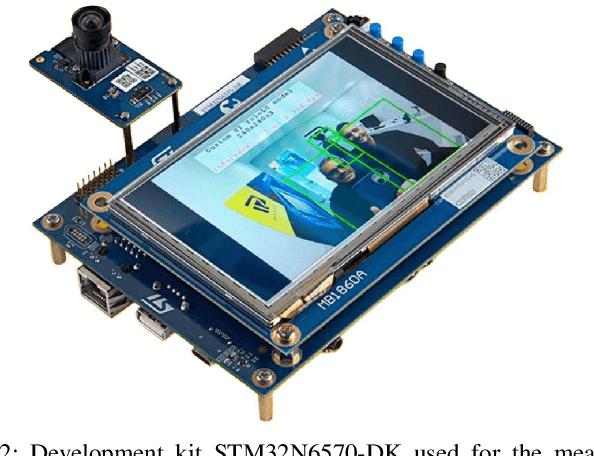

May 05, 2026

Abstract: Visual SLAM is a core component of spatial computing systems, yet deploying learned local feature extractors on microcontroller-class hardware remains challenging due to memory, bandwidth, and quantization constraints. While modern neural descriptors provide strong robustness, their practical adoption is often hindered by system-level bottlenecks that are not captured by FLOP-based efficiency metrics. In this work, we introduce Gideon, a hardware-aware neural feature extractor explicitly designed for resource-constrained devices. Our approach combines relational knowledge distillation from a SuperPoint teacher with differentiable neural architecture search (DNAS) under strict memory and operator constraints. Unlike conventional design pipelines, we treat quantization stability and dynamic-range compactness as first-class objectives. We show that architectural choices such as replacing Batch Normalization with affine layers significantly improve INT8 robustness, and that descriptor dimensionality directly governs quantization resilience. Deployed on STM32N6, Gideon achieves 9.003 ms inference time (111 fps) while remaining below a 1.5 MB memory footprint. Remarkably, INT8 quantization induces negligible degradation and occasionally matches full-precision performance. These results demonstrate that robust learned feature extraction can be reconciled with embedded hardware constraints through holistic hardware-algorithm co-design.
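The link between dynamic-range compactness and INT8 robustness mentioned in the abstract can be illustrated with a small sketch. It assumes plain symmetric per-tensor quantization, which is a generic textbook scheme and not necessarily the one used for Gideon; the function names and sample values are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization (assumed scheme, for illustration only)."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor: the largest magnitude maps to 127.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map INT8 codes back to floating point."""
    return [x * scale for x in q]


def mean_abs_error(values):
    """Average round-trip error introduced by quantizing and dequantizing."""
    q, scale = quantize_int8(values)
    restored = dequantize(q, scale)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


# Hypothetical activations: a compact range, and the same values plus one outlier.
compact = [0.10, -0.25, 0.40, -0.55, 0.70]
wide = compact + [40.0]

print("compact range error:", mean_abs_error(compact))
print("wide range error:   ", mean_abs_error(wide))
```

With a single outlier, the shared scale grows and the small values collapse onto a handful of integer codes, so the round-trip error rises. This is one plausible reason to treat a compact dynamic range as a design objective.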

Benchmarking Energy and Latency in TinyML: A Novel Method for Resource-Constrained AI

May 21, 2025

Abstract: The rise of IoT has increased the need for on-edge machine learning, with TinyML emerging as a promising solution for resource-constrained devices such as microcontrollers (MCUs). However, evaluating their performance remains challenging due to diverse architectures and application scenarios. Current solutions have many non-negligible limitations. This work introduces an alternative benchmarking methodology that integrates energy and latency measurements while distinguishing three execution phases: pre-inference, inference, and post-inference. Additionally, the setup ensures that the device operates without being powered by an external measurement unit, while automated testing can be leveraged to enhance statistical significance. To evaluate our setup, we tested the STM32N6 MCU, which includes an NPU for executing neural networks. Two configurations were considered: high-performance and low-power. The variation of the energy-delay product (EDP) was analyzed separately for each phase, providing insights into the impact of hardware configurations on energy efficiency. Each model was tested 1000 times to ensure statistically relevant results. Our findings demonstrate that reducing the core voltage and clock frequency improves the efficiency of pre- and post-processing without significantly affecting network execution performance. This approach can also be used for cross-platform comparisons to determine the most efficient inference platform and to quantify how pre- and post-processing overhead varies across different hardware implementations.
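The per-phase EDP analysis described above can be sketched as follows, taking EDP as energy multiplied by latency. The data layout, function names, and measurement values are hypothetical placeholders, not figures from the paper.

```python
from statistics import mean


def edp(energy_j, latency_s):
    """Energy-delay product in joule-seconds: energy multiplied by latency."""
    return energy_j * latency_s


def mean_edp_per_phase(runs):
    """Average the EDP over repeated runs, separately for each execution phase.

    `runs` maps a phase name to a list of (energy_j, latency_s) samples.
    """
    return {
        phase: mean([edp(e, t) for e, t in samples])
        for phase, samples in runs.items()
    }


# Hypothetical samples (energy in joules, latency in seconds), two runs per phase.
example_runs = {
    "pre-inference": [(2.0e-3, 4.0e-3), (2.2e-3, 4.2e-3)],
    "inference": [(5.0e-3, 9.0e-3), (5.1e-3, 9.1e-3)],
    "post-inference": [(1.0e-3, 2.0e-3), (1.1e-3, 2.1e-3)],
}

for phase, value in mean_edp_per_phase(example_runs).items():
    print(f"{phase}: {value:.3e} J*s")
```

Running the same aggregation for two hardware configurations and comparing the results phase by phase would show where a change in voltage or clock frequency helps or hurts.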
