Chris Pollard

Hierarchical Neural Simulation-Based Inference Over Event Ensembles

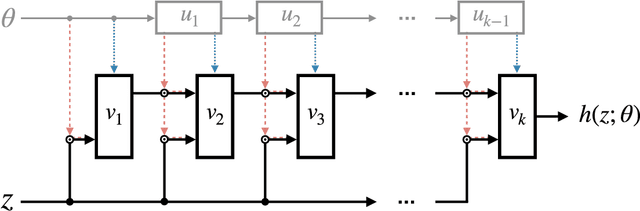

Jun 21, 2023

Abstract: When analyzing real-world data it is common to work with event ensembles, which comprise sets of observations that collectively constrain the parameters of an underlying model of interest. Such models often have a hierarchical structure, where "local" parameters impact individual events and "global" parameters influence the entire dataset. We introduce practical approaches for optimal dataset-wide probabilistic inference in cases where the likelihood is intractable, but simulations can be realized via forward modeling. We construct neural estimators for the likelihood(-ratio) or posterior and show that explicitly accounting for the model's hierarchical structure can lead to tighter parameter constraints. We ground our discussion using case studies from the physical sciences, focusing on examples from particle physics (particle collider data) and astrophysics (strong gravitational lensing observations).

Flow Away your Differences: Conditional Normalizing Flows as an Improvement to Reweighting

Apr 28, 2023

Abstract: We present an alternative to reweighting techniques for modifying distributions to account for a desired change in an underlying conditional distribution, as is often needed to correct for mis-modelling in a simulated sample. We employ conditional normalizing flows to learn the full conditional probability distribution from which we sample new events for conditional values drawn from the target distribution to produce the desired, altered distribution. In contrast to common reweighting techniques, this procedure is independent of binning choice and does not rely on an estimate of the density ratio between two distributions. In several toy examples we show that normalizing flows outperform reweighting approaches to match the distribution of the target. We demonstrate that the corrected distribution closes well with the ground truth, and a statistical uncertainty on the training dataset can be ascertained with bootstrapping. In our examples, this leads to a statistical precision up to three times greater than using reweighting techniques with identical sample sizes for the source and target distributions. We also explore an application in the context of high energy particle physics.

Transport away your problems: Calibrating stochastic simulations with optimal transport

Jul 19, 2021

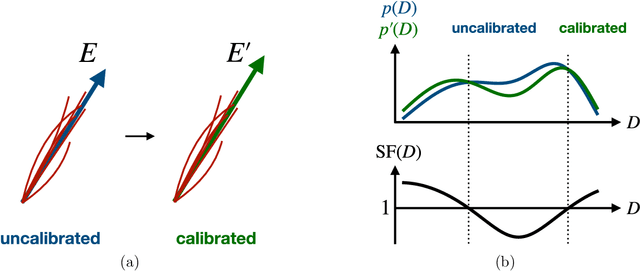

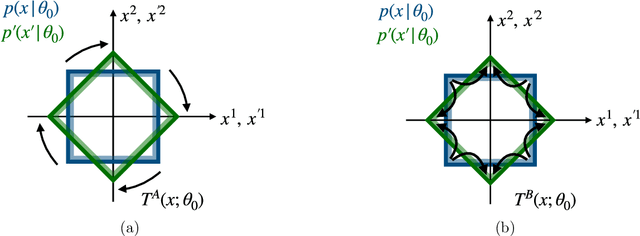

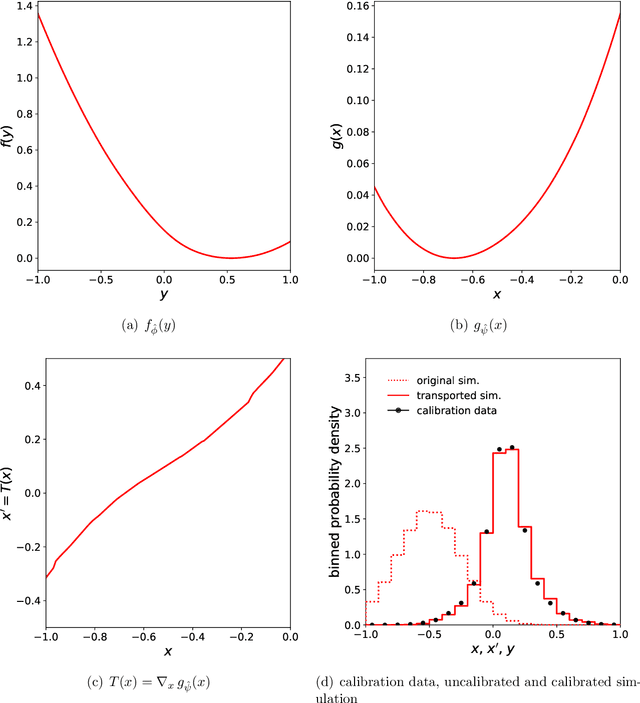

Abstract: Stochastic simulators are an indispensable tool in many branches of science. Often based on first principles, they deliver a series of samples whose distribution implicitly defines a probability measure to describe the phenomena of interest. However, the fidelity of these simulators is not always sufficient for all scientific purposes, necessitating the construction of ad-hoc corrections to "calibrate" the simulation and ensure that its output is a faithful representation of reality. In this paper, we leverage methods from transportation theory to construct such corrections in a systematic way. We use a neural network to compute minimal modifications to the individual samples produced by the simulator such that the resulting distribution becomes properly calibrated. We illustrate the method and its benefits in the context of experimental particle physics, where the need for calibrated stochastic simulators is particularly pronounced.
