Chris Peterson

A GPU-Oriented Algorithm Design for Secant-Based Dimensionality Reduction

Jul 10, 2018

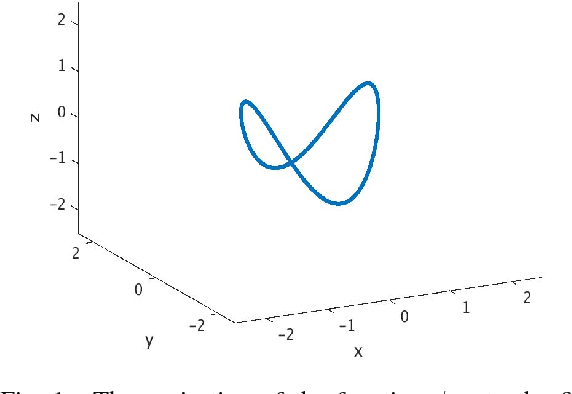

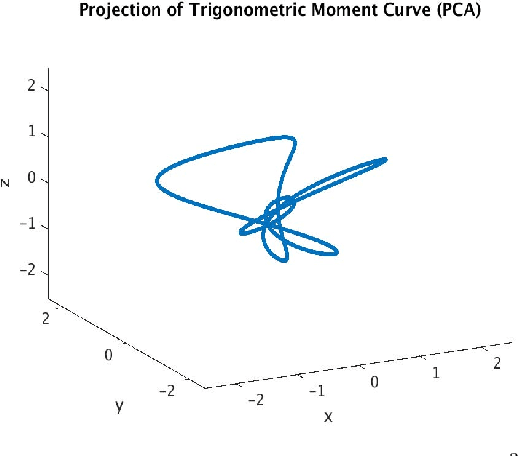

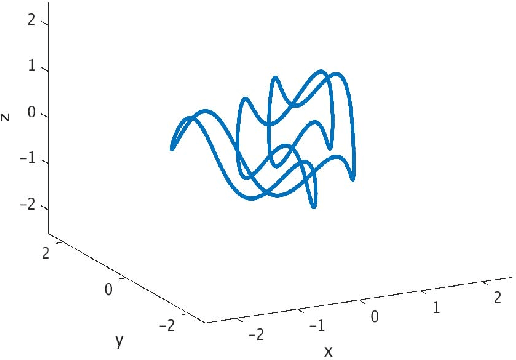

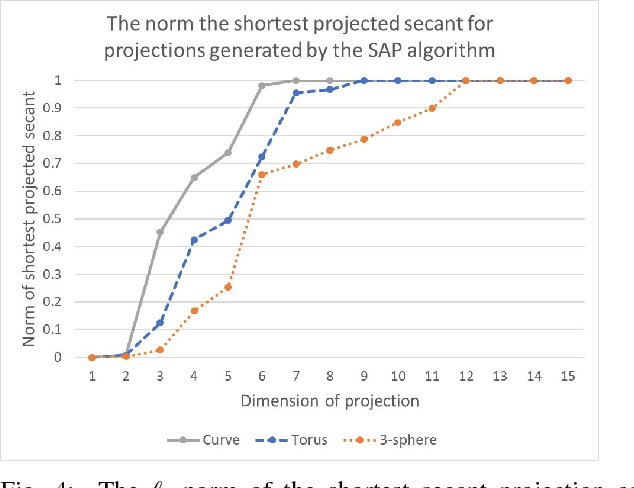

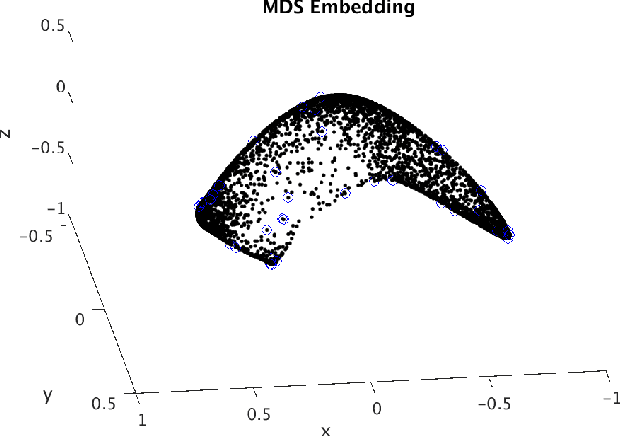

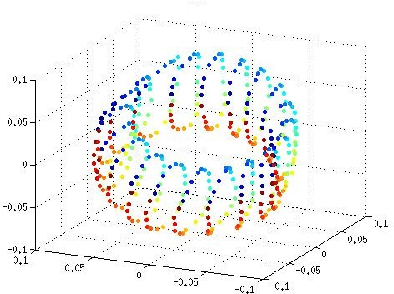

Abstract: Dimensionality-reduction techniques are a fundamental tool for extracting useful information from high-dimensional data sets. Because secant sets encode manifold geometry, they are a useful tool for designing meaningful data-reduction algorithms. In one such approach, the goal is to construct a projection that maximally avoids secant directions and hence ensures that distinct data points are not mapped too close together in the reduced space. This type of algorithm is based on a mathematical framework inspired by the constructive proof of Whitney's embedding theorem from differential topology. Computing all (unit) secants for a set of points is inherently expensive, which makes these algorithms natural candidates for acceleration on GPU architectures. We present a polynomial-time data-reduction algorithm that produces a meaningful low-dimensional representation of a data set by iteratively constructing improved projections within the framework described above. Key to our algorithm design and implementation is the use of GPUs, which, among other things, reduces the time required to compute all secant lines. One goal of this report is to share ideas with GPU experts and to discuss a class of mathematical algorithms that may be of interest to the broader GPU community.
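To make the secant idea concrete, the following is a minimal CPU sketch in NumPy, not the authors' GPU implementation. It computes all unit secants of a point set and scores a candidate projection by the smallest norm of a projected secant; a larger score means the projection keeps distinct points further apart. The function names `unit_secants` and `min_projected_secant_norm`, and the circle test data, are assumptions made for illustration.

```python
import numpy as np

def unit_secants(X):
    """Return all unit secants (x_i - x_j) / ||x_i - x_j|| for i < j, one per row."""
    i, j = np.triu_indices(X.shape[0], k=1)
    diffs = X[i] - X[j]
    norms = np.linalg.norm(diffs, axis=1, keepdims=True)
    keep = norms[:, 0] > 0  # skip coincident points, which have no secant direction
    return diffs[keep] / norms[keep]

def min_projected_secant_norm(S, P):
    """Smallest norm of a projected unit secant; P (n x k) has orthonormal columns."""
    return np.linalg.norm(S @ P, axis=1).min()

# Hypothetical data: 50 points on a unit circle lying in the xy-plane of R^3.
theta = np.linspace(0.0, 2.0 * np.pi, 50, endpoint=False)
X = np.column_stack([np.cos(theta), np.sin(theta), np.zeros_like(theta)])
S = unit_secants(X)

P_good = np.eye(3)[:, :2]  # project onto the xy-plane, which contains every secant
P_bad = np.eye(3)[:, 1:]   # project onto the yz-plane, which collapses x-direction secants

score_good = min_projected_secant_norm(S, P_good)
score_bad = min_projected_secant_norm(S, P_bad)
```

The number of secants grows quadratically with the number of points (1225 rows for 50 points here), which is the cost the abstract refers to when it motivates GPU use.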

Endmember Extraction on the Grassmannian

Jul 03, 2018

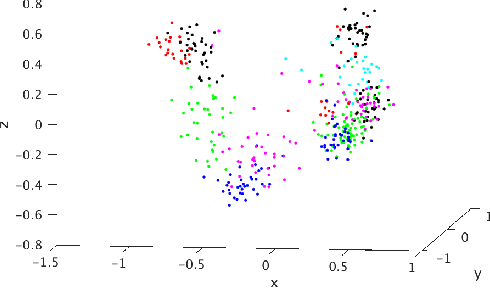

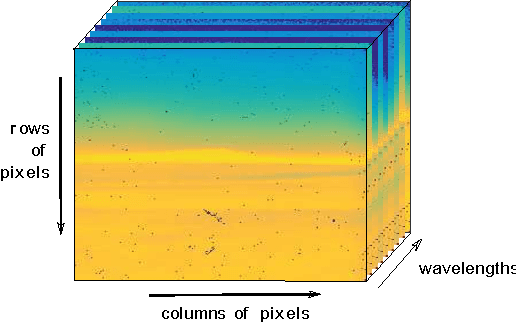

Abstract: Endmember extraction plays a prominent role in a variety of data analysis problems, as endmembers often correspond to data representing the purest or best representative of some feature. Identifying endmembers can then be useful for further identification and classification tasks. In settings with high-dimensional data, such as hyperspectral imagery, it can be useful to consider endmembers that are subspaces, as they are capable of capturing a wider range of variations of a signature. The endmember extraction problem in this setting thus translates to finding the vertices of the convex hull of a set of points on a Grassmannian. In the presence of noise, it can be less clear whether a point should be considered a vertex. In this paper, we propose an algorithm to extract endmembers on a Grassmannian, identify subspaces of interest that lie near the boundary of a convex hull, and demonstrate the use of the algorithm on a synthetic example and on the 220-spectral-band AVIRIS Indian Pines hyperspectral image.

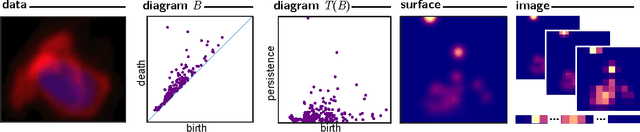

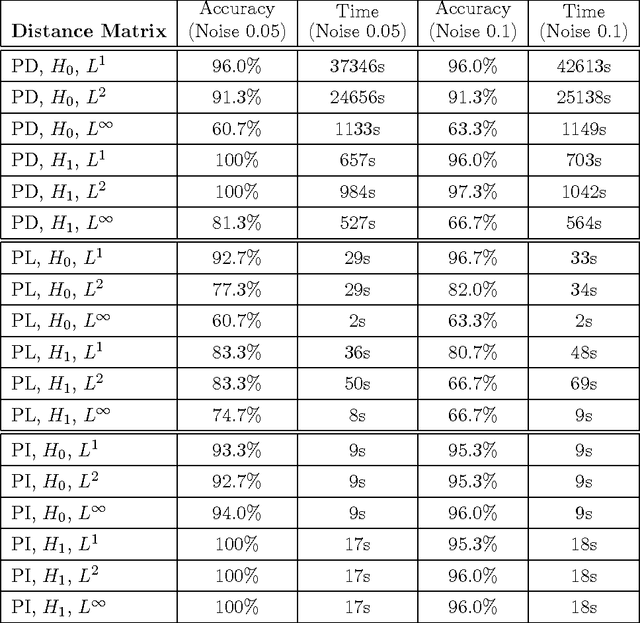

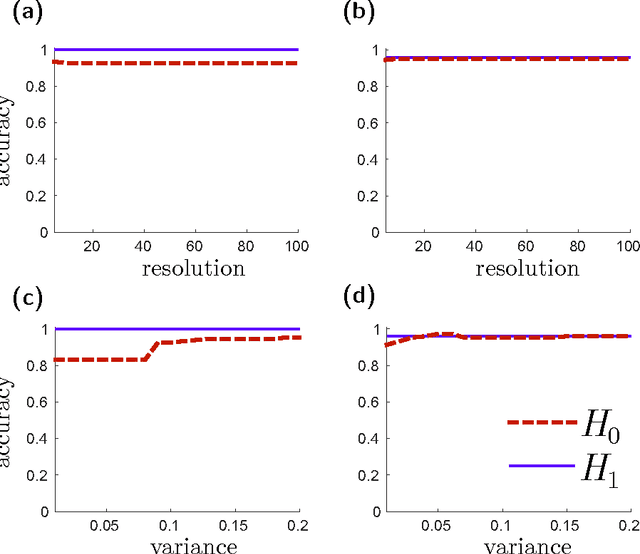

Persistence Images: A Stable Vector Representation of Persistent Homology

Jul 11, 2016

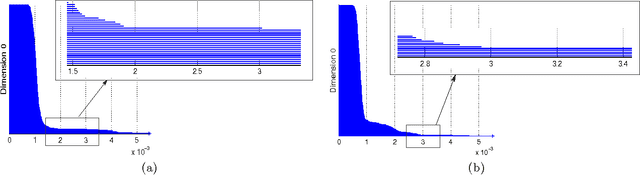

Abstract: Many datasets can be viewed as a noisy sampling of an underlying space, and tools from topological data analysis can characterize this structure for the purpose of knowledge discovery. One such tool is persistent homology, which provides a multiscale description of the homological features within a dataset. A useful representation of this homological information is a persistence diagram (PD). Efforts have been made to map PDs into spaces with additional structure valuable to machine learning tasks. We convert a PD to a finite-dimensional vector representation, which we call a persistence image (PI), and prove the stability of this transformation with respect to small perturbations in the inputs. The discriminatory power of PIs is compared against existing methods, showing significant performance gains. We explore the use of PIs with vector-based machine learning tools, such as linear sparse support vector machines, which identify features containing discriminating topological information. Finally, high-accuracy inference of parameter values from the dynamic output of a discrete dynamical system (the linked twist map) and a partial differential equation (the anisotropic Kuramoto-Sivashinsky equation) provides a novel application of the discriminatory power of PIs.

* Version 3 contains updated theoretical results supporting methodology; expanded discussion of related works; extended list of references; extended applications section; additional experimental results and new figures
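As a rough illustration of turning a PD into a fixed-length vector, the sketch below assumes one common construction: each (birth, death) pair is mapped to (birth, persistence), a Gaussian is centered there, each Gaussian is weighted linearly by persistence, and the resulting surface is sampled at pixel centers. Sampling at centers rather than integrating over each pixel is a simplification, and the function name `persistence_image` and its parameters `resolution`, `sigma`, and `extent` are assumptions, not the paper's notation.

```python
import numpy as np

def persistence_image(diagram, resolution=20, sigma=0.1, extent=(0.0, 1.0)):
    """Vectorize a persistence diagram given as (birth, death) pairs.

    Assumed construction: persistence-weighted Gaussians in (birth, persistence)
    coordinates, sampled at the centers of a resolution x resolution grid.
    """
    diagram = np.asarray(diagram, dtype=float)
    births = diagram[:, 0]
    pers = diagram[:, 1] - diagram[:, 0]

    lo, hi = extent
    step = (hi - lo) / resolution
    centers = lo + (np.arange(resolution) + 0.5) * step
    gx, gy = np.meshgrid(centers, centers)  # gx: birth axis, gy: persistence axis

    image = np.zeros((resolution, resolution))
    for b, p in zip(births, pers):
        gauss = np.exp(-((gx - b) ** 2 + (gy - p) ** 2) / (2.0 * sigma ** 2))
        gauss /= 2.0 * np.pi * sigma ** 2
        image += p * gauss  # linear weight: points on the diagonal contribute nothing
    return image.ravel()

# Hypothetical diagram with two features.
pd_points = [(0.2, 0.7), (0.4, 0.5)]
vec = persistence_image(pd_points)
```

Because the output is an ordinary vector of length `resolution ** 2`, it can be passed directly to vector-based learners such as the sparse support vector machines mentioned in the abstract.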

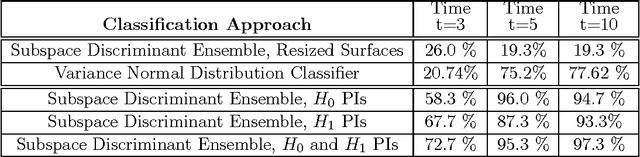

Persistent Homology on Grassmann Manifolds for Analysis of Hyperspectral Movies

Jul 11, 2016

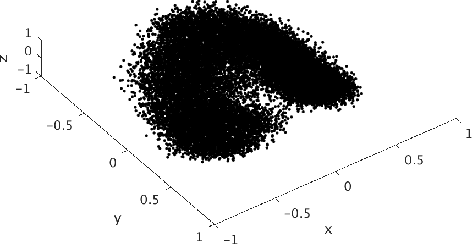

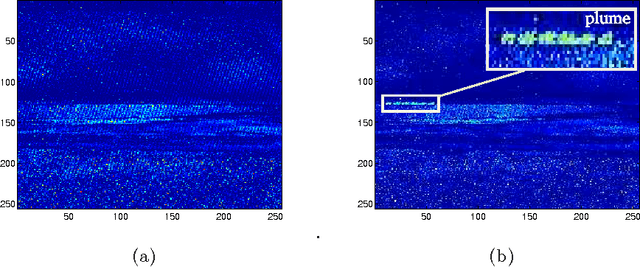

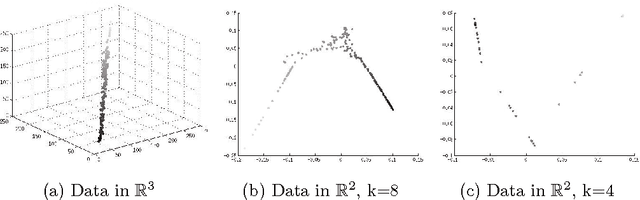

Abstract: The existence of characteristic structure, or shape, in complex data sets has been recognized as increasingly important for mathematical data analysis. This realization has motivated the development of new tools such as persistent homology for exploring topological invariants, or features, in large data sets. In this paper we apply persistent homology to the characterization of gas plumes in time-dependent sequences of hyperspectral cubes, i.e., the analysis of 4-way arrays. We investigate hyperspectral movies of Long-Wavelength Infrared data monitoring an experimental release of chemical simulant into the air. Our approach models regions of interest within the hyperspectral data cubes as points on the real Grassmann manifold $G(k, n)$ (whose points parameterize the $k$-dimensional subspaces of $\mathbb{R}^n$), contrasting our approach with the more standard framework in Euclidean space. An advantage of this approach is that it allows a sequence of time slices in a hyperspectral movie to be collapsed to a sequence of points in such a way that some of the key structure within and between the slices is encoded by the points on the Grassmann manifold. This motivates the search for topological features, associated with the evolution of the frames of a hyperspectral movie, within the corresponding points on the Grassmann manifold. The proposed mathematical model affords the processing of large data sets while retaining valuable discriminatory information. In this paper, we discuss how embedding our data in the Grassmann manifold, together with topological data analysis, captures dynamical events that occur as the chemical plume is released and evolves.

* Version 2: typo corrections
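Persistent homology on points of $G(k, n)$ needs a notion of distance between subspaces. The abstract does not say which metric is used, so the sketch below assumes the geodesic distance, defined as the 2-norm of the vector of principal angles. The helper names `subspace_basis`, `principal_angles`, and `geodesic_distance` are hypothetical.

```python
import numpy as np

def subspace_basis(A, k):
    """Orthonormal basis (n x k) for the dominant k-dimensional column space of A."""
    U, _, _ = np.linalg.svd(A, full_matrices=False)
    return U[:, :k]

def principal_angles(Q1, Q2):
    """Principal angles between the subspaces spanned by orthonormal Q1 and Q2."""
    s = np.linalg.svd(Q1.T @ Q2, compute_uv=False)
    return np.arccos(np.clip(s, -1.0, 1.0))  # clip guards against round-off above 1

def geodesic_distance(Q1, Q2):
    """Assumed metric: 2-norm of the principal-angle vector."""
    return np.linalg.norm(principal_angles(Q1, Q2))

# Two planes in R^3 that share the e1 direction: span(e1, e2) and span(e1, e3).
I3 = np.eye(3)
Q_a = I3[:, [0, 1]]
Q_b = I3[:, [0, 2]]
d_ab = geodesic_distance(Q_a, Q_b)

# The distance depends only on the subspace, not on the basis chosen for it.
c, s = np.cos(0.3), np.sin(0.3)
Q_a_rotated = Q_a @ np.array([[c, -s], [s, c]])
d_same = geodesic_distance(Q_a, Q_a_rotated)
```

A matrix of such pairwise distances between frames is the kind of input a persistent homology computation would take in place of Euclidean distances.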

Locally Linear Embedding Clustering Algorithm for Natural Imagery

Feb 20, 2012

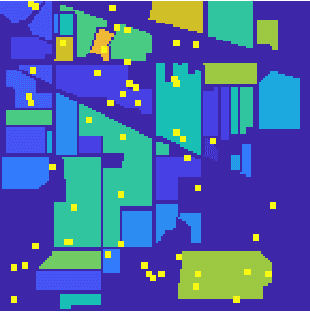

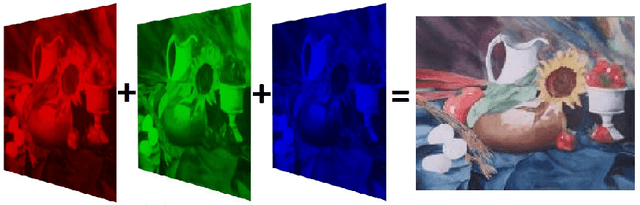

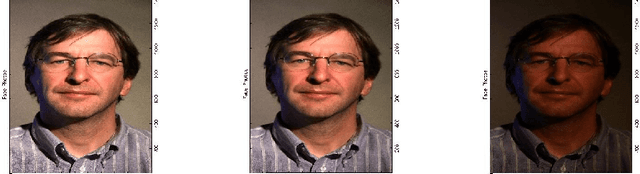

Abstract: The ability to characterize the color content of natural imagery is an important application of image processing. The pixel-by-pixel coloring of images may be viewed naturally as points in color space, and the inherent structure and distribution of these points affords a quantization, through clustering, of the color information in the image. In this paper, we present a novel topologically driven clustering algorithm that permits segmentation of the color features in a digital image. The algorithm blends Locally Linear Embedding (LLE) and vector quantization by mapping color information to a lower-dimensional space, identifying distinct color regions, and classifying pixels together based on both a proximity measure and color content. It is observed that these techniques permit a significant reduction in color resolution while maintaining the visually important features of images.
