Carlo Pinciroli

A Micro-Macro Model of Encounter-Driven Information Diffusion in Robot Swarms

Feb 24, 2026

Abstract:In this paper, we propose the problem of Encounter-Driven Information Diffusion (EDID). In EDID, robots are allowed to exchange information only upon meeting. Crucially, EDID assumes that the robots are not allowed to schedule their meetings. As such, the robots have no means to anticipate when, where, and whom they will meet. As a step towards the design of storage and routing algorithms for EDID, in this paper we propose a model of information diffusion that captures the essential dynamics of EDID. The model is derived from first principles and is composed of two levels: a micro model, based on a generalization of the concept of "mean free path"; and a macro model, which captures the global dynamics of information diffusion. We validate the model through extensive robot simulations, in which we consider swarm size, communication range, environment size, and different random motion regimes. We conclude the paper with a discussion of the implications of this model on the algorithms that best support information diffusion according to the parameters of interest.
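For readers unfamiliar with the term, the following is a minimal sketch of the textbook two-dimensional mean free path, not the generalized micro model proposed in the paper. It assumes a single robot moving in a straight line among stationary robots scattered uniformly over the environment; the function and parameter names are illustrative only.

```python
def mean_free_path_2d(num_robots: int, comm_range: float, area: float) -> float:
    """Textbook 2D mean free path for one mover among stationary targets.

    A robot with communication range `comm_range` sweeps a strip of width
    2 * comm_range as it moves. With the other robots at uniform density
    rho = (num_robots - 1) / area, it meets on average 2 * comm_range * rho
    robots per unit distance travelled, so the expected distance between
    encounters is the reciprocal of that quantity.
    """
    if num_robots < 2:
        raise ValueError("at least two robots are needed for an encounter")
    if comm_range <= 0 or area <= 0:
        raise ValueError("comm_range and area must be positive")
    density = (num_robots - 1) / area
    return 1.0 / (2.0 * comm_range * density)


# Example: 101 robots in a 100 m^2 arena with a 0.5 m communication range.
print(mean_free_path_2d(101, 0.5, 100.0))
```

Under these simplifying assumptions, the expected distance between encounters shrinks as the swarm grows or the communication range increases, and grows with the size of the environment, which are the same parameters the abstract lists for validation.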

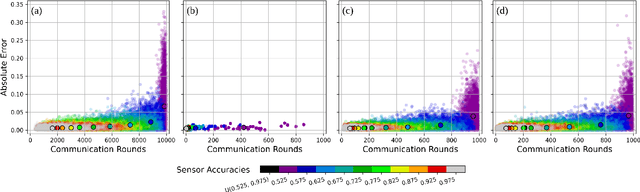

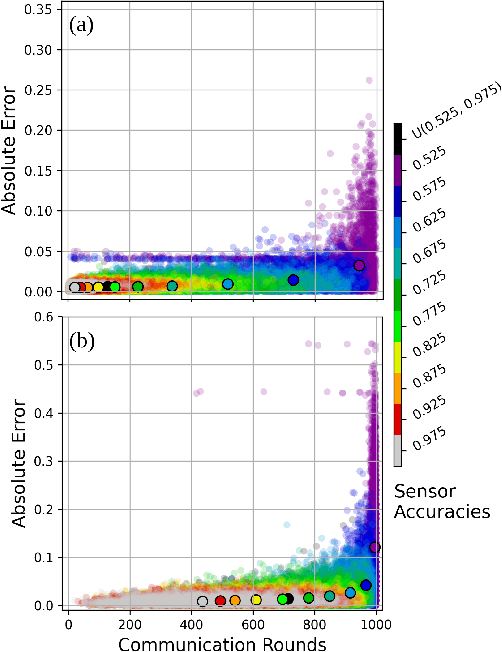

BayesCPF: Enabling Collective Perception in Robot Swarms with Degrading Sensors

Apr 07, 2025

Abstract:The collective perception problem -- where a group of robots perceives its surroundings and comes to a consensus on an environmental state -- is a fundamental problem in swarm robotics. Past works studying collective perception use either an entire robot swarm with perfect sensing or a swarm with only a handful of malfunctioning members. A related study proposed an algorithm that does account for an entire swarm of unreliable robots but assumes that the sensor faults are known and remain constant over time. We build on that study by proposing the Bayes Collective Perception Filter (BayesCPF), which enables robots with continuously degrading sensors to accurately estimate the fill ratio -- the rate at which an environmental feature occurs. Our main contribution is the Extended Kalman Filter within the BayesCPF, which helps swarm robots calibrate for their time-varying sensor degradation. We validate our method across different degradation models, initial conditions, and environments in simulated and physical experiments. Our findings show that, regardless of degradation model assumptions, fill ratio estimation using the BayesCPF is competitive with the case in which the true sensor accuracy is known, especially when assumptions regarding the model and initial sensor accuracy levels are preserved.

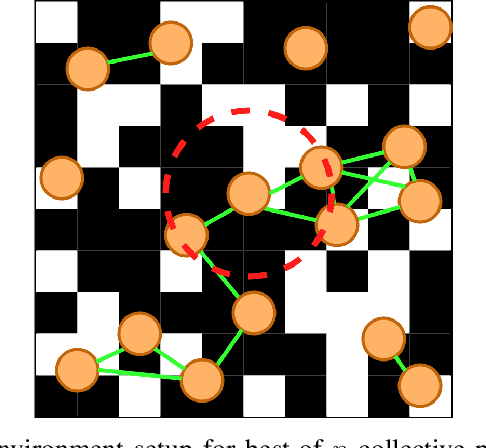

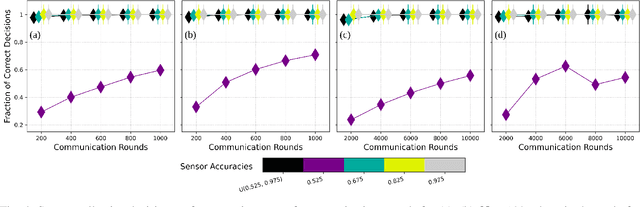

Adaptive Self-Calibration for Minimalistic Collective Perception by Imperfect Robot Swarms

Oct 28, 2024

Abstract:Collective perception is a fundamental problem in swarm robotics, often cast as best-of-$n$ decision-making. Past studies involve robots with perfect sensing or with small numbers of faulty robots. We previously addressed these limitations by proposing an algorithm, here referred to as Minimalistic Collective Perception (MCP) [arxiv:2209.12858], to reach correct decisions despite the entire swarm having severely damaged sensors. However, this algorithm assumes that sensor accuracy is known, which may be infeasible in reality. In this paper, we eliminate this assumption to (i) investigate the decline of estimation performance and (ii) introduce an Adaptive Sensor Degradation Filter (ASDF) to mitigate the decline. We combine the MCP algorithm and a hypothesis test to enable adaptive self-calibration of robots' assumed sensor accuracy. We validate our approach across several parameters of interest. Our findings show that estimation performance by a swarm with correctly known accuracy is superior to that by a swarm unaware of its accuracy. However, the ASDF drastically mitigates the damage, even reaching the performance levels of robots aware a priori of their correct accuracy.

Reactive Multi-Robot Navigation in Outdoor Environments Through Uncertainty-Aware Active Learning of Human Preference Landscape

Sep 25, 2024

Abstract:Compared with single robots, Multi-Robot Systems (MRS) can perform missions more efficiently due to the presence of multiple members with diverse capabilities. However, deploying an MRS in wide real-world environments is still challenging due to varied and uncertain obstacles (e.g., building clusters and trees). With a limited understanding of how environmental uncertainty affects performance, an MRS cannot flexibly adjust its behaviors (e.g., teaming, load sharing, trajectory planning) to ensure both environment adaptation and task accomplishment. In this work, a novel joint preference landscape learning and behavior adjusting framework (PLBA) is designed. PLBA efficiently integrates real-time human guidance into MRS coordination and utilizes Sparse Variational Gaussian Processes with Varying Output Noise to quickly assess human preferences by leveraging spatial correlations between environment characteristics. An optimization-based behavior-adjusting method then safely adapts MRS behaviors to environments. To validate PLBA's effectiveness in MRS behavior adaptation, a flood disaster search and rescue task was designed. 20 human users provided 1764 pieces of feedback based on human preferences obtained from MRS behaviors related to "task quality", "task progress", and "robot safety". The prediction accuracy and adaptation speed results show the effectiveness of PLBA in preference learning and MRS behavior adaptation.

Decentralized Multi-Agent Reinforcement Learning with Global State Prediction

Jun 22, 2023

Abstract:Deep reinforcement learning (DRL) has seen remarkable success in the control of single robots. However, applying DRL to robot swarms presents significant challenges. A critical challenge is non-stationarity, which occurs when two or more robots update individual or shared policies concurrently, thereby engaging in an interdependent training process with no guarantees of convergence. Circumventing non-stationarity typically involves training the robots with global information about other agents' states and/or actions. In contrast, in this paper we explore how to remove the need for global information. We pose our problem as a Partially Observable Markov Decision Process, due to the absence of global knowledge on other agents. Using collective transport as a testbed scenario, we study two approaches to multi-agent training. In the first, the robots exchange no messages, and are trained to rely on implicit communication through push-and-pull on the object to transport. In the second approach, we introduce Global State Prediction (GSP), a network trained to form a belief over the swarm as a whole and predict its future states. We provide a comprehensive study over four well-known deep reinforcement learning algorithms in environments with obstacles, measuring performance as the successful transport of the object to the goal within a desired time-frame. Through an ablation study, we show that including GSP boosts performance and increases robustness when compared with methods that use global knowledge.

Heterogeneous Coalition Formation and Scheduling with Multi-Skilled Robots

Jun 20, 2023

Abstract:We present an approach to task scheduling in heterogeneous multi-robot systems. In our setting, the tasks to complete require diverse skills. We assume that each robot is multi-skilled, i.e., each robot offers a subset of the possible skills. This makes the formation of heterogeneous teams (coalitions) a requirement for task completion. We present two centralized algorithms to schedule robots across tasks and to form suitable coalitions, assuming stochastic travel times across tasks. The coalitions are dynamic, in that the robots form and disband coalitions as the schedule is executed. The first algorithm we propose guarantees optimality, but its run-time is acceptable only for small problem instances. The second algorithm we propose can tackle large problems with short run-times, and is based on a heuristic approach that typically reaches 1x-2x of the optimal solution cost.
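To make the notion of a coalition of multi-skilled robots concrete, here is a minimal sketch of the feasibility condition implied by the abstract: a coalition can complete a task only if the skills its members offer jointly cover the skills the task requires. This is not either of the paper's scheduling algorithms; the robot names, skill names, and the `coalition_covers` helper are hypothetical.

```python
def coalition_covers(task_skills: set, coalition: list, robot_skills: dict) -> bool:
    """Return True if the robots in `coalition` jointly offer every skill in `task_skills`."""
    offered = set()
    for robot in coalition:
        # Each multi-skilled robot contributes its own subset of skills.
        offered |= robot_skills[robot]
    return task_skills <= offered


# Hypothetical robots, each offering a subset of the possible skills.
robot_skills = {
    "r1": {"lift", "scan"},
    "r2": {"weld"},
    "r3": {"scan", "drive"},
}
task = {"lift", "weld"}

print(coalition_covers(task, ["r1", "r2"], robot_skills))  # True: lift from r1, weld from r2
print(coalition_covers(task, ["r1", "r3"], robot_skills))  # False: nobody can weld
```

A scheduler would additionally have to decide when each coalition forms and disbands and account for travel times, which this check does not attempt.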

Minimalistic Collective Perception with Imperfect Sensors

Sep 26, 2022

Abstract:Collective perception is a foundational problem in swarm robotics, in which the swarm must reach consensus on a coherent representation of the environment. An important variant of collective perception casts it as a best-of-$n$ decision-making process, in which the swarm must identify the most likely representation out of a set of alternatives. Past work on this variant primarily focused on characterizing how different algorithms navigate the speed-vs-accuracy tradeoff in a scenario where the swarm must decide on the most frequent environmental feature. Crucially, past work on best-of-$n$ decision-making assumes the robot sensors to be perfect (noise- and fault-less), limiting the real-world applicability of these algorithms. In this paper, we derive from first principles an optimal, probabilistic framework for minimalistic swarm robots equipped with flawed sensors. Then, we validate our approach in a scenario where the swarm collectively decides the frequency of a certain environmental feature. We study the speed and accuracy of the decision-making process with respect to several parameters of interest. Our approach can provide timely and accurate frequency estimates even in presence of severe sensory noise.
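As a rough illustration of why known sensor accuracy matters for frequency estimation, the sketch below corrects a raw proportion of binary observations for sensor error. It is a simple moment-matching correction under assumed conditions, not the probabilistic framework derived in the paper: the environment is assumed to have two feature values (called "black" and "white" here), and `acc_black` and `acc_white` are hypothetical names for the probabilities that the sensor reports each value correctly.

```python
def estimate_fill_ratio(num_black_reports: int, num_reports: int,
                        acc_black: float, acc_white: float) -> float:
    """Estimate the true fraction f of black features from noisy binary reports.

    The expected fraction of "black" reports is
        p = f * acc_black + (1 - f) * (1 - acc_white),
    so solving for f gives
        f = (p + acc_white - 1) / (acc_black + acc_white - 1).
    The result is clipped to [0, 1] because sampling noise can push it outside.
    """
    if num_reports <= 0:
        raise ValueError("num_reports must be positive")
    denom = acc_black + acc_white - 1.0
    if abs(denom) < 1e-12:
        raise ValueError("sensor is uninformative when acc_black + acc_white == 1")
    observed = num_black_reports / num_reports
    estimate = (observed + acc_white - 1.0) / denom
    return min(1.0, max(0.0, estimate))


# 62 "black" reports out of 100 from a sensor that is right 80% of the time.
print(estimate_fill_ratio(62, 100, 0.8, 0.8))
```

With perfect sensors the correction reduces to the raw proportion; as the two accuracies approach 0.5 the denominator approaches zero and the estimate becomes increasingly sensitive to noise.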

Extracting Symbolic Models of Collective Behaviors with Graph Neural Networks and Macro-Micro Evolution

May 02, 2022

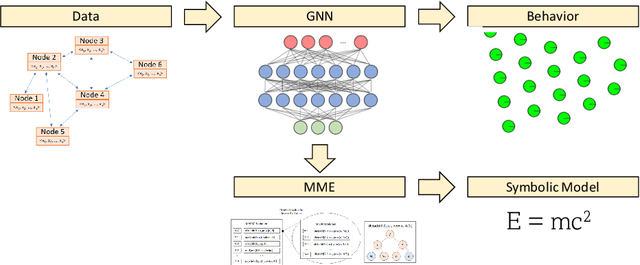

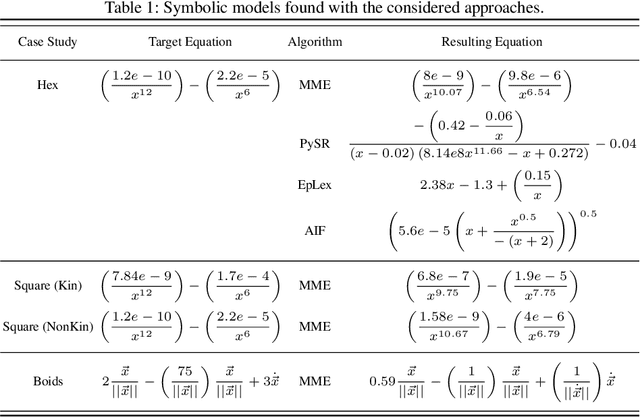

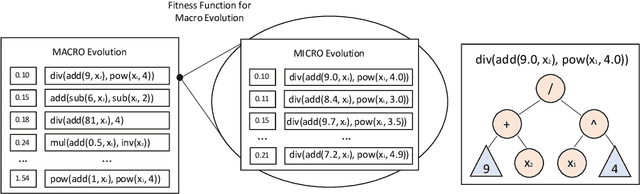

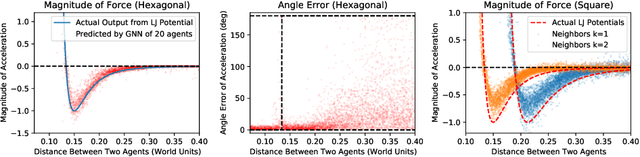

Abstract:Collective behaviors are typically hard to model. The scale of the swarm, the large number of interactions, and the richness and complexity of the behaviors are factors that make it difficult to distill a collective behavior into simple symbolic expressions. In this paper, we propose a novel approach to symbolic regression designed to facilitate such modeling. Using raw and post-processed data as an input, our approach produces viable symbolic expressions that closely model the target behavior. Our approach is composed of two phases. In the first, a graph neural network (GNN) is trained to extract an approximation of the target behavior. In the second phase, the GNN is used to produce data for a nested evolutionary algorithm called macro-micro evolution (MME). The macro layer of this algorithm selects candidate symbolic expressions, while the micro layer tunes its parameters. Experimental evaluation shows that our approach outperforms competing solutions for symbolic regression, making it possible to extract compact expressions for complex swarm behaviors.

A Study of Reinforcement Learning Algorithms for Aggregates of Minimalistic Robots

Mar 28, 2022

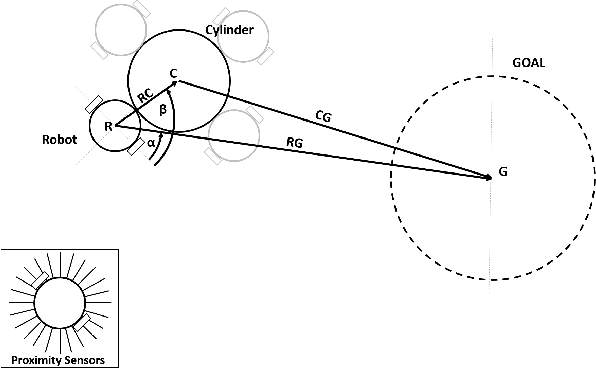

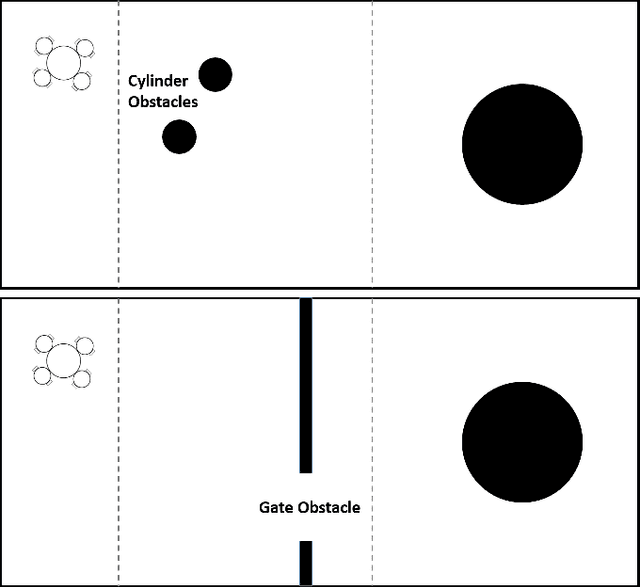

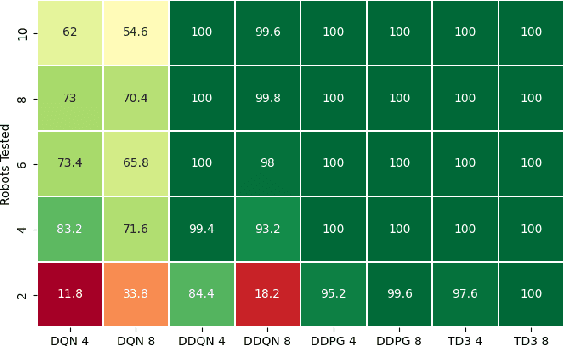

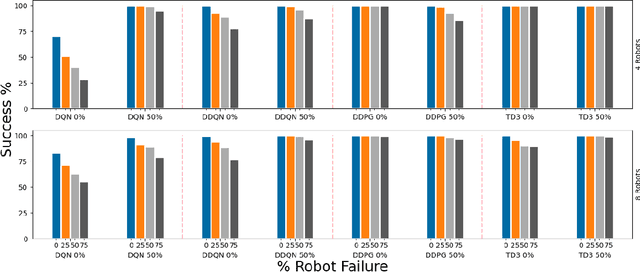

Abstract:The aim of this paper is to study how to apply deep reinforcement learning for the control of aggregates of minimalistic robots. We define aggregates as groups of robots with a physical connection that compels them to form a specified shape. In our case, the robots are pre-attached to an object that must be collectively transported to a known location. Minimalism, in our setting, stems from the barebone capabilities we assume: The robots can sense the target location and the immediate obstacles, but lack the means to communicate explicitly through, e.g., message-passing. In our setting, communication is implicit, i.e., mediated by aggregated push-and-pull on the object exerted by each robot. We analyze the ability to reach coordinated behavior of four well-known algorithms for deep reinforcement learning (DQN, DDQN, DDPG, and TD3). Our experiments include robot failures and different types of environmental obstacles. We compare the performance of the best control strategies found, highlighting strengths and weaknesses of each of the considered training algorithms.

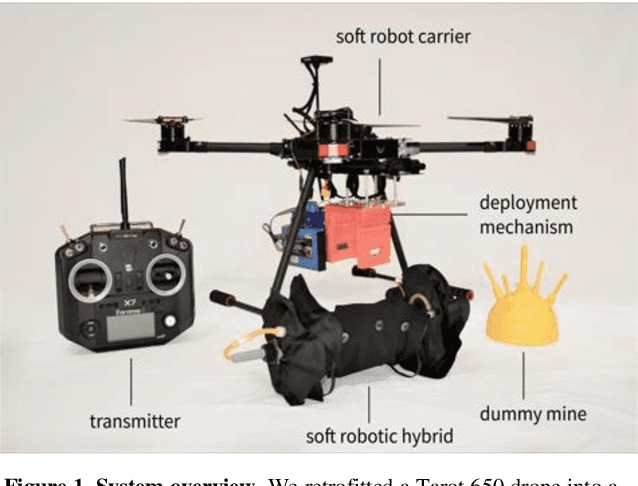

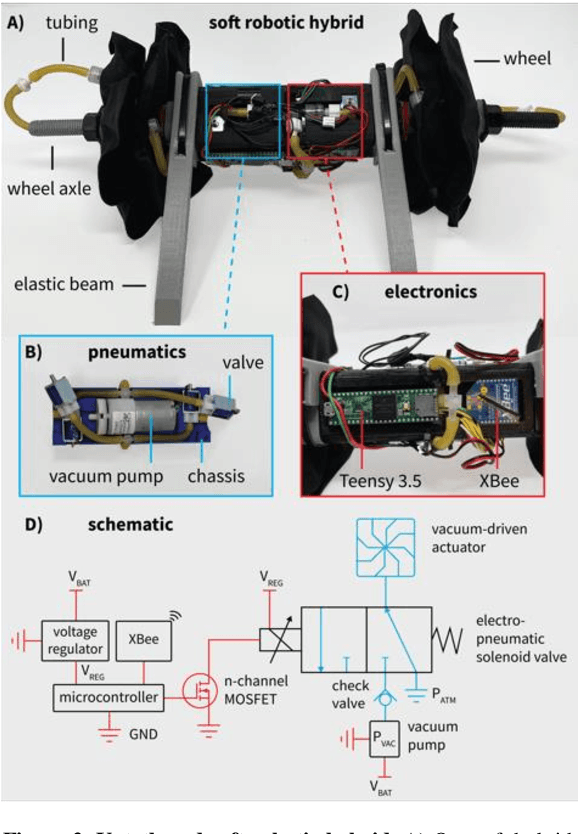

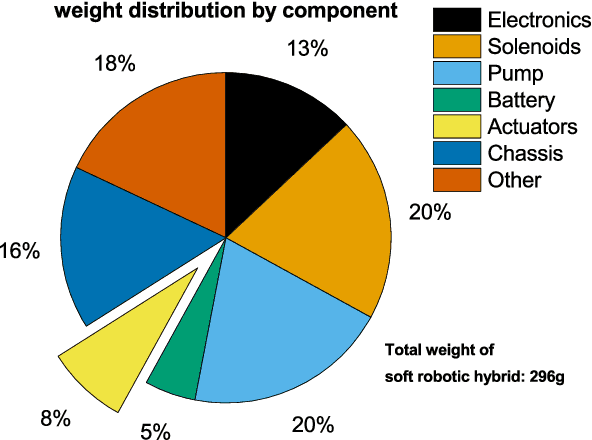

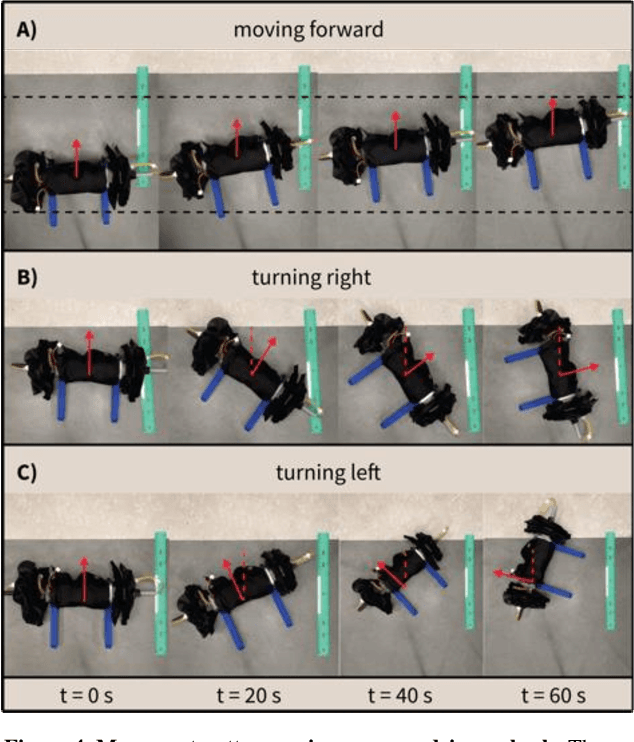

Air-Releasable Soft Robots for Explosive Ordnance Disposal

Feb 07, 2022

Abstract:The demining of landmines using drones is challenging; air-releasable payloads are typically non-intelligent (e.g., water balloons or explosives) and deploying them at even low altitudes (~6 m) is inherently inaccurate due to complex deployment trajectories and constrained visual awareness by the drone pilot. Soft robotics offers a unique approach for aerial demining, namely due to the robust, low-cost, and lightweight designs of soft robots. Instead of non-intelligent payloads, here, we propose the use of air-releasable soft robots for demining. We developed a full system consisting of an unmanned aerial vehicle retrofitted to a soft robot carrier including a custom-made deployment mechanism, and an air-releasable, lightweight (296 g), untethered soft hybrid robot with integrated electronics that incorporates a new type of vacuum-based flasher roller actuator system. We demonstrate a deployment cycle in which the drone drops the soft robotic hybrid from an altitude of 4.5 m, after which the robot approaches a dummy landmine. By deploying soft robots at points of interest, we can transition soft robotic technologies from the laboratory to real-world environments.
