Bernhard Englitz

Deep probabilistic model synthesis enables unified modeling of whole-brain neural activity across individual subjects

Mar 15, 2026

Abstract: Many disciplines need quantitative models that synthesize experimental data across multiple instances of the same general system. For example, neuroscientists must combine data from the brains of many individual animals to understand the species' brain in general. However, typical machine learning models treat one system instance at a time. Here we introduce a machine learning framework, deep probabilistic model synthesis (DPMS), that leverages system properties auxiliary to the model to combine data across system instances. DPMS specifically uses variational inference to learn a conditional prior distribution and instance-specific posterior distributions over model parameters that respectively tie together the system instances and capture their unique structure. DPMS can synthesize a wide variety of model classes, such as those for regression, classification, and dimensionality reduction, and we demonstrate its ability to improve upon single-instance models on synthetic data and whole-brain neural activity data from larval zebrafish.

Trace your sources in large-scale data: one ring to find them all

Mar 23, 2018

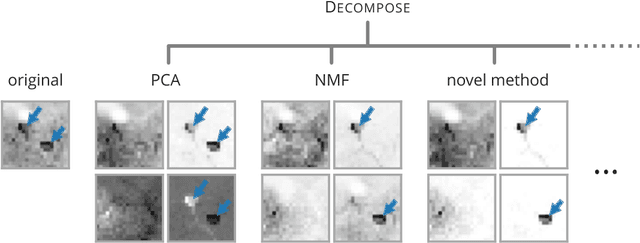

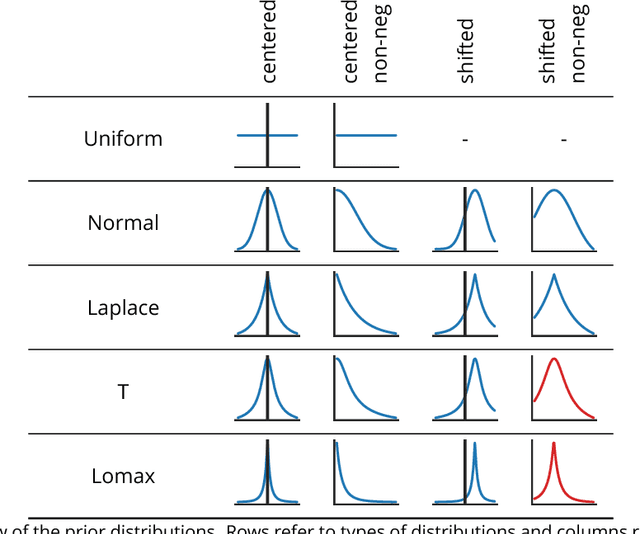

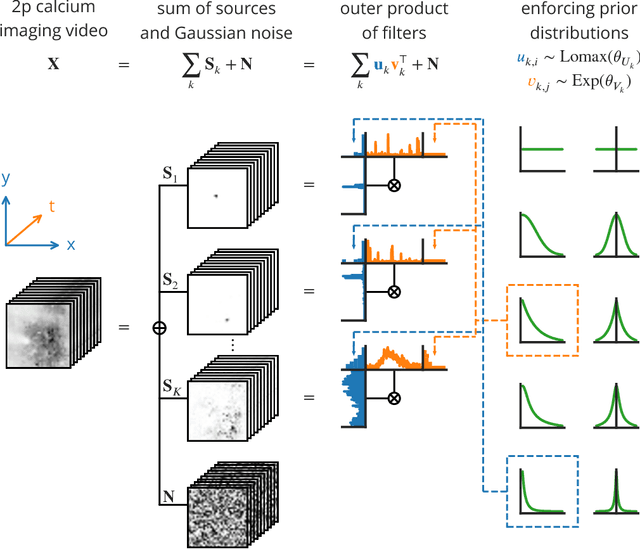

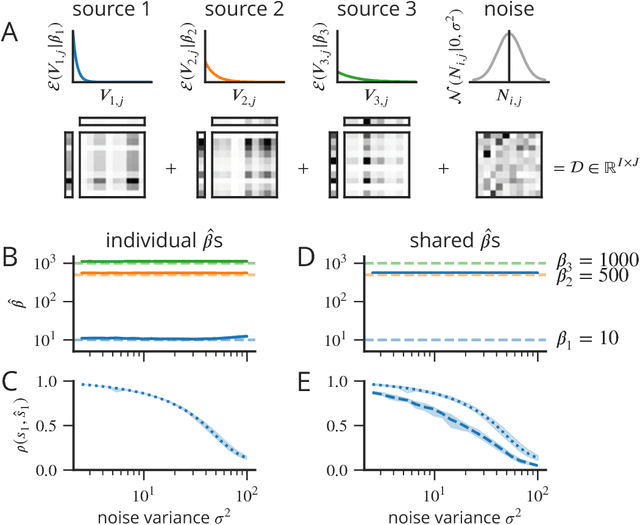

Abstract: An important preprocessing step in most data analysis pipelines aims to extract a small set of sources that explain most of the data. Currently used algorithms for blind source separation (BSS), however, often fail to extract the desired sources and need extensive cross-validation. In contrast, their rarely used probabilistic counterparts can get away with little cross-validation and are more accurate and reliable, but no simple and scalable implementations are available. Here we present a novel probabilistic BSS framework (DECOMPOSE) that can be flexibly adjusted to the data, is extensible and easy to use, adapts to individual sources, and handles large-scale data through algorithmic efficiency. DECOMPOSE encompasses and generalises many traditional BSS algorithms such as PCA, ICA and NMF, and we demonstrate substantial improvements in accuracy and robustness on artificial and real data.
