Benjamin Migliori

Loss Landscape Geometry and the Learning of Symmetries: Or, What Influence Functions Reveal About Robust Generalization

Jan 28, 2026

Abstract: We study how neural emulators of partial differential equation solution operators internalize physical symmetries by introducing an influence-based diagnostic that measures the propagation of parameter updates between symmetry-related states, defined as the metric-weighted overlap of loss gradients evaluated along group orbits. This quantity probes the local geometry of the learned loss landscape and goes beyond forward-pass equivariance tests by directly assessing whether learning dynamics couple physically equivalent configurations. Applying our diagnostic to autoregressive fluid flow emulators, we show that orbit-wise gradient coherence provides the mechanism for learning to generalize over symmetry transformations and indicates when training selects a symmetry-compatible basin. The result is a novel technique for evaluating whether surrogate models have internalized symmetry properties of the known solution operator.
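To make the phrase "metric-weighted overlap of loss gradients evaluated along group orbits" concrete, here is a minimal sketch under strong assumptions; it is not the paper's implementation. The toy linear model, the cyclic shift standing in for the symmetry group, the diagonal metric, and all function names (`shift`, `loss_grad`, `overlap`, `orbit_coherence`) are hypothetical choices made for illustration only.

```python
import math

def shift(x, k):
    """Cyclic translation of a list; an assumed stand-in for a symmetry action."""
    k %= len(x)
    return x[-k:] + x[:-k] if k else list(x)

def loss_grad(w, x, y):
    """Gradient w.r.t. w of the toy loss 0.5 * (w . x - y)^2."""
    residual = sum(wi * xi for wi, xi in zip(w, x)) - y
    return [residual * xi for xi in x]

def overlap(g1, g2, metric=None):
    """Normalized overlap g1^T M g2 for a diagonal metric M (identity by default)."""
    m = metric or [1.0] * len(g1)
    dot = lambda a, b: sum(mi * ai * bi for mi, ai, bi in zip(m, a, b))
    denom = math.sqrt(dot(g1, g1) * dot(g2, g2))
    return dot(g1, g2) / denom if denom > 0 else 0.0

def orbit_coherence(w, x, y):
    """Overlap between the gradient at x and the gradient at each shifted copy of x."""
    g0 = loss_grad(w, x, y)
    return [overlap(g0, loss_grad(w, shift(x, k), y)) for k in range(len(x))]

# Toy example: shift-invariant weights and a shift-invariant target.
weights = [0.5, 0.5, 0.5, 0.5]
state = [1.0, 2.0, 3.0, 4.0]
coherence = orbit_coherence(weights, state, 1.0)
print(coherence)
```

In this toy setting, values near 1 mean that a gradient step computed on one state also reduces the loss on its shifted copies, which is the kind of coupling between symmetry-related configurations the abstract describes.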

Generative adversarial interpolative autoencoding: adversarial training on latent space interpolations encourage convex latent distributions

Jul 17, 2018

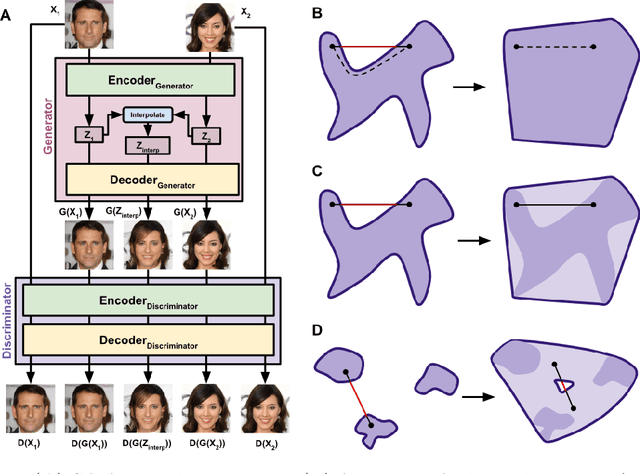

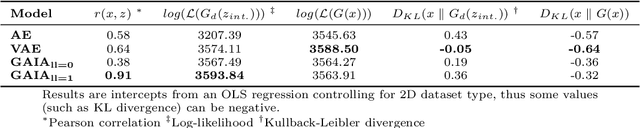

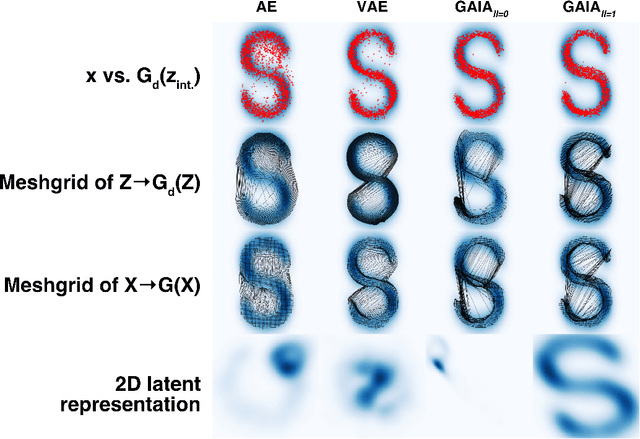

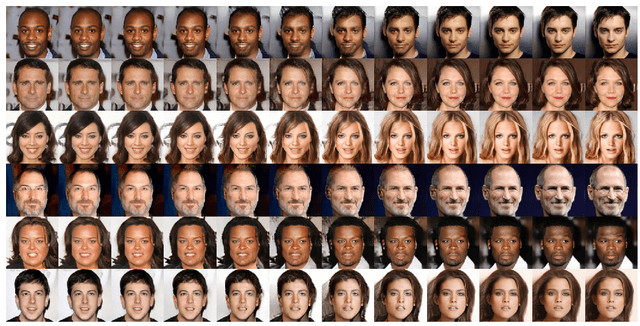

Abstract: We present a neural network architecture based upon the Autoencoder (AE) and Generative Adversarial Network (GAN) that promotes a convex latent distribution by training adversarially on latent space interpolations. By using an AE as both the generator and discriminator of a GAN, we pass a pixel-wise error function across the discriminator, yielding an AE which produces non-blurry samples that match both high- and low-level features of the original images. Interpolations between images in this space remain within the latent-space distribution of real images as trained by the discriminator, and therefore preserve realistic resemblances to the network inputs.
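The latent space interpolation at the center of this abstract can be sketched as a convex combination of two latent codes. This is an illustrative sketch only: the helper names (`interpolate`, `interpolation_path`) and the two-dimensional latent vectors are assumptions, and the encoder, decoder, and discriminator that would surround this step in the actual architecture are not shown.

```python
def interpolate(z_a, z_b, alpha):
    """Convex combination alpha * z_a + (1 - alpha) * z_b of two latent vectors."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(z_a, z_b)]

def interpolation_path(z_a, z_b, steps):
    """Evenly spaced latent points running from z_b (alpha=0) to z_a (alpha=1)."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [interpolate(z_a, z_b, i / (steps - 1)) for i in range(steps)]

# Hypothetical latent codes for two encoded images.
z_a = [1.0, 0.0]
z_b = [0.0, 2.0]
path = interpolation_path(z_a, z_b, 5)
print(path)
```

If the latent distribution is convex, every point on such a path lies inside it, so decoding those points should yield images the discriminator treats as realistic.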

Biologically Inspired Radio Signal Feature Extraction with Sparse Denoising Autoencoders

May 17, 2016

Abstract: Automatic modulation classification (AMC) is an important task for modern communication systems; however, it is a challenging problem when signal features and precise models for generating each modulation may be unknown. We present a new biologically-inspired AMC method without the need for models or manually specified features, thus removing the requirement for expert prior knowledge. We accomplish this task using regularized stacked sparse denoising autoencoders (SSDAs). Our method selects efficient classification features directly from raw in-phase/quadrature (I/Q) radio signals in an unsupervised manner. These features are then used to construct higher-complexity abstract features which can be used for automatic modulation classification. We demonstrate this process using a dataset generated with a software defined radio, consisting of random input bits encoded in 100-sample segments of various common digital radio modulations. Our results show correct classification rates of > 99% at 7.5 dB signal-to-noise ratio (SNR) and > 92% at 0 dB SNR in a 6-way classification test. Our experiments demonstrate a dramatically new and broadly applicable mechanism for performing AMC and related tasks without the need for expert-defined or modulation-specific signal information.
