Ashish Mehta

The Dynamics of Delusion: Modeling Bidirectional False Belief Amplification in Human-Chatbot Dialogue

Apr 28, 2026

Abstract:There is growing concern that AI chatbots might fuel delusional beliefs in users. Some have suggested that humans and chatbots mutually reinforce false beliefs over time, but quantitative evidence is lacking. Using a unique dataset of chat logs from individuals who exhibited delusional thinking, we developed a latent state model that captures accumulating and decaying influences between humans and chatbots. We find that a bidirectional influence model substantially outperforms a unidirectional alternative where humans are the primary driver of delusion. We find that humans exert strong but short-lived influence on chatbots, whereas chatbots exert longer-lasting influence on humans. Moreover, chatbots exert strong, stable self-influence over their own future outputs that tends to perpetuate delusions over long stretches of conversation. In fact, this chatbot self-influence constituted the dominant pathway when considering accumulated influence over time. Overall, these results indicate that humans tend to drive sharp, immediate increases in delusion, whereas chatbots sustain and propagate these effects over longer timescales. Together, these findings provide the first quantitative evidence that human-chatbot interactions can form feedback loops of delusion, decomposable into distinct pathways with dissociable temporal dynamics. By doing so, they can inform the development of safer AI systems.

Characterizing Delusional Spirals through Human-LLM Chat Logs

Mar 17, 2026

Abstract:As large language models (LLMs) have proliferated, disturbing anecdotal reports of negative psychological effects, such as delusions, self-harm, and "AI psychosis," have emerged in global media and legal discourse. However, it remains unclear how users and chatbots interact over the course of lengthy delusional "spirals," limiting our ability to understand and mitigate the harm. In our work, we analyze logs of conversations with LLM chatbots from 19 users who report having experienced psychological harms from chatbot use. Many of our participants come from a support group for such chatbot users. We also include chat logs from participants covered by media outlets in widely-distributed stories about chatbot-reinforced delusions. In contrast to prior work that speculates on potential AI harms to mental health, to our knowledge we present the first in-depth study of such high-profile and veridically harmful cases. We develop an inventory of 28 codes and apply it to the 391,562 messages in the logs. Codes include whether a user demonstrates delusional thinking (15.5% of user messages), a user expresses suicidal thoughts (69 validated user messages), or a chatbot misrepresents itself as sentient (21.2% of chatbot messages). We analyze the co-occurrence of message codes. We find, for example, that messages that declare romantic interest and messages where the chatbot describes itself as sentient occur much more often in longer conversations, suggesting that these topics could promote or result from user over-engagement and that safeguards in these areas may degrade in multi-turn settings. We conclude with concrete recommendations for how policymakers, LLM chatbot developers, and users can use our inventory and conversation analysis tool to understand and mitigate harm from LLM chatbots. Warning: This paper discusses self-harm, trauma, and violence.

Generative Active Learning for Long-tail Trajectory Prediction via Controllable Diffusion Model

Jul 30, 2025

Abstract:While data-driven trajectory prediction has enhanced the reliability of autonomous driving systems, it still struggles with rarely observed long-tail scenarios. Prior works addressed this by modifying model architectures, such as using hypernetworks. In contrast, we propose refining the training process to unlock each model's potential without altering its structure. We introduce Generative Active Learning for Trajectory prediction (GALTraj), the first method to successfully deploy generative active learning into trajectory prediction. It actively identifies rare tail samples where the model fails and augments these samples with a controllable diffusion model during training. In our framework, generating scenarios that are diverse, realistic, and preserve tail-case characteristics is paramount. Accordingly, we design a tail-aware generation method that applies tailored diffusion guidance to generate trajectories that both capture rare behaviors and respect traffic rules. Unlike prior simulation methods focused solely on scenario diversity, GALTraj is the first to show how simulator-driven augmentation benefits long-tail learning in trajectory prediction. Experiments on multiple trajectory datasets (WOMD, Argoverse2) with popular backbones (QCNet, MTR) confirm that our method significantly boosts performance on tail samples and also enhances accuracy on head samples.

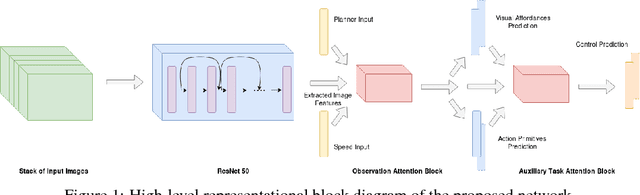

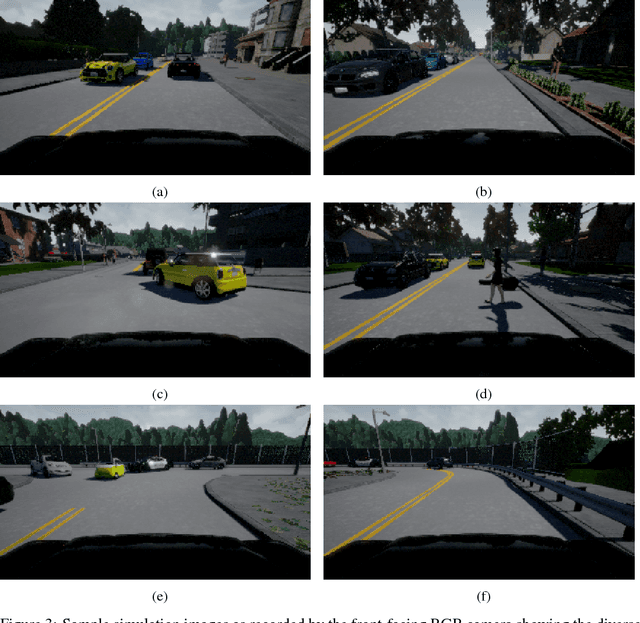

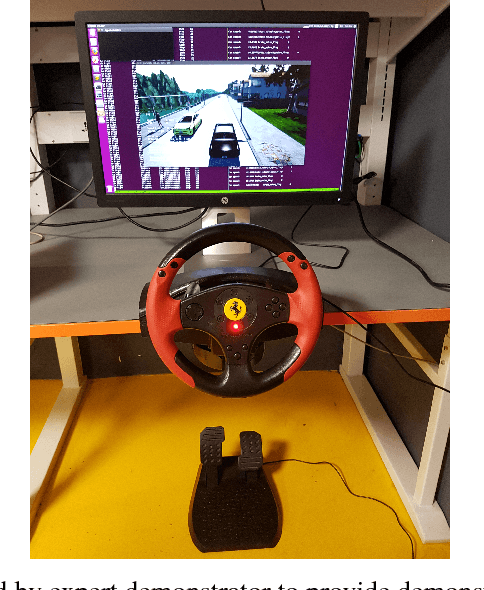

Learning End-to-end Autonomous Driving using Guided Auxiliary Supervision

Aug 30, 2018

Abstract:Learning to drive faithfully in highly stochastic urban settings remains an open problem. To that end, we propose a Multi-task Learning from Demonstration (MT-LfD) framework which uses supervised auxiliary task prediction to guide the main task of predicting the driving commands. Our framework involves an end-to-end trainable network for imitating the expert demonstrator's driving commands. As an intermediate step, the network predicts visual affordances and action primitives under direct supervision, and these predictions provide the auxiliary guidance. We demonstrate that such joint learning and supervised guidance facilitate hierarchical task decomposition, helping the agent learn faster and achieve better driving performance, and increase the transparency of the otherwise black-box end-to-end network. We validate the MT-LfD framework with experiments in CARLA, an open-source urban driving simulator. We introduce multiple non-player agents in CARLA and induce temporal noise in them for realistic stochasticity.
