Arunachalam Iyer

Automatic Facial Paralysis Estimation with Facial Action Units

Mar 30, 2022

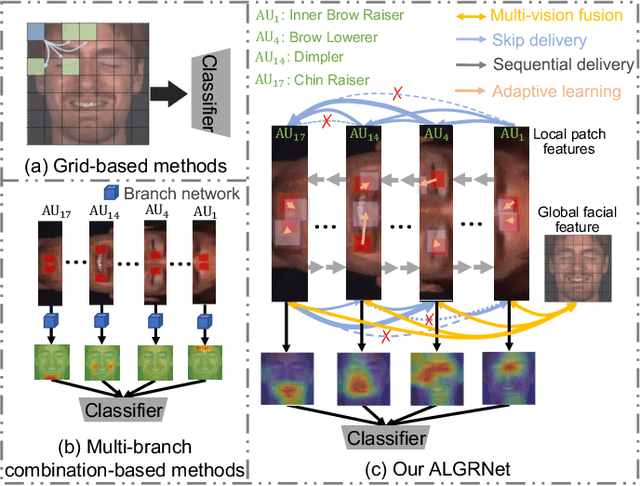

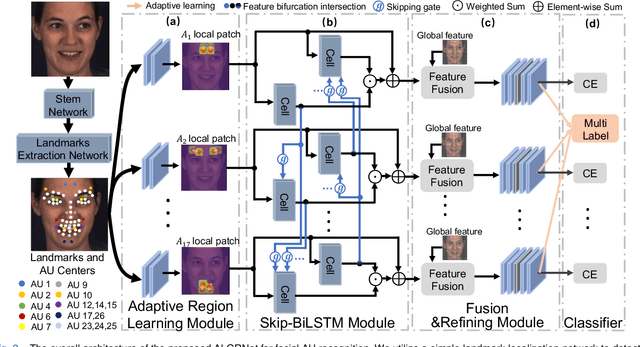

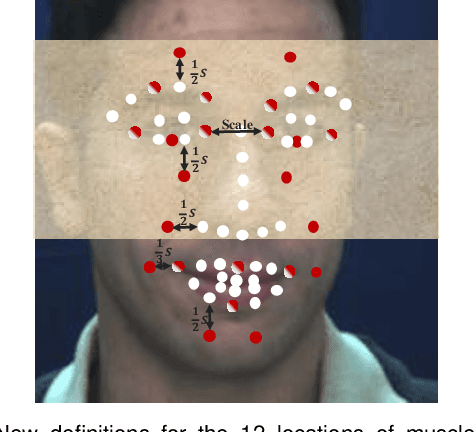

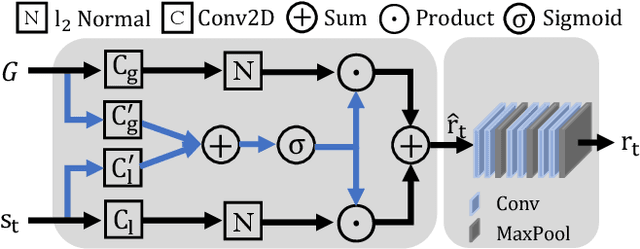

Abstract: Facial palsy is unilateral facial nerve weakness or paralysis of rapid onset with unknown causes. Automatically estimating facial palsy severity can aid the diagnosis and treatment of people suffering from it across the world. In this work, we develop and experiment with a novel model for estimating facial palsy severity. To this end, an effective Facial Action Unit (AU) detection technique is incorporated into our model, where AUs refer to a unique set of facial muscle movements used to describe almost every anatomically possible facial expression. Specifically, we propose a novel Adaptive Local-Global Relational Network (ALGRNet) for facial AU detection and use it to classify facial paralysis severity. ALGRNet consists of three novel structures: (i) an adaptive region learning module that learns adaptive muscle regions based on the detected landmarks; (ii) a skip-BiLSTM that models the latent relationships among local AUs; and (iii) a feature fusion and refining module that investigates the complementarity between the local and global face features. Quantitative results on two AU benchmarks, BP4D and DISFA, demonstrate that ALGRNet achieves promising AU detection accuracy. We further demonstrate its effectiveness for facial paralysis estimation by migrating ALGRNet to a facial paralysis dataset collected and annotated by medical professionals.
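To make the idea of a landmark-based adaptive region more concrete, the following is a minimal Python sketch and not the authors' implementation. It assumes that each AU region is a square centered on a detected landmark, and that a model supplies a per-region offset and scale factor. The function name `adaptive_region`, the `Box` type, and all numeric values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Box:
    left: float
    top: float
    right: float
    bottom: float


def adaptive_region(landmark, offset, scale, base_size, image_size):
    """Return a square region around a landmark, shifted by `offset`,
    resized by `scale`, and clipped to the image bounds.

    Assumption: in a trained network, `offset` and `scale` would be
    predicted per AU; here they are plain inputs.
    """
    x, y = landmark
    dx, dy = offset
    width, height = image_size
    half = 0.5 * base_size * scale  # half the side length of the adapted square
    cx, cy = x + dx, y + dy         # shifted region center
    return Box(
        left=max(0.0, cx - half),
        top=max(0.0, cy - half),
        right=min(float(width), cx + half),
        bottom=min(float(height), cy + half),
    )


# Hypothetical values for one landmark on a 176x176 face crop.
box = adaptive_region(
    landmark=(60.0, 80.0),
    offset=(4.0, -2.0),
    scale=1.5,
    base_size=24.0,
    image_size=(176, 176),
)
print(box)
```

In this sketch, a fixed region would correspond to `offset=(0.0, 0.0)` and `scale=1.0`; letting those two inputs vary per AU is what makes the region adaptive.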
