Arthur Müller

Reinforcement Learning as an Improvement Heuristic for Real-World Production Scheduling

Sep 18, 2024

Abstract:The integration of Reinforcement Learning (RL) with heuristic methods is an emerging trend for solving optimization problems, which leverages RL's ability to learn from the data generated during the search process. One promising approach is to train an RL agent as an improvement heuristic, starting with a suboptimal solution that is iteratively improved by applying small changes. We apply this approach to a real-world multiobjective production scheduling problem. Our approach utilizes a network architecture that includes Transformer encoding to learn the relationships between jobs. Afterwards, a probability matrix is generated from which pairs of jobs are sampled and then swapped to improve the solution. We benchmarked our approach against other heuristics using real data from our industry partner, demonstrating its superior performance.
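
The sample-and-swap step described in this abstract can be illustrated with a minimal sketch. Everything specific here is an assumption for illustration only: the score matrix is a fixed placeholder for whatever the trained network would output, `setup_changes` is a toy cost, and keeping the best sequence seen so far is just one possible bookkeeping rule, not necessarily the one used in the paper.

```python
import math
import random


def pair_probabilities(scores):
    """Softmax over the upper triangle (i < j) of a square score matrix."""
    n = len(scores)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    top = max(scores[i][j] for i, j in pairs)
    weights = [math.exp(scores[i][j] - top) for i, j in pairs]
    total = sum(weights)
    return pairs, [w / total for w in weights]


def setup_changes(sequence):
    """Toy cost (hypothetical): adjacent positions where the job type changes."""
    return sum(1 for a, b in zip(sequence, sequence[1:]) if a != b)


def improve(sequence, scores, steps, rng):
    """Repeatedly sample a job pair, swap it, and remember the best sequence."""
    current = list(sequence)
    best = list(current)
    pairs, probs = pair_probabilities(scores)
    for _ in range(steps):
        i, j = rng.choices(pairs, weights=probs, k=1)[0]
        current[i], current[j] = current[j], current[i]
        if setup_changes(current) < setup_changes(best):
            best = list(current)
    return best


jobs = ["A", "B", "A", "B", "A", "B"]
uniform_scores = [[0.0] * len(jobs) for _ in jobs]  # stand-in for network output
result = improve(jobs, uniform_scores, steps=200, rng=random.Random(0))
print(result, setup_changes(result))
```

With uniform scores this reduces to a random swap search; a learned matrix would concentrate probability on the pairs the agent expects to be worth swapping.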

Demystifying Reinforcement Learning in Production Scheduling via Explainable AI

Aug 19, 2024

Abstract:Deep Reinforcement Learning (DRL) is a frequently employed technique to solve scheduling problems. Although DRL agents excel at delivering viable results in short computing times, their reasoning remains opaque. We conduct a case study where we systematically apply two explainable AI (xAI) frameworks, namely SHAP (DeepSHAP) and Captum (Input x Gradient), to describe the reasoning behind scheduling decisions of a specialized DRL agent in a flow production. We find that methods in the xAI literature lack falsifiability and consistent terminology, do not adequately consider domain knowledge, the target audience or real-world scenarios, and typically provide simple input-output explanations rather than causal interpretations. To resolve this issue, we introduce a hypotheses-based workflow. This approach enables us to inspect whether explanations align with domain knowledge and match the reward hypotheses of the agent. We furthermore tackle the challenge of communicating these insights to third parties by tailoring hypotheses to the target audience, which can serve as interpretations of the agent's behavior after verification. Our proposed workflow emphasizes the repeated verification of explanations and may be applicable to various DRL-based scheduling use cases.
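
As a rough illustration of the Input x Gradient idea named in this abstract, the sketch below multiplies each input feature by the gradient of a scalar output with respect to that feature. It does not use Captum or a DRL agent: the scoring function `toy_score` and its weights are hypothetical, and central finite differences stand in for automatic differentiation.

```python
def numerical_gradient(f, x, eps=1e-6):
    """Central finite-difference gradient of a scalar function f at point x."""
    grad = []
    for k in range(len(x)):
        up = list(x)
        down = list(x)
        up[k] += eps
        down[k] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def input_x_gradient(f, x):
    """Attribution per feature: input value times the gradient at that input."""
    return [xi * gi for xi, gi in zip(x, numerical_gradient(f, x))]


WEIGHTS = [2.0, -1.0, 0.5]  # hypothetical weights of a toy linear scorer


def toy_score(x):
    """Stand-in for the scalar output of a policy or value network."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x))


attributions = input_x_gradient(toy_score, [1.0, 3.0, 0.0])
print(attributions)  # approximately [2.0, -3.0, 0.0]
```

Note that the third feature receives zero attribution because its input value is zero, even though its weight is not. This is the kind of input-output artifact that makes checking explanations against domain hypotheses worthwhile.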

Smaller Batches, Bigger Gains? Investigating the Impact of Batch Sizes on Reinforcement Learning Based Real-World Production Scheduling

Jun 04, 2024

Abstract:Production scheduling is an essential task in manufacturing, with Reinforcement Learning (RL) emerging as a key solution. In previous work, RL was utilized to solve an extended permutation flow shop scheduling problem (PFSSP) for a real-world production line with two stages, linked by a central buffer. The RL agent was trained to sequence equally sized product batches to minimize setup efforts and idle times. However, the substantial impact caused by varying the size of these product batches has not yet been explored. In this follow-up study, we investigate the effects of varying batch sizes, exploring both the quality of solutions and the training dynamics of the RL agent. The results demonstrate that it is possible to methodically identify reasonable boundaries for the batch size. These boundaries are determined on one side by the increasing sample complexity associated with smaller batch sizes, and on the other side by the decreasing flexibility of the agent when dealing with larger batch sizes. This provides the practitioner the ability to make an informed decision regarding the selection of an appropriate batch size. Moreover, we introduce and investigate two new curriculum learning strategies to enable the training with small batch sizes. The findings of this work offer the potential for application in several industrial use cases with comparable scheduling problems.

Bridging the Reality Gap of Reinforcement Learning based Traffic Signal Control using Domain Randomization and Meta Learning

Jul 21, 2023

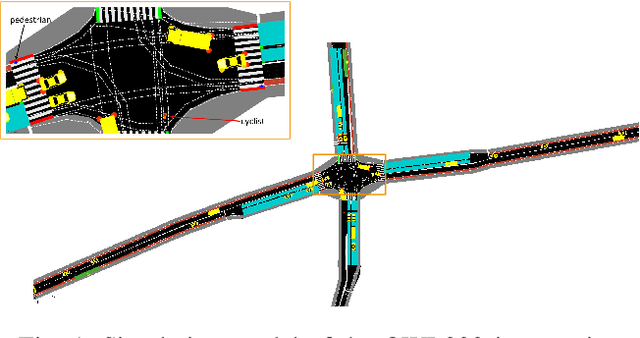

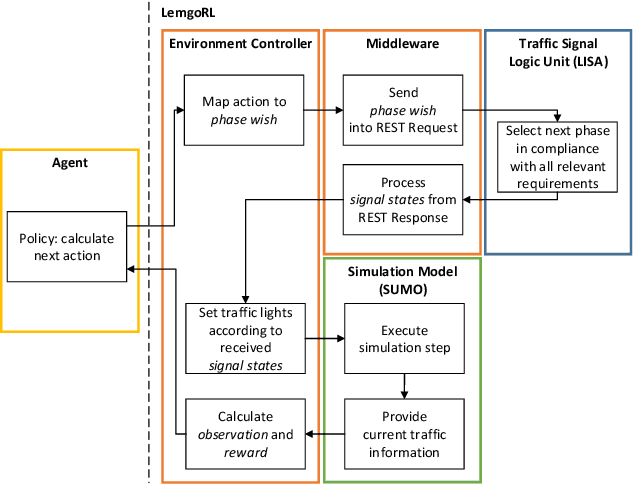

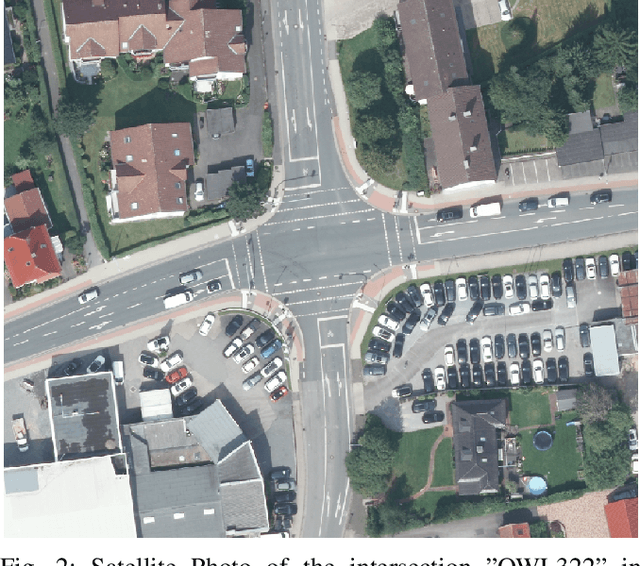

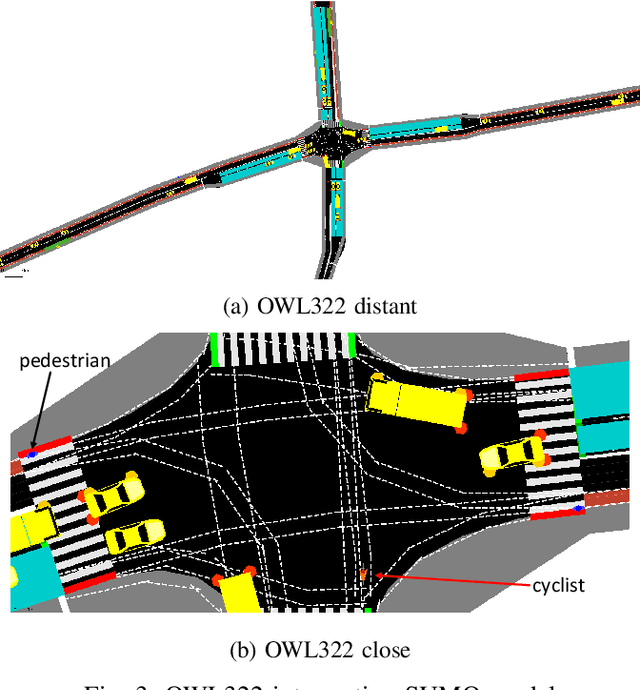

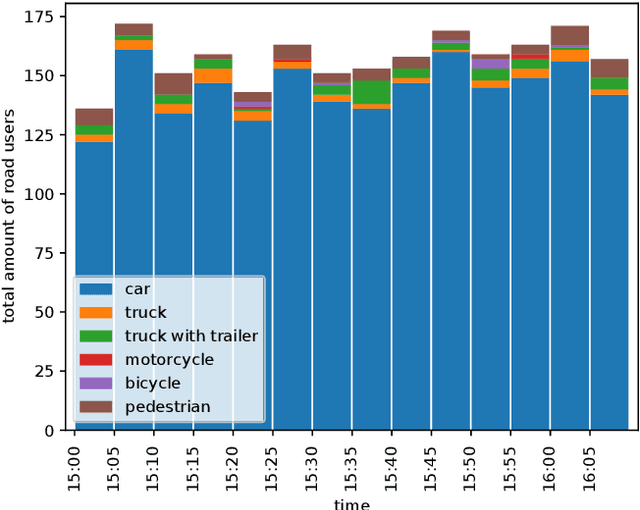

Abstract:Reinforcement Learning (RL) has been widely explored in Traffic Signal Control (TSC) applications; however, no such system has yet been deployed in practice. A key barrier to progress in this area is the reality gap, the discrepancy that results from differences between simulation models and their real-world equivalents. In this paper, we address this challenge by first presenting a comprehensive analysis of potential simulation parameters that contribute to this reality gap. We then examine two promising strategies that can bridge this gap: Domain Randomization (DR) and Model-Agnostic Meta-Learning (MAML). Both strategies were trained with a traffic simulation model of an intersection. In addition, the model was embedded in LemgoRL, a framework that integrates realistic, safety-critical requirements into the control system. Subsequently, we evaluated the performance of the two methods on a separate model of the same intersection that was developed with a different traffic simulator. In this way, we mimic the reality gap. Our experimental results show that both DR and MAML outperform a state-of-the-art RL algorithm, thereby highlighting their potential to mitigate the reality gap in RL-based TSC systems.
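
Domain Randomization, as mentioned in this abstract, can be sketched as drawing fresh simulation parameters for every training episode so the agent does not overfit to one simulator configuration. The parameter names and ranges below are hypothetical and are not taken from the paper or from LemgoRL; the training call itself is left out.

```python
import random

# Hypothetical simulation parameters and ranges, for illustration only.
PARAMETER_RANGES = {
    "max_speed_mps": (11.0, 16.0),
    "driver_reaction_s": (0.5, 1.5),
    "demand_scale": (0.7, 1.3),
}


def sample_sim_parameters(rng, ranges=PARAMETER_RANGES):
    """Draw one value per parameter, uniformly within its range."""
    return {name: rng.uniform(low, high) for name, (low, high) in ranges.items()}


def training_episodes(num_episodes, seed=0):
    """Yield (episode index, randomized parameters) for each training episode."""
    rng = random.Random(seed)
    for episode in range(num_episodes):
        yield episode, sample_sim_parameters(rng)


episodes = list(training_episodes(3))
for episode, params in episodes:
    # A real setup would reconfigure the simulator with `params` here
    # before collecting a rollout with the current policy.
    print(episode, params)
```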

Safe and Psychologically Pleasant Traffic Signal Control with Reinforcement Learning using Action Masking

Jun 21, 2022

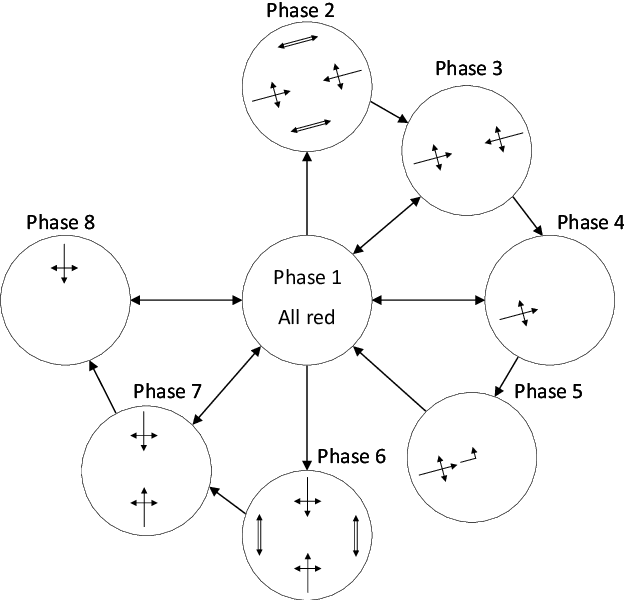

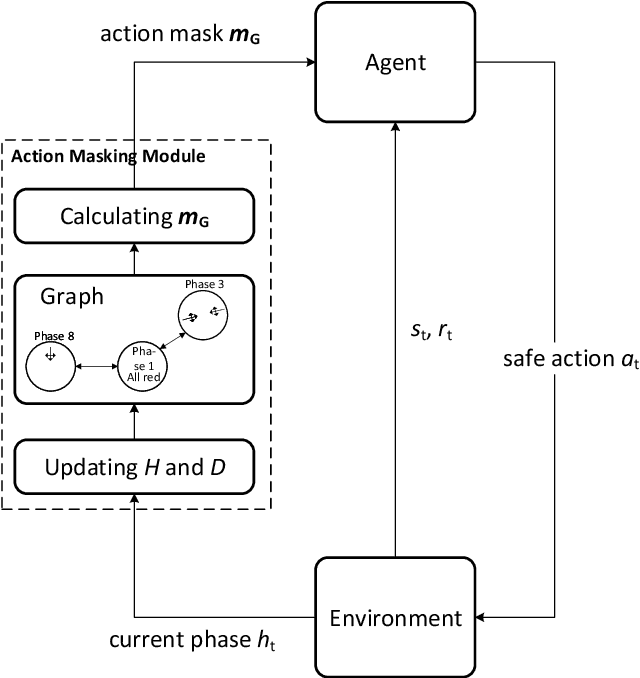

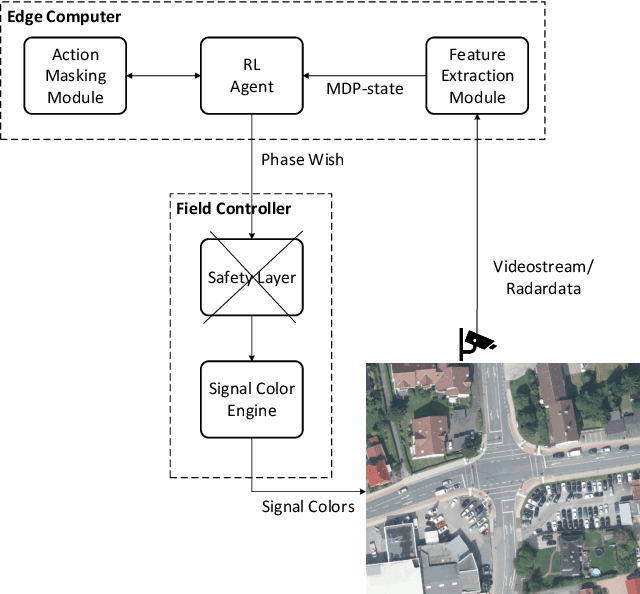

Abstract:Reinforcement learning (RL) for traffic signal control (TSC) has shown better performance in simulation for controlling the traffic flow of intersections than conventional approaches. However, due to several challenges, no RL-based TSC has been deployed in the field yet. One major challenge for real-world deployment is to ensure that all safety requirements are met at all times during operation. We present an approach to ensure safety in a real-world intersection by using an action space that is safe by design. The action space encompasses traffic phases, which represent the combination of non-conflicting signal colors of the intersection. Additionally, an action masking mechanism makes sure that only appropriate phase transitions are carried out. Another challenge for real-world deployment is to ensure a control behavior that avoids stress for road users. We demonstrate how to achieve this by incorporating domain knowledge through extending the action masking mechanism. We test and verify our approach in a realistic simulation scenario. By ensuring safety and psychologically pleasant control behavior, our approach drives development towards real-world deployment of RL for TSC.
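
The action masking mechanism described in this abstract can be sketched as follows: before sampling, the logits of phases that are not valid successors of the current phase are set to negative infinity, so they receive exactly zero probability. The three-phase transition table is a hypothetical example, not the intersection from the paper.

```python
import math

# Hypothetical transition table: current phase -> phases allowed next.
ALLOWED_NEXT = {0: {0, 1}, 1: {1, 2}, 2: {2, 0}}


def transition_mask(current_phase, num_phases):
    """Boolean mask over phases: True where the transition is allowed."""
    allowed = ALLOWED_NEXT[current_phase]
    return [phase in allowed for phase in range(num_phases)]


def masked_softmax(logits, mask):
    """Softmax that assigns zero probability to masked-out actions."""
    if not any(mask):
        raise ValueError("at least one action must be allowed")
    masked = [l if ok else -math.inf for l, ok in zip(logits, mask)]
    top = max(masked)
    exps = [math.exp(m - top) for m in masked]
    total = sum(exps)
    return [e / total for e in exps]


logits = [0.1, 2.0, 5.0]  # stand-in for the policy network output
probs = masked_softmax(logits, transition_mask(current_phase=0, num_phases=3))
print(probs)  # the third phase has probability 0.0 despite the largest logit
```

Extending the mask with further domain rules, for instance to avoid control behavior that stresses road users, only requires changing how the boolean mask is built; the sampling step stays the same.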

LemgoRL: An open-source Benchmark Tool to Train Reinforcement Learning Agents for Traffic Signal Control in a real-world simulation scenario

Mar 30, 2021

Abstract:Sub-optimal control policies in intersection traffic signal controllers (TSC) contribute to congestion and lead to negative effects on human health and the environment. Reinforcement learning (RL) for traffic signal control is a promising approach to design better control policies and has attracted considerable research interest in recent years. However, most work done in this area used simplified simulation environments of traffic scenarios to train RL-based TSC. To deploy RL in real-world traffic systems, the gap between simplified simulation environments and real-world applications has to be closed. Therefore, we propose LemgoRL, a benchmark tool to train RL agents as TSC in a realistic simulation environment of Lemgo, a medium-sized town in Germany. In addition to the realistic simulation model, LemgoRL encompasses a traffic signal logic unit that ensures compliance with all regulatory and safety requirements. LemgoRL offers the same interface as the well-known OpenAI gym toolkit to enable easy deployment in existing research work. Our benchmark tool drives the development of RL algorithms towards real-world applications. We provide LemgoRL as an open-source tool at https://github.com/rl-ina/lemgorl.
