Artem Yaroshchuk

Environment Classification via Blind Roomprints Estimation

Sep 15, 2022

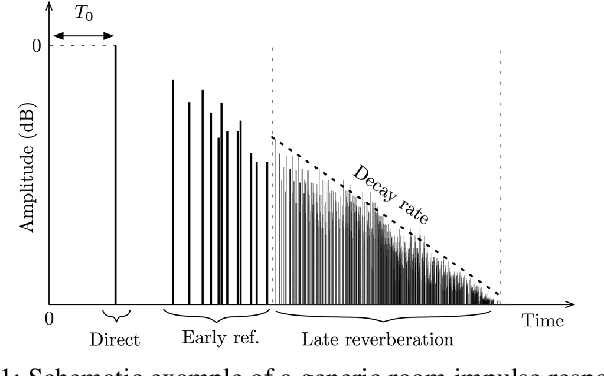

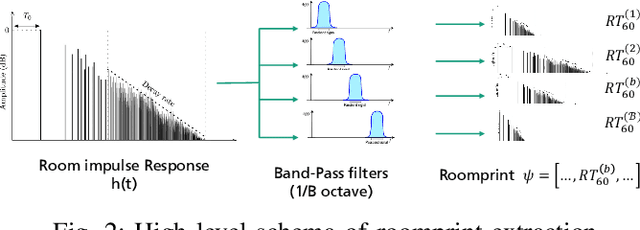

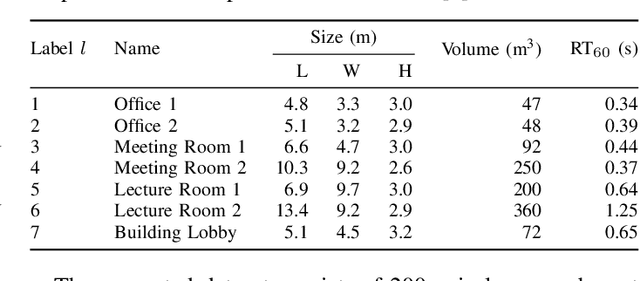

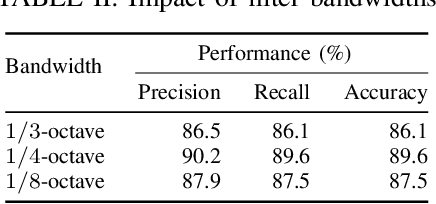

Abstract: In this paper, we present a novel approach to environment classification for speech recordings that does not require selecting decaying reverberation tails. It is based on a multi-band RT60 analysis of blind channel estimates and achieves an accuracy of up to 93.6% on test recordings derived from the ACE corpus.

Open Challenges in Synthetic Speech Detection

Sep 15, 2022

Abstract: This paper addresses the current status and open challenges of synthetic speech detection. The work comprises an initial analysis of available open datasets and existing detection methods, a description of the requirements for new research datasets that comply with regulations and better represent real-case scenarios, and a discussion of the desired characteristics of future trustworthy detection methods in terms of both functional and non-functional requirements. Unlike other works, which are based on specific detection solutions or present a single dataset of synthetic speech, our paper is meant to orient future state-of-the-art research in the domain and to quickly narrow the current gap between synthesis and detection approaches.
