Arpan Sainju

Graph Attention Network-Based Detection of Autism Spectrum Disorder

Mar 27, 2026

Abstract: Autism Spectrum Disorder (ASD) is a neurodevelopmental condition characterized by atypical brain connectivity, and early detection is one of the crucial steps in addressing it. This study introduces a novel computational framework for detecting ASD that employs an Attention-Based Graph Convolutional Network, referred to as the GATGraphClassifier. We use Functional Magnetic Resonance Imaging (fMRI) data from the Autism Brain Imaging Data Exchange (ABIDE) repository to construct functional connectivity matrices using Pearson correlation, which capture interactions between brain regions. These matrices are then transformed into graph representations in which nodes represent brain regions and edges represent functional connections. The GATGraphClassifier uses attention mechanisms to identify critical connectivity patterns, enhancing both the model's interpretability and its diagnostic accuracy. Our proposed framework outperforms existing state-of-the-art methods on all standard classification metrics. Notably, it achieved an average test accuracy of 88.79% over 30 independent runs, surpassing the benchmark model's performance by 12.27%. In addition, we identified brain regions associated with ASD that are consistent with previous studies, as well as a few novel regions. This study not only advances ASD detection but also suggests that the GATGraphClassifier could be adapted to analyze complex relational data in other fields where understanding intricate connectivity and interaction patterns is essential.
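
The graph-construction step in this abstract (Pearson correlation between regional fMRI time series, then a graph whose nodes are regions and whose edges are functional connections) can be illustrated with a small sketch. It assumes the per-region time series have already been extracted (the atlas and preprocessing are not shown), and it assumes edges are kept by thresholding the absolute correlation; the abstract does not say how edges are selected, so `to_edge_list` and its `threshold` are hypothetical choices, and the attention-based classifier itself is not sketched.

```python
import math
from typing import List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient between two equal-length time series."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    ss_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if ss_x == 0.0 or ss_y == 0.0:
        return 0.0  # constant signal: correlation undefined, treated here as "no connection"
    return cov / (ss_x * ss_y)


def connectivity_matrix(signals: List[List[float]]) -> List[List[float]]:
    """signals[i] is the time series of region i; returns the symmetric R x R correlation matrix."""
    r = len(signals)
    return [[pearson(signals[i], signals[j]) for j in range(r)] for i in range(r)]


def to_edge_list(fc: List[List[float]], threshold: float) -> List[Tuple[int, int, float]]:
    """Hypothetical edge rule: undirected edges (i, j, r_ij) with |r_ij| >= threshold, no self-loops."""
    edges = []
    for i in range(len(fc)):
        for j in range(i + 1, len(fc)):
            if abs(fc[i][j]) >= threshold:
                edges.append((i, j, fc[i][j]))
    return edges


# Toy example: 3 made-up regions with 5 time points each.
signals = [
    [1.0, 2.0, 3.0, 4.0, 5.0],
    [2.0, 4.0, 6.0, 8.0, 10.0],
    [5.0, 3.0, 4.0, 1.0, 2.0],
]
fc = connectivity_matrix(signals)
edges = to_edge_list(fc, threshold=0.9)
```

In the toy data, regions 0 and 1 are perfectly correlated and region 2 is anti-correlated with both (r = -0.8), so only the (0, 1) edge survives a 0.9 threshold. Keeping signed weights lets a downstream graph model distinguish positive from negative coupling.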

PromptIQ: Who Cares About Prompts? Let System Handle It -- A Component-Aware Framework for T2I Generation

May 09, 2025

Abstract: Generating high-quality images without prompt engineering expertise remains a challenge for text-to-image (T2I) models, which often misinterpret poorly structured prompts and produce distortions and misalignments. While humans easily recognize these flaws, metrics such as CLIP fail to capture structural inconsistencies, exposing a key limitation of current evaluation methods. To address this, we introduce PromptIQ, an automated framework that refines prompts and assesses image quality using our novel Component-Aware Similarity (CAS) metric, which detects and penalizes structural errors. Unlike conventional methods, PromptIQ iteratively generates and evaluates images until the user is satisfied, eliminating trial-and-error prompt tuning. Our results show that PromptIQ significantly improves generation quality and evaluation accuracy, making T2I models more accessible to users with little or no prompt engineering expertise.
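
The iterative refine, generate, and evaluate cycle described above can be sketched as a plain control loop. This is only an outline under stated assumptions: the abstract does not describe how CAS is computed or how prompts are rewritten, so `refine`, `generate`, `score`, and `is_satisfied` are hypothetical callables supplied by the caller, and the "keep the best-scoring round" policy and `max_rounds` cap are our own choices.

```python
from typing import Any, Callable, List, Optional, Tuple


def refine_until_satisfied(
    prompt: str,
    refine: Callable[[str, Optional[float]], str],  # hypothetical prompt rewriter
    generate: Callable[[str], Any],                 # hypothetical T2I model call
    score: Callable[[str, Any], float],             # stand-in for a CAS-style quality score
    is_satisfied: Callable[[Any, float], bool],     # stand-in for the user's accept/reject decision
    max_rounds: int = 5,
) -> Tuple[str, Any, float, List[float]]:
    """Refine -> generate -> score loop; returns the best round plus the score history."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    history: List[float] = []
    current = refine(prompt, None)  # first rewrite has no previous score to react to
    best: Optional[Tuple[str, Any, float]] = None
    for _ in range(max_rounds):
        image = generate(current)
        s = score(current, image)
        history.append(s)
        if best is None or s > best[2]:
            best = (current, image, s)
        if is_satisfied(image, s):
            break
        current = refine(current, s)  # feed the score back into the next rewrite
    assert best is not None  # guaranteed because max_rounds >= 1
    return best[0], best[1], best[2], history


# Usage with toy stubs: each "refinement" appends a character, the "image" is the
# prompt itself, and the "score" is its length; the user accepts at score >= 6.
result = refine_until_satisfied(
    "cat",
    refine=lambda p, s: p + "+",
    generate=lambda p: p,
    score=lambda p, img: float(len(img)),
    is_satisfied=lambda img, s: s >= 6.0,
)
```

Passing the components in as callables keeps the loop independent of any particular T2I model or scoring metric, so a real evaluator could be dropped in without changing the control flow.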

Mapping road safety features from streetview imagery: A deep learning approach

Jul 15, 2019

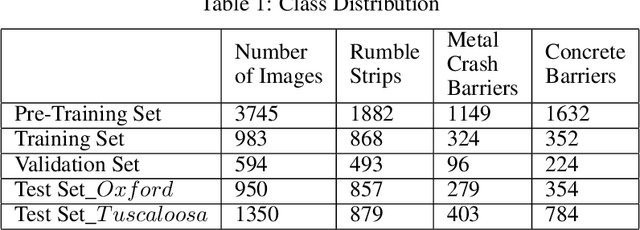

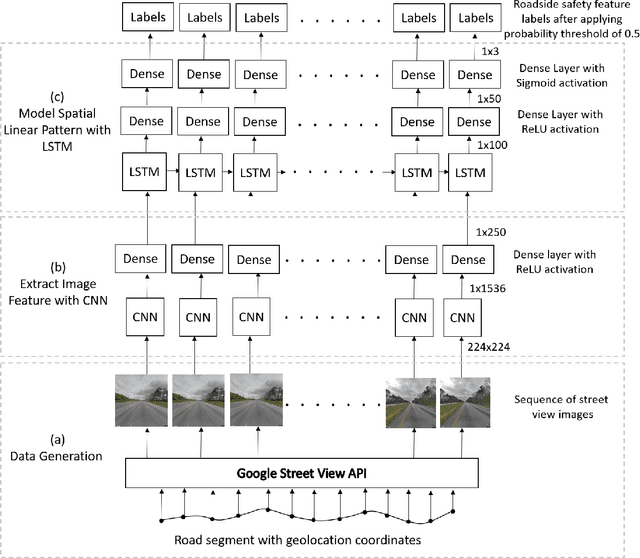

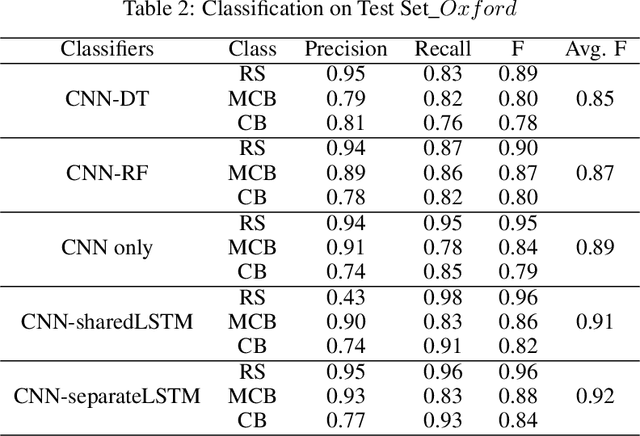

Abstract: On average, around 6 million car accidents occur in the U.S. each year. Road safety features (e.g., concrete barriers, metal crash barriers, rumble strips) play an important role in preventing or mitigating vehicle crashes. Accurate maps of road safety features are an important component of safety management systems for federal and state transportation agencies, helping traffic engineers identify where to invest in safety infrastructure. In current practice, mapping road safety features is largely done manually (e.g., on-road observation or visual interpretation of streetview imagery), which is both expensive and time-consuming. In this paper, we propose a deep learning approach to automatically map road safety features from streetview imagery. Unlike existing Convolutional Neural Network (CNN) approaches that classify each image individually, we further add a Recurrent Neural Network (Long Short-Term Memory) to capture the geographic context of images (the spatial autocorrelation effect along linear road network paths). Evaluations on real-world streetview imagery show that our proposed model outperforms several baseline methods.
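
Capturing spatial autocorrelation with an LSTM requires presenting images in the order they occur along a road, so that each prediction can draw on neighbouring images. A minimal sketch of that ordering step follows, assuming each image carries a road identifier and a distance along the road (`road_id` and `chainage_m` are hypothetical fields). The fixed-length sliding window is our assumption rather than the paper's stated method, and the CNN feature extractor and LSTM are not shown.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class StreetImage:
    image_id: str
    road_id: str       # hypothetical: road path on which the image was captured
    chainage_m: float  # hypothetical: distance along that road from its start, in metres


def road_sequences(images: List[StreetImage], window: int) -> List[List[str]]:
    """Group images by road, order them along the road, and cut sliding windows."""
    if window < 1:
        raise ValueError("window must be at least 1")
    by_road: Dict[str, List[StreetImage]] = {}
    for img in images:
        by_road.setdefault(img.road_id, []).append(img)
    sequences: List[List[str]] = []
    for road_id in sorted(by_road):
        ordered = sorted(by_road[road_id], key=lambda im: im.chainage_m)
        ids = [im.image_id for im in ordered]
        if len(ids) <= window:
            sequences.append(ids)  # short road: keep it as a single sequence
        else:
            # stride-1 windows so every image is seen together with its neighbours
            for start in range(len(ids) - window + 1):
                sequences.append(ids[start:start + window])
    return sequences


# Usage: three images on road "A" (captured out of order) and one on road "B".
imgs = [
    StreetImage("a3", "A", 300.0),
    StreetImage("a1", "A", 100.0),
    StreetImage("a2", "A", 200.0),
    StreetImage("b1", "B", 50.0),
]
seqs = road_sequences(imgs, window=2)
```

Each resulting sequence would then be mapped to per-image CNN features and fed to the recurrent layer in road order, which is what lets the model exploit the fact that adjacent images along a road tend to share the same safety features.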