Animesh Jha

MAEB: Massive Audio Embedding Benchmark

Feb 17, 2026

Abstract: We introduce the Massive Audio Embedding Benchmark (MAEB), a large-scale benchmark covering 30 tasks across speech, music, environmental sounds, and cross-modal audio-text reasoning in 100+ languages. We evaluate 50+ models and find that no single model dominates across all tasks: contrastive audio-text models excel at environmental sound classification (e.g., ESC50) but score near random on multilingual speech tasks (e.g., SIB-FLEURS), while speech-pretrained models show the opposite pattern. Clustering remains challenging for all models, with even the best-performing model achieving only modest results. We observe that models excelling on acoustic understanding often perform poorly on linguistic tasks, and vice versa. We also show that the performance of audio encoders on MAEB correlates highly with their performance when used in audio large language models. MAEB is derived from MAEB+, a collection of 98 tasks. MAEB is designed to maintain task diversity while reducing evaluation cost, and it integrates into the MTEB ecosystem for unified evaluation across text, image, and audio modalities. We release MAEB and all 98 tasks along with code and a leaderboard at https://github.com/embeddings-benchmark/mteb.

Certified Unlearning for Neural Networks

Jun 08, 2025

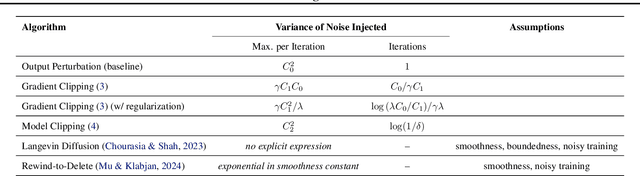

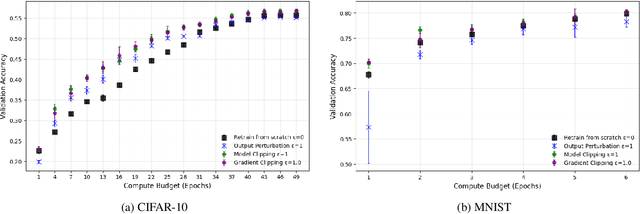

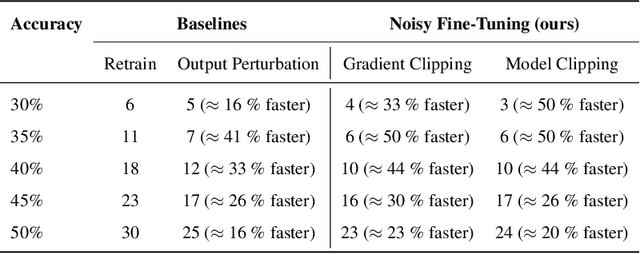

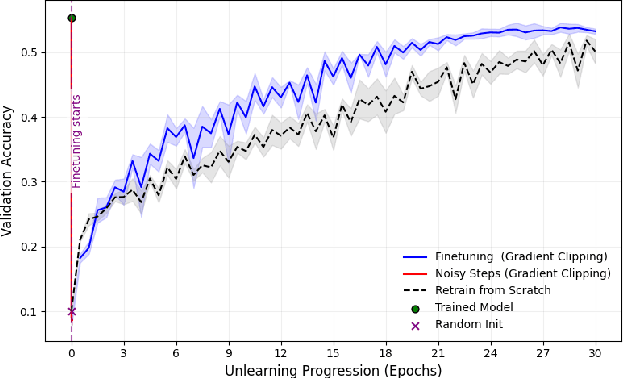

Abstract: We address the problem of machine unlearning, where the goal is to remove the influence of specific training data from a model upon request, motivated by privacy concerns and regulatory requirements such as the "right to be forgotten." Unfortunately, existing methods rely on restrictive assumptions or lack formal guarantees. To this end, we propose a novel method for certified machine unlearning, leveraging the connection between unlearning and privacy amplification by stochastic post-processing. Our method uses noisy fine-tuning on the retain data, i.e., data that does not need to be removed, to ensure provable unlearning guarantees. This approach requires no assumptions about the underlying loss function, making it broadly applicable across diverse settings. We analyze the theoretical trade-offs in efficiency and accuracy and demonstrate empirically that our method not only achieves formal unlearning guarantees but also performs effectively in practice, outperforming existing baselines. Our code is available at https://github.com/stair-lab/certified-unlearningneural-networks-icml-2025
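The idea of noisy fine-tuning on the retain data can be illustrated with a minimal sketch. This is not the authors' implementation: the least-squares loss, the gradient clipping threshold `clip`, the noise scale `sigma`, and the function name `noisy_fine_tune` are all assumptions chosen for illustration, and the code does not compute or certify any unlearning guarantee.

```python
import numpy as np


def noisy_fine_tune(w, X_retain, y_retain, steps=100, lr=0.1,
                    clip=1.0, sigma=0.01, seed=0):
    """Fine-tune weights on retain data with clipped, noise-perturbed steps.

    Hypothetical sketch: uses a least-squares loss and Gaussian noise;
    the hyperparameters are illustrative, not taken from the paper.
    """
    rng = np.random.default_rng(seed)
    w = np.array(w, dtype=float)  # copy so the caller's weights are untouched
    n = len(y_retain)
    for _ in range(steps):
        # Gradient of the mean squared error 0.5 * mean((Xw - y)^2).
        grad = X_retain.T @ (X_retain @ w - y_retain) / n
        # Clip the gradient norm so each update has bounded size.
        norm = np.linalg.norm(grad)
        if norm > clip:
            grad = grad * (clip / norm)
        # Gradient step followed by Gaussian noise (the stochastic part).
        w = w - lr * grad + sigma * rng.standard_normal(w.shape)
    return w


def mse(w, X, y):
    """Mean squared error of the linear model on (X, y)."""
    return float(np.mean((X @ w - y) ** 2))


if __name__ == "__main__":
    data_rng = np.random.default_rng(42)
    X = data_rng.standard_normal((50, 3))
    y = X @ np.array([1.0, -2.0, 0.5])
    w0 = np.zeros(3)  # stands in for the weights of a previously trained model
    w1 = noisy_fine_tune(w0, X, y)
    print("loss before:", mse(w0, X, y), "loss after:", mse(w1, X, y))
```

In this sketch only the retain data is ever touched during fine-tuning; how much noise and how many steps are needed for a formal guarantee is exactly what the paper analyzes and is not reproduced here.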

[Re] Differentiable Spatial Planning using Transformers

Aug 19, 2022

![Figure 1 for [Re] Differentiable Spatial Planning using Transformers](/_next/image?url=https%3A%2F%2Fai2-s2-public.s3.amazonaws.com%2Ffigures%2F2017-08-08%2Ff52887523e4052a448b8dced56e196661b8109ea%2F4-Figure1-1.png&w=640&q=75)

![Figure 2 for [Re] Differentiable Spatial Planning using Transformers](/_next/image?url=https%3A%2F%2Fai2-s2-public.s3.amazonaws.com%2Ffigures%2F2017-08-08%2Ff52887523e4052a448b8dced56e196661b8109ea%2F5-Table1-1.png&w=640&q=75)

![Figure 3 for [Re] Differentiable Spatial Planning using Transformers](/_next/image?url=https%3A%2F%2Fai2-s2-public.s3.amazonaws.com%2Ffigures%2F2017-08-08%2Ff52887523e4052a448b8dced56e196661b8109ea%2F5-Figure2-1.png&w=640&q=75)

![Figure 4 for [Re] Differentiable Spatial Planning using Transformers](/_next/image?url=https%3A%2F%2Fai2-s2-public.s3.amazonaws.com%2Ffigures%2F2017-08-08%2Ff52887523e4052a448b8dced56e196661b8109ea%2F6-Table2-1.png&w=640&q=75)

Abstract: This report covers our reproduction effort of the paper 'Differentiable Spatial Planning using Transformers' by Chaplot et al. The paper considers the problem of spatial path planning in a differentiable way. The authors show that their proposed method, Spatial Planning Transformers, outperforms prior data-driven models and leverages differentiable structures to simultaneously learn mapping without a ground truth map. We verify these claims by reproducing their experiments and testing their method on new data. We also investigate the stability of planning accuracy on maps with increased obstacle complexity. Efforts to investigate and verify the learnings of the Mapper module failed because of limited computational resources and because the authors could not be reached.

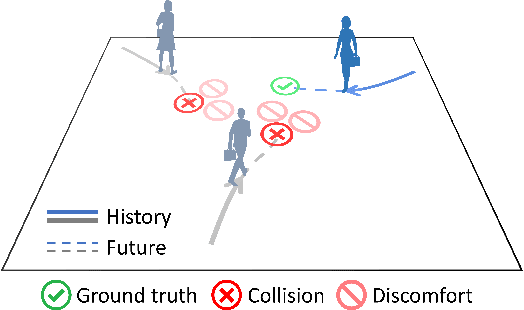

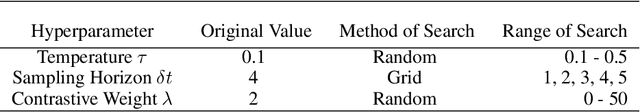

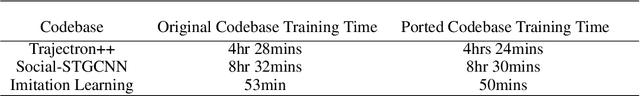

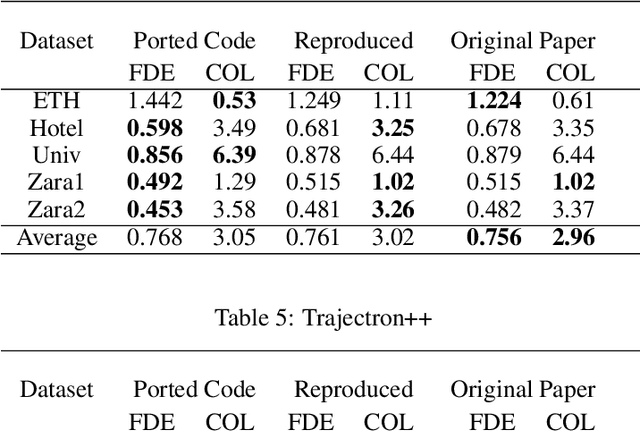

Reproducibility Report: Contrastive Learning of Socially-aware Motion Representations

Aug 18, 2022

Abstract: This paper is a reproducibility report for "Social NCE: Contrastive Learning of Socially-aware Motion Representations" (published in ICCV 2021), written as part of the ML Reproducibility Challenge 2021. The original code was made available by the author at https://github.com/vita-epfl/social-nce. We attempted to verify the results claimed by the authors and reimplemented their code in PyTorch Lightning.

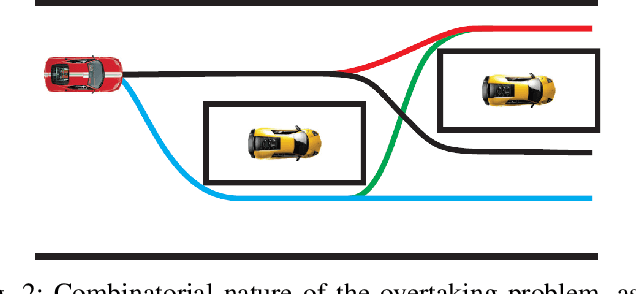

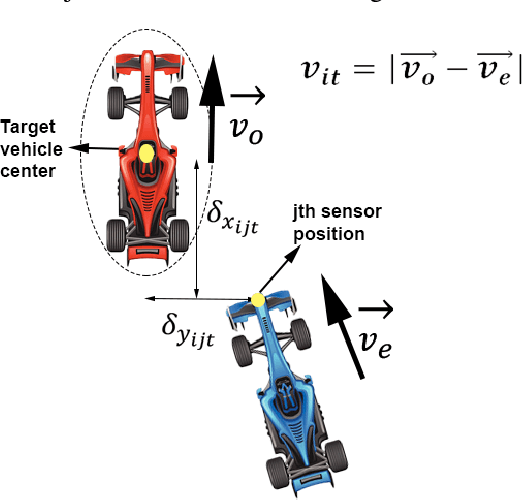

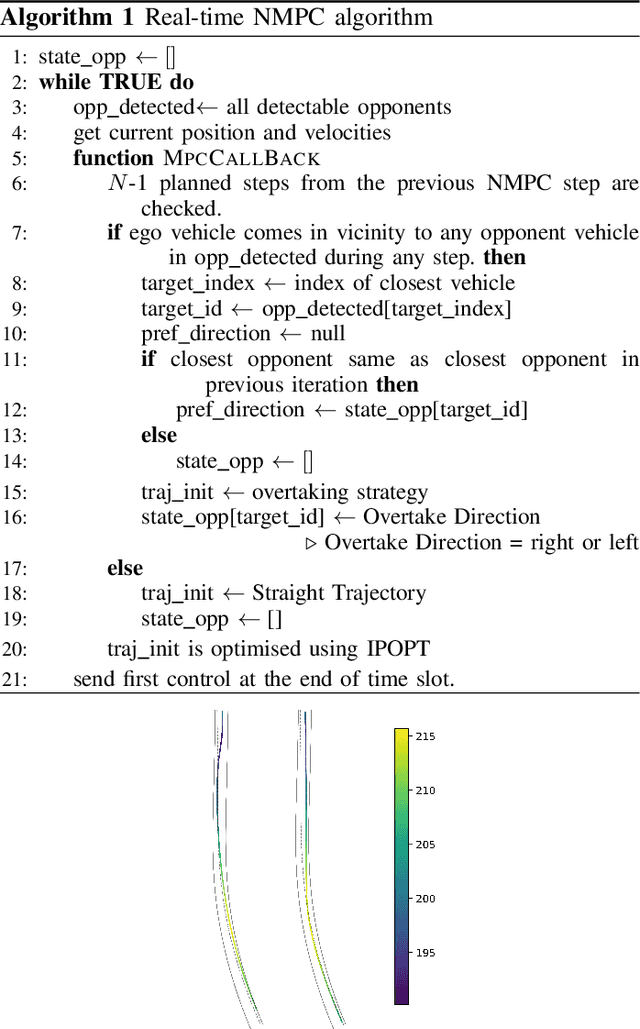

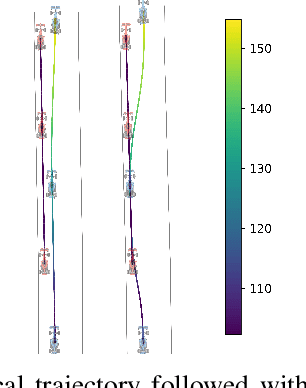

Local NMPC on Global Optimised Path for Autonomous Racing

Sep 15, 2021

Abstract: The paper presents a strategy for the control of an autonomous racing car on a pre-mapped track. Using a dynamic model of the vehicle, the optimal racing line is computed, taking track boundaries into account. With the optimal racing line as a reference, a local nonlinear model predictive controller (NMPC) is proposed, which takes into account multiple local objectives such as making more progress along the racing line, avoiding collisions with opponent vehicles, and using drafting to gain further progress.
