Andrew W. Singletary

Safety Guardrails in the Sky: Realizing Control Barrier Functions on the VISTA F-16 Jet

Mar 29, 2026

Abstract: The advancement of autonomous systems -- from legged robots to self-driving vehicles and aircraft -- necessitates executing increasingly high-performance and dynamic motions without ever putting the system or its environment in harm's way. In this paper, we introduce Guardrails -- a novel runtime assurance mechanism that guarantees dynamic safety for autonomous systems, allowing them to safely evolve on the edge of their operational domains. Rooted in the theory of control barrier functions, Guardrails offers a control strategy that carefully blends commands from a human or AI operator with safe control actions to guarantee safe behavior. To demonstrate its capabilities, we implemented Guardrails on an F-16 fighter jet and conducted flight tests where Guardrails supervised a human pilot to enforce g-limits, altitude bounds, geofence constraints, and combinations thereof. Throughout extensive flight testing, Guardrails successfully ensured safety, keeping the pilot in control when safe to do so and minimally modifying unsafe pilot inputs otherwise.

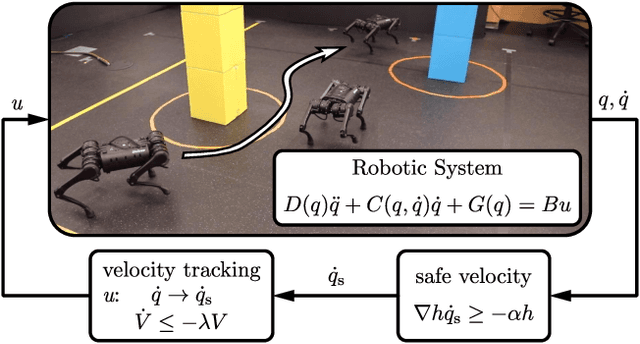

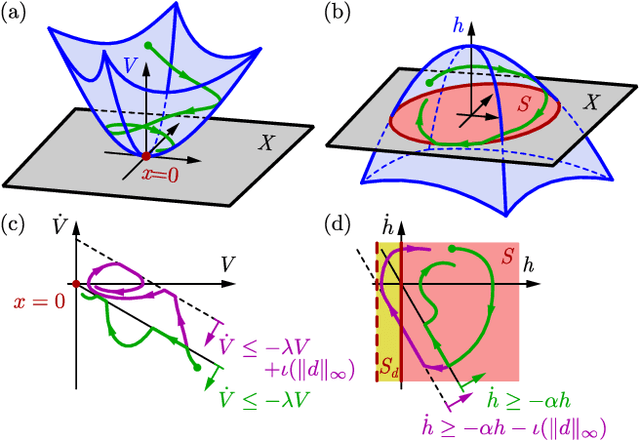

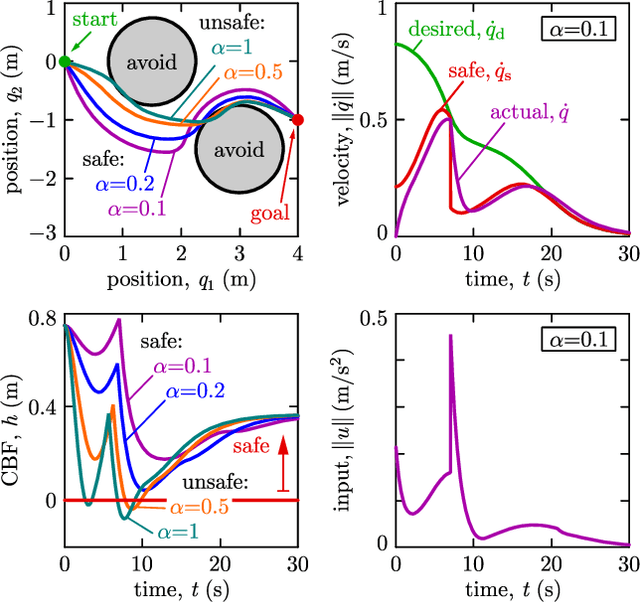

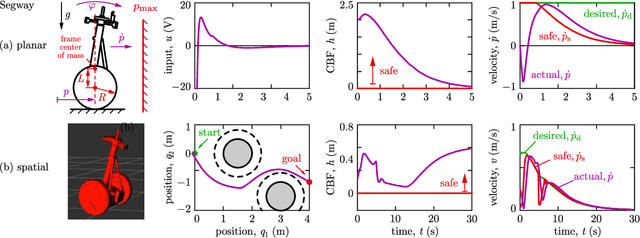

Model-Free Safety-Critical Control for Robotic Systems

Sep 19, 2021

Abstract: This paper presents a framework for the safety-critical control of robotic systems, when safety is defined on safe regions in the configuration space. To maintain safety, we synthesize a safe velocity based on control barrier function theory without relying on a -- potentially complicated -- high-fidelity dynamical model of the robot. Then, we track the safe velocity with a tracking controller. This culminates in model-free safety-critical control. We prove theoretical safety guarantees for the proposed method. Finally, we demonstrate that this approach is application-agnostic. We execute an obstacle avoidance task with a Segway in high-fidelity simulation, as well as with a drone and a quadruped in hardware experiments.
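The "safe velocity" step can be illustrated with a minimal sketch. It is not the paper's implementation: the circular obstacle, the barrier h(x) = ||x - center|| - radius, the gain `alpha`, and the function name `safe_velocity` are all assumptions chosen for illustration. For a purely kinematic model (position rate equals commanded velocity), the control barrier function condition is grad h(x) · v >= -alpha * h(x), and the smallest change to a desired velocity that satisfies it has a closed form.

```python
import math


def safe_velocity(x, v_des, center, radius, alpha=1.0):
    """Return the velocity closest to v_des that satisfies the CBF condition.

    Hypothetical setup: a single circular (or spherical) obstacle, with
    barrier h(x) = ||x - center|| - radius, so h >= 0 means "outside".
    Assumes x != center so the gradient is well defined.
    """
    diff = [xi - ci for xi, ci in zip(x, center)]
    dist = math.hypot(*diff)
    h = dist - radius
    grad = [d / dist for d in diff]  # gradient of h; a unit vector

    # CBF condition for x_dot = v:  grad . v >= -alpha * h
    margin = sum(g * v for g, v in zip(grad, v_des)) + alpha * h
    if margin >= 0.0:
        # The desired velocity already satisfies the condition.
        return list(v_des)

    # Otherwise remove just enough of the component along grad
    # (valid because ||grad|| = 1) to land on the constraint boundary.
    return [v - margin * g for v, g in zip(v_des, grad)]


# Heading straight at the obstacle: the approach speed is reduced.
print(safe_velocity((2.0, 0.0), (-3.0, 0.0), (0.0, 0.0), 1.0))
# Moving tangentially: the command passes through unchanged.
print(safe_velocity((2.0, 0.0), (0.0, 5.0), (0.0, 0.0), 1.0))
```

In the framework the abstract describes, a velocity like this would then be handed to a tracking controller on the robot; that tracking layer is outside the scope of this sketch.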
