Andreas Baumbach

Weight transport through spike timing for robust local gradients

Mar 04, 2025

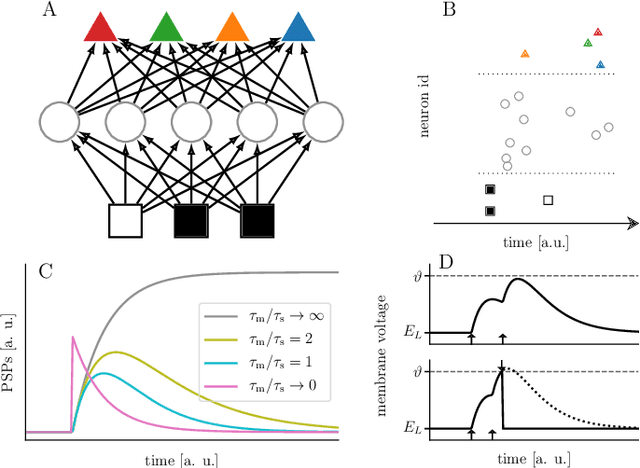

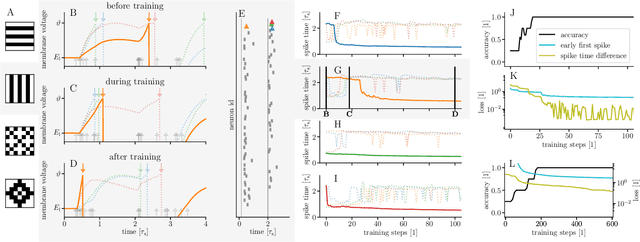

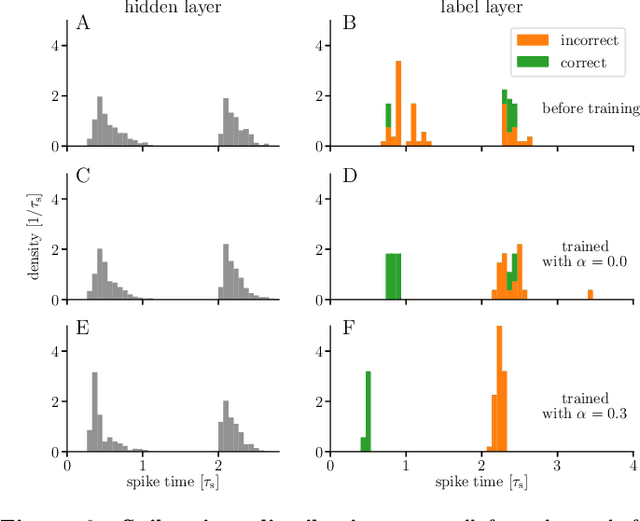

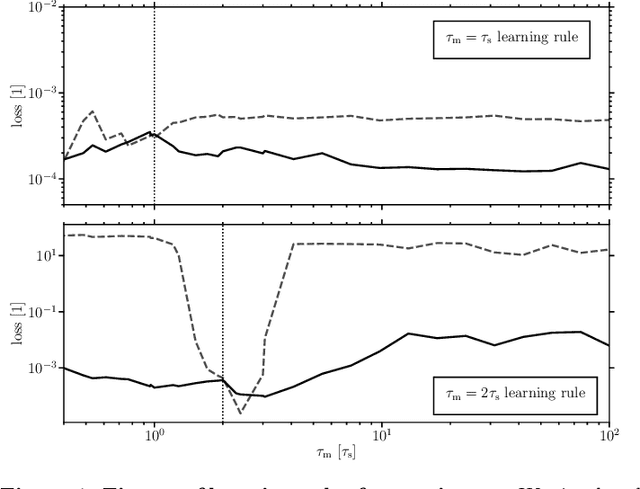

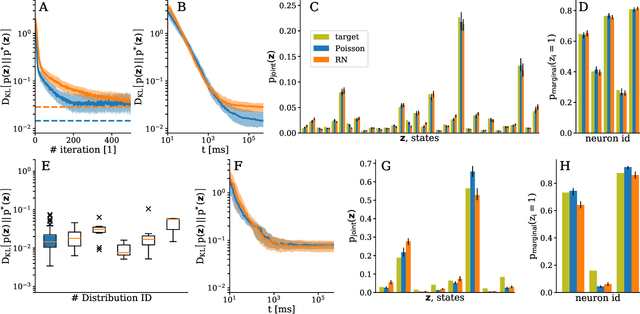

Abstract: In both machine learning and in computational neuroscience, plasticity in functional neural networks is frequently expressed as gradient descent on a cost. Often, this imposes symmetry constraints that are difficult to reconcile with local computation, as is required for biological networks or neuromorphic hardware. For example, wake-sleep learning in networks characterized by Boltzmann distributions builds on the assumption of symmetric connectivity. Similarly, the error backpropagation algorithm is notoriously plagued by the weight transport problem between the representation and the error stream. Existing solutions such as feedback alignment tend to circumvent the problem by deferring to the robustness of these algorithms to weight asymmetry. However, they are known to scale poorly with network size and depth. We introduce spike-based alignment learning (SAL), a complementary learning rule for spiking neural networks, which uses spike timing statistics to extract and correct the asymmetry between effective reciprocal connections. Apart from being spike-based and fully local, our proposed mechanism takes advantage of noise. Based on an interplay between Hebbian and anti-Hebbian plasticity, synapses can thereby recover the true local gradient. This also alleviates discrepancies that arise from neuron and synapse variability -- an omnipresent property of physical neuronal networks. We demonstrate the efficacy of our mechanism using different spiking network models. First, we show how SAL can significantly improve convergence to the target distribution in probabilistic spiking networks as compared to Hebbian plasticity alone. Second, in neuronal hierarchies based on cortical microcircuits, we show how our proposed mechanism effectively enables the alignment of feedback weights to the forward pathway, thus allowing the backpropagation of correct feedback errors.

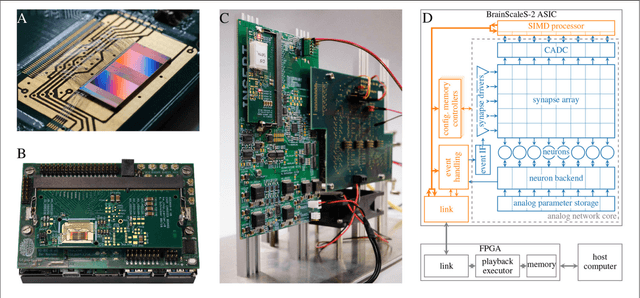

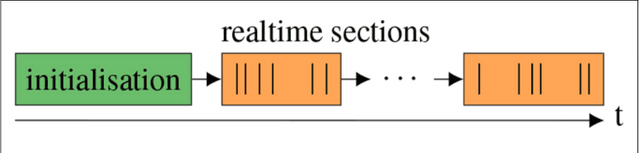

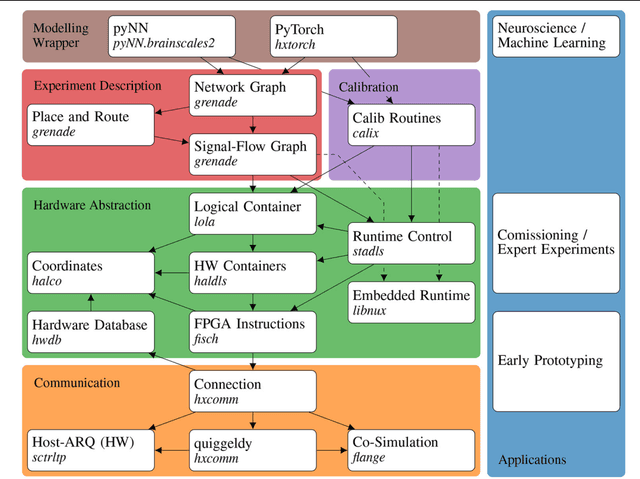

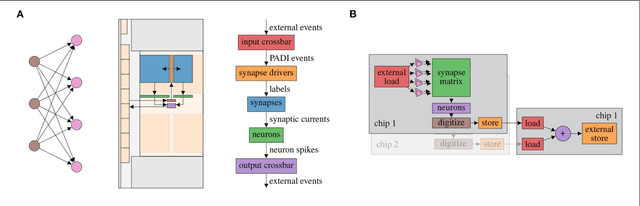

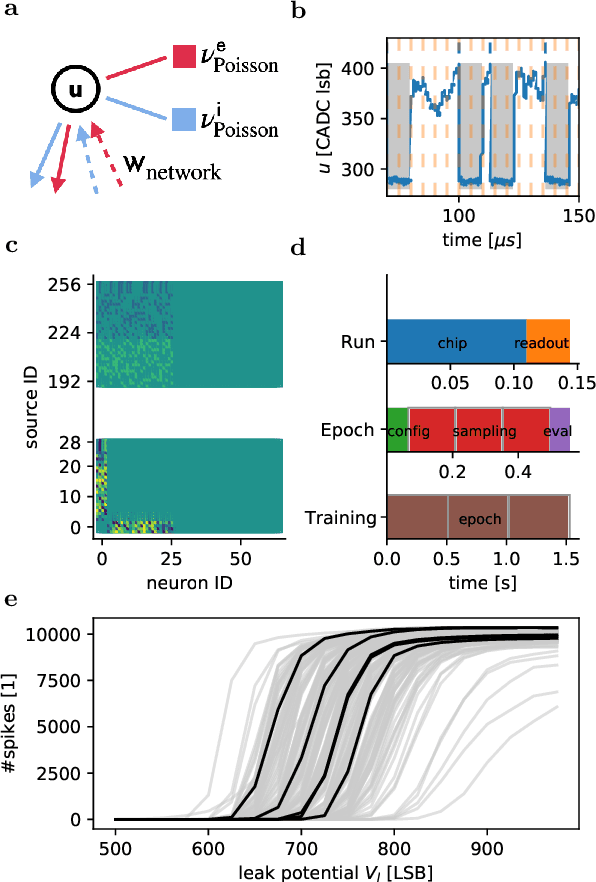

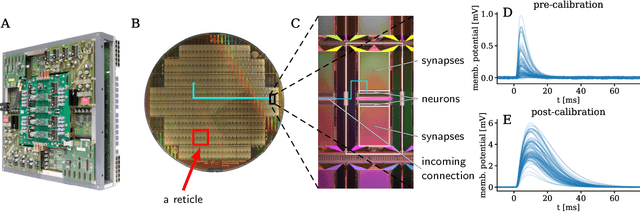

A Scalable Approach to Modeling on Accelerated Neuromorphic Hardware

Mar 21, 2022

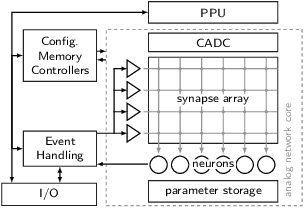

Abstract: Neuromorphic systems open up opportunities to enlarge the explorative space for computational research. However, it is often challenging to unite efficiency and usability. This work presents the software aspects of this endeavor for the BrainScaleS-2 system, a hybrid accelerated neuromorphic hardware architecture based on physical modeling. We introduce key aspects of the BrainScaleS-2 Operating System: experiment workflow, API layering, software design, and platform operation. We present use cases to discuss and derive requirements for the software and showcase the implementation. The focus lies on novel system and software features such as multi-compartmental neurons, fast re-configuration for hardware-in-the-loop training, applications for the embedded processors, the non-spiking operation mode, interactive platform access, and sustainable hardware/software co-development. Finally, we discuss further developments in terms of hardware scale-up, system usability and efficiency.

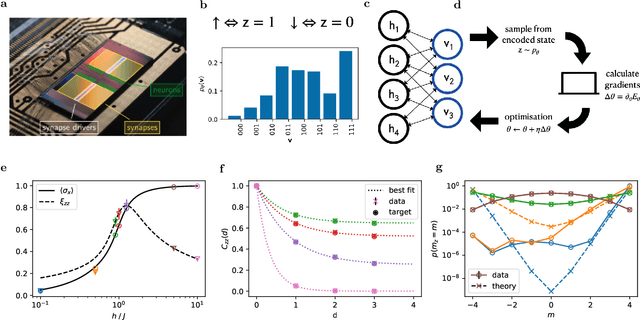

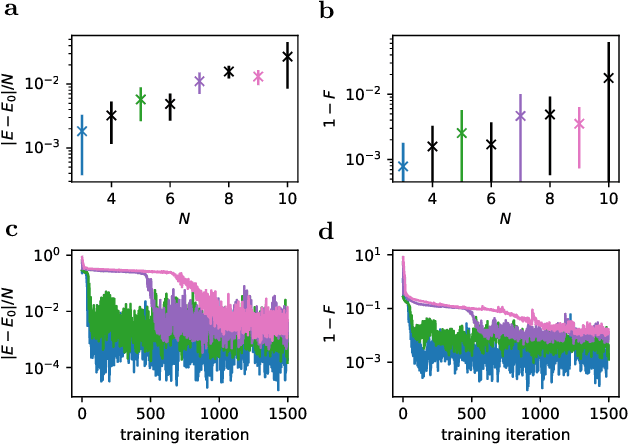

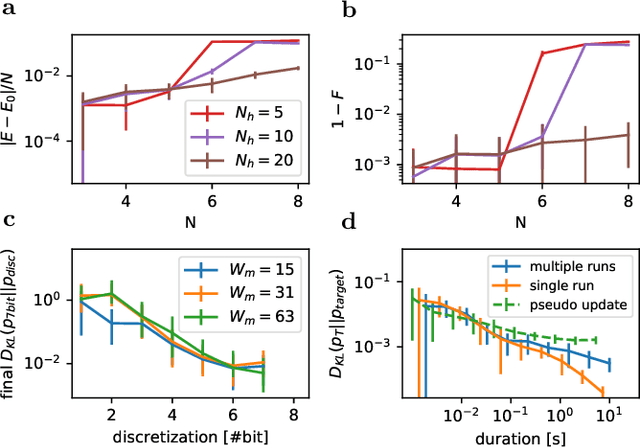

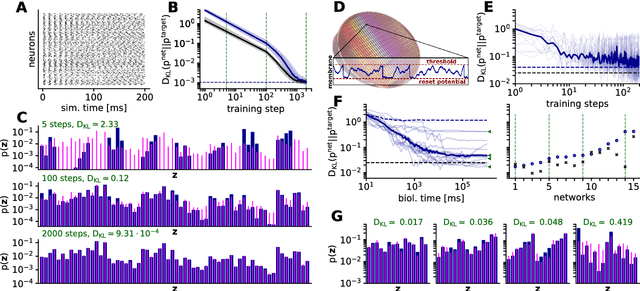

Variational learning of quantum ground states on spiking neuromorphic hardware

Oct 01, 2021

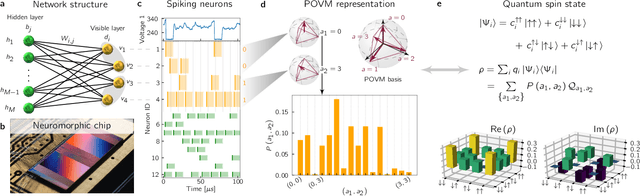

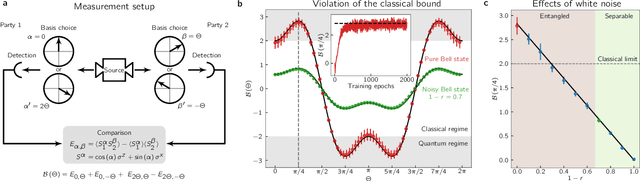

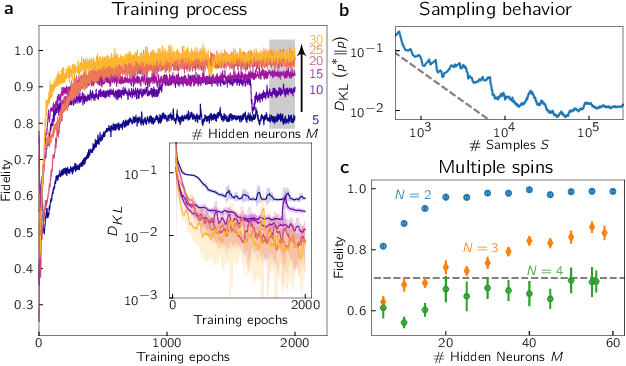

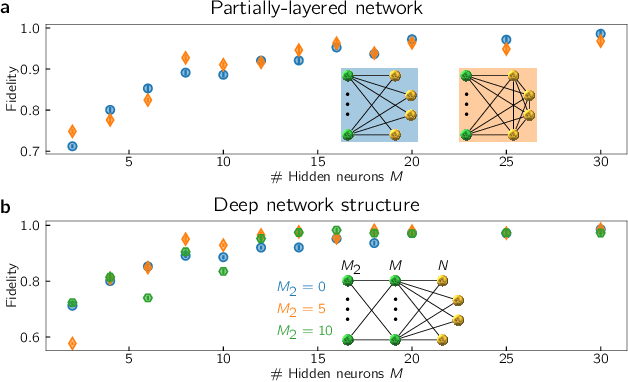

Abstract: We train a neuromorphic hardware chip to approximate the ground states of quantum spin models by variational energy minimization. Compared to variational artificial neural networks using Markov chain Monte Carlo for sample generation, this approach has the advantage that the neuromorphic device generates samples in a fast and inherently parallel fashion. We develop a training algorithm and apply it to the transverse field Ising model, showing good performance at moderate system sizes ($N\leq 10$). A systematic hyperparameter study shows that scalability to larger system sizes mainly depends on sample quality which is limited by parameter drifts on the analog neuromorphic chip. The learning performance shows a threshold behavior as a function of the number of variational parameters of the ansatz, with approximately $50$ hidden neurons being sufficient for representing critical ground states up to $N=10$. The 6+1-bit resolution of the network parameters does not limit the reachable approximation quality in the current setup. Our work provides an important step towards harnessing the capabilities of neuromorphic hardware for tackling the curse of dimensionality in quantum many-body problems.
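
For the small system sizes quoted in this abstract, a reference ground-state energy of the transverse field Ising model can be computed classically by brute force, which is the kind of baseline a variational result is typically compared against. The sketch below is not the authors' training algorithm. It assumes the convention $H = -J\sum_i \sigma^z_i\sigma^z_{i+1} - h\sum_i \sigma^x_i$ on an open chain, and the function names `apply_tfim` and `tfim_ground_energy` are hypothetical. It uses shifted power iteration in pure Python, so it is only practical for small $N$.

```python
import math


def apply_tfim(vec, n, coupling, field):
    """Return H @ vec for H = -J sum_i Z_i Z_{i+1} - h sum_i X_i (open chain).

    Basis states are integers whose bits encode the spins (assumed convention).
    """
    out = [0.0] * len(vec)
    for state, amp in enumerate(vec):
        if amp == 0.0:
            continue
        # Diagonal part: +1 for each aligned neighbour pair, -1 otherwise.
        zz = 0
        for i in range(n - 1):
            aligned = ((state >> i) & 1) == ((state >> (i + 1)) & 1)
            zz += 1 if aligned else -1
        out[state] += -coupling * zz * amp
        # Off-diagonal part: X_i flips spin i.
        for i in range(n):
            out[state ^ (1 << i)] += -field * amp
    return out


def tfim_ground_energy(n, coupling=1.0, field=1.0, iters=500):
    """Estimate the ground-state energy by power iteration on (shift - H)."""
    dim = 1 << n
    # Upper bound on the spectral radius of H, so (shift - H) is non-negative
    # and its dominant eigenvector is the ground state of H.
    shift = abs(coupling) * (n - 1) + abs(field) * n
    vec = [1.0 / math.sqrt(dim)] * dim  # uniform start vector
    for _ in range(iters):
        hv = apply_tfim(vec, n, coupling, field)
        vec = [shift * v - w for v, w in zip(vec, hv)]
        norm = math.sqrt(sum(x * x for x in vec))
        vec = [x / norm for x in vec]
    hv = apply_tfim(vec, n, coupling, field)
    # Rayleigh quotient <v|H|v> of the normalised vector.
    return sum(v * w for v, w in zip(vec, hv))
```

For two spins with $J = h = 1$ the exact value is $-\sqrt{5}$, which gives a quick sanity check. The number of iterations needed grows as the spectral gap closes, so near the critical point and for larger $N$ a sparse eigensolver would be the more sensible choice.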

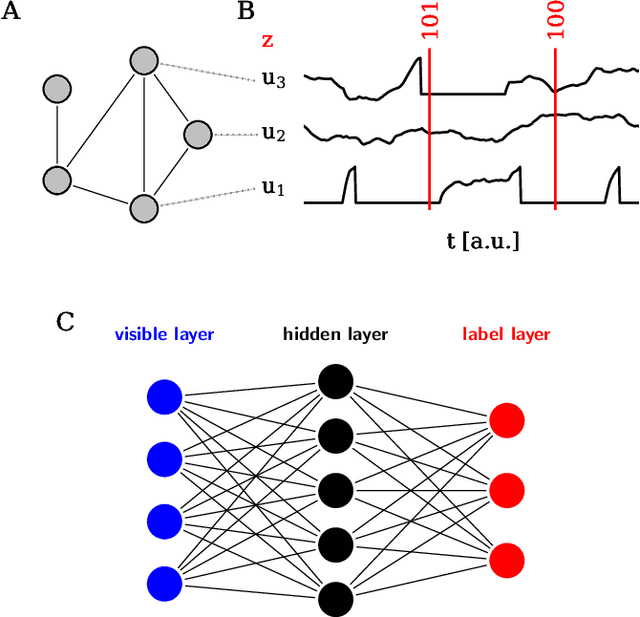

Spiking neuromorphic chip learns entangled quantum states

Aug 05, 2020

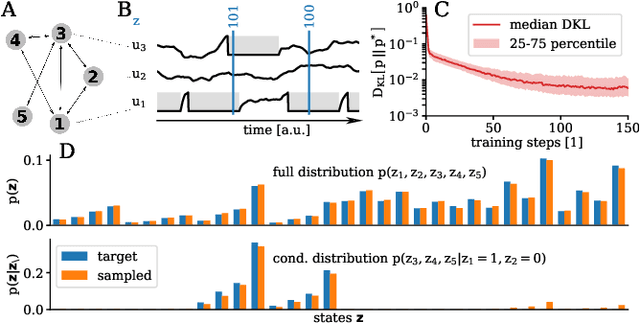

Abstract: Neuromorphic systems are designed to emulate certain structural and dynamical properties of biological neuronal networks, with the aim of inheriting the brain's functional performance and energy efficiency in artificial-intelligence applications [1,2]. Among the platforms existing today, the spike-based BrainScaleS system stands out by realizing fast analog dynamics which can boost computationally expensive tasks [3]. Here we use the latest BrainScaleS generation [4] for the algorithm-free simulation of quantum systems, thereby opening up an entirely new application space for these devices. This requires an appropriate spike-based representation of quantum states and an associated training method for imprinting a desired target state onto the network. We employ a representation of quantum states using probability distributions [5,6], enabling the use of a Bayesian sampling framework for spiking neurons [7]. For training, we developed a Hebbian learning scheme that explicitly exploits the inherent speed of the substrate, which enables us to realize a variety of network topologies. We encoded maximally entangled states of up to four qubits and observed fidelities that imply genuine $N$-partite entanglement. In particular, the encoding of entangled pure and mixed two-qubit states reaches a quality that allows the observation of Bell correlations, thus demonstrating that non-classical features of quantum systems can be captured by spiking neural dynamics. Our work establishes an intriguing connection between quantum systems and classical spiking networks, and demonstrates the feasibility of simulating quantum systems with neuromorphic hardware.

Versatile emulation of spiking neural networks on an accelerated neuromorphic substrate

Dec 30, 2019

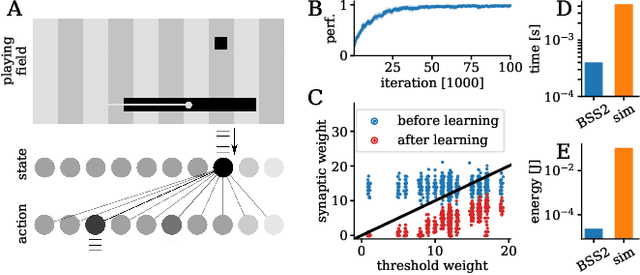

Abstract: We present first experimental results on the novel BrainScaleS-2 neuromorphic architecture based on an analog neuro-synaptic core and augmented by embedded microprocessors for complex plasticity and experiment control. The high acceleration factor of 1000 compared to biological dynamics enables the execution of computationally expensive tasks, by allowing the fast emulation of long-duration experiments or rapid iteration over many consecutive trials. The flexibility of our architecture is demonstrated in a suite of five distinct experiments, which emphasize different aspects of the BrainScaleS-2 system.

Fast and deep neuromorphic learning with time-to-first-spike coding

Dec 24, 2019

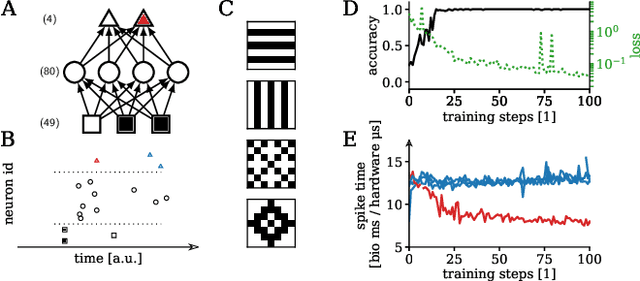

Abstract: For a biological agent operating under environmental pressure, energy consumption and reaction times are of critical importance. Similarly, engineered systems also strive for short time-to-solution and low energy-to-solution characteristics. At the level of neuronal implementation, this implies achieving the desired results with as few and as early spikes as possible. In the time-to-first-spike coding framework, both of these goals are inherently emerging features of learning. Here, we describe a rigorous derivation of error-backpropagation-based learning for hierarchical networks of leaky integrate-and-fire neurons. We explicitly address two issues that are relevant for both biological plausibility and applicability to neuromorphic substrates by incorporating dynamics with finite time constants and by optimizing the backward pass with respect to substrate variability. This narrows the gap between previous models of first-spike-time learning and biological neuronal dynamics, thereby also enabling fast and energy-efficient inference on analog neuromorphic devices that inherit these dynamics from their biological archetypes, which we demonstrate on two generations of the BrainScaleS analog neuromorphic architecture.

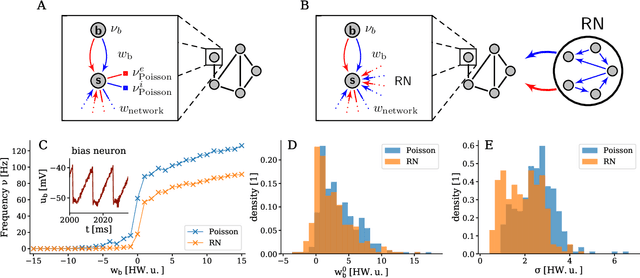

Stochasticity from function - why the Bayesian brain may need no noise

Sep 21, 2018

Abstract: An increasing body of evidence suggests that the trial-to-trial variability of spiking activity in the brain is not mere noise, but rather the reflection of a sampling-based encoding scheme for probabilistic computing. Since the precise statistical properties of neural activity are important in this context, many models assume an ad-hoc source of well-behaved, explicit noise, either on the input or on the output side of single neuron dynamics, most often assuming an independent Poisson process in either case. However, these assumptions are somewhat problematic: neighboring neurons tend to share receptive fields, rendering both their input and their output correlated; at the same time, neurons are known to behave largely deterministically, as a function of their membrane potential and conductance. We suggest that spiking neural networks may, in fact, have no need for noise to perform sampling-based Bayesian inference. We study analytically the effect of auto- and cross-correlations in functionally Bayesian spiking networks and demonstrate how their effect translates to synaptic interaction strengths, rendering them controllable through synaptic plasticity. This allows even small ensembles of interconnected deterministic spiking networks to simultaneously and co-dependently shape their output activity through learning, enabling them to perform complex Bayesian computation without any need for noise, which we demonstrate in silico, both in classical simulation and in neuromorphic emulation. These results close a gap between the abstract models and the biology of functionally Bayesian spiking networks, effectively reducing the architectural constraints imposed on physical neural substrates required to perform probabilistic computing, be they biological or artificial.

Generative models on accelerated neuromorphic hardware

Jul 11, 2018

Abstract: The traditional von Neumann computer architecture faces serious obstacles, both in terms of miniaturization and in terms of heat production, with increasing performance. Artificial neural (neuromorphic) substrates represent an alternative approach to tackle this challenge. A special subset of these systems follows the principle of "physical modeling", as they directly use the physical properties of the underlying substrate to realize computation with analog components. While these systems are potentially faster and/or more energy efficient than conventional computers, they require robust models that can cope with their inherent limitations in terms of controllability and range of parameters. A natural source of inspiration for robust models is neuroscience, as the brain faces similar challenges. It has been recently suggested that sampling with the spiking dynamics of neurons is potentially suitable both as a generative and a discriminative model for artificial neural substrates. In this work we present the implementation of sampling with leaky integrate-and-fire neurons on the BrainScaleS physical model system. We prove the sampling property of the network and demonstrate its applicability to high-dimensional datasets. The required stochasticity is provided by a spiking random network on the same substrate. This allows the system to run in a self-contained fashion without external stochastic input from the host environment. The implementation provides a basis as a building block in large-scale biologically relevant emulations, as a fast approximate sampler or as a framework to realize on-chip learning on (future generations of) accelerated spiking neuromorphic hardware. Our work contributes to the development of robust computation on physical model systems.
